# Related Articles on Inference

The HTX News Center offers the latest articles and in-depth analysis on "Inference", covering market trends, project news, technology developments, and regulatory policy in the crypto industry.

## Industry News

- Podcast Notes: Hyperliquid Has Become the Top Interest Point for Traditional Hedge Funds

- Breaking: OpenAI Loses Key Figure, Father of Sora Departs, Turmoil Continues on the Eve of IPO

- Ethereum Showcases Dominance, Claiming No.1 Spot In Global Validator Network Spread

- Back at the AI Table, Zuckerberg's First Move Is Layoffs?

## In-Depth Research

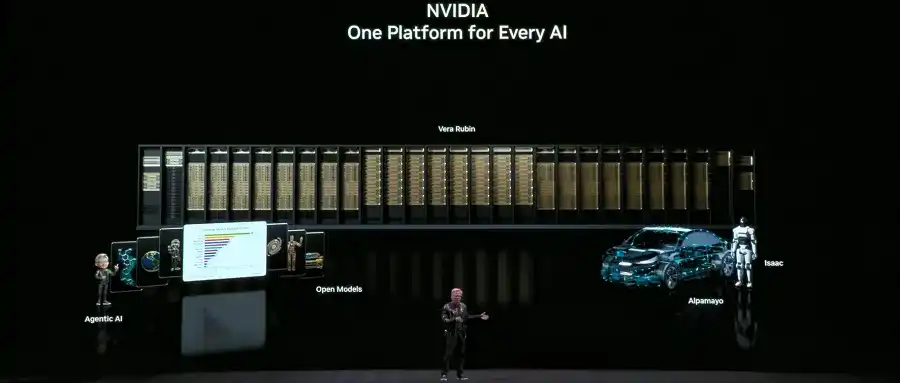

- a16z: The Next Frontier of AI, The Triple Flywheel of Robotics, Autonomous Science, and Brain-Computer Interfaces

- The Real Battlefield of AI Lies in the 'Dark Forest'

- DWF In-Depth Report: AI Outperforms Humans in DeFi Yield Optimization, but Lags 5x Behind in Complex Trading

- Fortune Investigation Exposes: High-Profile Crypto Trader's Fiancée Dies Mysteriously in Africa

- Expanding Glassnode: Macro and Traditional Finance Data