If you think it's just a small tool to cure AI's "amnesia," you're being naive. An underlying battle involving API arbitrage, third-party bans, tech giant outages, and even cryptocurrency monetization has completely erupted.

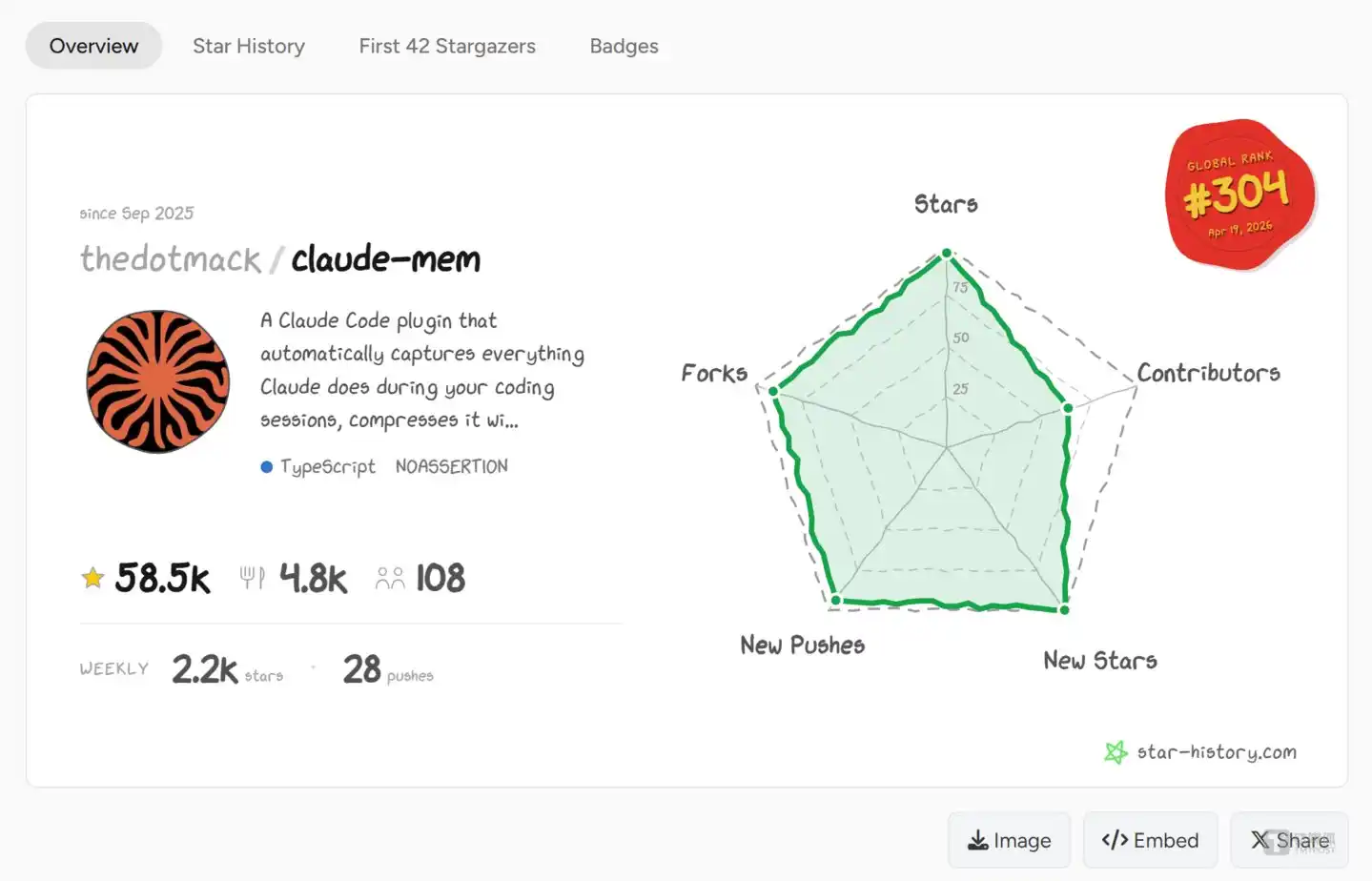

As early as September 1, 2025, a terminal installation command named npx claude-mem install quietly appeared on GitHub.

This single line of code nearly shattered the business plans of major AI model giants.

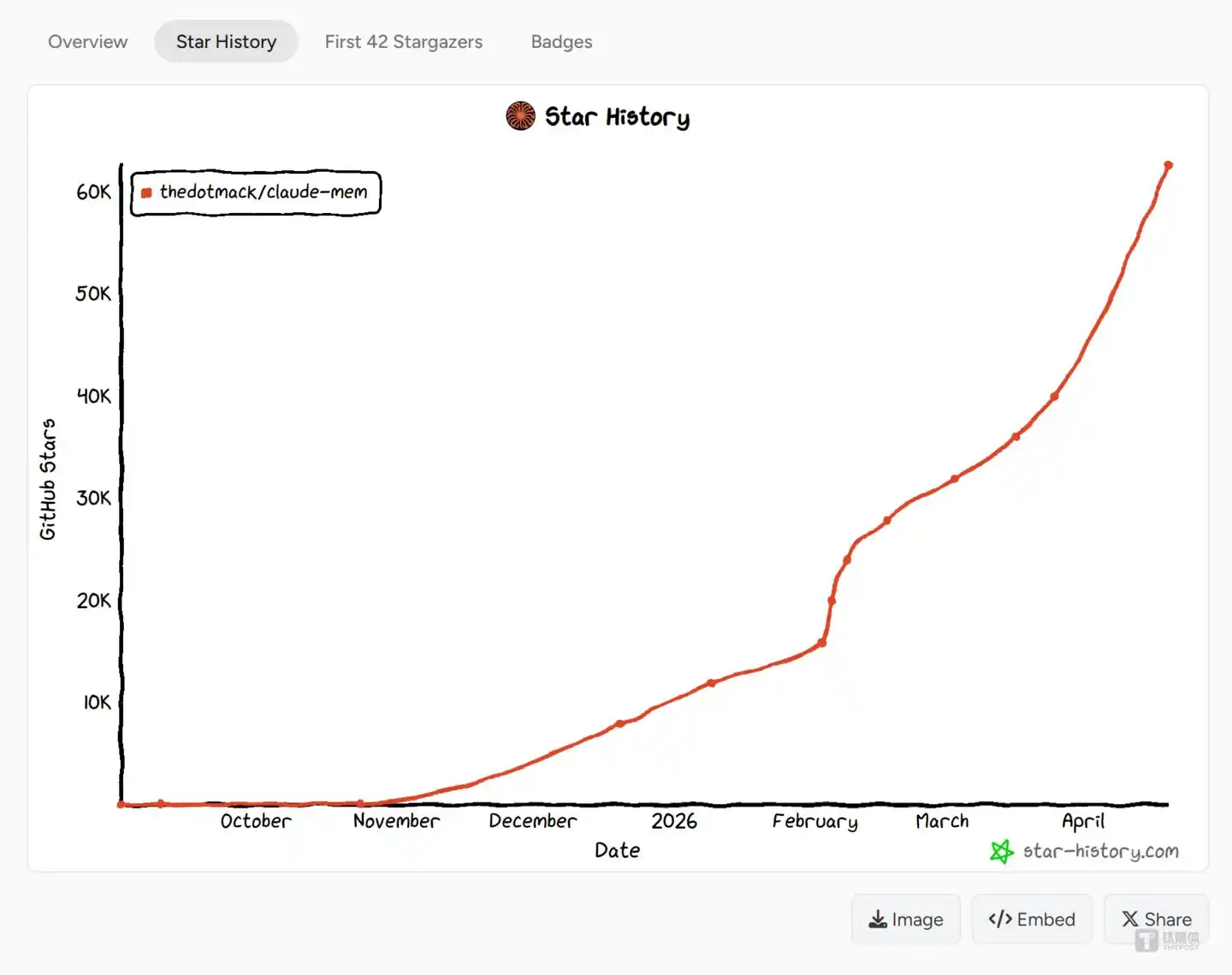

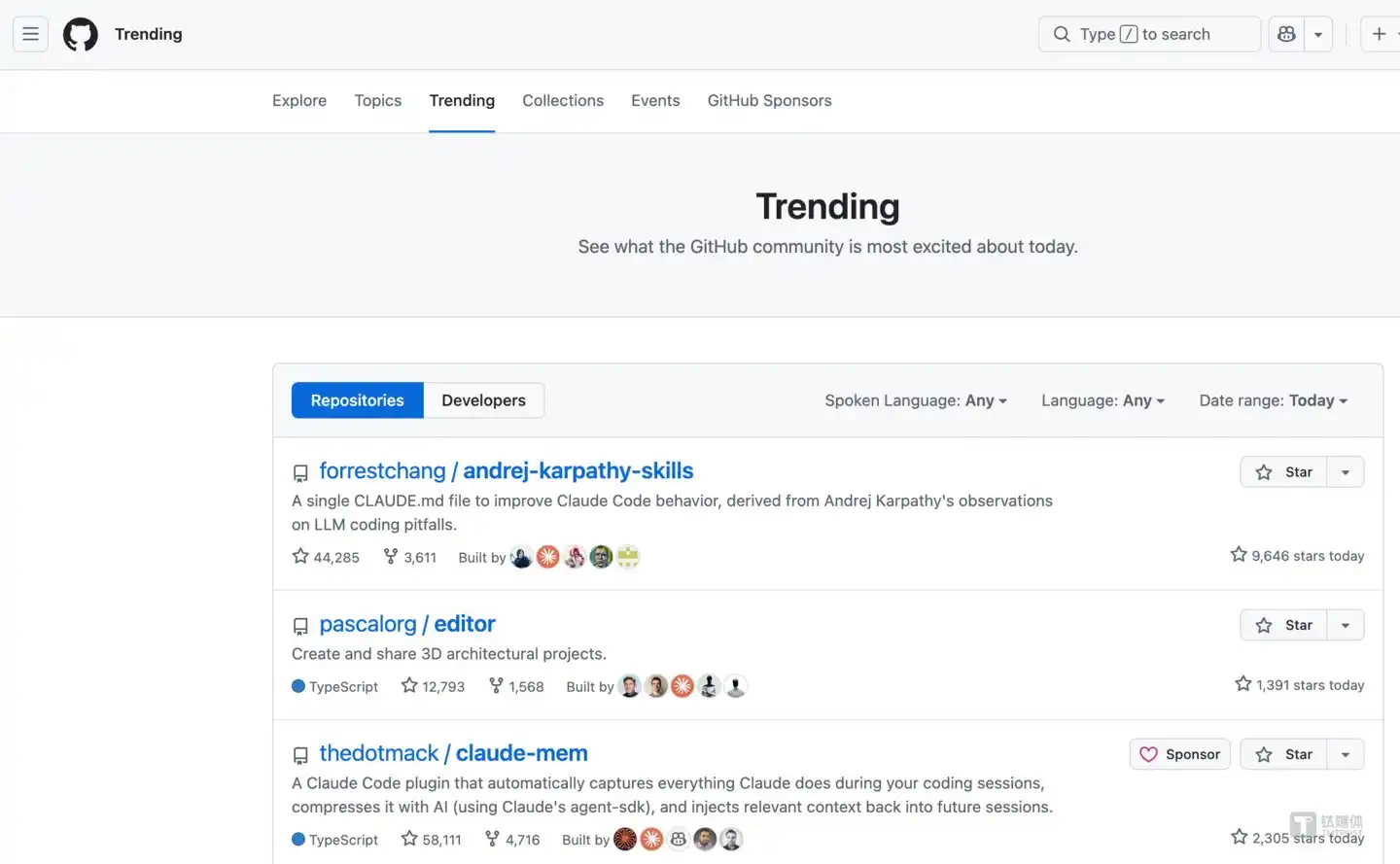

After simmering for months, it experienced a massive traffic explosion in April 2026. How explosive were the numbers? This open-source plugin amassed 62.6k stars, setting astonishing records along the way: 9,012 stars in a single week and 2,588 stars in a single day.

Is this merely a small tool to cure AI's "amnesia"?

Too naive.

In reality, it directly attaches a local memory bank to the physical terminal, brutally severing the revenue pipeline that big companies rely on from "repeated computation."

What followed was an all-out underlying battle involving API arbitrage, third-party bans, tech giant outages, and even cryptocurrency monetization.

The Costly "Context Tax" and the Amnesia Trap

To understand this geek rebellion, one must first puncture the industry's most hidden profit engine—the "context tax."

Current large AI models have a fatal flaw: they are stateless. Simply put, they "forget as soon as they turn around."

The moment you close the chat window, its memory is instantly wiped clean.

This creates a major problem: To make the AI understand what you're doing, every time you start a new session, you have to resend the entire history of conversation and thousands of lines of code as context to the cloud.

An analogy: You hire an expensive, photographic-memory, super-intelligent strategic consultant, but he "blacks out" every morning. You have to make him reread ten years of company financial reports every day just to ask him "what to do today."

The worst part? This consultant charges by the "total number of words read each day."

The massive cost generated by this repeated reading of historical data is the big companies' "context tax."
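To put rough numbers on the analogy, here is a minimal sketch of how re-sent tokens pile up when every session must resend the full history. The function names and the token counts are hypothetical illustrations, not measurements from Claude Code or any vendor.

```python
def stateless_tokens(history_tokens: int, new_tokens_per_session: int, sessions: int) -> int:
    """Total input tokens when every session resends the whole accumulated history."""
    total = 0
    history = history_tokens
    for _ in range(sessions):
        # Each session pays for the full history plus the new material.
        total += history + new_tokens_per_session
        # The new material becomes part of the history for the next session.
        history += new_tokens_per_session
    return total


def fresh_tokens(new_tokens_per_session: int, sessions: int) -> int:
    """Tokens that actually carry new information."""
    return new_tokens_per_session * sessions


if __name__ == "__main__":
    # Hypothetical workload: 20,000 tokens of existing context, 1,000 new tokens per session.
    total = stateless_tokens(history_tokens=20_000, new_tokens_per_session=1_000, sessions=10)
    fresh = fresh_tokens(new_tokens_per_session=1_000, sessions=10)
    print(f"tokens billed: {total}, genuinely new: {fresh}, re-read share: {1 - fresh / total:.1%}")
```

Under these made-up inputs, almost everything billed is re-reading. The real share depends entirely on the project and on how much history each session carries.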

The data speaks for itself: Running projects in the official Claude Code terminal, over 48.3% of token transmission is purely wasted effort.

Every time you try to jog the AI's memory, you're frantically paying tax on wasted computation that just spins its wheels.

The Intercepting "Digital Dam": Brutally Cutting 95% of Wasted Token Consumption

Where there's exploitation, there's resistance.

Developer Alex Newman (@thedotmack) responded by releasing Claude-mem.

This thing is like a "digital dam" built illegally by the open-source community on the big tech's information highway.

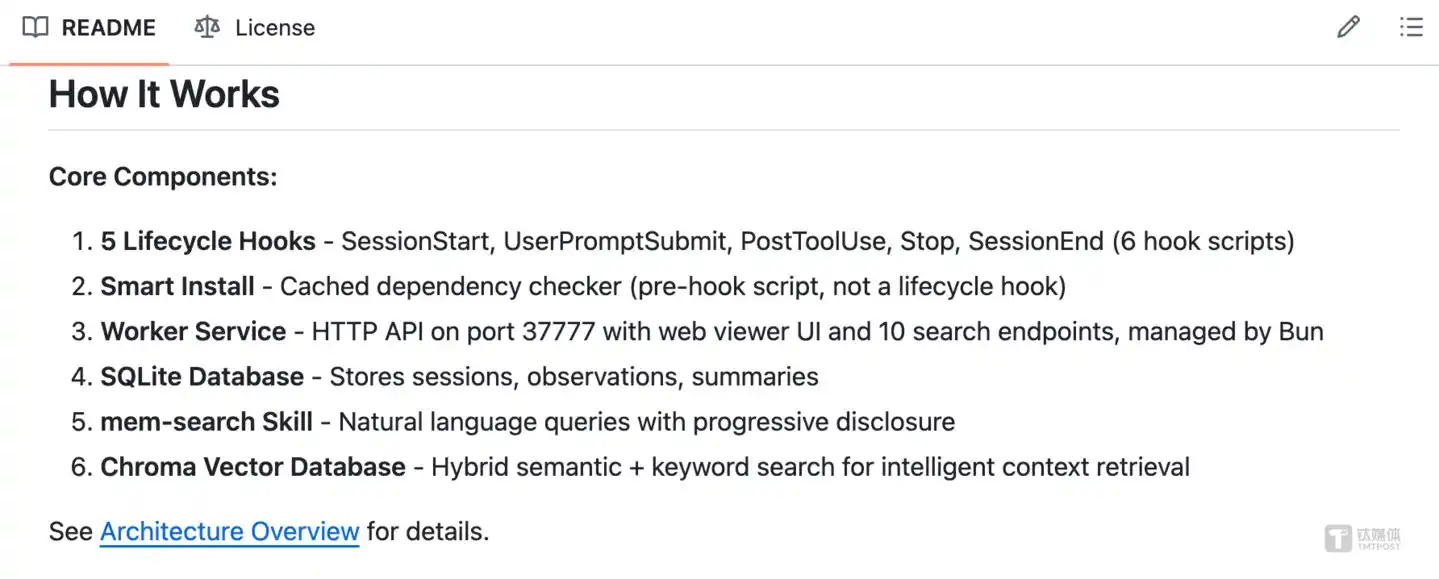

It doesn't write code; it only does two things: "listens" and compresses.

As you read files and type code locally, it quietly watches in the background. Then it automatically calls the large model to squeeze the filler out of verbose logs spanning thousands of tokens, compressing them into extremely short core memory summaries and storing them in your local SQLite database.
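The "compress, then store locally" idea can be sketched in a few lines of Python using the standard `sqlite3` module. This is an illustration only: the `memories` table, the `remember` helper, and the truncating `summarize` stand-in are all assumptions made for this example, not Claude-mem's actual schema or code. The article says the real tool calls a large model for the compression step.

```python
import sqlite3
import time


def summarize(raw_log: str, max_chars: int = 200) -> str:
    """Placeholder compressor: keeps only the first line, truncated.

    Stand-in for the model call described in the article.
    """
    lines = raw_log.strip().splitlines()
    first_line = lines[0] if lines else ""
    return first_line[:max_chars]


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a local SQLite store with a hypothetical schema."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS memories (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               created_at REAL NOT NULL,
               source TEXT NOT NULL,
               summary TEXT NOT NULL
           )"""
    )
    return conn


def remember(conn: sqlite3.Connection, source: str, raw_log: str) -> int:
    """Compress a verbose log and persist only the short summary."""
    summary = summarize(raw_log)
    cur = conn.execute(
        "INSERT INTO memories (created_at, source, summary) VALUES (?, ?, ?)",
        (time.time(), source, summary),
    )
    conn.commit()
    return cur.lastrowid


if __name__ == "__main__":
    conn = open_store()
    noisy_log = "Fixed null check in parser\n" + "debug noise\n" * 1000
    row_id = remember(conn, "build.log", noisy_log)
    print(conn.execute("SELECT summary FROM memories WHERE id = ?", (row_id,)).fetchone())
```

The point of the design is that only the short summary is ever persisted; the thousands of lines of raw log never need to be sent again.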

Next time you start a new conversation? No need to brute-force transmit the full codebase. Retrieve on demand, feed precisely.
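"Retrieve on demand" could look something like the sketch below: pull only the stored notes that match the current question and prepend them to the prompt. The table layout, the `recall` and `build_prompt` helpers, and the plain keyword matching are assumptions for illustration; the source does not describe how Claude-mem actually ranks or selects memories.

```python
import sqlite3


def make_demo_store() -> sqlite3.Connection:
    """Build a small in-memory store with made-up summaries."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE memories (id INTEGER PRIMARY KEY, summary TEXT NOT NULL)")
    conn.executemany(
        "INSERT INTO memories (summary) VALUES (?)",
        [
            ("Refactored auth middleware to use JWT refresh tokens",),
            ("Parser bug: null check missing in tokenizer loop",),
            ("Decided to keep SQLite for local storage",),
        ],
    )
    return conn


def recall(conn: sqlite3.Connection, keywords: list[str], limit: int = 5) -> list[str]:
    """Return the newest summaries containing any keyword (SQLite LIKE ignores ASCII case)."""
    if not keywords:
        return []
    where = " OR ".join("summary LIKE ?" for _ in keywords)
    params = [f"%{kw}%" for kw in keywords] + [limit]
    rows = conn.execute(
        f"SELECT summary FROM memories WHERE {where} ORDER BY id DESC LIMIT ?",
        params,
    ).fetchall()
    return [row[0] for row in rows]


def build_prompt(conn: sqlite3.Connection, question: str, keywords: list[str]) -> str:
    """Prepend only the matching notes instead of the whole project history."""
    notes = recall(conn, keywords)
    context = "\n".join(f"- {note}" for note in notes)
    return f"Relevant notes:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    store = make_demo_store()
    print(build_prompt(store, "Why does the parser crash?", ["parser"]))
```

A production tool would likely use something stronger than substring matching, such as full-text or embedding search, but the shape is the same: a small, targeted context instead of the full history.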

The effect is remarkable. Absolute operational data shows that with this method, token consumption for a single business session is slashed by up to 95%.

What does this mean? It directly guards the user's wallet zipper! It physically curbs the billing model in which big companies bleed users dry by "repeatedly reading context." The big companies' computational cash-printing machine had its gears jammed.

API Arbitrage, OpenClaw Alliance, and the Big Tech Ban Hammer

What truly crossed the line for the giants was the underlying integration of Claude-mem with another open-source tool, which completely broke through the vendors' billing fences.

According to Anthropic's pricing, high-tier users pay about $200 per month for "unlimited" computational buffet in the official terminal.

But if enterprises run similarly high-frequency automated tasks through the official API channel, the monthly bill easily surpasses $1000.
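The gap between a flat subscription and metered billing is simple arithmetic, sketched below. The $200 subscription figure comes from the article; the per-million-token price and the daily token volume are placeholder assumptions chosen only to illustrate the calculation, not Anthropic's actual rates.

```python
def api_monthly_cost(tokens_per_day: int, usd_per_million_tokens: float, days: int = 30) -> float:
    """Metered cost: pay for every token, every day."""
    return tokens_per_day * days / 1_000_000 * usd_per_million_tokens


def break_even_tokens_per_day(subscription_usd: float, usd_per_million_tokens: float, days: int = 30) -> float:
    """Daily token volume at which metered billing costs the same as the flat subscription."""
    return subscription_usd / usd_per_million_tokens * 1_000_000 / days


if __name__ == "__main__":
    price = 3.0  # placeholder USD per million tokens, NOT an official rate
    heavy_agent = api_monthly_cost(tokens_per_day=12_000_000, usd_per_million_tokens=price)
    threshold = break_even_tokens_per_day(subscription_usd=200.0, usd_per_million_tokens=price)
    print(f"metered bill: ${heavy_agent:,.0f}/month")
    print(f"break-even volume: {threshold:,.0f} tokens/day")
```

Any automated workload running well above the break-even volume is cheaper on the flat plan, which is the arbitrage the rest of this section describes.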

This huge computational cost difference gave rise to a third-party open-source AI gateway—OpenClaw.

OpenClaw is essentially a backend scheduler detached from the official interface. It can connect to chat software like Telegram and Slack, driving the AI to perform continuous retries and tool calls around the clock. However, this kind of high-frequency looping was originally highly prone to context collapse and massive computational overhead.

Thus, Claude-mem released a dedicated OpenClaw bridge plugin. The technical link between the two formed an extremely hardcore computational threat: OpenClaw provides an automated Agent execution environment that loops indefinitely and bypasses the official interface; Claude-mem, by listening to the underlying data stream and compressing memory in real time, erases the originally high cost of repeatedly reading tokens.

Countless developers used this golden combination under the legal cloak of personal subscription accounts (OAuth). They used the low $200 monthly subscription to drive high-frequency Agent clusters locally, recklessly draining computational resources that would have cost thousands of dollars under the enterprise API's per-token billing.

With their servers' spare capacity being stripped bare, the giants finally couldn't sit still and brought down the ban hammer.

In April 2026, Anthropic forcibly severed third-party OAuth authorization access channels.

The official stance was hard with no room for negotiation: Want to do automation? Go back to the enterprise channel and pay per token, word by word.

This expensive toll, forced on developers by the change of route, was angrily dubbed the "Claw Tax" by the tech community.

To make an example, Anthropic even briefly banned the personal main account of OpenClaw founder Peter Steinberger on a Friday.

Most dramatically, right at the peak of this ban (April 15th), Anthropic's own backyard caught fire: it suffered a rare system-level major outage affecting both its web front end and its API.

The giant would sooner pull the plug than let its billing foundation be breached.

Protocol Trap and the Magic of Tokenization

Amid the heavy siege by big companies, did Claude-mem, at the center of the storm, die?

No. Instead, it made an utterly surreal leap into capital.

Because the project is built on the extremely strict AGPL-3.0 open-source license, this "infectious" contract directly blocked the founder's path to making money by selling closed-source commercial software.

Traditional SaaS road blocked? The founder directly bypassed all VCs and threw the technical consensus into the cryptocurrency market.

They issued a crypto token on the highly liquid Solana mainnet—$CMEM—with a maximum supply of 1 billion coins.

Officially, the token is meant to establish a decentralized AI memory trading market.

But frankly, in the current climate where the geek community is full of anger towards big tech's computational hegemony, this is a precise "consensus monetizer."

The massive star traffic and the developers' resentment towards the giants instantly turned into a real monetary liquidity premium on the exchange.

Initially, the geeks just wanted to resist capital exploitation with free open-source software; in the end, they closed their own profit loop in an even more surreal way, inside the casino known as cryptocurrency tokens.

The Bloody Endgame of Large Models' Second Half

Looking beyond this soaring growth curve, one can already smell the brutal business rules of the second half:

First: The computational dividend is an illusion; saving money is the moat.

Don't put blind faith in million-token context windows. The smarter the AI, the deeper the computational budget it consumes. Those who truly make money in the future might not be the developers writing fancy applications, but the underlying "fixers" who can use "external dams" to help companies slash massive amounts of wasted token consumption.

Second: Memory sovereignty is a non-negotiable bottom line.

Entrusting the technical decisions and iteration history of core projects entirely to cloud API processing? That's like handing the company's throat to someone else. Whoever can solve localized, high-fidelity memory holds the key to the next generation of AI terminals.

Third: Beware of the "open-source dependency trap."

Never build your castle on a foundation over which others have absolute control. Business models that rely heavily on exploiting loopholes in the giants' APIs can be wiped out at any moment by a change in the terms of service. When the dominant platform decides to close the net, you won't even find an address to send your appeal to.

The underlying computational war of large language models has just begun. Who owns the future computing platform will be decided by these deep-web ghosts hidden in the depths of the code, fighting desperately for pricing power and data sovereignty.

(This article was first published on Titanium Media App, author | Silicon Valley Technews, editor | Linshen)

Disclaimer: This article is based on the integration and deduction of public reports and open-source community data. The cryptocurrency involved ($CMEM) carries extremely high volatility and the risk of going to zero, and nothing here constitutes investment advice.