There is only one way to use a true information advantage: place your bet before others price it.

Over the past two years, everyone has been anxious, trying to find the answer to the same question: What will be the next AI sector to rise?

Storage, optical modules, compute stocks, energy stocks... the narrative changes every few months. Each time, some miss the boat, and each time, some say "next time for sure."

Few ask another question: What are the people who understand AI best betting on?

The combined value of the companies built by those who left OpenAI is now approaching $1 trillion, and their ventures and investments sit at the start of AI's next era.

Dario Amodei founded Anthropic, with a potential valuation of $900 billion. Ilya Sutskever's SSI has no product and a valuation of $32 billion. Aravind Srinivas built Perplexity, valued at $21.2 billion. Mira Murati's Thinking Machines Lab is valued at $12 billion.

Therefore, OpenAI's most important output in recent years might not be GPT-4, but these departing employees it has exported to society.

Among them, the youngest person fired by OpenAI, Leopold Aschenbrenner, has become one of the most frequently cited names in capital markets over the past two years.

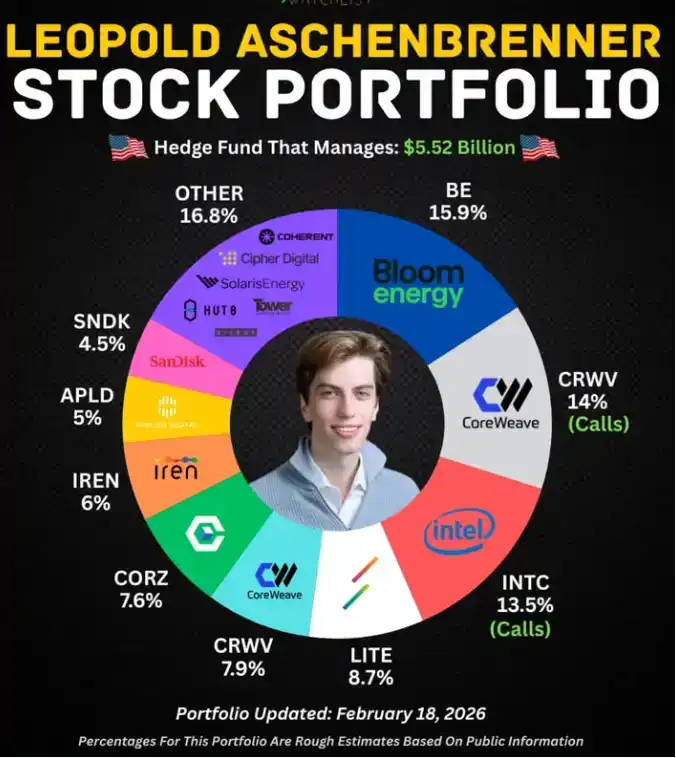

His legendary record has been chewed over repeatedly by the media: fired by OpenAI at age 23, he wrote a 165-page report titled "Situational Awareness," grew a hedge fund from $225 million to $5.5 billion within a year, and bet heavily on nuclear power and fuel cells, getting it all right.

The story is too perfect, the contrast too sharp, the outcome too successful. Today, in any discussion of investment logic in the AI era, he is an almost unavoidable figure.

But Leopold is merely the first of this group to be seen.

Those who left OpenAI have broadly taken two paths.

One is the path of Ilya, Mira, Aravind: starting companies, raising massive funds, rushing toward the next disruptive product—exactly like every other Silicon Valley genius exodus.

The other is much quieter: a group chose to place bets, leaving execution to others, specializing in judgment.

Leopold took the extreme form of this second path.

He went to public markets, used an operator's perspective from the AI industry, found assets in traditional energy stocks that were mispriced, and bought heavily. He doesn't understand energy, but he knows how much electricity AI will burn. That's enough. This kind of insight cannot be replicated by reading reports or attending industry conferences; it can only be accumulated by being in that position.

Beyond this path, another group is applying the same logic in a different form: smaller funds that complete in hours the due diligence that takes others months, and whose list of rejections is more valuable than their list of investments. They constitute the most easily overlooked, yet most worth examining, layer of this great exodus.

Most people leave a company taking their resume. Those leaving OpenAI take with them a set of answers others don't yet know they need.

I. There Is No Second Leopold

Leopold invested heavily in the nuclear power company Vistra and the fuel cell company Bloom Energy.

After both bets succeeded, he gradually adjusted his portfolio in late 2025, selling Vistra and concentrating further on Bloom Energy and data center infrastructure.

Traditional energy analysts looking at these two stocks would pull up grid expansion plans, compare carbon tax policies, build demand growth models. Leopold's approach is completely different.

At OpenAI, he saw the scale of the server rooms, saw the electricity bill for training a flagship model, and heard engineers discuss why the next generation of data centers must be located next to nuclear power plants. These details are not in any financial report or analyst report, but they add up to a conclusion about energy demand more grounded than any model.

In investing, this playbook is called "cross-industry cognitive arbitrage": translating inside knowledge of one industry into bets on undervalued assets in another.

In the past, this was the domain of top macro hedge funds, relying on a global macroeconomic perspective.

Leopold did something more precise: he used the operator's perspective from the AI industry to find where traditional energy stocks in public markets lagged in pricing.

This path is hard to replicate.

II. Zero Shot: The Most Valuable Thing Is the Rejection List

Evan Morikawa, founder of Zero Shot Fund, also came from OpenAI, with a similarly solid technical background. He went into VC.

Fellow alumni, completely different paths.

Leopold's judgment comes from his concrete experience in AI's most core roles: a firsthand sense of model training costs, data center planning, and energy demand. It can only be accumulated by being in that seat; there's no fast-forward button. Even among those who held core positions at OpenAI, very few are truly qualified to make this kind of call.

In April this year, a new $100 million fund quietly surfaced, named Zero Shot.

The name is a term from AI, referring to a model answering a task directly without having seen any examples of it.

The three co-founders are from OpenAI: former DALL-E and ChatGPT application engineering lead Evan Morikawa, OpenAI's original prompt engineer Andrew Mayne, and former researcher and engineer Shawn Jain.

They have already invested in three companies: AI enterprise workflow company Worktrace, AI-enhanced factory robotics company Foundry Robotics, and another project still in stealth.

Among today's AI funds, which often raise tens of billions, $100 million is a small number.

But the sectors they refuse to invest in speak volumes.

Mayne has publicly stated he is bearish on most "vibe coding" tools, the category of products that help you write code with natural language.

The reason is straightforward: he knows what OpenAI has accumulated internally in programming, and he knows how quickly foundation models will erode the moats of such tools. Morikawa, meanwhile, keeps his distance from many "human-centric video data companies" in the robotics sector. These are enterprises that collect human motion data specifically to train robots; in his view, this technical path will hit a wall.

Ordinary VCs can't make these two judgments.

They haven't been at the source of information, haven't seen those internal discussions, so they have no way to judge which path is a dead end.

Zero Shot's advantage lies hidden in the rejection list. In a market where everyone is shouting about AI startups, knowing where the pitfalls are is more valuable than knowing who to bet on. For those who have already dug this ground, a list of landmines is more useful than a treasure map.

They deliberately keep the size at $100 million, for specific reasons.

They know clearly in which stage their advantage is most valuable: the early stage when the technical path hasn't converged. At that stage, those with insider knowledge can distinguish at a glance which path is viable.

Once a project reaches Series C or D, financial data and public information swamp the information advantage, and this card is played out.

The larger the fund, the more it needs to chase the "sure-thing" big sectors, and the more it is fighting from someone else's playbook.

$100 million is their honest assessment of the boundary of their advantage.

III. Being an Angel Investor Is Another Business

Both Mira Murati and Zero Shot Fund invested in former OpenAI colleague Angela Jiang's Worktrace, a company using AI to optimize enterprise workflows.

But the investment logic is more solid than "good relationships."

Mira saw Angela's decision-making style under OpenAI's high-pressure environment, saw her judgment on AI product boundaries, saw her execution within real constraints. These things can't be faked in a two-hour founder pitch, nor fully reconstructed by the most meticulous due diligence.

Angela didn't need to convince Mira to believe in her, because Mira had already formed her judgment. The information cost for angel investment approaches zero, but the information quality far exceeds the market average.

A bigger flywheel belongs to Sam Altman.

Reportedly, Altman decides whether to follow-on invest within hours of hearing about a former employee starting a company, then adds capital from the OpenAI Startup Fund and substantial API resources.

He personally holds no OpenAI equity, but every alumnus's success expands OpenAI's data inlets, distribution channels, and policy influence. He is using capital to maintain an ecosystem that doesn't belong to him but continuously rewards him. This is an invisible form of equity, but it compounds in a very real way.

Many mistake this ecosystem for old colleagues helping each other out.

Comparing it with the PayPal Mafia clarifies the difference.

The PayPal Mafia's cohesion came from shared hardship: surviving the payment wars together, experiencing the eBay acquisition together, forming trench camaraderie during those near-death years. That trust was real, but their judgments about the future were individual. Thiel did venture capital, Musk built rockets, Hoffman built social networks—their paths diverged.

What binds the OpenAI alumni together is a shared bet on the future: AGI will come, the window is limited, now is a once-in-a-millennium opportunity to position. The driving force of belief is more lasting than camaraderie because it directly connects to interests. Once anyone's bet direction proves correct, the entire network benefits.

This also makes the entry threshold for this circle subtle.

If your product is good enough, raising money from this group isn't a problem. But if you harbor doubts about AI's future, or if your startup logic is premised on "AGI is still far away," even with an excellent product, it's hard to get a check from these people.

Differences in worldview end the conversation before the handshake.

IV. From Builders to Investors

The destinations of OpenAI alumni can be grouped into two broad categories.

Ilya, Aravind, Mira all chose entrepreneurship.

But even within entrepreneurship, they are doing completely different things. Aravind is in a fiercely competitive consumer business, Mira is building a tool platform, and Ilya's SSI, which doesn't even have a product, got a $32 billion valuation by betting on the word "safety" itself.

Leopold and Zero Shot chose investing.

Leopold went to public markets; Zero Shot does early-stage VC. Both paths turn judgment into capital rather than into personal execution. They are the minority among OpenAI alumni, but this minority deserves a separate look: when someone is willing to bet but not personally build, it usually means their judgment of the outcome is clear enough that they don't need to explore by doing.

People usually think the highest expression of genius is creation. But this group offers another answer: when judgment is clear enough, spreading it across bets in multiple directions and letting those with execution capabilities build is a more efficient choice.

Leopold's report is titled "Situational Awareness," a military term referring to a pilot's real-time perception of the entire battlefield.

A pilot's situational awareness determines his actions two seconds later; losing it means death. What this group brought out from OpenAI is precisely situational awareness of this AI battlefield. They know the battle's direction, know where the high ground is, know which trench leads to a dead end.

What they are doing now is deploying accordingly.

That the era's smartest people are starting to go all in indicates that, in their view, the answer is already clear enough, so clear that they no longer need to verify it by getting their hands dirty.