What would you do if AI suddenly declared you guilty, and everyone blindly believed its judgment? How would you prove your innocence?

This sounds like a plot from the movie "Minority Report," where crimes are predicted and people are framed. But absurdly, a sloppier version has already played out in reality:

AI misidentified a criminal, leading an innocent woman to be jailed for half a year, nearly wiping out her life.

1. What does it mean for the police to arrest "The Flash"?

Angela Lipps from Tennessee, USA, is this unlucky woman. In July 2025, a team of heavily armed police suddenly burst into her ordinary life, pointed guns at her, and informed her she was under arrest.

She was stunned, as she had no idea what she had done wrong. The police said she was suspected of involvement in a bank fraud case in North Dakota. It seemed like they had solid evidence and had issued a 5-star warrant. But after hearing the reason, she froze in place:

"But, I've never been to that state in my life."

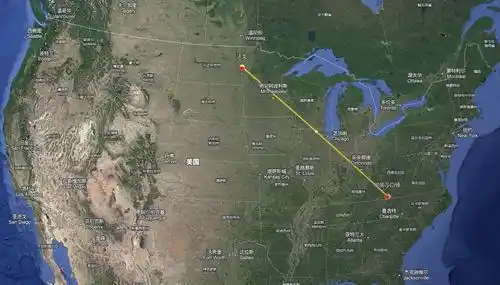

Looking at the map, there is indeed some distance between Tennessee and North Dakota. What connected her to the suspect was AI.

The police ignored her protests when arresting her. She only learned the specific case details after being in prison. The police in Fargo, North Dakota, were investigating a fraud case where the suspect used a fake military identity to scam tens of thousands of dollars from a bank. The police's investigation method was to first check surveillance footage, then consult AI, and finally make an arrest.

You might think they had trained an AI into a Holmes-like detective that solves cases through rigorous logical reasoning, but it wasn't that "complicated." The police ran an AI facial recognition system on screenshots from the surveillance video and then filtered the database for possible suspects.

This time, the AI dropped the ball big time, incorrectly matching Angela Lipps's photo. The lead was then passed to the investigating police station, where the staff made an even bigger mess. After manually checking her driver's license and social media, they decided the facial features, body shape, and hairstyle were similar, and concluded she was the suspect.

Image from GoFundMe

The woman was utterly crushed. Didn't they check any other clues? What if it was just a case of lookalikes? But what came next was even more crushing: after a series of procedural moves, she had no chance to prove her innocence.

Under local procedure, she wasn't taken directly to North Dakota for further investigation but was instead held in a local Tennessee jail. Because she was classified as an "interstate fugitive," she was denied bail and had no chance to be questioned by police and explain herself. She was simply detained for 108 days, from July until the end of October. It's hard to imagine how desperate she felt during this time.

Angela Lipps endured until October 30th, when she was finally taken out of jail to be transferred to North Dakota. At that time, she faced multiple charges. During the trip, she not only had to wear handcuffs but also had a chain around her waist. To her, walking through the airport was like being paraded through the streets.

And this was actually her first time ever on a plane.

What she didn't expect was that after arriving in North Dakota, she had to wait even more. It wasn't until December that she had a formal chance to explain the matter. Her lawyer directly subpoenaed her bank records to compare the timing. At the time of the crime, she was buying pizza 1900 kilometers away in Tennessee. This meant that unless she was "The Flash," she couldn't have committed the crime across spacetime.

It took only 5 minutes for the lawyer to clear things up for her.

By December 24th, her charges were finally dropped, but she couldn't be happy, because by then she was already on the brink of financial ruin.

Angela Lipps spent her 50th birthday in jail. During her imprisonment, she wasn't allowed to wear her dentures, ate junk food, and was under immense mental stress, causing her weight to skyrocket. She entered jail in summer clothes and came out in winter, without even a warm coat, making staying warm a problem, let alone getting home. The police didn't provide any travel money either. After being released, she was essentially stranded in North Dakota, trapped on the day AI wrongfully accused her.

Fortunately, some kind souls gave her a helping hand.

Her lawyer helped arrange a hotel for temporary stay and got her some food to get through the toughest moments. Local non-profit organizations came to her rescue and sent her back home to Tennessee.

But after returning home, restarting her life was hellishly difficult. During her time in jail, her finances collapsed: her rented apartment was gone, her dog was gone, and even her car and the things inside it were gone. Her personal belongings were temporarily stored in a warehouse, but later, because she couldn't afford the rent, all her daily necessities were cleared out. Even her interpersonal relationships were in jeopardy because the neighbors saw her being taken away and "disappearing" for half a year, thinking she had actually committed a crime.

Meanwhile, her bizarre experience quickly spread online, sparking a wave of furious mockery. For example, many expressed disbelief that in the US, some people can go "zero-dollar shopping" (looting without consequence), but if you touch a capitalist's bank, you can be arrested instantly, even if you didn't do it.

Because the incident blew up, her desperate situation took another turn for the better, and she received more help. She has now crowdfunded $80,000 in donations, which can fully be used to restart her life.

However, what netizens most hope for now is that she finds a dream team of lawyers and sues the police to high heaven, potentially winning the case and receiving a further compensation payout.

Because this wasn't a one-off mistake by the police; a whole string of similar wrongful arrests had occurred before this, and some people's experiences were even worse than hers.

2. The Chilling Sci-Fi Moments Brought by AI "Mistakes"

Angela Lipps's experience has actually happened over a dozen times in different regions, even in other countries.

For example, Robert Williams was one of the earliest victims in this type of incident. In 2020, police arrested him in front of his wife and daughter. He was detained for 30 hours, even though he was 20 centimeters taller than the actual perpetrator.

Another exceptionally unlucky person is Chris Gatlin. Based on blurry surveillance footage, AI matched him to a potential suspect in a subway assault case. He was subsequently locked up for 17 months straight, becoming the longest-detained innocent person so far. The absurd part is that only at the very end did investigators discover there was also a bodycam recording that could serve as key evidence, and the suspect in it didn't match him at all.

Porcha Woodruff in 2023 had a similar experience. Police accused her of being involved in a carjacking. She laughed out loud upon hearing it, thinking the police were joking, because she was eight months pregnant at the time, and clearly didn't seem to be in any condition to commit such an act. Nevertheless, she was taken away and held for over ten hours. Later, she lost her lawsuit.

This year, a young man named Alvi Choudhury in the UK was also arrested under similar circumstances, accused of "burglary," but the evidence suggested it was done "remotely"—a scene also documented in film. The AI identified his photo from a 2021 detention. The police laughed out loud when they saw him in person because he looked at least 10 years older than the suspect.

Image from "Jumper"

In 2022, Indian businessman Praveen Kumar was arrested on his way to Switzerland. While transiting through Abu Dhabi, AI matched him to a wanted criminal. The local authorities interrogated him thoroughly, finally realizing he indeed wasn't the suspect, and deported him back to India. However, upon arrival at the Indian airport, he was detained twice more consecutively.

Among these incidents, the most absurd might be the experience of Russian scientist Alexander Tsvetkov. AI said he looked like a murderer, and he was jailed for 10 months.

This scientist was framed twice over, by both AI and humans. In February 2023, he returned to Russia after a scientific expedition, only to be arrested right after getting off the plane. The reason was that AI showed a 55% similarity between him and a sketch of a suspect from a serial murder case over twenty years ago. Additionally, a tainted witness in the case deliberately gave false testimony against him to reduce his own sentence. The fatal part was that the police didn't investigate carefully and simply arrested him.

After being detained, he initially thought it was a small misunderstanding, but the investigation dragged on endlessly. Under immense mental pressure and health issues, he was once compelled to plead guilty, only to recant later. Fortunately, his wife and colleagues from the research institute persistently worked for him, finding countless materials proving he was on an expedition elsewhere at the time of the crime. As media reported on the case, it sparked widespread public discussion. The situation only took a turn in December, and the charges weren't formally dropped until February 2024.

And as AI evolves, this type of case is happening more and more frequently, making it seem more like AI is "commanding," while some humans have become execution machines.

3. The Occupational Hazard of "Letting AI Decide"

The reason this absurdly feels like a "role reversal" is that both AI and humans can drop the ball. Even the strongest AI makes mistakes, and humans are always ready to "slack off."

Take the American Clearview AI system, for example—it's the one that sent the woman from the beginning of the article to jail, but it's no amateur software. Clearview AI boasts the world's largest image database, aggressively scraping tens of billions of images despite fines from multiple countries. Over 3,000 law enforcement agencies in the US use it, and last year it even signed a $9.2 million contract with ICE.

Simply put, Clearview AI is a "facial search engine." You upload a picture, it matches it within its database, and then spits out a bunch of similar images. Ultimately, whether it's the person you're looking for or not is still up to you to decide.
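To make the "facial search engine" idea concrete, here is a minimal sketch of a similarity search. It assumes each face has already been converted into a numeric vector (an "embedding"); the three-number vectors, the names, and the `search_faces` helper are all made up for illustration and say nothing about how Clearview AI actually works internally.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def search_faces(probe, gallery, top_k=3):
    """Rank gallery entries by similarity to the probe and return the top_k.

    The result is a list of (name, score) candidates, not an identification.
    """
    scored = [(name, cosine_similarity(probe, emb)) for name, emb in gallery.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Hypothetical toy embeddings; real systems use hundreds of dimensions.
GALLERY = {
    "person_a": [0.90, 0.10, 0.30],
    "person_b": [0.20, 0.80, 0.50],
    "person_c": [0.85, 0.15, 0.35],  # a lookalike of person_a
}

# Hypothetical embedding of a surveillance screenshot.
probe = [0.88, 0.12, 0.33]

candidates = search_faces(probe, GALLERY, top_k=2)
for name, score in candidates:
    print(f"{name}: {score:.4f}")
```

In this toy data, two different people both score above 0.99 against the same probe. The search can only say "these look similar"; deciding which one, if either, is the right person still takes evidence from outside the system.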

Although AI has made rapid progress in recent years, its "accuracy rate" remains highly controversial, because image search results on experimental test data always differ in various ways from results in real-world use.

For instance, most of the time, the surveillance images the police find have the picture quality of a landline phone shot (extremely low resolution). Add factors like weird lighting, tricky angles, facial obstructions, etc., making recognition quite difficult. The database might also contain old pictures, and the matching results might just be two people who happen to look alike.

And when these errors are applied to specific individuals, they naturally suffer.
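A rough back-of-the-envelope calculation shows why lookalikes become almost inevitable as a database grows. The sketch below assumes each comparison against a different person has a small, independent chance of being wrongly flagged as a match; the one-in-a-million rate is a made-up illustrative number, not a measured figure for any real system.

```python
def expected_false_matches(false_match_rate, gallery_size):
    """Expected number of wrong people flagged in one search."""
    return false_match_rate * gallery_size


def prob_at_least_one_false_match(false_match_rate, gallery_size):
    """Chance that a single search flags at least one wrong person,
    assuming every comparison is independent."""
    return 1.0 - (1.0 - false_match_rate) ** gallery_size


# Hypothetical per-comparison false match rate (an assumption).
ASSUMED_RATE = 1e-6

for size in (1_000_000, 10_000_000_000):
    expected = expected_false_matches(ASSUMED_RATE, size)
    chance = prob_at_least_one_false_match(ASSUMED_RATE, size)
    print(f"gallery={size:,}: expected false matches={expected:,.0f}, "
          f"P(at least one)={chance:.3f}")
```

Under these assumptions, a million-image gallery already gives a search better than even odds of surfacing at least one wrong person, and a gallery in the tens of billions would be expected to surface thousands. Even a very low error rate per comparison therefore cannot justify treating a single hit as an identification.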


In theory, if it were just AI occasionally malfunctioning, there might be a way to fix it, as these results are just "clues." The subsequent "conclusion" still needs human verification. But often, humans are more absurd than AI.

In fact, people have been complaining for years that American police are being led by the nose by AI and falling into "automation bias," meaning they rely on or even over-trust the results given by AI. If AI says these two people match, humans believe it. Height doesn't match? Not my problem. Alibi exists? I just make arrests. What other evidence? Tell it to the prison. They skip basic investigation steps while making others "skip life."

Humans are actually well aware of AI's shortcomings. After paying quite a bit in compensation, many regions in the US have established "firewalls," such as requiring independent evidence besides AI clues for solving cases. Some places have even outright banned the use of this type of AI technology in investigations.

But these constraints can't withstand how incredibly useful AI is. Although there's a probability of failure, clearly the number of successes is higher. Sometimes, the only clue the police painstakingly find is a surveillance photo of "door lock" quality (very poor), so there's a high probability they just want to try their luck with AI. As early as 2023, there were reports that US police had tried over a million searches using Clearview AI. Even later, when explicitly told not to use it, they used it secretly anyway. After all, they could always claim they didn't use it. If this software wasn't allowed, they'd switch to another. If it was banned locally, they'd ask another agency to help use it. They're utterly addicted to using it.

So, once AI first provides an unreliable clue, and then humans slack off by skipping the investigation, the dynamic duo unleashes a combo move, and wrongful convictions naturally follow.

For example, Brazil's Smart Sampa system has been facing similar issues in recent years. In 2024, São Paulo, Brazil, launched Latin America's largest AI facial recognition policing system, reportedly connected to 40,000 cameras.

The good news is that the effect is indeed fierce. Since launch, nearly four thousand criminals have been arrested on the spot, and over three thousand fugitives have been captured. Robberies dropped by nearly 15% in 2025, making it practically an "assembly line for catching thieves."

The bad news is that at least 59 people were misidentified.

Among them were some absurd cases, such as a mentally ill person being taken from a hospital as a criminal. Later, it was discovered his arrest warrant had expired, and he was released. Another man was arrested 4 times in 7 months because AI confused him with a fugitive murderer. Each time, he was taken to the police station and released immediately, only to be arrested again a few days later. He was utterly terrified.

When we used to discuss which jobs couldn't be replaced by AI, we said AI couldn't serve prison time for people. But we never imagined the other side of this question: it can now make people "serve prison time."

This statement was a joke in the past, but now it's almost not news anymore. And it's not just facial recognition; AI occasionally pulls off some wild stunts in object recognition too.

Last year, an AI security system at a US high school identified a bag of chips a young man was holding as a "potential gun," directly triggering an alarm. Eight police cars arrived and officers instantly restrained the young man. After searching him, they found the snack bag in the trash. The scene was extremely awkward, and the young man thought he was done for.


Who would have thought that the stronger AI gets, the bigger the mess some people can make with it. In the past, AI was dumb; it misidentified pictures and was laughed at by the whole internet. Now AI is strong; misidentifying one person lands a human in jail for half a year. In the future, let's not end up with absurd stunts like AI judges, AI lawyers, or "new energy courts." Ultimately, AI itself is just a tool. The key lies in the ability and purpose of its users. Or rather, the stronger AI becomes, the less humans can afford to slack off.

In short, I'm starting to miss that dumb AI a little.

This article is from the WeChat public account "Cool Play Lab," author: Cool Play Lab