“Vibe coding tools are leaking vast amounts of personal and corporate data.” Recently, while researching the trend of "shadow AI," researchers from the Israeli cybersecurity startup RedAccess discovered that AI tools used by developers to build software quickly have exposed medical records, financial data, and internal documents from Fortune 500 companies to the open web.

RedAccess CEO Dor Zvi stated that researchers found approximately 380,000 publicly accessible applications and other assets created by developers using tools like Lovable, Base44, Netlify, and Replit. Among these, about 5,000 contained sensitive corporate information, and upon further inspection, nearly 2,000 applications appeared to expose private data. Axios independently verified multiple exposed apps, and WIRED also separately confirmed these findings.

40% of AI-Coded Apps Expose Sensitive Data, Some Even Grant Admin Access

As AI increasingly takes over the work of modern programmers, the cybersecurity field has long warned that automated coding tools are bound to introduce a large number of exploitable vulnerabilities into software. However, when these vibe coding tools allow anyone to create and host web applications with just a click, the problem is not just vulnerabilities but the almost complete absence of security protection for what those applications hold, including highly sensitive corporate and personal data.

The RedAccess team analyzed thousands of vibe coding web applications created using AI software development tools like Lovable, Replit, Base44, and Netlify. They found that over 5,000 of these had almost no security mechanisms or authentication. Many of these applications, along with their data, can be accessed directly by anyone who obtains the URL. Others had only minimal barriers to entry, such as requiring registration with any email address.

Among these 5,000 AI-coded apps accessible to anyone simply by entering the URL in a browser, Zvi found that nearly 2,000 appeared to expose private data upon further inspection. Zvi said that approximately 40% of these apps exposed sensitive data, including medical information, financial data, corporate presentations and strategic documents, and detailed logs of user conversations with chatbots.

Screenshots of web applications he shared (some of which were verified to still be online and exposed) showed details including a hospital's work assignment information (containing doctors' personally identifiable information), a company's detailed advertising procurement data, another company's market entry strategy presentation, a retailer's complete chatbot conversation logs (including customers' full names and contact details), a shipping company's freight records, and various sales and financial data from multiple companies. Zvi also stated that in some cases, these exposed applications could allow him to gain administrative access to systems, or even remove other administrators.

Zvi mentioned that RedAccess found it surprisingly easy to search for vulnerable web applications. Lovable, Replit, Base44, and Netlify all allow users to host web applications on the AI companies' own domains, rather than on the user's own domain. Therefore, researchers could identify thousands of applications built using these vibe coding tools by simply searching Google and Bing using these company domains combined with other keywords.

In the case of Lovable, Zvi also discovered a large number of phishing websites impersonating major corporations. These sites appeared to be created using the AI coding tool and hosted on the Lovable domain, including brands like Bank of America, Costco, FedEx, Trader Joe’s, and McDonald's. Zvi also pointed out that the 5,000 exposed apps discovered by RedAccess were only those hosted on the AI coding tools' own domains. There could potentially be tens of thousands more applications hosted on user-purchased domains.

Security researcher Joel Margolis noted that verifying whether real data is actually exposed in an unprotected AI-coded web app is not always straightforward. He and his colleagues previously discovered an AI chat toy that exposed 50,000 conversations with children on a website with minimal security. He said the data in vibe coding applications could be just placeholders, or the app itself might be only a proof of concept (POC). Blake Brodie, Head of Public Relations at Wix, the parent company of Base44, likewise said that the two examples provided to Base44 looked like test sites or contained AI-generated data.

Nevertheless, Margolis believes the problem of data exposure from AI-built web apps is very real. He stated that he frequently encounters the type of exposure Zvi described. "Someone on the marketing team wants to build a website; they are not engineers and probably have little security background or knowledge," he pointed out. AI coding tools will do what you ask, but if you don't ask them to do it securely, they won't do it proactively.

“People Can Create at Will,” But the Default Settings Are the Problem

Less than two weeks before RedAccess's research was published, another incident occurred: Cursor, running the Claude Opus 4.6 model, deleted PocketOS's entire production database and all volume-level backups in 9 seconds via an API call to infrastructure provider Railway.

Zvi bluntly stated, "People can create something at will and then use it directly in a production environment, on behalf of a company, without needing any permission. There's almost no boundary to this behavior. I don't think we can give the whole world a security education." He added that his mother also uses Lovable for vibe coding, "but I don't think she considers role-based access control."

RedAccess researchers found that the privacy settings of multiple vibe coding platforms default applications to being public unless users manually change them to private. Many such applications are also indexed by search engines like Google, making it possible for anyone surfing the web to stumble upon them unintentionally.
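For teams that want to audit their own apps, the two conditions described above (reachable without login, and not excluded from search indexing) can be checked from a single unauthenticated response. The following is a minimal sketch under stated assumptions: the function name `exposure_report` and its return shape are hypothetical, it inspects a response you have already fetched rather than calling any platform API, and it only recognizes a robots meta tag whose `name` attribute appears before `content`.

```python
import re

# Hypothetical helper for illustration; not part of any platform's API.
# Matches <meta name="robots" content="..."> (name before content only).
_META_ROBOTS = re.compile(
    r'<meta[^>]+name=["\']robots["\'][^>]*content=["\']([^"\']*)["\']',
    re.IGNORECASE,
)


def exposure_report(status: int, headers: dict[str, str], body: str) -> dict[str, bool]:
    """Classify a response that was fetched WITHOUT any credentials."""
    lowered = {key.lower(): value.lower() for key, value in headers.items()}

    # A 200 without credentials means anyone holding the URL can load the page.
    public = status == 200

    # Crawlers can be asked to skip a page via a header or a meta tag.
    header_noindex = "noindex" in lowered.get("x-robots-tag", "")
    meta = _META_ROBOTS.search(body)
    meta_noindex = bool(meta) and "noindex" in meta.group(1).lower()

    return {
        "public": public,
        "indexable": public and not (header_noindex or meta_noindex),
    }
```

A page that reports `public` but not `indexable` is still open to anyone who has the link; `noindex` only reduces the chance of it being found through a search engine, so it is not a substitute for authentication.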

Zvi believes that current AI web application development tools are creating a new wave of data exposure, rooted in a combination of user error and insufficient security safeguards. However, a more fundamental issue than any specific security flaw is that these tools enable a whole new category of people within organizations to create applications. These users often lack security awareness and bypass the company's existing software development processes and pre-deployment security review mechanisms.

"Anyone in the company, at any time, can generate an application, completely bypassing any development process or security checks. People can use it directly in a production environment without asking anyone's opinion. And that's exactly what they are doing," Zvi said. "The end result is that corporations are essentially leaking private data through these vibe coding applications. This is one of the largest-scale incidents ever, where people are exposing corporate or other sensitive information to anyone in the world."

In October last year, Escape.tech scanned 5,600 public vibe coding applications and found that over 2,000 had high-risk vulnerabilities, over 400 exposed sensitive information (including API keys and access tokens), and 175 exposed personal data (including medical records and bank account information). All vulnerabilities found by Escape existed in real production systems and could be discovered within hours. In March this year, the company completed an $18 million Series A funding round led by Balderton, with one of its core investment rationales being the security gaps created by AI-generated code.

Gartner's "Predicts 2026" report pointed out that by 2028, the prompt-to-app approach adopted by "citizen developers" will increase software defect volume by 2,500%. Gartner believes a major new characteristic of such defects is that AI-generated code is syntactically correct but lacks an understanding of overall system architecture and complex business rules. The cost of fixing these "deep-context errors" will erode budgets originally intended for innovation.

Responses and Rebuttals from the Platforms

Three AI coding companies have contested the claims made by RedAccess researchers, stating that the information shared was insufficient and that they were not given enough time to respond. However, Zvi said that for dozens of exposed web applications, RedAccess proactively contacted the suspected owners. Executives from the companies stated that they take such reports seriously, while also noting that an app being publicly accessible does not necessarily mean there is a data breach or security vulnerability. Nonetheless, these companies did not deny that the web applications discovered by RedAccess were indeed publicly exposed.

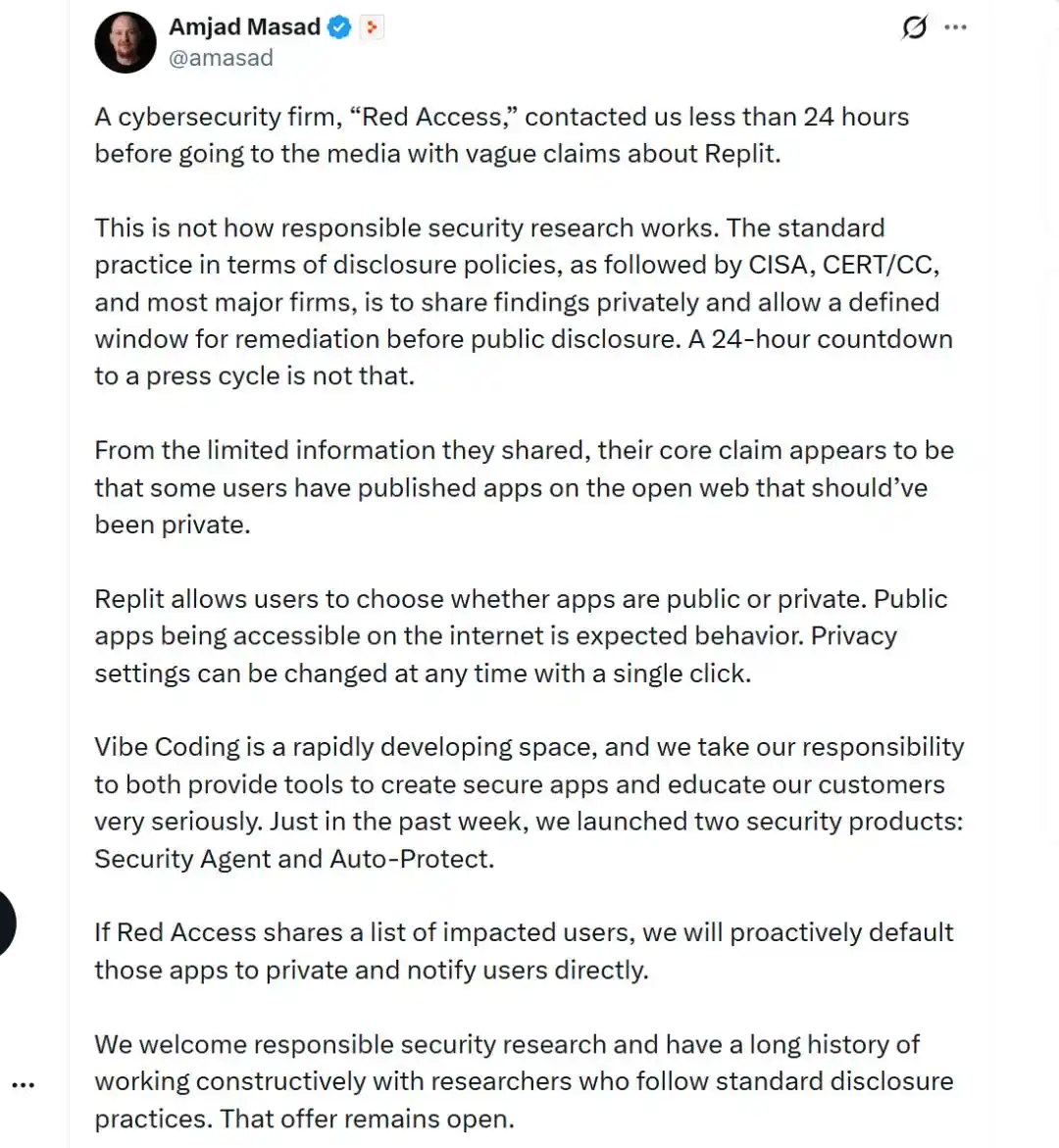

Replit's CEO, Amjad Masad, stated that RedAccess only gave them 24 hours to respond before disclosure. In his response on X, he wrote, "Based on the limited information they shared, the core claim from RedAccess appears to be: some users have published apps that should be private to the open internet. Replit allows users to choose whether their app is public or private. Public apps being accessible on the internet is expected behavior. Privacy settings can also be changed with one click at any time. If RedAccess shares the list of affected users, we will proactively default those apps to private and notify users directly."

A spokesperson for Lovable responded in a statement, "Lovable takes reports of data exposure and phishing websites very seriously, and we are actively obtaining the necessary information to investigate. This matter is currently ongoing. It should also be noted that Lovable provides developers with tools to build applications securely, but the ultimate responsibility for how an application is configured lies with the creator."

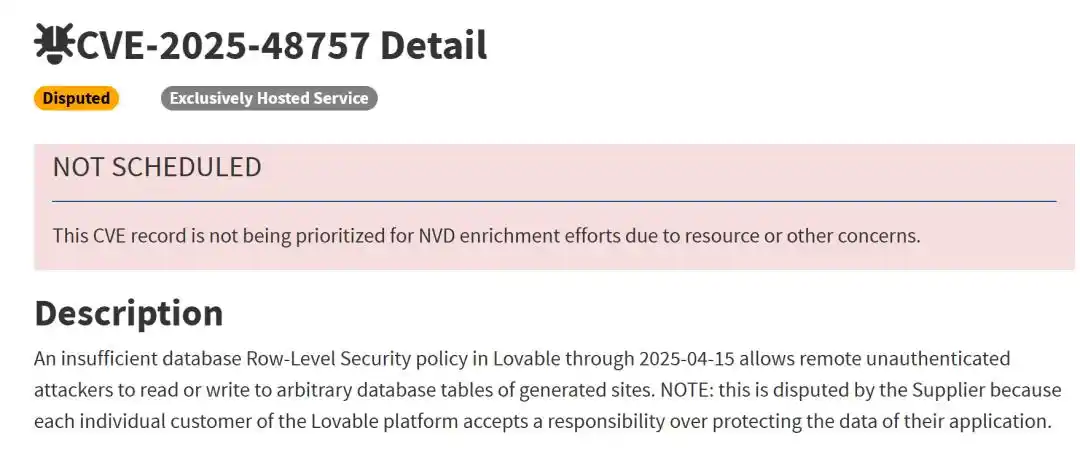

The previously published CVE-2025-48757 documented that Supabase projects generated by Lovable had insufficient or even missing Row-Level Security (RLS) policies. Some queries completely bypassed access control checks, leading to data exposure in over 170 production applications. The AI generated the database layer but did not generate the security policies that should have restricted data access. Lovable contested the CVE classification, stating that protecting application data is the customer's own responsibility.
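To make the idea of a row-level policy concrete, here is a toy model in plain Python. It is only an illustration of the concept: it is not Supabase or PostgreSQL syntax, the `Row` type, the `TABLE` contents, and both function names are hypothetical, and in a real deployment the rule would be enforced by the database rather than by application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Row:
    owner_id: str
    note: str


# Hypothetical in-memory table standing in for a database table.
TABLE = [
    Row("alice", "alice's lab result"),
    Row("bob", "bob's invoice"),
]


def select_without_policy(requester_id: str) -> list[Row]:
    # No row-level check: every caller receives every row,
    # regardless of who they are.
    return list(TABLE)


def select_with_policy(requester_id: str) -> list[Row]:
    # Row-level rule: a row is visible only to its owner.
    return [row for row in TABLE if row.owner_id == requester_id]
```

With the policy missing, a request made as `"bob"` also returns Alice's row; with the policy in place, the same request returns only Bob's row, and an unknown requester gets nothing.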

Blake Brodie, Head of Public Relations at Wix, the parent company of Base44, said in a statement: "Base44 provides users with robust tools to configure the security of their applications, including access control and visibility settings." She added, "Turning these controls off is an intentional and simple action that any user can perform. If an application is publicly accessible, that reflects a user's configuration choice, not a platform vulnerability."

Brodie also pointed out, "It's very easy to fabricate apps that appear to contain real user data. Without providing us with any verified cases, we cannot assess the veracity of these allegations." In response, RedAccess countered that they did provide relevant examples to Base44. RedAccess also shared several anonymized communication records showing that Base44 users thanked the researchers for alerting them to their apps' exposure issues, after which the apps were secured or taken down.

Separately, Wiz Research discovered last July that Base44 had a platform-level authentication bypass vulnerability. An exposed API endpoint allowed anyone to create a "verified account" in a private application using only a publicly visible `app_id`. This vulnerability was akin to standing at the locked door of a building, shouting out a room number, and having the door automatically open. Wix fixed the vulnerability within 24 hours of Wiz's report, but the incident exposed a broader issue: on these platforms, millions of applications are created by users who often assume the platform has handled security for them, while the actual authentication mechanisms are very weak.
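The class of flaw described here, treating a public identifier as if it were a credential, can be sketched in a few lines. This is a hypothetical illustration, not Base44's actual API or its fix: the `APPS` registry, the `invite_token` field, and both function names are assumptions, and an owner-issued secret is just one possible way to gate registration.

```python
import hmac

# Hypothetical data: one private app with a public identifier
# and a secret invite token issued by its owner.
APPS = {"app_123": {"invite_token": "s3cret-invite", "members": set()}}


def register_weak(app_id: str, email: str) -> bool:
    # Flawed check: knowing the public app_id is treated as sufficient.
    app = APPS.get(app_id)
    if app is None:
        return False
    app["members"].add(email)
    return True


def register_strict(app_id: str, email: str, invite_token: str) -> bool:
    # Stricter check: the caller must also present a secret the owner issued.
    app = APPS.get(app_id)
    if app is None:
        return False
    # Constant-time comparison avoids leaking the token through timing.
    if not hmac.compare_digest(invite_token.encode(), app["invite_token"].encode()):
        return False
    app["members"].add(email)
    return True
```

In the weak version, anyone who can read the `app_id` from a public URL or page source becomes a member of the private app; in the strict version, the identifier alone is not enough.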

Reference Links:

https://www.wired.com/story/thousands-of-vibe-coded-apps-expose-corporate-and-personal-data-on-the-open-web/

https://www.axios.com/2026/05/07/loveable-replit-vibe-coding-privacy

https://venturebeat.com/security/vibe-coded-apps-shadow-ai-s3-bucket-crisis-ciso-audit-framework

This article is from the WeChat public account "AI Frontline" (ID: ai-front), author: Hua Wei