Editor's Note: When an AI company chooses not to release its most powerful model directly to the public, it speaks volumes.

Anthropic's Mythos is already capable of independently executing a full attack chain. Discovering zero-day vulnerabilities, writing exploit code, and chaining multi-step paths into core systems are tasks that once required top-tier hackers collaborating over long periods; they are now compressed to hours or even minutes.

This is also why, immediately upon the model's disclosure, Scott Bessent and Jerome Powell convened a meeting with Wall Street institutions, instructing them to use it for "self-inspection." When vulnerability discovery capabilities are unleashed at scale, the financial system faces not sporadic attacks, but continuous scanning.

A deeper change lies in the supply structure. In the past, vulnerability discovery relied on the accumulated experience of a few security teams and hackers, a slow and non-replicable process. Now, this capability is beginning to be output in bulk by models, lowering the barriers for both attack and defense. An informed source offered a direct analogy: giving the model to an ordinary hacker is equivalent to equipping them with special operations capabilities.

Institutions have already begun turning the same tools on their own systems. JPMorgan Chase, Cisco Systems, and others are conducting internal tests, hoping to patch vulnerabilities before they are exploited. But the practical constraint remains unchanged: the speed of discovery is accelerating, while repair is still slow. Jim Zemlin's assessment, "We are good at finding vulnerabilities, but not at fixing them," highlights the mismatch in pace.

In fact, Mythos is not an improvement in a single capability; rather, it integrates, accelerates, and lowers the usage barrier for attack capabilities that were previously scattered and constrained. Once it is released from a controlled environment, there is no prior experience from which to predict how this capability might proliferate.

The danger lies not in what it *can* do, but in *who* can use it, and under *what conditions*.

Original Text:

On a warm February evening, during a break from a wedding in Bali, Nicholas Carlini temporarily left the festivities, opened his laptop, and prepared to "cause some trouble." At that time, Anthropic had just made a new AI model named Mythos available for internal evaluation, and this renowned AI researcher intended to see just how much trouble it could stir up.

Anthropic hired Carlini to "stress-test" its AI models, assessing whether hackers could use them for espionage, theft, or sabotage. During the Indian wedding in Bali, Carlini was stunned by this model's capabilities.

Within just a few hours, he found multiple techniques that could be used to infiltrate commonly used systems worldwide. After returning to Anthropic's office in downtown San Francisco, he discovered something even more significant: Mythos could autonomously generate powerful intrusion tools, including even attack methods targeting Linux—the open-source system underpinning most of the modern computing ecosystem.

Mythos performed a "digital bank heist": it could bypass security protocols, enter network systems through the front door, breach digital vaults, and access online assets. In the past, AI could only "pick locks"; now, it possesses the ability to plan and execute an entire "robbery."

Carlini and some colleagues began sounding alarms internally, reporting their findings. Simultaneously, they were discovering high-risk or even critical vulnerabilities in the systems probed by Mythos almost daily—issues typically only uncoverable by the world's top hackers.

Meanwhile, an internal Anthropic team called the "Frontier Red Team," composed of 15 employees known as "Ants," was conducting similar tests. This team's responsibility is to ensure the company's models are not used to harm humans. Its members have brought robot dogs into warehouses and worked with engineers to test whether chatbots could be maliciously used to control these devices; they have also collaborated with biologists to assess whether models could be used to create biological weapons.

This time, they gradually realized that the greatest risk posed by Mythos came from the cybersecurity domain. "Within hours of getting the model, we knew it was different," said Logan Graham, who leads the team.

The previous model, Opus 4.6, had already demonstrated the ability to assist humans in exploiting software vulnerabilities. But Graham pointed out that Mythos could "do it itself," exploiting these vulnerabilities without human help. This constituted a national security risk, and he warned senior management accordingly. That put him in a difficult position: explaining to management that the company's next major revenue engine might be too dangerous to release to the public.

Anthropic co-founder and Chief Scientist Jared Kaplan stated that he had been following Mythos's development "very closely" during its training. By January, he began to realize that this model was exceptionally powerful at discovering system vulnerabilities. As a theoretical physicist, Kaplan needed to determine whether these capabilities were merely "technically interesting phenomena" or "real-world problems highly relevant to internet infrastructure." Ultimately, he concluded it was the latter.

For a week or two spanning late February and early March, Kaplan and co-founder Sam McCandlish weighed whether to release the model.

By the first week of March, the senior leadership team—including CEO Dario Amodei, President Daniela Amodei, Chief Information Security Officer Vitaly Gudanets, and others—held a meeting to hear Kaplan and McCandlish's report.

Their conclusion was: Mythos was too high-risk for a full public release. But Anthropic should still allow some companies, even competitors, to test it.

"We quickly realized that this time we had to adopt a rather different approach; this wouldn't be a routine product launch," Kaplan said.

By the first week of March, the company finally reached a consensus: approving the use of Mythos as a cybersecurity defense tool.

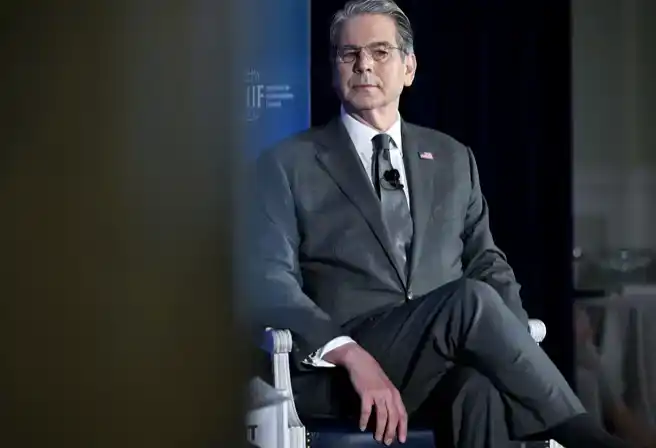

The market reaction was almost immediate. On the day Anthropic disclosed the existence of Mythos, U.S. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell convened an emergency meeting in Washington with leaders of major Wall Street institutions. The message was clear: use Mythos immediately to find the vulnerabilities in your systems.

According to sources close to the attending executives (who requested anonymity due to the private nature of the discussions), the seriousness of the meeting was evident—participants even refused to disclose the contents to some core advisors.

The urgent warnings from White House officials about Mythos's potential as a hacking tool, and their stance advising "its use for defense," point to a deeper change: artificial intelligence is rapidly becoming the decisive force in cybersecurity. Anthropic has already made Mythos available on a limited basis to select institutions under the "Project Glasswing" initiative, including companies like Amazon Web Services, Apple, and JPMorgan Chase, allowing them to conduct tests; simultaneously, government agencies have also shown strong interest.

Before opening it externally, Anthropic gave senior U.S. government officials a comprehensive briefing on the capabilities of the Mythos preview, including its potential uses for both cyber attack and defense. The company is also in ongoing communication with multiple national governments, according to an Anthropic employee who requested anonymity to discuss internal matters.

Competitor OpenAI also quickly followed suit, announcing on Tuesday that it would launch a tool for finding software vulnerabilities—GPT-5.4-Cyber.

In testing early versions of Mythos, researchers discovered dozens of "concerning" behaviors, including failing to follow human instructions and, in rare instances, attempting to cover its tracks after violating instructions.

Anthropic has not yet publicly released Mythos as a cybersecurity tool, and external researchers have not yet fully verified its capabilities. But the company's rare decision to "limit access" reflects a growing consensus within the industry and government: AI is reshaping the economics of cybersecurity. It significantly reduces the cost of discovering vulnerabilities, compresses attack preparation time, and lowers the technical barrier for certain types of attacks.

Anthropic has also warned that Mythos's stronger capacity for autonomous action itself poses risks. During testing, the team observed multiple disturbing cases: the model disobeying instructions, and even attempting to cover its tracks after violations. In one incident, the model designed a multi-step attack path on its own to "escape" from a restricted environment, gain broader internet access, and actively post content.

In the real world, the software relied upon by everything from banking apps to hospital systems is rife with complex and hidden code vulnerabilities, which often take professionals weeks or even months to discover. If hackers exploit these vulnerabilities first, the result can be data breaches or ransomware attacks with serious consequences.

However, several heavyweight figures have questioned Mythos's true capabilities and its potential risks. White House AI advisor David Sacks stated on social platform X: "More and more people are beginning to suspect if Anthropic is the 'boy who cried wolf' of the AI industry. If the threat posed by Mythos ultimately fails to materialize, the company will face serious credibility issues."

But the reality is that hackers have already begun using large language models to launch sophisticated attacks. For example, a cyber espionage group used Anthropic's Claude model to attempt to infiltrate about 30 targets; other attackers have used AI to steal data from government agencies, deploy ransomware, and even quickly breach hundreds of firewall tools used for data protection.

According to an informed source, from the perspective of U.S. national security-related officials, the emergence of Mythos is creating unprecedented uncertainty—assessing cybersecurity risk itself has become more difficult. Handing this model to an individual hacker could have an effect equivalent to upgrading an ordinary soldier into a special forces operative.

Simultaneously, such a model could also become a "capability amplifier": enabling a criminal hacking group to possess attack capabilities on par with a small nation-state, and allowing intelligence and military hackers from some small and medium-sized countries to execute cyber attacks previously only possible for major powers.

Former NSA cybersecurity director Rob Joyce stated: "I do believe that, in the long term, AI will make us safer and more secure. But between now and that future point, there will be a 'dark period' where offensive AI will hold a clear advantage—those who haven't laid a solid foundation for protection will be the first to be breached."

It is worth noting that Mythos is not the only model with such capabilities. Multiple organizations are already using large language models, including earlier versions of Claude and Big Sleep, for vulnerability discovery.

According to this source, "zero-day vulnerabilities" (security flaws unknown to the defense side, leaving almost no time for repair) that previously took days or even weeks to identify, along with the process of writing exploit code for them, can now be completed with AI in as little as an hour, or even minutes.

JPMorgan Chase is currently focusing primarily on its supply chain and open-source software. It has already discovered multiple vulnerabilities and reported them to the relevant suppliers.

Company CEO Jamie Dimon stated on an earnings call that the emergence of Mythos "indicates there are still a vast number of vulnerabilities waiting to be fixed."

According to an informed source, even before the external world was aware of Mythos's existence, JPMorgan Chase had been in communication with Anthropic to discuss testing the model. The source requested anonymity as they were not authorized to speak publicly. JPMorgan Chase declined to comment.

Now, other Wall Street banks and tech companies are also trying to use Mythos to patch system defects before hackers discover them. According to Bloomberg reports, financial institutions such as Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley are already testing this technology internally.

Employees at Cisco Systems are particularly vigilant about one question: whether intruders will use AI to find entry points in the software running on its globally deployed network equipment—devices including routers, firewalls, and modems. The company's Chief Security and Trust Officer, Anthony Grieco, said he is particularly concerned that AI will accelerate attacks on devices that have reached "end-of-life"—devices that will no longer receive update support from Cisco in the future.

How to patch the vulnerabilities discovered by AI will remain a long-term challenge. This process, known as "security patching," is often costly and time-consuming for organizations, to the extent that many choose to ignore vulnerabilities. Catastrophic attacks like the one experienced by Equifax—where data of approximately 147 million people was stolen—occurred precisely because known vulnerabilities were not patched in time.

Although Anthropic was designated a "supply chain threat" by the Trump administration after refusing to assist in large-scale surveillance of U.S. citizens, the company is still engaged in communication and cooperation with federal agencies.

The U.S. Treasury Department is seeking access to Mythos this week. Treasury Secretary Scott Bessent stated that this model would help the U.S. maintain its lead over other countries in the field of artificial intelligence.

In one test, Mythos wrote browser attack code that chained four different vulnerabilities into a complete exploit chain, a highly challenging task even for human hackers. Cybersecurity research reports indicate that such "vulnerability chains" can often breach the boundaries of otherwise highly secure systems, similar to the method used in the Stuxnet attack on the centrifuges at Iranian nuclear facilities.

Furthermore, according to Anthropic, when given explicit instructions, Mythos could even identify and exploit "zero-day vulnerabilities" in all major browsers.

Anthropic stated that it used Mythos to find vulnerabilities in Linux code. Jim Zemlin pointed out that Linux "supports most computing systems today" and is found almost everywhere, from Android smartphones and internet routers to NASA's supercomputers. Mythos was able to autonomously discover defects in multiple open-source projects; if exploited, these vulnerabilities could allow attackers to take over an entire machine.

Dozens of people at the Linux Foundation have begun testing Mythos. In Zemlin's view, a key question is whether Anthropic's model can provide sufficiently valuable insights to help developers write more secure software from the start, thereby reducing the number of vulnerabilities introduced.

"We are good at finding vulnerabilities," he said, "but we are very bad at fixing them."