# Related Articles on Misinformation

The HTX News Center offers the latest articles and in-depth analysis on "Misinformation", covering market trends, project news, technology developments, and regulatory policy in the crypto industry.

## Bitcoin

1. How is the 'Bottom Structure' of a Bear Market Formed, and Where Are We Now?

2. BlackRock Is Buying Up Bitcoin & Ethereum Again, And The Numbers Are Staggering

3. Why Has Bitcoin Risen Against the Trend Amid Turmoil?

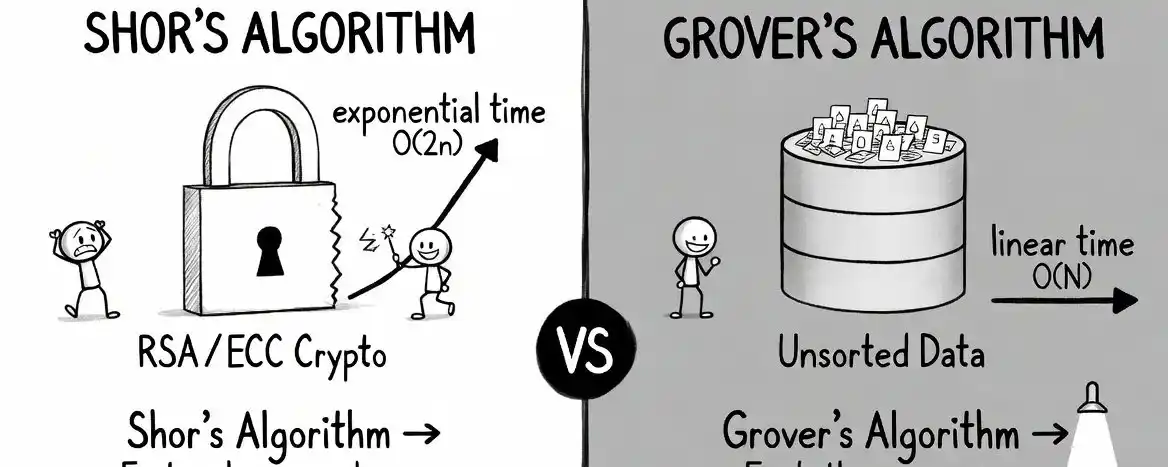

4. Bitcoin Faces Quantum Risk — New Proposal Could Lock Vulnerable Coins

5. 48 Hours, $2.7 Billion: Strategy's STRC ATM Mechanism is Accumulating Bitcoin at an Unprecedented Pace

## Project Updates

1. Massive XRP Adoption Trend Paints The Most Bullish Picture Yet

2. Qubic Starts Dogecoin Mining Phase 2, Shifting Rewards Away From XMR

3. PEPE Flashes Selling Climax Signal, What This Means For Price

4. Analysts Outline a Price Path for Ozak AI That Shows Gradual Expansion Toward the $6 Range

5. Utexo Partners with x402 to Provide Near-Instant USDT Settlement for the Agent Economy