Whoever Controls Computing Power Implicitly Controls the Future of AI: Anastasia, Co-founder of Gonka Protocol


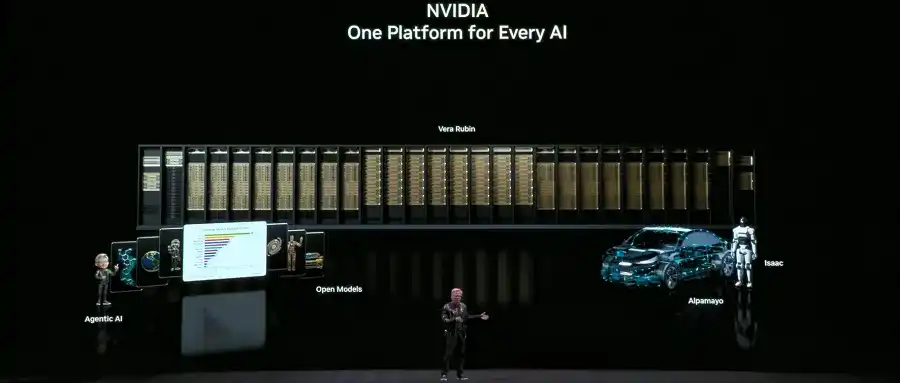

Control over compute, not just over AI models, is the critical chokepoint for AI's future, argues Anastasia Matveeva, co-founder of Gonka Protocol. Public debate focuses on models, she says, but real power lies in the underlying infrastructure: access to GPUs, electricity, and data center capacity. Centralizing that infrastructure creates structural barriers to innovation, entrenches a rent-extraction model, and introduces systemic fragility.

Gonka is a permissionless global network designed to decentralize AI compute. Anyone can contribute GPU resources to it, or use them, through an open, programmatic API. Central to its efficiency is an architecture that minimizes overhead, so that most compute goes to actual AI workloads (primarily inference) rather than to maintaining the network itself. Rewards and governance are tied to verified compute contribution, not to capital stake.
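The article does not say how Gonka measures or verifies compute, or how rewards are actually calculated. As a rough illustration of "rewards tied to verified compute, not capital stake," here is a minimal sketch assuming a single epoch reward split pro rata by verified compute units. `Participant`, `split_epoch_reward`, and the compute unit are all hypothetical names, not Gonka's API. The `stake` field is included only to show that it has no effect on the payout.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass
class Participant:
    name: str
    stake: int             # capital locked up; deliberately ignored by the reward rule
    verified_compute: int  # hypothetical units of work that passed verification this epoch


def split_epoch_reward(total_reward: int, participants: list[Participant]) -> dict[str, Fraction]:
    """Split one epoch's reward in proportion to verified compute (hypothetical model)."""
    total_work = sum(p.verified_compute for p in participants)
    if total_work == 0:
        # No verified work this epoch, so nobody earns anything.
        return {p.name: Fraction(0) for p in participants}
    # Exact fractions avoid rounding drift; stake never enters the formula.
    return {
        p.name: Fraction(total_reward * p.verified_compute, total_work)
        for p in participants
    }


if __name__ == "__main__":
    nodes = [
        Participant("large_dc", stake=1_000_000, verified_compute=600),
        Participant("home_gpu", stake=0, verified_compute=300),
        Participant("small_lab", stake=50, verified_compute=100),
    ]
    for name, share in split_epoch_reward(1000, nodes).items():
        print(name, share)  # large_dc 600, home_gpu 300, small_lab 100
```

Under this assumption, a home GPU with no stake earns exactly in line with the work it performs, while a large stake on its own earns nothing.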

The protocol addresses scalability and accessibility by letting participants of any size join without permission, with influence proportional to the compute they contribute. It is designed to support the emerging AI agent economy with transparent, dynamic pricing and reliable, verifiable computation. Although it is not currently optimized for strict data sovereignty, its decentralized design avoids accumulating data, and its governance allows the protocol to evolve to meet regulatory demands. Matveeva argues that such decentralized alternatives are urgently needed to prevent a calcified AI future dominated by a few infrastructure gatekeepers.
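To show what "influence proportional to compute power" could mean in a governance vote, here is a minimal sketch assuming a simple compute-weighted majority. The `Vote` type, the `tally` function, the weight unit, and the 50% threshold are hypothetical illustrations, not Gonka's actual governance rules. Note that the tally contains no allowlist check, which mirrors the permissionless model.

```python
from dataclasses import dataclass


@dataclass
class Vote:
    voter: str
    compute_weight: int  # hypothetical: verified compute attributed to this voter
    approve: bool


def tally(votes: list[Vote], threshold: float = 0.5) -> bool:
    """Pass a proposal if compute-weighted approval strictly exceeds `threshold`."""
    total = sum(v.compute_weight for v in votes)
    if total == 0:
        # No weighted votes cast: treat the proposal as failing.
        return False
    yes = sum(v.compute_weight for v in votes if v.approve)
    return yes / total > threshold


if __name__ == "__main__":
    votes = [
        Vote("big_provider", 500, approve=False),
        Vote("mid_1", 200, approve=True),
        Vote("mid_2", 200, approve=True),
        Vote("small_3", 150, approve=True),
    ]
    print("passes:", tally(votes))  # 550 / 1050 ≈ 0.52, so passes: True
```

Here the largest single provider is outvoted because several smaller contributors together supply more compute. Weighting by verified compute, rather than by tokens held, is exactly the distinction the article draws.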

marsbit, 03/03 07:58