Author: Jin Guanghao

A while ago, I attended an AI gathering in Shanghai.

The event itself covered a lot about AI implementation.

But what impressed me the most was a learning method shared by a veteran investor.

He said this method saved him and changed the criteria he uses to evaluate people when investing.

What exactly is it? It's learning to "ask questions."

When you're interested in a question, go talk to DeepSeek, and keep asking until it can't answer anymore.

This technique of "infinite questioning" was quite shocking to hear at the time, but after the event, I put it aside.

I didn't try it, nor did I think about it again.

Until recently, when I came across the story of Gabriel Petersson dropping out of school, using AI to learn, and joining OpenAI.

It suddenly dawned on me what that veteran meant by "asking to the end" in this AI era.

Gabriel's Interview Podcast | Image: YouTube

01 "High School Dropout" Makes a Comeback as OpenAI Researcher

Gabriel is from Sweden and dropped out of high school.

Gabriel's Social Media Profile | Image: X

He used to think he was too dumb to ever work in AI.

The turning point came a few years ago.

His cousin started a startup in Stockholm, working on an e-commerce product recommendation system, and asked him to help.

So Gabriel went. With little technical background and no savings, he even slept on the couch in the company's common lounge for a whole year during the early startup phase.

But he learned a lot that year. Not in school, but forced by the pressure of real problems: programming, sales, system integration.

Later, to learn faster, he switched to contracting, which gave him more flexibility in choosing projects. He deliberately sought out the best engineers to work with and actively asked for feedback.

When applying for a U.S. visa, he faced an awkward problem: this type of visa requires proof of "extraordinary ability" in the field, typically needing academic publications, paper citations, etc.

How could a high school dropout have these?

Gabriel came up with a solution: he compiled his high-quality technical posts from programmer communities as a substitute for "academic contributions." This proposal was actually approved by immigration.

After arriving in San Francisco, he continued to use ChatGPT to self-study math and machine learning.

Now he is a research scientist at OpenAI, working on building the Sora video model.

At this point, you must be curious: how did he do it?

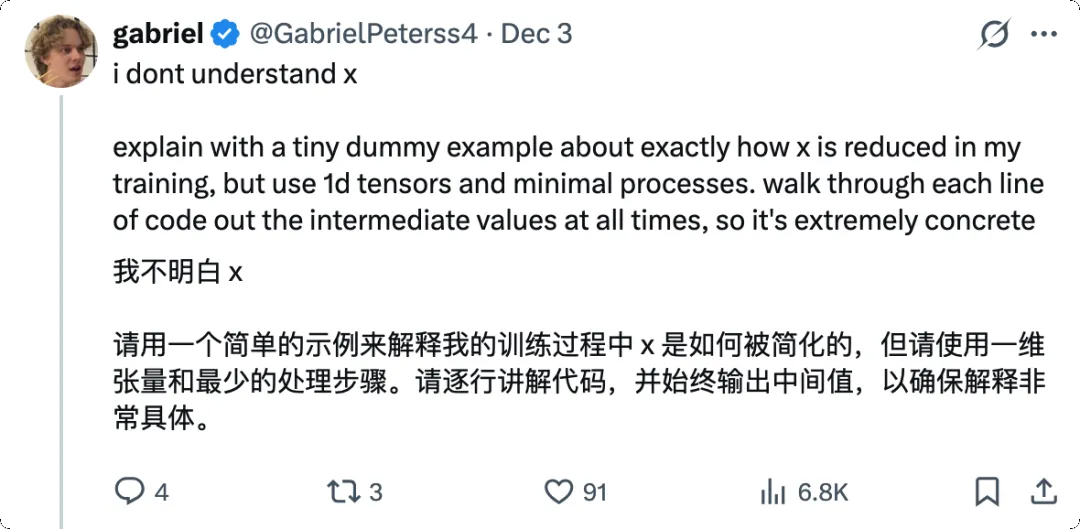

Gabriel's Viewpoint | Image: X

02 Recursive Knowledge Filling: A Counterintuitive Learning Method

The answer is "infinite questioning"—find a specific problem and solve it thoroughly with AI.

Gabriel's learning method is contrary to most people's intuition.

The traditional learning path is "bottom-up": build the foundation first, then learn applications. For example, to learn machine learning, you first need linear algebra, probability, calculus, then statistical learning, then deep learning, and finally you can touch actual projects. This process might take years.

His method is "top-down": start directly with a specific project, solve problems as they arise, and fill knowledge gaps when discovered.

He said in the podcast that this method was hard to promote before because you needed an omniscient teacher to always tell you "what to learn next."

But now, ChatGPT is that teacher.

Gabriel's Viewpoint | Image: X

How exactly? He gave an example: how to learn diffusion models.

Step one, start with the macro concept. He asks ChatGPT: "I want to learn about video models, what's the core concept?" AI tells him: autoencoders.

Step two, code first. He asks ChatGPT to directly write a piece of diffusion model code. At first, many parts are incomprehensible, but that's okay, just get the code running first. Once it runs, you have a basis for debugging.

Step three, the core: recursive questioning. He asks questions about every module in the code.

Drill down layer by layer like this until the underlying logic is thoroughly understood. Then return to the previous layer and ask about the next module.

He calls this process "recursive knowledge filling."
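The drill-down he describes resembles a depth-first traversal: follow one module down to concepts that need no further questions, then climb back up and move to the next module. Below is a minimal sketch of that reading, not Gabriel's actual workflow. The function `recursive_fill`, the stand-in `toy_ask`, and the concept names in `TOY_ANSWERS` are all hypothetical and invented for illustration; in practice the "ask" step would be a conversation with a chat model, and you would decide yourself when a concept is understood.

```python
from typing import Callable, Dict, List, Optional, Set

# Hypothetical stand-in for "ask the AI what this concept depends on".
# The concept names are made up for illustration only.
TOY_ANSWERS: Dict[str, List[str]] = {
    "diffusion model code": ["noise schedule", "denoising network"],
    "noise schedule": ["Gaussian noise"],
    "denoising network": ["convolution", "residual block"],
}


def toy_ask(topic: str) -> List[str]:
    """Return the sub-concepts that an explanation of `topic` relies on."""
    return TOY_ANSWERS.get(topic, [])


def recursive_fill(
    topic: str,
    ask: Callable[[str], List[str]],
    seen: Optional[Set[str]] = None,
    depth: int = 0,
    max_depth: int = 5,
) -> List[str]:
    """Depth-first questioning: understand every prerequisite before the topic.

    Returns the concepts in the order they become "filled in".
    """
    if seen is None:
        seen = set()
    # Skip concepts already covered, and stop if the chain gets too deep.
    if topic in seen or depth > max_depth:
        return []
    seen.add(topic)

    order: List[str] = []
    for sub in ask(topic):
        # Drill down into each gap before returning to this layer.
        order.extend(recursive_fill(sub, ask, seen, depth + 1, max_depth))
    # Only after all gaps below are filled is this topic itself understood.
    order.append(topic)
    return order


learning_order = recursive_fill("diffusion model code", toy_ask)
print(learning_order)
```

The `max_depth` cap is a design choice of this sketch: without some stopping rule, "keep asking why" never terminates, so the learner has to decide how deep is deep enough for the project at hand.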

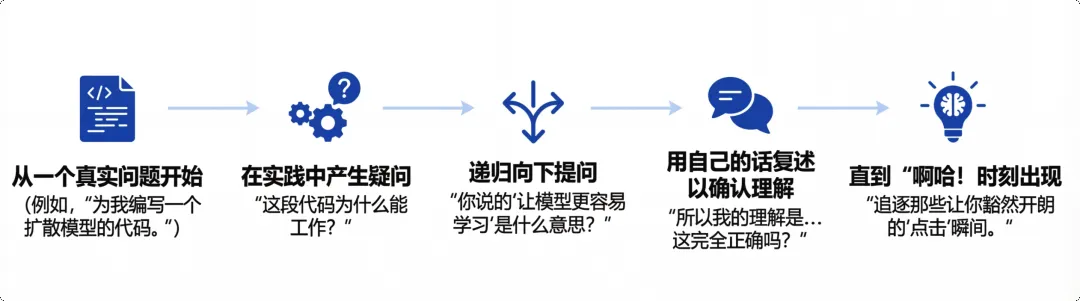

Recursive Knowledge Filling | Image: nanobaba2

This is much faster than studying step-by-step for six years; it might take just three days to build basic intuition.

If you're familiar with the Socratic method, you'll find this is essentially the same idea: approaching the essence of things through layered questioning, where every answer is the starting point for the next question.

Only now, he uses AI as the person being questioned, and since AI is nearly omniscient, it continuously expresses the essence of things in an easy-to-understand way to the questioner.

In fact, by adopting this approach, Gabriel performs "knowledge extraction" from AI, learning the essence of things.

03 Most of Us Using AI Are Actually Getting Dumber

After listening to the podcast, Gabriel's story made me wonder:

Why can he learn so well using AI, while many people feel they are regressing after using AI?

This isn't just my subjective feeling.

A 2025 Microsoft Research paper shows [1] that when people frequently use generative AI, their use of critical thinking significantly decreases.

In other words, we outsource our thinking to AI, and then our own thinking ability atrophies.

Skills follow the "use it or lose it" rule: when we use AI to write code, our ability to write code unaided quietly deteriorates.

The working style of "vibe coding" with AI seems efficient, but in the long run, the programmer's own skills decline.

You throw requirements to AI, it spits out a bunch of code, you run it, feel great. But if you turn off AI and try to write the core logic by hand, many find their minds blank.

A more extreme case comes from the medical field. A medical paper points out [2] that doctors' colonoscopy detection skills decreased by 6% three months after introducing AI assistance.

This number seems small, but think: this is real clinical diagnostic ability, concerning patients' health and lives.

So the question is: with the same tool, why do some people get stronger and others weaker?

The difference lies in what you treat AI as.

If you treat AI as a tool to do work for you, letting it write code, write articles, make decisions for you, then your ability will indeed degrade. Because you skip the thinking process and only get the result. Results can be copied and pasted, but thinking ability doesn't grow out of thin air.

But if you treat AI as a coach or mentor, using it to test your understanding, question your blind spots, and force yourself to clarify vague concepts, then you are actually using AI to accelerate your learning cycle.

The core of Gabriel's method is not "let AI learn for me" but "let AI learn with me." He is always the one actively asking questions; AI just provides feedback and material. Every "why" is asked by himself, every layer of understanding is dug by himself.

This reminds me of an old saying: Give a man a fish, and you feed him for a day; teach a man to fish, and you feed him for a lifetime.

Recursive Knowledge Filling | Image: nanobaba2

04 Some Practical Insights

At this point, some might ask: I'm not in AI research, nor a programmer, what use is this method to me?

I think Gabriel's methodology can be abstracted into a more universal five-step framework. Everyone can use AI to learn any unfamiliar field.

1. Start from actual problems, not from chapter one of a textbook.

Whatever you want to learn, just start doing it, and fill in the gaps when you get stuck.

Knowledge learned this way has context and purpose, much more effective than memorizing concepts in isolation.

Gabriel's Viewpoint | Image: X

2. Treat AI as an infinitely patient tutor.

You can ask it any stupid question, have it explain the same concept in different ways, ask it to "explain like I'm five."

It won't laugh at you, nor will it get impatient.

3. Actively question until you build intuition. Don't settle for superficial understanding.

Can you restate a concept in your own words? Give an example not mentioned in the original text?

Explain it to a layperson? If not, keep asking.

4. There's a trap to be wary of: AI can also hallucinate.

During recursive questioning, if the AI explains an underlying concept incorrectly, you might go further down the wrong path.

So it's advised to cross-validate with multiple AIs at key nodes, ensuring the foundation of your questioning is solid.

5. Record your questioning process.

This forms reusable knowledge assets: next time you encounter a similar problem, you have a complete thought process to review.
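Steps 4 and 5 can be combined in a small habit: at a key node, put the same question to several assistants, flag any disagreement for a closer look, and save the exchange. The sketch below assumes you have already collected the answers by hand; `cross_check`, `log_entry`, and the labels `model_a`, `model_b`, `model_c` are hypothetical names, and comparing answers by normalized text is a deliberately crude assumption that only suits short factual replies.

```python
import json
from collections import Counter
from typing import Dict, Tuple


def cross_check(answers: Dict[str, str]) -> Tuple[str, bool]:
    """Return the most common normalized answer and whether all sources agree."""
    normalized = [text.strip().lower() for text in answers.values()]
    top_answer, count = Counter(normalized).most_common(1)[0]
    return top_answer, count == len(normalized)


def log_entry(question: str, answers: Dict[str, str]) -> str:
    """Build one JSON line recording the question, the answers, and a review flag."""
    consensus, unanimous = cross_check(answers)
    record = {
        "question": question,
        "answers": answers,
        "consensus": consensus,
        # Disagreement means this node deserves checking against a primary source.
        "needs_review": not unanimous,
    }
    return json.dumps(record, sort_keys=True)


# Hypothetical answers copied from three different assistants.
sample = {"model_a": "Yes", "model_b": "yes ", "model_c": "No"}
print(log_entry("Is the noise added at every step?", sample))
```

Appending each line to a file gives a searchable record of the questioning trail, with the disputed nodes already marked.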

In the traditional view, the value of tools lies in reducing resistance and improving efficiency.

But learning is exactly the opposite: moderate resistance, the necessary friction, is actually the prerequisite for learning. If everything is too smooth, the brain enters power-saving mode and remembers nothing.

Gabriel's recursive questioning essentially creates friction.

He constantly asks why, constantly pushes himself to the edge of not understanding, then slowly fills the gaps.

This process is uncomfortable, but it's this discomfort that truly embeds knowledge into long-term memory.

05 Future Career Trends

In this era, the monopoly of academic qualifications is being broken, but the cognitive threshold is invisibly rising.

Most people only use AI as an "answer generator," while a very few, like Gabriel, use AI as a "thinking exerciser."

Actually, similar uses have already appeared in different fields.

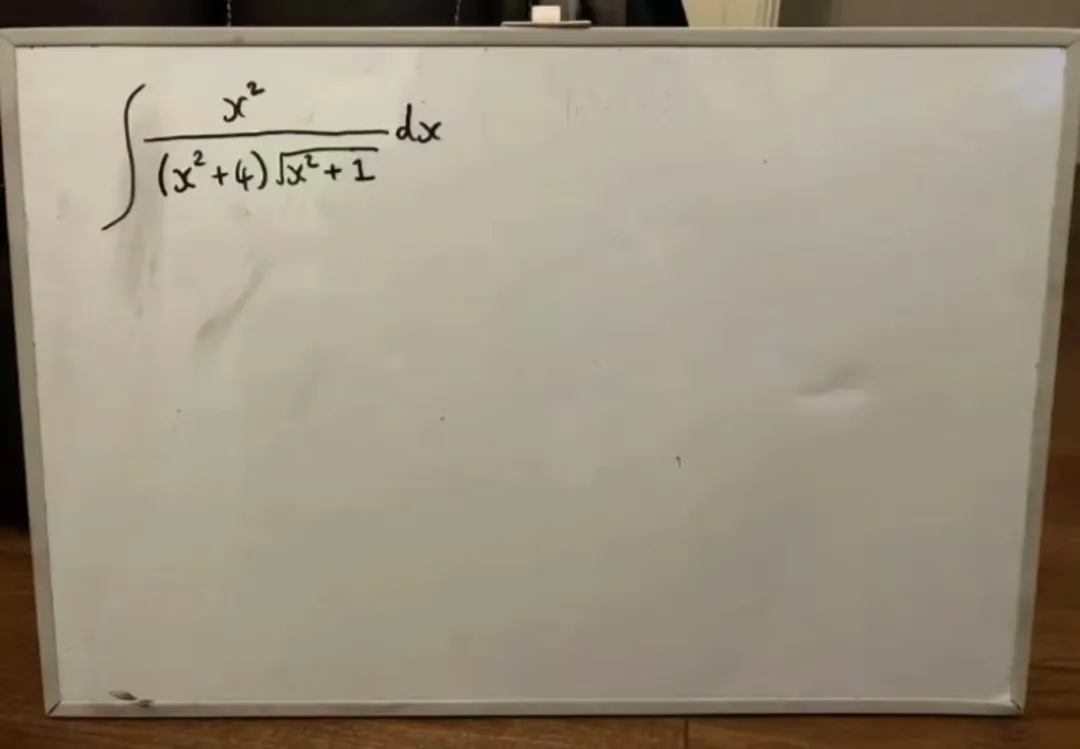

For example, on Jike, I see many parents using nanobanana to tutor their children. But they don't let AI give the answer directly; instead, they have AI generate the problem-solving steps, showing the thinking process step by step, and then analyze the logic of each step with the child.

This way, the child learns not the answer, but the method of solving the problem.

Prompt "Solve the given integral and write the complete solution on a whiteboard" | Image: nanobaba2

Others use features like Listenhub or NotebookLM to convert long articles or papers into podcast form, with two AI voices discussing, explaining, and questioning. Some think this is lazy, but others find that listening to the conversation and then going back to the original text actually improves comprehension efficiency.

Because the conversation naturally raises questions, forcing you to think: Do I really understand this point?

Gabriel's Interview Podcast, Converted into an AI Podcast | Image: notebooklm

This points to a future career trend: specialized and versatile.

Before, to create a product, you needed to know front-end, back-end, design, operations, and marketing. Now, you can, like Gabriel, use the "recursive knowledge filling" method to quickly master 80% of the knowledge in your weak areas.

If you were originally a programmer, you can use AI to fill in design and business logic and become a product manager.

If you were originally a good content creator, you can use AI to quickly fill in coding skills and become an independent developer.

From this trend, one can infer that more "one-person companies" may appear in the future.

06 Reclaim Your Initiative

Now, thinking back to that veteran investor's words, I understand what he really meant.

"Keep asking until it can't answer."

This phrase captures a great mindset for the AI era.

If we are satisfied with the first answer AI gives, we are quietly degenerating.

But if we can, through questioning, force AI to explain the logic thoroughly and then internalize it into our own intuition, AI truly becomes a tool that extends us, rather than us becoming appendages of AI.

Don't let ChatGPT think for you; let it think with you.

Gabriel went from a dropout sleeping on a couch to an OpenAI researcher.

There's no secret in between, just tens of thousands of questions.

In this era full of anxiety about being replaced by AI, the most practical weapon might be:

Don't stop at the first answer. Keep asking.