From late March to early April 2026, two landmark events occurred in the AI video sector within two weeks.

The first: Sora, once revered as the industry's "white moonlight" (its idealized, out-of-reach standard), is gone. On March 24th, OpenAI announced a complete shutdown: the standalone app, the API, and the video features embedded in ChatGPT were all taken offline. OpenAI has fully exited the consumer-grade video generation market.

The second: Less than two weeks later, on April 7th, an anonymous model codenamed "HappyHorse-1.0" unexpectedly topped the most authoritative AI video blind test leaderboard, Artificial Analysis, with an overwhelming score.

One is a Silicon Valley giant admitting defeat in a money-burning game that cost $15 million a day; the other is an unknown technical dark horse knocking Chinese teams off the top spot they had long held on the blind test leaderboard. These two events, falling in the same time window, seem unrelated but point to the same conclusion: the rules of competition in AI video are undergoing a qualitative change, shifting from "whose model is smarter" to "whose computing power is cheaper and whose compliance wall is thicker."

The Truth Behind Topping the Charts: Overwhelming Pure Visuals and a "Specialized" Dark Horse

To judge the quality of a dark horse, first look at the referee.

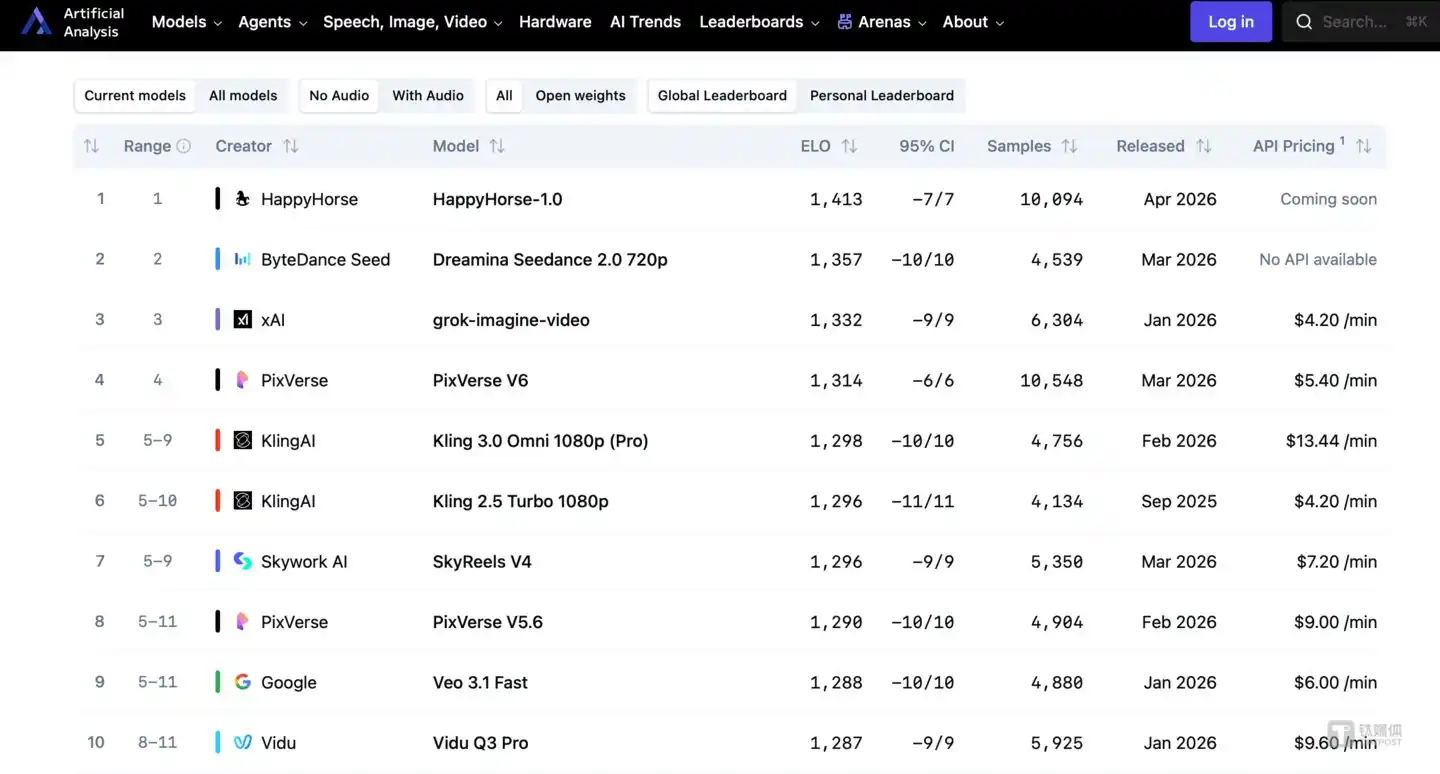

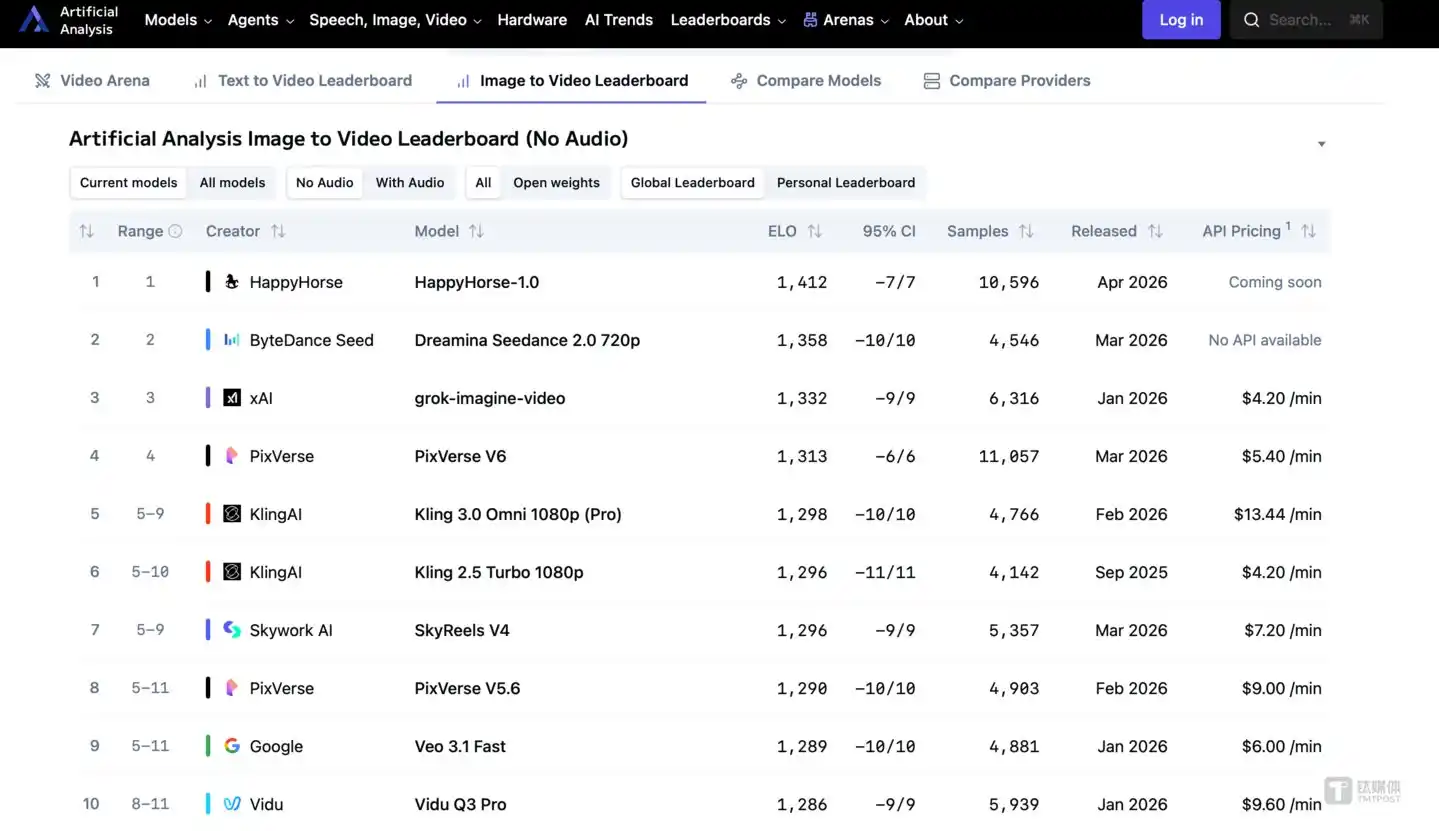

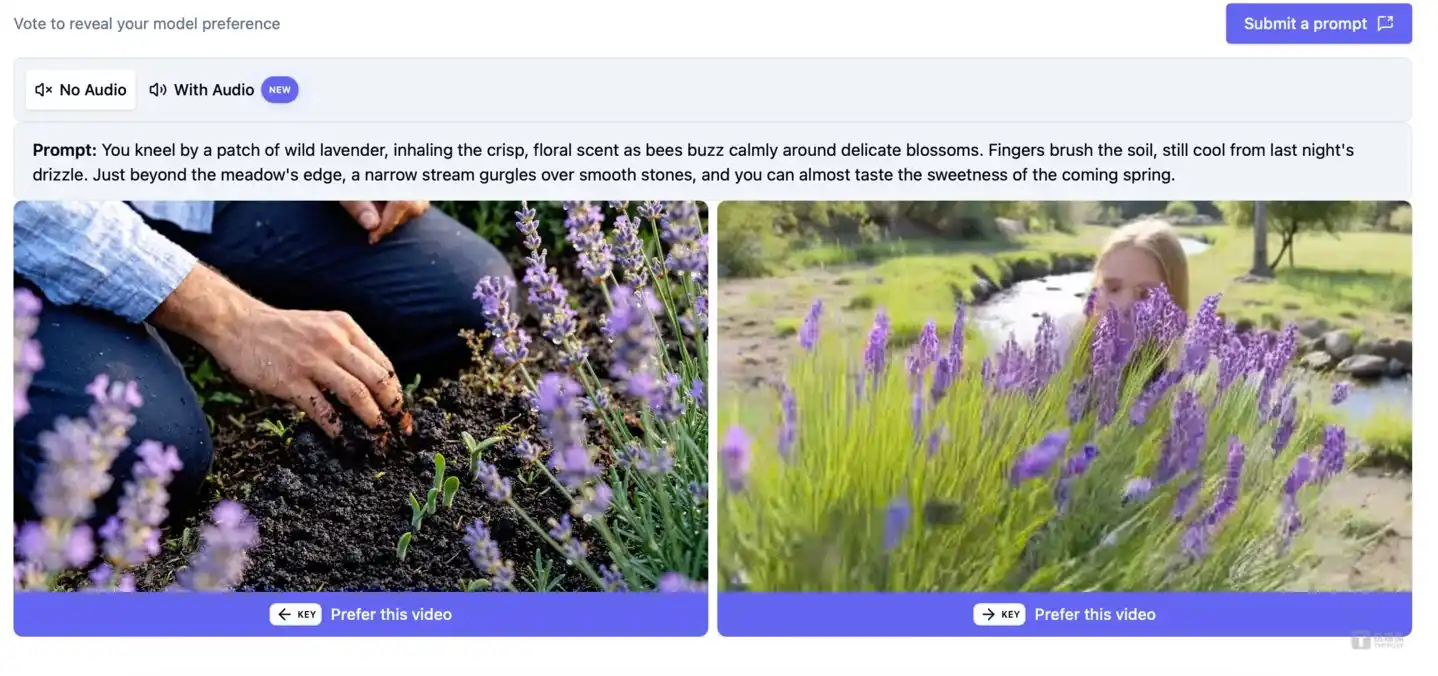

Artificial Analysis Video Arena is not a self-congratulatory PR leaderboard run by vendors. It is an Elo ranking built from the votes of thousands of real users comparing generated videos in fully blind tests.

HappyHorse-1.0's report card is overwhelming.

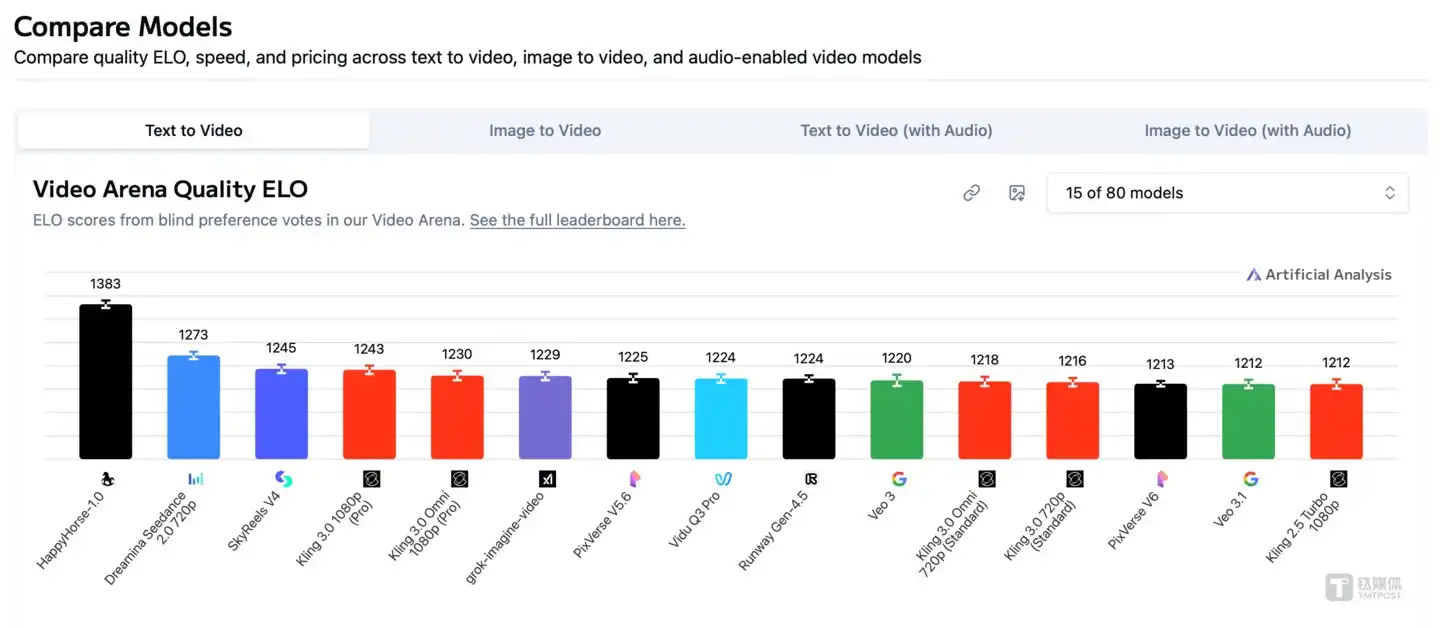

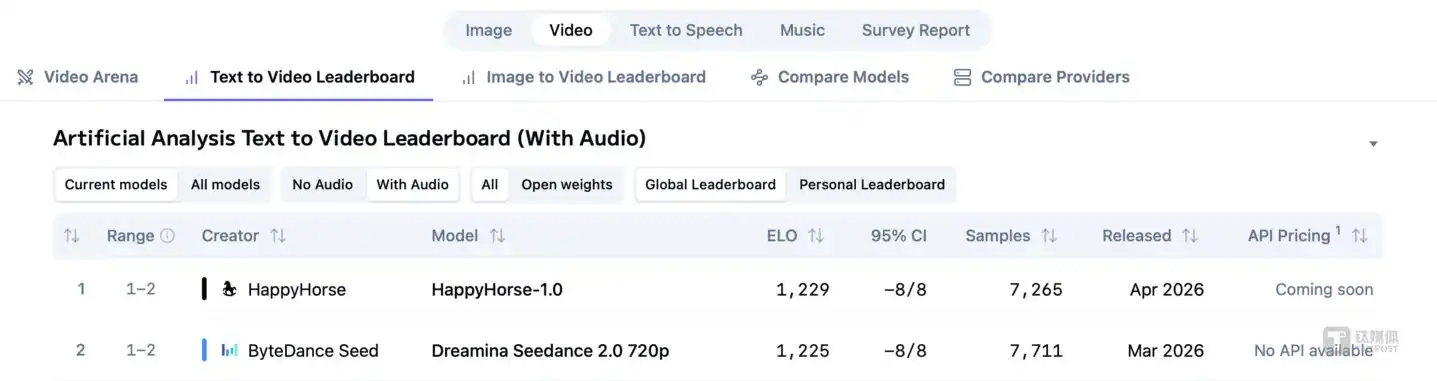

In the "Text-to-Video (No Audio)" track, it scored 1357 points (as of April 9th), a full 84 points ahead of second-place Seedance 2.0 (1273 points). In blind tests, users were significantly more likely to pick it than any other model. The models it stepped over include not only ByteDance's Seedance but also star products like Kling 3.0 and SkyReels V4.

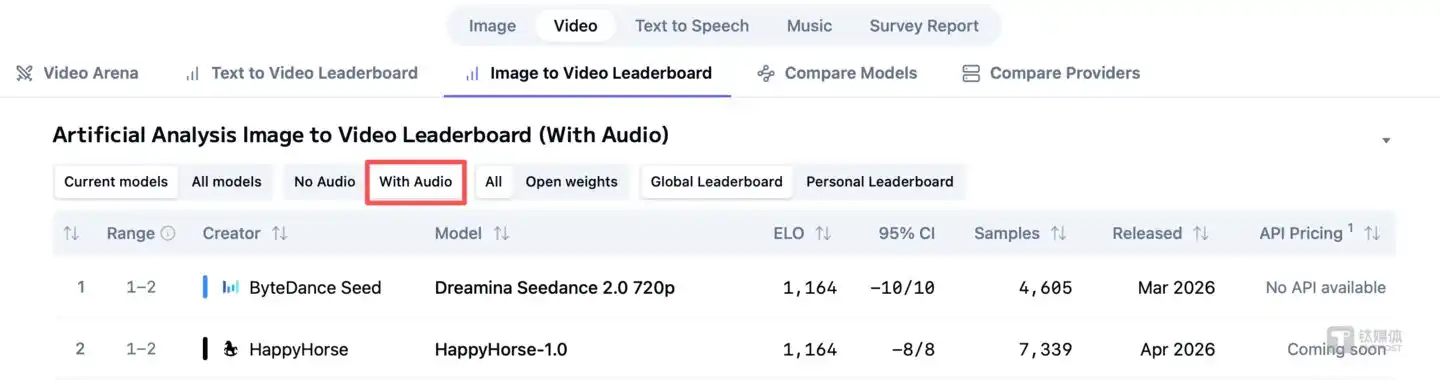

However, its "specialization" is equally real. Once audio is included, it scored 1217 points in the "Text-to-Video (With Audio)" track, losing to Seedance 2.0 (1220 points) by just 3 points. In other words, HappyHorse-1.0 broke through ByteDance's reputation for leading on pure visuals, but Seedance still held its ground on the combined audio-visual experience.
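To put the two score gaps in perspective, here is a minimal sketch that converts them into head-to-head preference rates. It assumes the leaderboard follows the standard logistic Elo model with a 400-point scale; the article does not state the arena's exact formula, so `elo_expected_score` and its `scale` parameter are illustrative rather than the platform's own method.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected share of head-to-head votes for model A under standard logistic Elo.

    Assumption: the conventional 400-point scale, not confirmed by the source.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


# Scores quoted in the article (as of April 9th): HappyHorse-1.0 vs Seedance 2.0.
no_audio = elo_expected_score(1357, 1273)    # 84-point lead
with_audio = elo_expected_score(1217, 1220)  # 3-point deficit

print(f"No audio:   HappyHorse-1.0 preferred in {no_audio:.1%} of matchups")
print(f"With audio: HappyHorse-1.0 preferred in {with_audio:.1%} of matchups")
```

Under that assumption, the 84-point lead corresponds to winning roughly 62% of direct comparisons, while the 3-point gap in the audio track is essentially a coin flip.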

The significance of this chart-topping performance lies more in breaking the market expectation that "the domestic video model landscape has solidified": a new challenger can use a small 15B-parameter model to beat every major player on the pure visual dimension.

How is it so fast?

On a single top-tier H100 GPU, it takes only 38.4 seconds to generate a 1080p HD video with synchronized audio. The speed comes from its 15-billion-parameter (15B) unified Transformer architecture combined with DMD-2 distillation, which compresses inference to just 8 steps.

Simply put, traditional large video models work like an "outsourcing team": a large text model first interprets your request, then hands it to a diffusion model to "draw," with significant loss in the handoff. The unified Transformer architecture used by HappyHorse-1.0 is instead an "all-rounder" that processes both text and visual pixels within the same neural network, eliminating the cross-modal loss in between.

Interestingly, when HappyHorse-1.0 first topped the charts (April 7-8), it was dismissed as "marketing futures," that is, a product sold on promises: its website claimed to be open source, but the GitHub repository and model download links all returned 404 or "coming soon." On April 9th, however, multiple media outlets reported that it had officially announced its open-source release, and users could try it online through text-to-video and image-to-video generation on its official website. It took less than 48 hours to go from "Schrödinger's open source" to actually releasing the weights.

Anonymous Conspiracy: Why Are Big Tech Companies Entering Incognito?

There are currently two mainstream speculations in the industry.

The first is that it comes from the newly established "Future Life Laboratory" at Alibaba's Taotian Group, led by Zhang Di, a former Kuaishou Technology Vice President and head of Kling AI.

The second is that it draws heavily on the underlying technology of daVinci-MagiHuman from domestic startup Sand.ai. Zhihu user Vigo Zhao compared HappyHorse-1.0's public benchmark data with known models line by line and found them highly consistent. Jiemian News also reported that the "most accepted conclusion in technical circles" is that HappyHorse is an optimized iteration of daVinci-MagiHuman.

The above speculations have not been officially confirmed. However, exclusive news this morning claimed that HappyHorse-1.0 was indeed developed by Alibaba, led by former Kuaishou VP and Kling technical head Zhang Di, who returned to Alibaba in November 2025. Additionally, Alibaba Cloud will soon launch the model on its Bailian platform, and Alibaba's recent organizational adjustments are related to this.

As of publication, Alibaba has not responded officially.

The question is: if they hold a dragon-slaying sword, why don't big tech companies hold a press conference? Why lurk anonymously on third-party blind test platforms?

There is currently no official explanation, but inferring from industry practice and business logic, at least two layers of planning lie behind it.

The first layer is free "data harvesting."

The biggest bottleneck in AI video today is the lack of real human preference data. Parachuting anonymously onto a blind test platform is equivalent to having netizens worldwide run free A/B tests on the model. Without spending a penny, the developer can pinpoint the model's real-world defects.

The second layer is avoiding the fatal "compliance landmine."

AI video is currently sitting on the crater of a copyright-lawsuit volcano. Releasing under a real name before the model has digital watermarking and likeness-blocking mechanisms in place can easily attract sky-high claims from Hollywood. Anonymous testing lets a company flex its muscles while keeping a layer of legal insulation.

From another perspective, however, HappyHorse-1.0's moment of celebration highlights Sora's lonely exit. Both are video models, so why such opposite fates? On closer thought, Sora's exit tears open the industry's bloodiest scar: a severely inverted ROI (return on investment).

According to SemiAnalysis estimates, Sora's daily operating cost was as high as $15 million, burning about $5.4 billion a year. Its diffusion model architecture requires rendering about 30 images to generate 1 second of video, but common issues like object distortion and incoherent motion lead to a large number of videos being discarded. The final usability rate is estimated by analysts to be only 5% to 10%.
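The figures above can be checked with a few lines of arithmetic. This is a rough sketch, not a cost model: it assumes the daily cost is constant across a 365-day year and that each generation is an independent attempt that is usable with the quoted probability, so the expected number of attempts per usable video is 1/p. The constant and function names are illustrative.

```python
DAILY_COST_USD = 15_000_000  # SemiAnalysis estimate quoted in the article


def runs_per_usable_video(usable_rate: float) -> float:
    """Expected generations needed for one usable video, assuming independent attempts."""
    if not 0 < usable_rate <= 1:
        raise ValueError("usable_rate must be in (0, 1]")
    return 1.0 / usable_rate


annual_cost_usd = DAILY_COST_USD * 365  # assumption: flat daily cost all year

best_case = runs_per_usable_video(0.10)   # 10% usability
worst_case = runs_per_usable_video(0.05)  # 5% usability

print(f"Annualized cost: ${annual_cost_usd / 1e9:.2f} billion")
print(f"Generations per usable video: {best_case:.0f} to {worst_case:.0f}")
```

A flat 365-day year gives a figure slightly above the roughly $5.4 billion quoted, and a 5% to 10% usability rate implies somewhere between 10 and 20 generations for every video a user actually keeps.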

Producing one usable video therefore consumed ten or more times the compute of a single generation. When a tool cannot be embedded into users' daily workflows and remains a "novelty toy," no one is willing to keep paying. According to data disclosed by an a16z partner, Sora's 1-day retention rate was only 10%, its 7-day rate 2%, its 30-day rate just 1%, and its 60-day rate close to 0%.

Sora, with its $5.4 billion annual cost and cliff-like drop in retention, proved that brute-force compute stacking with pure diffusion models is unworkable. HappyHorse-1.0 offers another answer: 15B parameters, a unified Transformer architecture, 8-step inference, and 38.4 seconds on a single GPU. The gap between the two is not parameter scale but architectural efficiency. The outcome of this architecture war may matter more for the industry than any single chart-topping performance.

The Chinese AI giants remaining on the field, meanwhile, are fighting a different battle: compute economics.

First, look at API call costs:

ByteDance prices the Seedance 2.0 API for 1080p pure video generation at 46 RMB per 1 million tokens. Based on actual tests, generating a 15-second video consumes about 308,880 tokens. Converted, the cost of generating one second of commercial-grade video is about 1 RMB (approx. $0.14).

This is the commercial reality. For the vast majority of enterprises, directly calling a closed-source API that costs about fourteen cents per second is far more attractive than spending millions of RMB on H100 servers to wrestle with so-called "open-source models."
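The per-second figure follows directly from the token pricing. The sketch below reproduces the conversion; the price and token count come from the text, while the exchange rate `RMB_PER_USD` is an assumed round figure used only to illustrate the dollar conversion.

```python
PRICE_RMB_PER_MILLION_TOKENS = 46   # Seedance 2.0 1080p pricing quoted in the article
TOKENS_PER_VIDEO = 308_880          # measured consumption for one 15-second video
VIDEO_SECONDS = 15
RMB_PER_USD = 7.1                   # assumption: approximate exchange rate

cost_per_video_rmb = PRICE_RMB_PER_MILLION_TOKENS * TOKENS_PER_VIDEO / 1_000_000
cost_per_second_rmb = cost_per_video_rmb / VIDEO_SECONDS
cost_per_second_usd = cost_per_second_rmb / RMB_PER_USD

print(f"Cost per 15 s video: {cost_per_video_rmb:.2f} RMB")
print(f"Cost per second:     {cost_per_second_rmb:.3f} RMB (about ${cost_per_second_usd:.2f})")
```

One 15-second clip comes to a little over 14 RMB, or just under 1 RMB per second, which the article rounds to 1 RMB before converting to dollars.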

Million-Dollar Frameworks: The Ultimate Barrier for Giant Rivalry

If you think cheap computing power is the only barrier, you are too naive.

To access Seedance 2.0 and generate videos from reference images of real people, enterprises need to sign an annual prepaid framework contract worth tens of millions of RMB. In addition, new framework signings must pay a security deposit of 50% of the prepaid amount or 1 million RMB, whichever is higher, which is released only gradually after one year.
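The deposit rule described above amounts to a simple maximum. A minimal sketch, assuming the deposit is paid on top of the prepaid amount and that both are denominated in RMB; the function names and the example contract sizes are hypothetical.

```python
DEPOSIT_RATE = 0.5            # 50% of the prepaid amount
DEPOSIT_FLOOR_RMB = 1_000_000  # minimum deposit


def framework_deposit(prepaid_rmb: float) -> float:
    """Deposit owed on a new framework contract: 50% of prepaid or 1M RMB, whichever is higher."""
    return max(DEPOSIT_RATE * prepaid_rmb, DEPOSIT_FLOOR_RMB)


def upfront_commitment(prepaid_rmb: float) -> float:
    """Total cash committed at signing, assuming the deposit is paid in addition to the prepayment."""
    return prepaid_rmb + framework_deposit(prepaid_rmb)


# Hypothetical contract sizes for illustration.
for prepaid in (1_500_000, 10_000_000, 30_000_000):
    print(f"prepaid {prepaid:>12,} RMB -> deposit {framework_deposit(prepaid):>12,.0f} RMB, "
          f"total {upfront_commitment(prepaid):>12,.0f} RMB")
```

The 1 million RMB floor only binds for prepaid amounts below 2 million RMB; at the contract sizes the article describes, the 50% rule dominates and the cash committed at signing is one and a half times the prepaid amount.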

This seven-figure threshold is essentially a bond that makes enterprises bear primary responsibility: through commercial contracts, it transfers the legal risk of generating deepfake videos to leading business customers that have the capacity to absorb it.

In mid-February this year, a video generated by an Irish director using Seedance 2.0, showing a realistic rooftop fight between Tom Cruise and Brad Pitt, went viral. On February 13th, a cease-and-desist letter drafted by Disney lawyer David Singer was delivered to ByteDance. The Motion Picture Association (MPA) subsequently accused Seedance 2.0 in harsh terms of "large-scale unauthorized use of copyrighted content," and the actors' union SAG-AFTRA issued sharp criticism of the unauthorized use of its members' likenesses.

To protect themselves, giants have set extremely high capital thresholds and enterprise qualification reviews (KYC).

They simply don't care what funny videos ordinary consumers can make; they want to become the basic utilities, the "water, electricity, and coal," of industrialized content production for business customers. By monopolizing compute infrastructure and establishing a strict authorization system, they shut out mid- and long-tail competitors entirely.

What does the great reshuffle of the post-Sora era leave for the industry?

The underlying infrastructure game of AI video is already an exclusive card table for capital-heavy, compute-heavy giants. But what is being contested on the table is infrastructure, while golden opportunities are growing in the cracks underneath it.

The core logic is simple: compute costs are visibly plummeting, from several dollars per second in the Sora era, to about 1 RMB per second for today's Seedance 2.0, to a theoretically zero marginal cost when run locally now that HappyHorse-1.0 is open source. Every time the cost drops by an order of magnitude, a new batch of commercial scenarios emerges.

Overall, the three most noteworthy directions in the AI video generation field currently might be:

E-commerce sales video automation. Product promotion videos on domestic short video platforms are still mainly shot manually, costing 500-2000 RMB per piece with a production cycle of 2-5 days. If API compute pushes this cost down to 10-50 RMB and shortens the production cycle to minutes, the entire ad-placement logic will be rewritten: the volume of test creatives can surge from 10 per day to 1000, and the efficiency and accuracy of A/B testing will improve qualitatively.

Short drama industrialized production. Vertical-screen short dramas are exploding in the global market, with single-episode budgets typically ranging from 50,000 to 150,000 RMB, but shooting cycles and actor costs are rigid bottlenecks. Although AI video cannot yet replace real acting, it can already replace 30%-40% of shooting work on "non-emotional" shots such as establishing shots, transition shots, and special effects shots, directly compressing total production costs.

Overseas advertising localization. Launching the same product in Southeast Asia, the Middle East, and Latin America requires advertising materials in different languages, featuring different ethnicities and cultural symbols. The traditional method requires teams in multiple countries to shoot separately. AI video can compress this process so that one person on one computer completes it within a day, and the cost barely rises as markets are added.

These three directions share a common characteristic: they do not require the model to rank first on benchmarks, nor do they require movie-grade image quality, but they do require cost to be low enough, speed fast enough, and stability good enough. This is precisely the scenario where API calls are more suitable than local deployment.
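For the e-commerce case, the cost and volume figures quoted above can be combined into a quick comparison. This is an illustrative sketch only: pairing the 10-per-day volume with manual costs and the 1000-per-day volume with API costs is an assumption made here, and the `Scenario` class is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    cost_low_rmb: float    # lower bound of per-video cost
    cost_high_rmb: float   # upper bound of per-video cost
    videos_per_day: int

    def daily_spend(self) -> tuple[float, float]:
        """Range of daily spend (low, high) in RMB at the stated volume."""
        return (self.cost_low_rmb * self.videos_per_day,
                self.cost_high_rmb * self.videos_per_day)


manual = Scenario("manual shoot", 500, 2000, 10)
api = Scenario("API generation", 10, 50, 1000)

# Per-video cost reduction: most conservative and most optimistic pairings.
min_reduction = manual.cost_low_rmb / api.cost_high_rmb
max_reduction = manual.cost_high_rmb / api.cost_low_rmb

for s in (manual, api):
    low, high = s.daily_spend()
    print(f"{s.name:>15}: {s.videos_per_day:>4} videos/day, {low:,.0f} to {high:,.0f} RMB/day")
print(f"Per-video cost reduction: {min_reduction:.0f}x to {max_reduction:.0f}x")
```

On these numbers, per-video cost falls by one to two orders of magnitude, so a hundredfold increase in test creatives lands at a daily spend of the same order as the manual workflow.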

HappyHorse-1.0 kicked the door open. But standing behind the door is the commercial infrastructure that ByteDance and Kuaishou have been building for two years—computing power supply chains, compliance review systems, and B-end customer networks.

A technical dark horse can win a weekend of applause, but winning the war requires accumulated strength in another dimension. From today, the competition rules for AI video have shifted from "whose model is stronger" to "whose workflow is thicker."

(This article was first published on the Titanium Media App. Author: AGI-Signal. Editor: Lin Shen.)