Author: Lao Bai

Original Title: Crypto × AI from the Primary Market Perspective: An Experiment in Tokenized Illusion

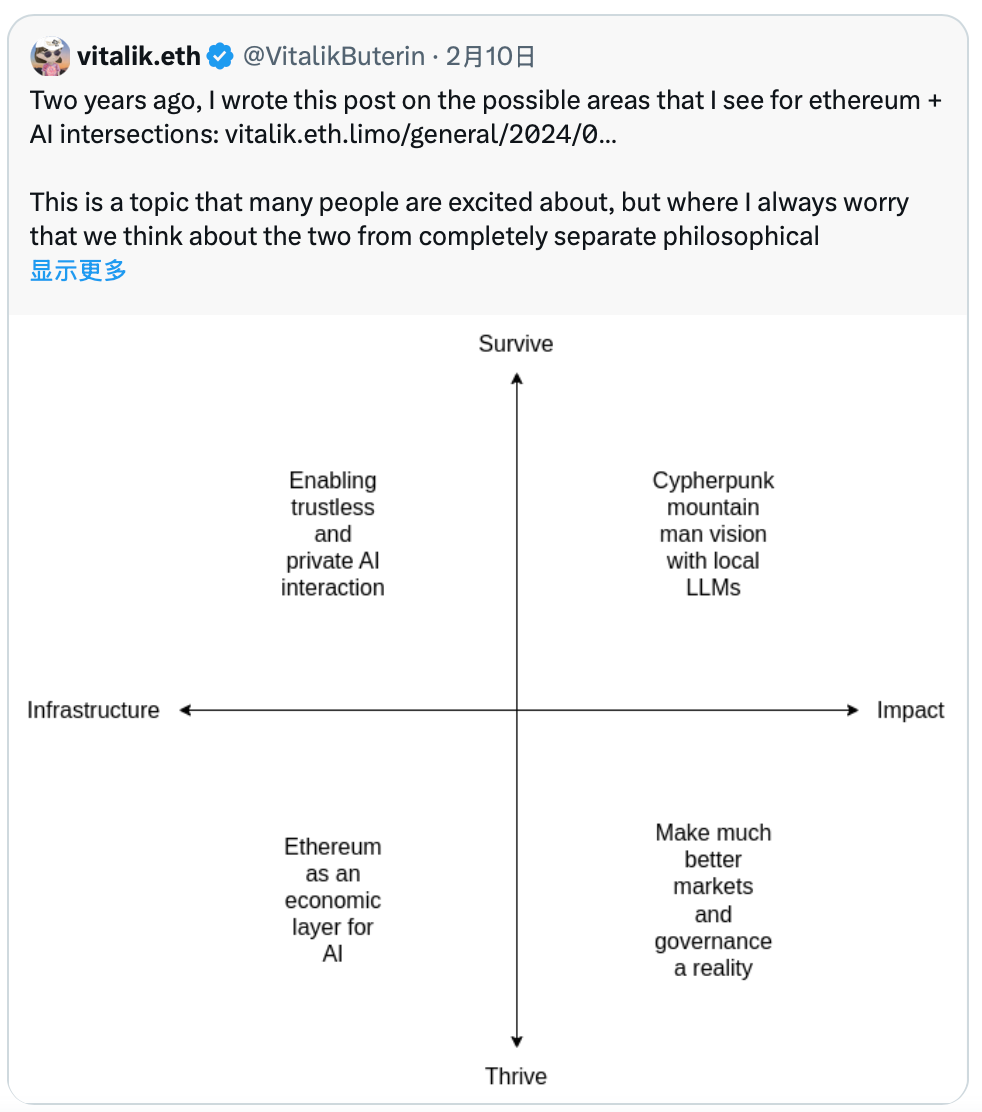

Two years on, V God (Vitalik Buterin) has tweeted on this topic again, so here is my follow-up to the research report I wrote two years ago. Even the timing matches exactly: February 10th. (Related reading: ABCDE: Sorting Out AI+Crypto from a Primary Market Perspective)

Two years ago, V God implied that he was not very optimistic about the various "Crypto Helps AI" trends that were popular at the time. The three popular narratives in the circle back then were computing power assetization, data assetization, and model assetization. My research report two years ago mainly discussed these three narratives, along with some observations and doubts about them from the primary market. V God himself was more optimistic about "AI Helps Crypto".

The examples he gave at the time were:

- AI as a participant in the game;
- AI as the game interface;
- AI as the game rules;
- AI as the game objective.

Over the past two years, we have made many attempts at Crypto Helps AI, but with minimal results. Many sectors and projects ended up issuing a token and stopping there, without real commercial PMF (Product-Market Fit). I call this the "Tokenized Illusion".

1. Computing Power Assetization – Most providers cannot offer a commercial-grade SLA, are unreliable, and frequently go offline. They can only handle simple small and medium model inference tasks, mostly serving niche markets. Revenue is not linked to the token...

2. Data Assetization – The supply side (retail users) has high friction, low willingness, and high uncertainty. The demand side (enterprises) needs structured, context-dependent data from professional suppliers that carry trust and legal liability. DAO-based Web3 project teams find it difficult to provide this.

3. Model Assetization – Models themselves are non-scarce, replicable, fine-tunable, and rapidly depreciating process assets, not final-state assets. Hugging Face itself is a collaboration and dissemination platform, more like GitHub for ML, not an App Store for models. Therefore, so-called "decentralized Hugging Face" projects aiming to tokenize models have mostly ended in failure.

Additionally, over these two years we have tried various forms of "Verifiable Inference", which is a typical story of a hammer looking for a nail. The approaches ranged from ZKML to OPML to game theory, and even EigenLayer shifted its Restaking narrative to Verifiable AI.

But it's basically similar to what happened in the Restaking sector – few AVSs (Actively Validated Services) are willing to pay continuously for extra verifiable security.

Similarly, verifiable inference is mostly verifying "things that no one really needs to be verified." The threat model on the demand side is extremely vague – who exactly are we protecting against?

AI output errors (model capability issues) far outnumber maliciously tampered AI outputs (adversarial problems). The various security incidents on OpenClaw and Moltbook some time ago showed that the real problems come from:

- Wrong strategy design
- Excessive permissions granted
- Unclear boundaries
- Unexpected interactions between tool combinations
- ...

Almost none of the imagined nails like "model being tampered with" or "inference process being maliciously rewritten" exist.
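To make the point concrete, here is a minimal sketch of the kind of boundary check that addresses "excessive permissions" and "unclear boundaries". Everything in it is an assumption for illustration: the `AgentPolicy` class, the tool names, and the spending caps are hypothetical and are not taken from OpenClaw, Moltbook, or any real agent framework. Note that nothing here verifies the inference itself; the check sits entirely outside the model.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPolicy:
    """Hypothetical permission boundary for an AI agent's tool calls."""
    allowed_tools: set[str]
    max_spend_per_call: float
    max_total_spend: float
    spent: float = 0.0
    denied: list[str] = field(default_factory=list)

    def authorize(self, tool: str, amount: float = 0.0) -> bool:
        """Return True only if the call stays inside the declared boundaries."""
        if tool not in self.allowed_tools:
            self.denied.append(f"{tool}: tool not on allow-list")
            return False
        if amount > self.max_spend_per_call:
            self.denied.append(f"{tool}: {amount} exceeds per-call cap")
            return False
        if self.spent + amount > self.max_total_spend:
            self.denied.append(f"{tool}: would exceed total budget")
            return False
        self.spent += amount
        return True


# Illustrative values only: two permitted tools, 50 per call, 100 in total.
policy = AgentPolicy(
    allowed_tools={"read_balance", "send_payment"},
    max_spend_per_call=50.0,
    max_total_spend=100.0,
)

results = [
    policy.authorize("read_balance"),          # allowed, costs nothing
    policy.authorize("send_payment", 40.0),    # allowed, within both caps
    policy.authorize("send_payment", 500.0),   # denied: per-call cap
    policy.authorize("export_private_key"),    # denied: not on allow-list
    policy.authorize("send_payment", 50.0),    # allowed: total becomes 90
    policy.authorize("send_payment", 20.0),    # denied: total would be 110
]
print(results)
print(policy.denied)
```

In this sketch the model's output is never tampered with, yet three of the six calls are still rejected. That is the category of failure the list above describes, and a proof of correct inference would not have caught any of them.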

I posted this picture last year; I wonder if any old-timers remember it.

The ideas V God presented this time are clearly more mature than those of two years ago, partly thanks to the progress made in privacy, X402, ERC8004, prediction markets, and other directions.

Of the four quadrants he laid out this time, half belong to AI Helps Crypto and the other half to Crypto Helps AI. He is no longer clearly biased towards the former, as he was two years ago.

Top left and bottom left – Utilizing Ethereum's decentralization and transparency to solve AI's trust and economic collaboration problems:

1. Enabling trustless and private AI interaction (Infrastructure + Survival): Using technologies such as ZK and FHE to ensure the privacy and verifiability of AI interactions (I am not sure whether the verifiable inference I mentioned earlier counts).

2. Ethereum as an economic layer for AI (Infrastructure + Prosperity): Enabling AI agents to make payments, recruit other bots, post deposits, and build reputation systems through Ethereum, thereby creating a decentralized AI architecture instead of one confined to a single giant platform.

Top right and bottom right – Utilizing AI's intelligent capabilities to optimize the user experience, efficiency, and governance of the crypto ecosystem:

3. Cypherpunk mountain man vision with local LLMs (Impact + Survival): AI as the user's "shield" and interface. For example, local LLMs (Large Language Models) can automatically audit smart contracts, verify transactions, reduce reliance on centralized front-end pages, and safeguard individual digital sovereignty.

4. Make much better markets and governance a reality (Impact + Prosperity): AI deeply participates in Prediction Markets and DAO governance. AI can act as an efficient participant, amplifying human judgment by processing information on a large scale, solving various market and governance problems such as insufficient human attention, high decision-making costs, information overload, and voter apathy.

Previously, we were desperately trying to make Crypto Help AI, while V God stood on the other side. Now we have finally met in the middle, though the meeting point seems to have little to do with the various "XX tokenization" schemes or any AI Layer1. I hope that when I look back at today's post in two years, there will be some new directions and surprises.
