Subjectively speaking, the Ethereum core developer community has been shipping updates at an unusually intense pace since 2025.

From the Fusaka upgrade to Glamsterdam, and on to long-term plans for zkEVM, quantum-resistant cryptography, and the Gas Limit over the next three years, Ethereum has published several roadmap documents spanning three to five years within just a few months.

This pace itself is a signal.

If you read the latest roadmap carefully, a clearer and more radical direction emerges: Ethereum is turning itself into a verifiable computer, and the endpoint of this path is L1 zkEVM.

I. The Three Shifts in Ethereum's Narrative Focus

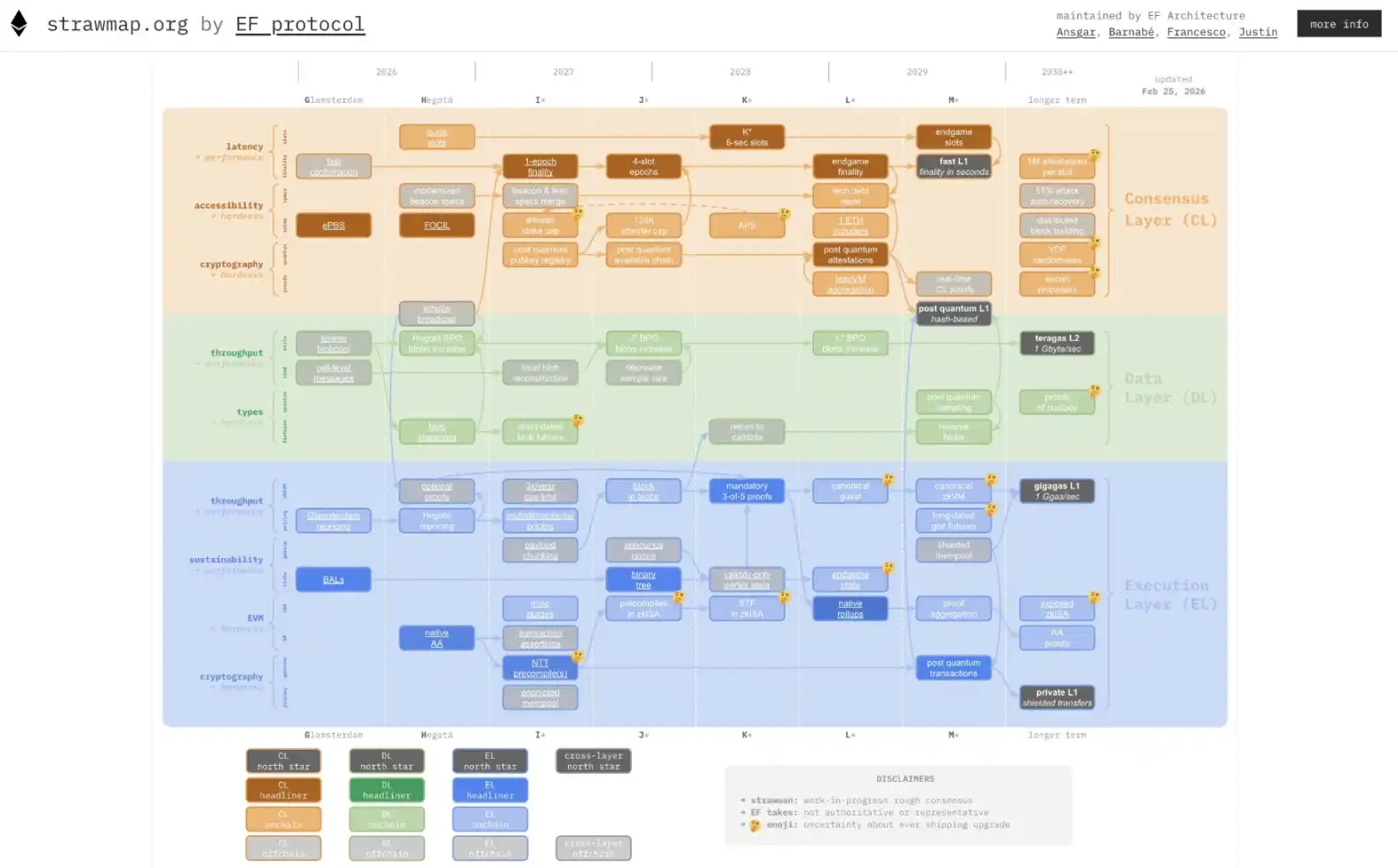

On February 26, Ethereum Foundation researcher Justin Drake posted on social media that the Ethereum Foundation had proposed a draft roadmap named Strawmap, outlining the direction of Ethereum L1 protocol upgrades over the coming years.

The roadmap sets five core goals: a faster L1 (finality within seconds), a "Gigagas" L1 reaching 10,000 TPS through zkEVM, high-throughput L2s built on Data Availability Sampling (DAS), quantum-resistant cryptography, and native private transactions. It also plans seven protocol forks by 2029, roughly one every six months.
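As a rough sanity check on the "Gigagas" figure, the sketch below assumes that "Gigagas" means a throughput of 10^9 gas per second (an interpretation, not a number quoted from Strawmap) and derives the average gas per transaction that 10,000 TPS would imply. The 21,000 gas figure is the intrinsic cost of a plain ETH transfer; everything else is illustrative arithmetic.

```python
GIGAGAS_PER_SECOND = 10**9     # assumption: "Gigagas" = 1e9 gas per second
SIMPLE_TRANSFER_GAS = 21_000   # intrinsic gas cost of a plain ETH transfer


def implied_gas_per_tx(gas_per_second: int, target_tps: int) -> float:
    """Average gas each transaction may consume if the chain is to hit target_tps."""
    return gas_per_second / target_tps


def max_tps(gas_per_second: int, gas_per_tx: int) -> float:
    """Upper bound on TPS if every transaction consumed exactly gas_per_tx."""
    return gas_per_second / gas_per_tx


print(implied_gas_per_tx(GIGAGAS_PER_SECOND, 10_000))           # 100000.0
print(round(max_tps(GIGAGAS_PER_SECOND, SIMPLE_TRANSFER_GAS)))  # 47619
```

Under this assumption, 10,000 TPS corresponds to an average budget of about 100,000 gas per transaction, nearly five times the cost of a plain transfer.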

Over the past decade, Ethereum's development has always been accompanied by a continuous evolution of its narrative and technical roadmap.

The first stage (2015–2020) was the programmable ledger.

This was Ethereum's original core narrative: "Turing-complete smart contracts." Ethereum's biggest advantage at the time was that it could do far more than Bitcoin; DeFi, NFTs, and DAOs are all products of this narrative. A large number of decentralized financial protocols, from lending markets and DEXs to stablecoins, began operating on-chain, and Ethereum gradually became the main settlement network of the crypto economy.

In the second stage (2021–2023), L2s took over the narrative.

As Gas fees on the Ethereum mainnet soared and transactions became unaffordable for ordinary users, Rollups took the lead in scaling. Ethereum gradually repositioned itself as a settlement layer: the foundation that provides security for L2s.

Simply put, most execution-layer computation moved to L2s and scaling came through Rollups, while L1 was responsible only for data availability and final settlement. The Merge and EIP-4844 served this narrative during this period, with the aim of making it cheaper and safer for L2s to inherit Ethereum's trust.

The third stage (2024–2025) focused on narrative introspection and reflection.

As is well known, the prosperity of L2s brought an unexpected problem: Ethereum L1 itself became less important. Users increasingly transacted on Arbitrum, Base, Optimism, and other L2s, and rarely interacted with L1 directly. ETH's price performance reflected this anxiety.

This led the community to ask: if L2s capture all the users and activity, where does L1 capture value? Only with the internal turbulence within Ethereum in 2025 and the series of roadmaps laid out in 2026 did this logic begin to change fundamentally.

In fact, when you sort through the core technical directions since 2025, Verkle Trees, stateless clients, formal verification of the EVM, and native ZK support come up again and again. They all point to the same thing: making Ethereum L1 itself verifiable. Note that this is not just about verifying L2 proofs on L1, but about compressing every step of L1's own state transition into a zero-knowledge proof that can be verified.

This is the ambition of L1 zkEVM. Unlike an L2 zkEVM, an L1 zkEVM (in-protocol zkEVM) integrates zero-knowledge proof technology directly into Ethereum's consensus layer.

It is not a copy of L2 ZK rollups such as zkSync, Starknet, or Scroll; it turns Ethereum's execution layer itself into a ZK-friendly system. If an L2 zkEVM builds a ZK world on top of Ethereum, an L1 zkEVM turns Ethereum itself into that ZK world.

Once this goal is achieved, Ethereum's narrative will be upgraded from "L2's settlement layer" to "the root of trust for verifiable computation."

This will be a qualitative change, not the quantitative change of the past few years.

II. What Is a True L1 zkEVM?

To recap a familiar point: in the traditional model, validators must re-execute every transaction to verify a block, whereas in the zkEVM model they only need to verify a ZK proof. This allows Ethereum to raise the Gas Limit to 100 million or higher without increasing the burden on nodes (further reading: 'The Dawn of the ZK Route: Is the Roadmap for Ethereum's Endgame Accelerating?').
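The difference in validator workload can be shown with a toy cost model. Both constants below are hypothetical placeholders, not measurements of any real client or proof system: re-execution time is assumed to grow linearly with gas, while checking a succinct proof is assumed to cost roughly the same regardless of how much computation the block contains.

```python
# Illustrative parameters only; not measurements of any real client.
REEXEC_GAS_PER_SECOND = 500_000_000   # hypothetical node re-execution speed
PROOF_VERIFY_SECONDS = 0.01           # hypothetical constant proof-check cost


def reexecution_seconds(gas_used: int) -> float:
    """Re-execution work grows linearly with the gas a block consumes."""
    return gas_used / REEXEC_GAS_PER_SECOND


def proof_verification_seconds(gas_used: int) -> float:
    """Succinct proof checking is modeled as constant; gas_used is deliberately unused."""
    return PROOF_VERIFY_SECONDS


for gas_limit in (30_000_000, 100_000_000, 1_000_000_000):
    print(f"{gas_limit:>13,} gas: re-execute {reexecution_seconds(gas_limit):.2f}s, "
          f"verify proof {proof_verification_seconds(gas_limit):.2f}s")
```

The absolute numbers mean nothing; the shape does. Raising the Gas Limit scales the re-execution column proportionally while leaving the verification column flat, which is why proof verification decouples node requirements from throughput.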

However, turning Ethereum L1 into a zkEVM is not a matter of a single breakthrough; it requires simultaneous progress on eight workstreams, each a multi-year engineering effort.

Workstream 1: EVM Formalization

The prerequisite for any ZK proof is that the object being proven has a precise mathematical definition. Today, however, the EVM's behavior is in practice defined by client implementations (Geth, Nethermind, etc.) rather than by a strict formal specification. Clients may behave inconsistently in edge cases, which makes writing ZK circuits for the EVM extremely difficult: you cannot prove statements about a system whose behavior is ambiguously defined.

The goal of this workstream is therefore to capture every EVM instruction and every state-transition rule in a machine-verifiable formal specification. This is the foundation of the entire L1 zkEVM project; without it, everything that follows is built on sand.
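To illustrate what "precisely defined" means, here is a minimal sketch in the style of an executable specification. The Ethereum Foundation does maintain a Python executable spec (ethereum/execution-specs), but the functions below were written for this example and are not taken from it. They pin down three well-known EVM edge cases: ADD wraps modulo 2^256, DIV by zero returns 0, and SDIV truncates toward zero on two's-complement words.

```python
UINT256_MOD = 2**256
INT256_MIN = -(2**255)


def to_signed(x: int) -> int:
    """Interpret a 256-bit word as a two's-complement signed integer."""
    return x - UINT256_MOD if x >= 2**255 else x


def to_unsigned(x: int) -> int:
    """Map a (possibly negative) integer back to a 256-bit word."""
    return x % UINT256_MOD


def op_add(a: int, b: int) -> int:
    """ADD: addition wraps modulo 2**256 instead of raising on overflow."""
    return (a + b) % UINT256_MOD


def op_div(a: int, b: int) -> int:
    """DIV: unsigned integer division; division by zero yields 0."""
    return 0 if b == 0 else a // b


def op_sdiv(a: int, b: int) -> int:
    """SDIV: signed division truncating toward zero; division by zero yields 0."""
    sa, sb = to_signed(a), to_signed(b)
    if sb == 0:
        return 0
    if sa == INT256_MIN and sb == -1:
        # Spelled out explicitly as a classic edge case; the general formula
        # below would wrap to the same 256-bit word.
        return to_unsigned(INT256_MIN)
    sign = -1 if (sa < 0) != (sb < 0) else 1
    return to_unsigned(sign * (abs(sa) // abs(sb)))


print(op_add(2**256 - 1, 1))                    # 0: wraps instead of overflowing
print(op_div(5, 0))                             # 0: no exception on division by zero
print(to_signed(op_sdiv(to_unsigned(-7), 2)))   # -3: truncates toward zero
```

A full formalization goes further (for example, into a proof assistant), but the principle is the same: every corner case has exactly one defined answer, which is what a ZK circuit needs to encode.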

Workstream 2: ZK-Friendly Hash Function Replacement

Ethereum currently uses Keccak-256 extensively. Keccak is very unfriendly to ZK circuits: proving it incurs huge computational overhead, which significantly increases proof generation time and cost.

The core task of this workstream is to gradually replace Keccak inside Ethereum with ZK-friendly hash functions (such as Poseidon or the BLAKE family), especially in the state tree and on Merkle proof paths. This change touches everything, because the hash function permeates every corner of the Ethereum protocol.
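Why a hash swap "affects everything" can be seen in a small sketch: the same state committed with a different hash yields a different root, so every root, every proof, and every component that checks them must change together. Python's standard library has neither Keccak-256 nor Poseidon, so this example uses stand-ins, labeled as such: `hashlib.sha3_256` (NIST SHA3-256, whose padding differs from Ethereum's Keccak-256) and `hashlib.blake2s`. The account strings are made up.

```python
import hashlib
from typing import Callable, List

HashFn = Callable[[bytes], bytes]


def sha3(data: bytes) -> bytes:
    # Stand-in only: NIST SHA3-256 uses different padding from Ethereum's
    # Keccak-256, so these digests do NOT match Ethereum's.
    return hashlib.sha3_256(data).digest()


def blake2(data: bytes) -> bytes:
    # Stand-in for "the replacement hash"; BLAKE2s is not Poseidon and is
    # used here only to make the effect of the swap visible.
    return hashlib.blake2s(data).digest()


def merkle_root(leaves: List[bytes], h: HashFn) -> bytes:
    """Binary Merkle root; an odd node at any level is paired with itself."""
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


accounts = [b"alice:10", b"bob:20", b"carol:30"]   # hypothetical state entries
print(merkle_root(accounts, sha3).hex())
print(merkle_root(accounts, blake2).hex())         # different root, same state
```

Because the hash function is a parameter of the tree rather than of any single call site, migrating it in a live protocol means re-deriving every commitment and updating every verifier at once, which is why this workstream is so invasive.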

Workstream 3: Verkle Tree Replacing Merkle Patricia Tree

This is one of the most anticipated changes in the 2025–2027 roadmap. Ethereum currently stores its global state in a Merkle Patricia Tree (MPT). Verkle Trees replace hash links with vector commitments, which can shrink witness size by a factor of tens.

For L1 zkEVM, this means far less data is needed to prove each block, and proof generation becomes significantly faster. This makes Verkle Trees a key infrastructure prerequisite for a feasible L1 zkEVM.
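The "factor of tens" claim can be reproduced with back-of-the-envelope arithmetic. This is a simplified model under stated assumptions, not a measurement: about 2^30 state entries, 32-byte hashes and commitments, a fully populated hexary MPT (real MPT nodes are RLP-encoded and often sparser), and a width-256 Verkle tree whose per-block aggregated opening proof is excluded from the per-key count.

```python
def tree_depth(num_leaves: int, width: int) -> int:
    """Smallest depth d with width**d >= num_leaves (exact integer arithmetic)."""
    depth, capacity = 0, 1
    while capacity < num_leaves:
        capacity *= width
        depth += 1
    return depth


def mpt_witness_bytes(num_leaves: int, width: int = 16, hash_size: int = 32) -> int:
    # Hash-based tree: to recompute each parent, a proof must carry all the
    # sibling hashes at every level, i.e. (width - 1) hashes per level.
    return tree_depth(num_leaves, width) * (width - 1) * hash_size


def verkle_witness_bytes(num_leaves: int, width: int = 256, commitment_size: int = 32) -> int:
    # Vector-commitment tree: one commitment per level along the path; the
    # aggregated opening proof is shared per block and NOT counted here.
    return tree_depth(num_leaves, width) * commitment_size


n = 2**30  # assumption: ~1 billion state entries, for illustration only
print(mpt_witness_bytes(n), verkle_witness_bytes(n))  # 3840 128
```

In this model the per-key witness drops from 3,840 bytes to 128 bytes, a factor of 30. The gain comes from no longer shipping sibling hashes: a vector commitment can be opened at one position without revealing its neighbors.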

Workstream 4: Stateless Clients

Stateless clients are nodes that do not need to store the complete Ethereum state database locally to verify blocks; the witness data attached to each block is enough to complete verification.

This workstream is tightly coupled with Verkle Trees, because stateless clients are practical only if witnesses are small enough. Stateless clients matter for L1 zkEVM in two ways. First, they greatly lower the hardware requirements for running a node, which helps decentralization. Second, they give ZK proofs a clear input boundary: the prover only has to process the data contained in the witness, not the entire world state.
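The essence of stateless verification fits in a few lines. In this sketch, a full node holds the whole tree and produces a witness, while the stateless verifier holds only the state root, the claimed leaf, and that witness. A SHA-256 binary Merkle tree stands in for Ethereum's actual state tree (a Verkle witness would be checked against vector commitments instead), and all function names are hypothetical.

```python
import hashlib
from typing import List, Tuple

Witness = List[Tuple[bytes, bool]]  # (sibling hash, sibling is on the right)


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(leaves: List[bytes]) -> List[List[bytes]]:
    """Full-node side: all levels of a binary Merkle tree, leaves first."""
    n = len(leaves)
    if n == 0 or n & (n - 1):
        raise ValueError("leaf count must be a power of two in this sketch")
    levels = [[h(x) for x in leaves]]
    while len(levels[-1]) > 1:
        prev = levels[-1]
        levels.append([h(prev[i] + prev[i + 1]) for i in range(0, len(prev), 2)])
    return levels


def make_witness(levels: List[List[bytes]], index: int) -> Witness:
    """Full-node side: sibling hashes on the path from leaf `index` to the root."""
    witness: Witness = []
    for level in levels[:-1]:
        sibling = index ^ 1
        witness.append((level[sibling], sibling > index))
        index //= 2
    return witness


def stateless_verify(state_root: bytes, leaf: bytes, witness: Witness) -> bool:
    """Stateless side: check a leaf against the root using only the witness."""
    node = h(leaf)
    for sibling, sibling_is_right in witness:
        node = h(node + sibling) if sibling_is_right else h(sibling + node)
    return node == state_root


state = [b"alice:10", b"bob:20", b"carol:30", b"dave:40"]  # hypothetical state
levels = build_levels(state)
root = levels[-1][0]
w = make_witness(levels, 2)
print(stateless_verify(root, b"carol:30", w))   # True
print(stateless_verify(root, b"carol:999", w))  # False
```

Note that `stateless_verify` never touches `state` or `levels`; its entire input is the root plus the witness, which is exactly the "clear input boundary" a prover would work with.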

Workstream 5: ZK Proof System Standardization and Integration

L1 zkEVM needs a mature ZK proof system to generate proofs of block execution. However, the ZK field is currently highly fragmented, with no consensus on an optimal solution. The goal of this workstream is to define a standardized proof interface at the Ethereum protocol layer, so that different proof systems can be integrated and compete, rather than enshrining one specific system.

This preserves technological openness and leaves room for proof systems to keep evolving. The Ethereum Foundation's PSE (Privacy and Scaling Explorations) team has done substantial groundwork in this direction.
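A standardized interface might look roughly like the sketch below. Everything here is hypothetical: the `BlockProofVerifier` signature is invented for illustration, the toy verifier's "proof" is a salted hash that is neither zero-knowledge nor sound (it only lets the interface run), and the k-of-n acceptance rule is one possible way to let several proof systems coexist, not a rule taken from Strawmap.

```python
import hashlib
from typing import Dict, Protocol


class BlockProofVerifier(Protocol):
    """Hypothetical protocol-level interface that each proof system implements."""
    def verify(self, pre_state_root: bytes, post_state_root: bytes,
               block_hash: bytes, proof: bytes) -> bool: ...


class HashCommitmentVerifier:
    """Toy stand-in: a 'proof' is a salted hash. NOT zero-knowledge, NOT sound."""
    def __init__(self, salt: bytes) -> None:
        self.salt = salt

    def prove(self, pre: bytes, post: bytes, block: bytes) -> bytes:
        return hashlib.sha256(self.salt + pre + post + block).digest()

    def verify(self, pre_state_root: bytes, post_state_root: bytes,
               block_hash: bytes, proof: bytes) -> bool:
        return proof == self.prove(pre_state_root, post_state_root, block_hash)


def accept_block(verifiers: Dict[str, BlockProofVerifier], proofs: Dict[str, bytes],
                 pre: bytes, post: bytes, block: bytes, threshold: int) -> bool:
    """Accept if at least `threshold` registered proof systems verify their proofs."""
    valid = sum(1 for name, proof in proofs.items()
                if name in verifiers and verifiers[name].verify(pre, post, block, proof))
    return valid >= threshold


a, b = HashCommitmentVerifier(b"A"), HashCommitmentVerifier(b"B")
verifiers: Dict[str, BlockProofVerifier] = {"system_a": a, "system_b": b}
pre, post, blk = b"\x01" * 32, b"\x02" * 32, b"\x03" * 32
proofs = {"system_a": a.prove(pre, post, blk), "system_b": b"bogus"}
print(accept_block(verifiers, proofs, pre, post, blk, threshold=1))  # True
print(accept_block(verifiers, proofs, pre, post, blk, threshold=2))  # False
```

The design point is that consensus code depends only on the interface, so a new or faster proof system can be added to the registry without changing the acceptance logic.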

Workstream 6: Decoupling Execution Layer and Consensus Layer (Engine API Evolution)

Today, Ethereum's Execution Layer (EL) and Consensus Layer (CL) communicate via the Engine API. In an L1 zkEVM architecture, every state transition in the execution layer requires a ZK proof, and generating that proof may take far longer than the block time.

The core problem this workstream must solve is how to decouple execution from proof generation without breaking the consensus mechanism: execution completes quickly, proofs are generated asynchronously afterward, and validators finalize at an appropriate point once the proofs are available. This requires a deep overhaul of the block finality model.
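The decoupling described above can be modeled as a simple state machine: blocks are executed immediately, proofs arrive later and possibly out of order, and finality advances only over a contiguous prefix of proven blocks. This is a hypothetical toy (the `Chain` class and its fields are invented here); real designs would also have to specify proof deadlines and what happens to a block whose proof never arrives.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Chain:
    """Toy model: execute now, prove later, finalize in order."""
    executed: List[int] = field(default_factory=list)     # block numbers, in order
    proven: Dict[int, bool] = field(default_factory=dict)  # block -> proof valid?
    finalized_upto: int = -1                               # highest finalized block

    def execute(self, number: int) -> None:
        # Fast path: the execution result is available right away but not final.
        self.executed.append(number)

    def submit_proof(self, number: int, valid: bool) -> None:
        # Slow path: a proof may arrive several slots after its block.
        self.proven[number] = valid
        # Finality advances only across consecutive blocks with valid proofs.
        while ((self.finalized_upto + 1) in self.executed
               and self.proven.get(self.finalized_upto + 1, False)):
            self.finalized_upto += 1


chain = Chain()
for n in range(4):
    chain.execute(n)
chain.submit_proof(1, True)   # proof for block 1 arrives first
print(chain.finalized_upto)   # -1: block 0 is still unproven
chain.submit_proof(0, True)
print(chain.finalized_upto)   # 1: blocks 0 and 1 are now final
```

In this toy, an invalid proof simply stalls finality at the previous block; a real protocol would need a rule for rejecting or re-proving such a block.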

Workstream 7: Recursive Proofs and Proof Aggregation

Generating a ZK proof for a single block is expensive, but if proofs for multiple blocks are recursively aggregated into one, verification costs can drop significantly. Progress here will directly determine how cheaply L1 zkEVM can operate.
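The structure of recursive aggregation is a binary tree folded layer by layer. In this sketch, `aggregate_pair` is a labeled stand-in: a real system would run a verifier circuit that proves "both child proofs are valid," whereas hashing here only models the shape of the computation, not its security. The per-block proofs are likewise dummy hashes.

```python
import hashlib
from typing import List, Tuple


def aggregate_pair(left: bytes, right: bytes) -> bytes:
    # Stand-in for a recursive proof attesting that both child proofs verified.
    # A real system runs a verifier circuit here; hashing only models the shape.
    return hashlib.sha256(b"agg" + left + right).digest()


def aggregate(proofs: List[bytes]) -> Tuple[bytes, int]:
    """Fold proofs pairwise into one; returns (final proof, number of layers)."""
    if not proofs:
        raise ValueError("need at least one proof")
    layer, layers = list(proofs), 0
    while len(layer) > 1:
        if len(layer) % 2 == 1:
            layer.append(layer[-1])  # pad odd layers by repeating the last proof
        layer = [aggregate_pair(layer[i], layer[i + 1]) for i in range(0, len(layer), 2)]
        layers += 1
    return layer[0], layers


block_proofs = [hashlib.sha256(f"block-{i}".encode()).digest() for i in range(8)]
final_proof, depth = aggregate(block_proofs)
print(depth)  # 3: eight proofs collapse into one in log2(8) layers
```

The verifier now checks one proof instead of eight, and the number of layers grows only logarithmically with the number of blocks. The aggregation work itself still falls on provers, which is why prover cost remains the bottleneck.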

Workstream 8: Developer Toolchain and EVM Compatibility Guarantee

All of these underlying changes must ultimately be transparent to smart contract developers on Ethereum. The hundreds of thousands of existing contracts must not break when zkEVM is introduced, and developers must not be forced to rewrite their toolchains.

This workstream is the easiest to underestimate and often the most time-consuming. Historically, every EVM upgrade has required extensive backward-compatibility testing and toolchain adaptation. L1 zkEVM involves far larger changes than any previous upgrade, so the compatibility and toolchain workload will be an order of magnitude higher.
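One standard technique for this kind of compatibility work is differential testing: run a reference implementation and a new implementation on the same inputs (hand-picked edge cases plus random ones) and report every disagreement. In the sketch below, the "candidate" is a hypothetical re-implementation of ADD using four 64-bit limbs, a representation often used when arithmetic has to fit circuit-friendly fields. It is not taken from any real zkEVM.

```python
import random
from typing import Callable, List, Tuple

UINT256_MOD = 2**256
LIMB_MASK = 2**64 - 1


def reference_add(a: int, b: int) -> int:
    """Reference semantics: EVM ADD wraps modulo 2**256."""
    return (a + b) % UINT256_MOD


def candidate_add(a: int, b: int) -> int:
    """Hypothetical limb-based re-implementation of ADD (inputs < 2**256)."""
    result, carry = 0, 0
    for i in range(4):                     # four 64-bit limbs, least significant first
        la = (a >> (64 * i)) & LIMB_MASK
        lb = (b >> (64 * i)) & LIMB_MASK
        s = la + lb + carry
        result |= (s & LIMB_MASK) << (64 * i)
        carry = s >> 64                    # the final carry is dropped: wraparound
    return result


def differential_test(ref: Callable[[int, int], int], cand: Callable[[int, int], int],
                      cases: List[Tuple[int, int]]) -> List[Tuple[int, int]]:
    """Return every input pair on which the two implementations disagree."""
    return [(a, b) for a, b in cases if ref(a, b) != cand(a, b)]


edge_cases = [(0, 0), (UINT256_MOD - 1, 1), (2**64 - 1, 1), (UINT256_MOD - 1, UINT256_MOD - 1)]
rng = random.Random(0)                     # fixed seed for reproducible runs
random_cases = [(rng.getrandbits(256), rng.getrandbits(256)) for _ in range(1000)]
print(differential_test(reference_add, candidate_add, edge_cases + random_cases))  # []
```

Scaled up to every opcode, precompile, and historical transaction, this is the kind of exhaustive cross-checking that makes compatibility work so time-consuming.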

III. Why is Now the Right Time to Understand This?

Strawmap arrives at a time when the market has doubts about ETH's price performance. Seen in this light, the roadmap's most important contribution is redefining Ethereum as "infrastructure."

For builders, especially developers, Strawmap provides directional certainty. For users, these upgrades will ultimately translate into tangible experiences: transactions finalized within seconds, assets moving seamlessly between L1 and L2, and privacy protection as a built-in feature rather than a plugin.

Objectively speaking, L1 zkEVM is not a product that will be launched in the near future; its complete implementation may take until 2028-2029 or even later.

But it does redefine Ethereum's value proposition. If L1 zkEVM succeeds, Ethereum will no longer be just the settlement layer for L2s but the verifiable root of trust for the entire Web3 world, and any on-chain state could ultimately be traced back mathematically to Ethereum's chain of ZK proofs. This is decisive for Ethereum's long-term value capture.

Second, it affects the long-term positioning of L2s. Once L1 itself has ZK capabilities, the role of L2s will shift from "secure scaling solutions" to "specialized execution environments." Which L2s find their place in this new landscape will be one of the ecosystem developments most worth watching in the coming years.

Most importantly, in the author's view, it offers an excellent window into Ethereum's developer culture. The ability to advance eight interdependent workstreams at once, each a multi-year engineering effort, while keeping coordination decentralized is itself a capability unique to Ethereum as a protocol.

Understanding this helps to more accurately assess Ethereum's true position in various competitive narratives.

Overall, from the "Rollup-centric" approach of 2020 to the Strawmap of 2026, the evolution of Ethereum's narrative reflects a clear trajectory: scaling cannot rely solely on L2; L1 and L2 must co-evolve.

The eight workstreams of L1 zkEVM are the technical expression of this shift in thinking. Together they point to one goal: giving the Ethereum mainnet an order-of-magnitude performance improvement without sacrificing decentralization. This does not negate the L2 route; it refines and complements it.

Over the next three years, this "Ship of Theseus" will go through seven forks and replace countless "planks." When it reaches its next stop in 2029, we may see a truly "global settlement layer": fast, secure, private, and as open as ever.

Let's wait and see together.