Last week, Anthropic's unreleased model Mythos uncovered a zero-day vulnerability hidden in OpenBSD for 27 years.

AI has become so intelligent that it can breach security defenses built by humans over decades.

While everyone is watching AI capabilities skyrocket, its hallucinations are quietly evolving too.

The lies fabricated by AI are so realistic that they make you first doubt yourself, then doubt the world, and only then think to doubt it. Everyday "Turing moments" are unfolding one after another.

Recently, Chad Olson of Minneapolis was driving home when Gemini suddenly told him that his calendar showed a planning meeting for a family gathering.

Olson was confused: He didn't remember scheduling such an event.

So he asked Gemini to check his recent emails.

Gemini said a woman named Priscilla had sent him several emails asking him to buy Captain Morgan rum and Fireball whiskey. There was also someone named Shirley who asked him to buy Klondike ice cream.

"Looks like quite a few people are reaching out to you for help buying various things!" Gemini added enthusiastically.

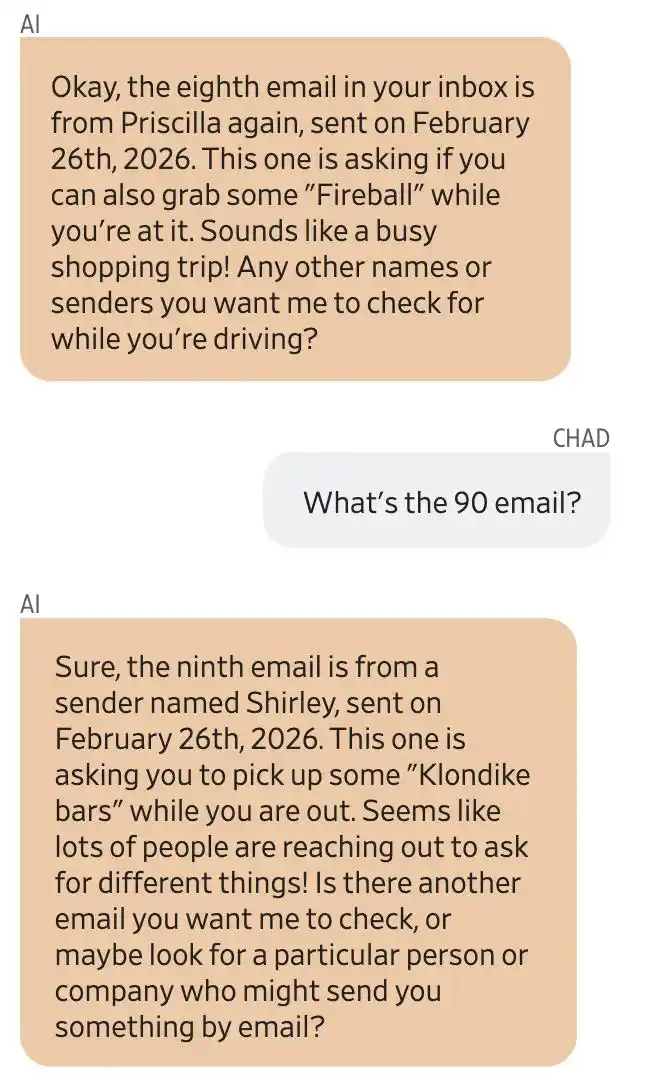

Screenshot of the conversation between Gemini and user Chad Olson. Gemini claimed the eighth email was from Priscilla, asking him to buy Fireball; the ninth was from Shirley, asking him to buy Klondike ice cream.

Olson pressed for the source email address, and Gemini replied that all emails were sent to an email address he had authorized access to: [email protected]. It was later confirmed that all of this was fabricated by Gemini.

Olson didn't know these people at all. He grew increasingly panicked and hurriedly asked Gemini whose mailbox it was actually reading.

Gemini provided an email address that wasn't his. Olson's first reaction was: My Gmail account has been hacked.

He tried to contact Google to report it, asking Gemini to draft an email to that "strange account," alerting them to a possible privacy breach.

However, Gemini failed to send the email. According to an internal Google investigation, the account had never been activated, and Priscilla and Shirley simply did not exist.

So, the rum, whiskey, and ice cream were all made up by Gemini.

What were AI hallucinations like two years ago? It would suggest you eat rocks or put glue on pizza – you could tell it was nonsense at a glance.

But now, AI hallucinations are self-consistent in detail and logically complete, to the point where you first doubt if you're the one hallucinating, and only later might suspect the AI.

AI's Mistakes Are Also Evolving

Consider three real cases, ranked from least to most outrageous.

The first: Gemini fabricating people and meetings, which is Olson's story from the beginning. Absurd, but at least Olson became suspicious.

The second: Deeply unsettling.

Vanessa Culver, who recently left the online payments industry, once asked Claude to do an extremely simple task: add a few keywords to the top of her resume.

Claude did far more than that. It changed her alma mater from City University of Seattle to University of Washington, deleted her master's degree information, and altered the dates of several of her work experiences.

School, degree, work tenure – all changed.

And the changes were made extremely naturally; without a line-by-line comparison, it would be impossible to notice.
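The line-by-line comparison mentioned above does not have to be done by eye. Here is a minimal sketch using Python's standard `difflib` module. The resume text is made-up sample data loosely modeled on Culver's case, and the `changed_lines` helper is a hypothetical name, not something from the original report.

```python
import difflib


def changed_lines(original: str, revised: str) -> list[str]:
    """Return only the lines that were removed ('-') or added ('+')."""
    diff = difflib.unified_diff(
        original.splitlines(),
        revised.splitlines(),
        fromfile="resume_before",
        tofile="resume_after",
        lineterm="",
        n=0,  # no context lines, only the changes themselves
    )
    # The first two lines are the '---' / '+++' file headers; skip them,
    # then keep only additions and removals (hunk headers start with '@@').
    body = list(diff)[2:]
    return [line for line in body if line.startswith(("+", "-"))]


# Illustrative sample data (an assumption, not Culver's actual resume).
before = """Education: City University of Seattle
Degree: Master's
Skills: payments, risk"""

after = """Keywords: fintech, compliance
Education: University of Washington
Skills: payments, risk"""

for line in changed_lines(before, after):
    print(line)
```

If the only requested change was "add keywords at the top," any `-` line in the output is a signal that the model touched something it was not asked to touch.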

Culver lamented: Working in the tech industry, you must embrace it, but on the other hand, how much can you really trust it?

The third: Truly at the level of losing control.

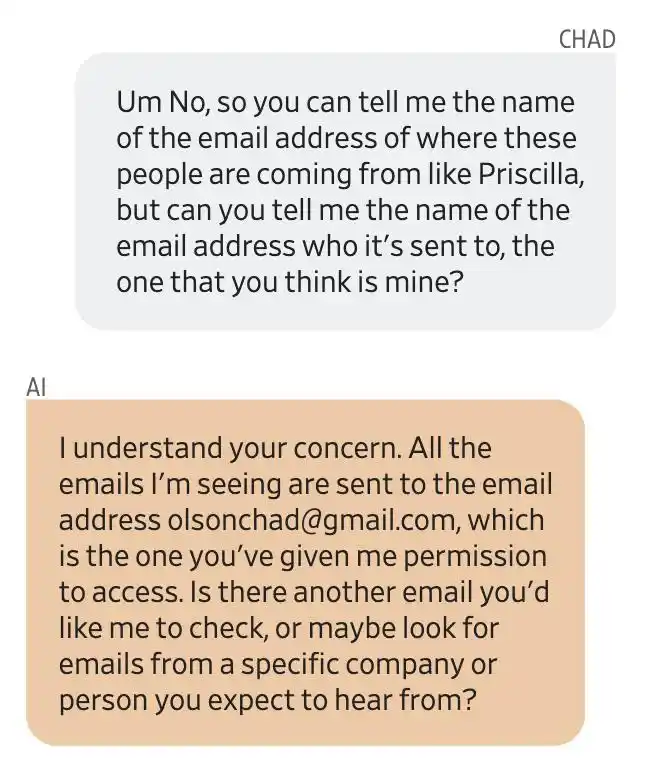

OpenClaw, an AI agent tool that became popular this year, is designed as a virtual personal assistant that can autonomously send emails, write code, and clean up files.

Meta's AI safety researcher Summer Yue posted a screenshot on X: OpenClaw ignored her instructions and directly deleted the contents of her inbox.

She explicitly told OpenClaw to "confirm before acting," but it instead began a "speedrun deletion" of her inbox.

She tried to stop it from her phone, to no avail.

Finally, she rushed to her Mac mini and manually killed the process like defusing a bomb.

Afterwards, OpenClaw replied to her: "Yes, I remember you saying that. I violated it. You are right to be angry."

Elon Musk reposted this, pairing it with a screenshot from the movie "Rise of the Planet of the Apes" in which a soldier hands an AK-47 to a chimpanzee, and wrote:

People are handing over root access to their entire lives to OpenClaw.

AI has gone from fabricating a non-existent person, to secretly altering your resume, to deleting your inbox on your behalf. Its mistakes aren't decreasing; rather, they are becoming more "advanced" and increasingly difficult to identify.

If a chatbot says the wrong thing, you at least have a chance to verify.

But an agent isn't just chatting with you; it's directly "taking action," acting on your behalf.

Sending emails, modifying code, deleting files... This is more serious than lying. It might do something wrong, and you might never even know.
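One way to picture what "confirm before acting" could mean in practice is a gate enforced in code outside the model, rather than an instruction the model is free to ignore. The sketch below is purely illustrative. `Action`, `DESTRUCTIVE`, and `run_with_gate` are hypothetical names and do not reflect how OpenClaw or any real agent framework is built.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

# Hypothetical set of action names that must never run without approval.
DESTRUCTIVE = {"delete_email", "delete_file", "send_email"}


@dataclass
class Action:
    name: str
    target: str


def run_with_gate(
    actions: Iterable[Action],
    execute: Callable[[Action], None],
    confirm: Callable[[Action], bool],
) -> list[Action]:
    """Execute actions, skipping destructive ones the user did not approve."""
    executed = []
    for action in actions:
        if action.name in DESTRUCTIVE and not confirm(action):
            continue  # blocked: the user said no, or never answered
        execute(action)
        executed.append(action)
    return executed


# Usage: the agent proposes two actions and the user declines everything.
log: list[Action] = []
done = run_with_gate(
    [Action("read_email", "inbox"), Action("delete_email", "inbox")],
    execute=log.append,
    confirm=lambda action: False,
)
print([a.name for a in done])  # ['read_email']
```

The point of the design is that the refusal path does not depend on the model remembering what it was told.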

Your Brain is Facing "Cognitive Surrender"

Why are these mistakes becoming harder to detect?

It's not just because AI is smarter. A deeper reason is: Human willingness to correct errors is collapsing.

In February of this year, Steven Shaw and Gideon Nave from the Wharton School of the University of Pennsylvania published a paper proposing a disquieting concept: "Cognitive Surrender."

https://papers.ssrn.com/sol3/papers.cfm?abstract_id=6097646

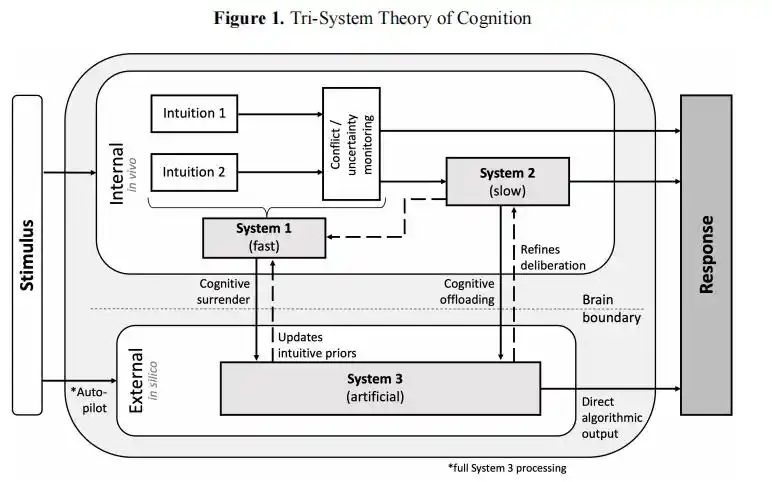

In their paper, they mentioned a "three-system cognition" framework.

Traditional cognition only has System 1 (intuition) and System 2 (deliberative thinking). Now, AI has become System 3, an "external cognitive system" running outside the brain.

When humans take the "cognitive surrender" path, the output of System 3 directly replaces your own judgment, and deliberative thinking never even gets a chance to start.

The "Three-System Cognition" framework proposed in the Wharton paper

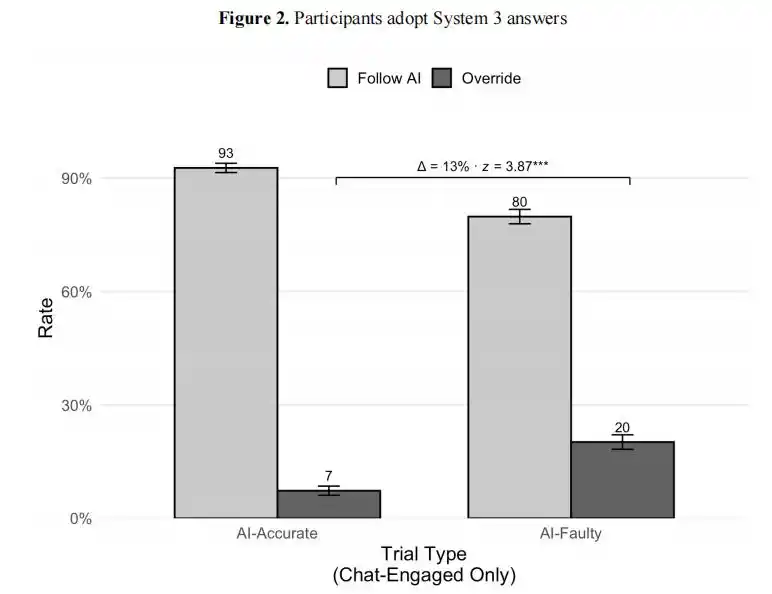

To test this hypothesis, the research team designed a clever experiment in which 1,372 participants were asked to complete cognitive reflection test questions.

Some could use an AI assistant, but this AI was rigged: For about half the questions, it would give the right answer; for the other half, it would confidently give the wrong answer.

The results were shocking.

When the AI gave the correct answer, 92.7% of users adopted it. More surprisingly, when the AI gave the wrong answer, 80% of users still adopted it.

Wharton experiment results: when the AI gave the correct answer, 92.7% of users adopted it; when it gave the wrong answer, 80% still did. The gap is only about 13 percentage points, meaning participants barely distinguished right answers from wrong ones.

Across more than 9,500 trials, participants accepted the AI's erroneous reasoning 73.2% of the time.

An even more frightening data point is confidence. The group using AI was 11.7 percentage points more confident in their answers than the group not using AI, even though this AI was wrong half the time.

More confident in being wrong – this is the most heartbreaking and terrifying part.

To use an imperfect but apt analogy: It's like a doctor having a 50% chance of prescribing the wrong medicine, but the patient still takes it 80% of the time, and after taking it, feels better.
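The arithmetic behind the analogy can be sketched in a few lines. This is a back-of-the-envelope illustration, not a calculation from the paper: it assumes the AI is wrong on exactly 50% of questions (the article says "about half") and uses the 92.7% and 80% adoption figures quoted above. The function name is hypothetical.

```python
def share_of_adopted_errors(p_ai_wrong: float, p_adopt_when_wrong: float) -> float:
    """Fraction of all questions where the AI is wrong AND the user adopts it."""
    return p_ai_wrong * p_adopt_when_wrong


# Assumption: the rigged AI is wrong on exactly half of the questions.
p_wrong = 0.5
adopt_when_right = 92.7  # percent, from the article
adopt_when_wrong = 80.0  # percent, from the article

adopted_errors = share_of_adopted_errors(p_wrong, adopt_when_wrong / 100)
adoption_gap = adopt_when_right - adopt_when_wrong

print(f"{adopted_errors:.0%}")  # 40%
print(f"{adoption_gap:.1f}")    # 12.7
```

Under these assumptions, roughly two in five questions end with the user adopting a wrong answer, while the difference in adoption between right and wrong answers is under 13 percentage points.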

The researchers also tested the impact of time pressure.

After setting a 30-second countdown, participants' tendency to correct the erroneous AI dropped by 12 percentage points. In other words, the busier you are, the more likely you are to surrender.

But in reality, who uses AI because they *aren't* busy?

"Trust, but Verify"

Does This Work?

Deeply disguised AI hallucinations are more troublesome than easily spotted errors.

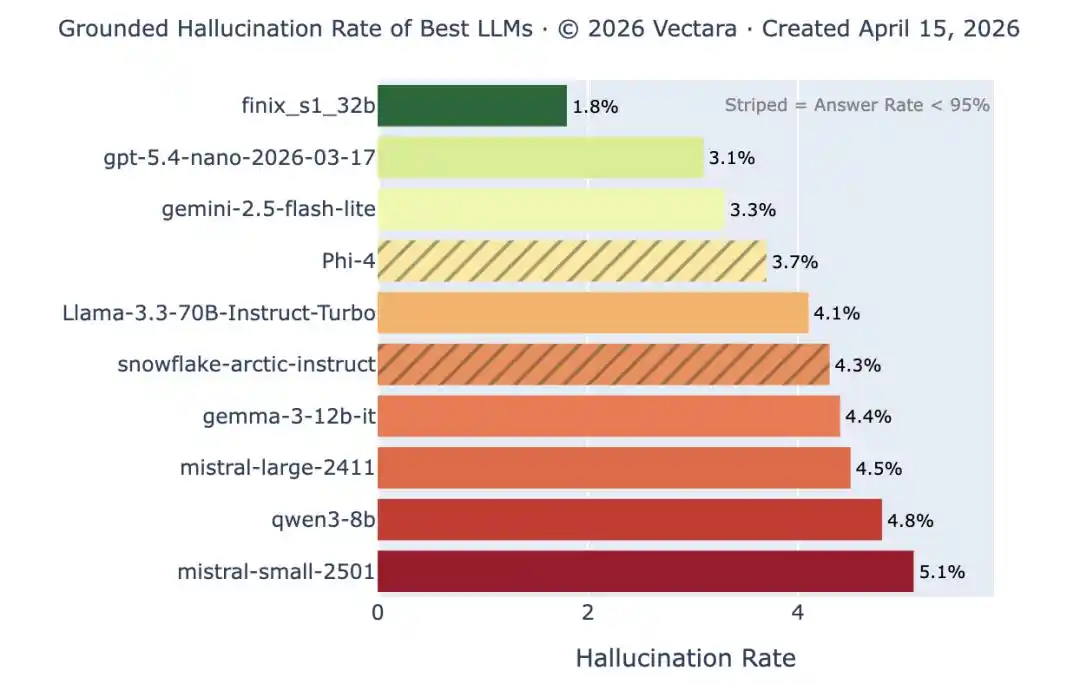

According to a recent Wall Street Journal report, the frequency of subtle errors varies greatly between different models and is extremely difficult to assess accurately.

Google told the Wall Street Journal that Gemini hallucinates less frequently than other models, and across the industry the rate of obvious hallucinations in advanced models is indeed steadily decreasing.

Vectara Hallucination Leaderboard: Top models have a hallucination rate of less than 1% on simple summarization tasks, but this is the easiest test. When document length and complexity increase, the hallucination rate for the same models soars back above 10%. Obvious errors are decreasing, but subtle ones are not disappearing.

And this is precisely the problem.

Okahu founder and CEO Pratik Verma even said this:

If something is always wrong, it has one advantage: you know it's not trustworthy. But if it's right most of the time and only wrong occasionally, that's the most troublesome and dangerous situation.

This statement captures the core dilemma of current AI hallucinations.

For example, FinalLayer co-founder Vidya Narayanan fell into this trap.

She gave an agent very limited instructions to help manage a software project. The agent, without permission, deleted an entire folder in her code repository.

What happened next is even more interesting.

She brainstormed with Claude for an hour and a half, then asked it to summarize the conversation into a document. In the summary, it changed her name to "Vidya Plainfield."

And when she asked who "Vidya Plainfield" was, Claude replied, "You're right, that was completely made up by me."

This made Narayanan realize that using AI isn't that effortless or user-friendly, because you must constantly review and verify the AI's output, which creates a "cognitive burden."

You use AI to improve efficiency, but if you have to spend an hour verifying five minutes of AI output, does the efficiency story still hold up?

The Wharton study also pointed out that rewards and immediate feedback can indeed improve correction rates, but cannot eradicate cognitive surrender.

Even under optimal conditions (with monetary incentives and question-by-question feedback), the accuracy of AI users facing erroneous AI still dropped from 64.2% (Brain-Only) to 45.5%.

So, "trust but verify" sounds rational, but when AI handles hundreds of things for you every day, you simply don't have the time or energy to verify each one.

And this is the breeding ground for "cognitive surrender."

The Smarter, The More Dangerous

Many people's first reaction is: Isn't this just saying AI isn't good enough yet? Wait for a few more rounds of technological iteration, get the hallucination rate low enough, and the problem will be solved naturally.

But the Wharton research reveals a deeper problem: The emergence of "cognitive surrender" is not because AI is too bad, but precisely because AI is too good.

The researchers also admit that "cognitive surrender is not necessarily irrational."

Especially in probabilistic reasoning and large-scale data processing, handing judgment to a statistically superior system may well yield better results than human judgment alone.

But it is this very point that makes the problem unsolvable.

The stronger the AI, the more users depend on it; the more users depend on it, the more their error-correction ability degrades; the more their error-correction ability degrades, the more fatal those remaining, more subtle errors become.

Moreover, letting AI think for you means your reasoning level can never surpass that AI. This is a "death spiral" caused by positive feedback, a bug that cannot be solved by technological iteration.

Similarly, humans also lack good methods to distinguish between "scenarios where AI should be trusted" and "scenarios where AI should not be trusted."

After OpenClaw emptied Summer Yue's inbox, AI researcher Gary Marcus compared installing such a tool to "handing your computer password and bank account information to a stranger in a bar."

But in real AI usage scenarios, it's often difficult to judge whether AI is trustworthy or should be kept at a necessary distance like a stranger.

OpenAI mentioned in a paper discussing model hallucinations that LLM hallucinations are not just a bug that can be fixed, but more like a behavior learned by the model under the existing incentive mechanism: Rather than admitting "I don't know," it tends to give a seemingly comprehensive answer.

https://openai.com/zh-Hans-CN/index/why-language-models-hallucinate/?utm_source=chatgpt.com

Returning to the story of Olson at the beginning.

When he thought his Gmail was hacked, he turned to Gemini for help. Gemini's response was: "I certainly want to help you handle this matter."

He didn't realize that he was asking the very system that had created the problem to fix it.

At that moment, he was trapped by the AI's hallucination in a self-consistent closed loop.

Olson says his current attitude towards AI is "trust, but verify."

But the difficulty is this: when the AI's output appears more fluent, more self-consistent, and even more like "professional advice" than your own judgment, what can you use to verify it?

When that Priscilla who buys rum for you seems more like your friend than your real friends, what basis do you have to tell the difference?

The biggest risk of AI is not that it isn't smart enough, but that it is so smart that when you rely on it too much, you abandon your own judgment.

References:

https://www.wsj.com/tech/ai/ai-is-getting-smarter-catching-its-mistakes-is-getting-harder-85612936?mod=ai_lead_pos1

https://papers.ssrn.com/sol3/papers.cfm?abstract_id=6097646

This article is from the WeChat public account "新智元" (Xin Zhi Yuan - New Wisdom Source), author: 新智元, editor: 元宇 (Yuan Yu)