This summer, Musk is set to do something unprecedented: he is folding a major AI model company into a rocket company and taking the two public together.

The AI model company is called xAI, originally Musk's weapon to challenge OpenAI. Now it's being dissolved and folded into SpaceX. Recently, some of its reserved computing power has also been shared with its former rival, Anthropic.

This move appears highly unconventional from the outside.

SpaceX's story alone is compelling enough. After three failed launches and rockets exploding mid-air, everyone thought Musk was crazy. More than 20 years later, it has become the world's most valuable private company and a key contractor for NASA's Artemis moon program. If it goes public at its target valuation of $2 trillion, it would rank among the largest IPOs in history.

But just before that victory arrives, Musk has bundled xAI into it.

Investors aren't thrilled. There is no convincing logical link between rockets and AI models. Moreover, xAI is no sure winner. Over the past 20 years, Musk has conquered every industry he entered: electric vehicles, satellite internet, rockets. Except one: AI.

This seems somewhat contradictory: Musk did not come out on top in the first phase of AI competition, yet this IPO might be his biggest bet of the past 20 years.

He wants to turn a rocket company into the infrastructure company of the AI era. Only under that framing does merging xAI into SpaceX make sense.

Now, he's also pulling in Anthropic, the enemy of his enemy, as an ally. This is essential for the new infrastructure story of "SpaceXAI" to hold up.

Grok Is Not a Winner

In April 2026, SpaceX secured an option: it can acquire Cursor later this year for up to $60 billion.

Cursor is one of the most popular AI programming tools today. Interestingly, its breakthrough success largely relied on Anthropic's Claude. In other words, Musk is considering spending a huge sum to buy a tool built on a competitor's model.

This fact alone speaks volumes about xAI's predicament.

Roughly, the first phase of the AI race has played out like this.

In the chatbot arena, ChatGPT stands alone, with over 800 million weekly active users, about five times the user base of ByteDance's Doubao.

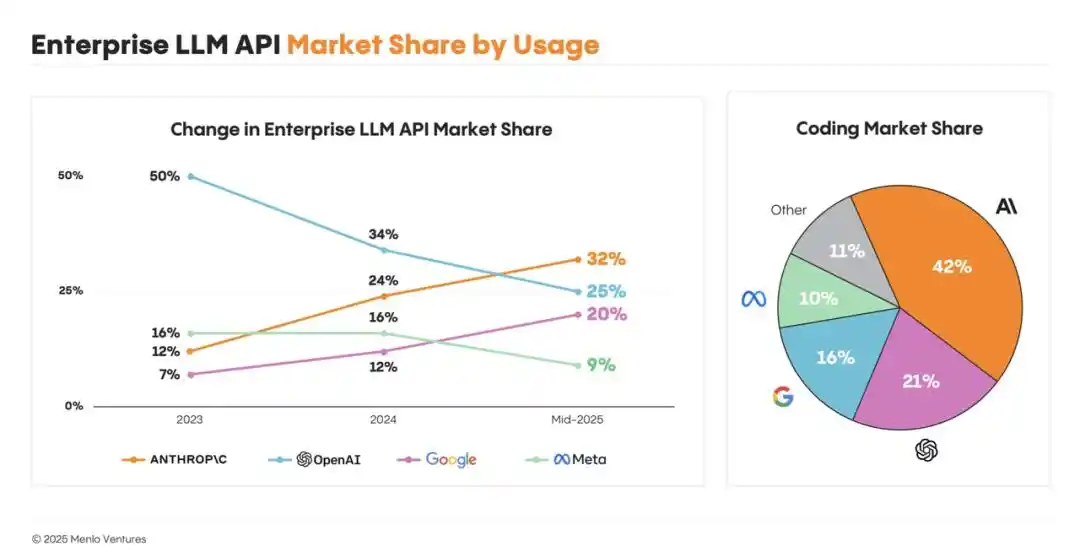

In the enterprise API market, Anthropic surpassed OpenAI in market share last year, approximately 32% to 25%. Eight of the top 10 highest-revenue companies in the US are its clients.

In the AI programming tool market, Claude Code captured about 42% of the enterprise segment, reaching an annualized revenue of $2.5 billion. Cursor, which Musk wants to buy, is itself part of the Claude ecosystem.

And xAI? It created Grok. Grok's main gateway is X, the social media platform Musk himself controls, which functions more as an arena for public opinion and real-time information.

This is xAI's awkward position: it doesn't lack buzz, but buzz is mostly what it has. Grok occasionally ranks first on model benchmarks, and people use it for memes and jokes, but they rarely integrate it into their workflows or daily lives. It has not won any of the three key battles: commercialization, the enterprise market, and the developer ecosystem.

Musk left OpenAI in 2018 and founded xAI in 2023. Between these years lie personal disagreements on direction and his desire to reclaim influence in AI. But by 2026, xAI could no longer afford to lose; its position within Musk's entire business empire had become much more critical than at the outset.

xAI's problems might stem from Musk's management style: he has struggled to lead scientists effectively.

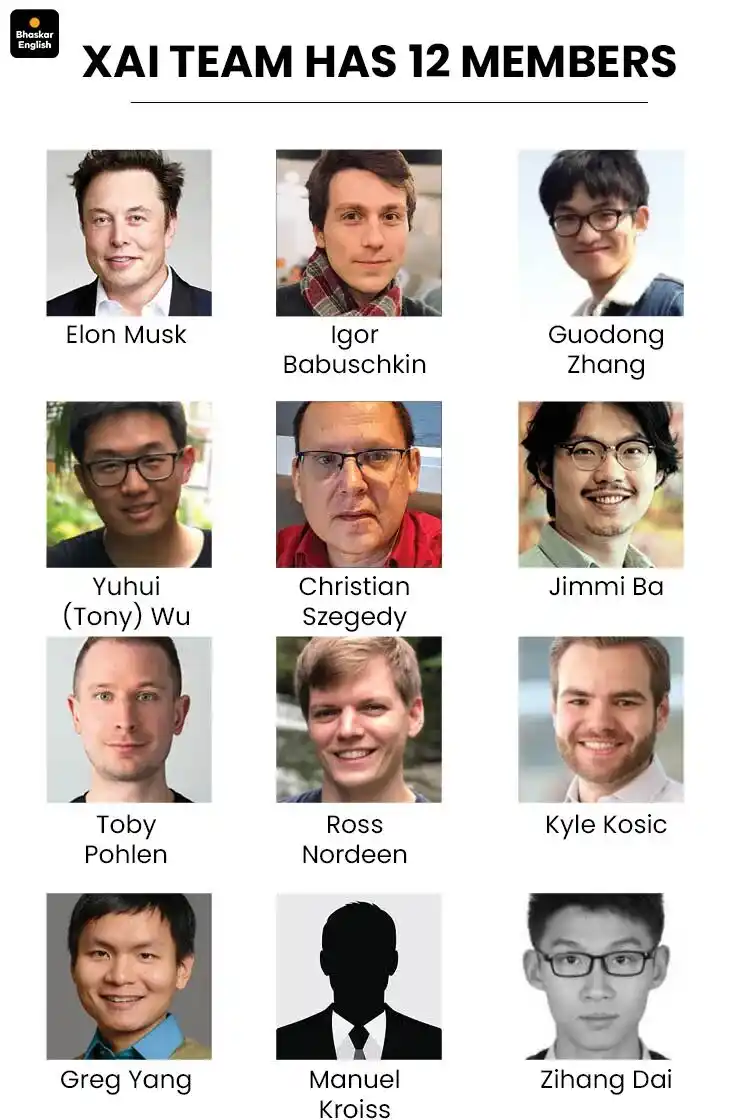

In March of this year, four co-founders left xAI at once. Within just a few months, the original 12-member "AI Dream Team" had been almost entirely replaced.

Over the past 20 years, Musk succeeded because he found a methodology. By applying first principles, he could always reduce complex problems to engineering problems.

Rockets could be broken down into engines, materials, reusability, and launch costs; electric vehicles into batteries, motors, supply chains, and factory efficiency. Every key step could be compressed and turned into an assembly line.

This approach made Tesla and SpaceX.

But large AI models are different. They require nurturing a research culture, an aesthetic sense, and product intuition—things hard to achieve through "first principles." You need to find the right people, provide ample resources and the right environment, and hope it grows organically.

Musk lacks that patience.

Initially, he did assemble a star-studded team spanning academia and industry.

But starting in 2025, the founding team at xAI began a continuous exodus, with top scientists poached from Google leaving one after another. In early 2026, xAI experienced an organizational earthquake; multiple co-founders had their responsibilities reassigned and internal permissions revoked.

In February, Yuhuai Wu, a core engineering figure in xAI's early days, left. Less than 48 hours later, another co-founder, Jimmy Ba, also announced his departure. In March, co-founders Zihang Dai, Guodong Zhang, Manuel Kroiss, and Ross Nordeen exited together, including people originally seen as Musk's trusted lieutenants brought over from Tesla's Autopilot team.

As its technical talent departed, the field behind xAI also grew crowded. Meta doubled down on superintelligence. China's DeepSeek, Kimi, and Qwen caught up rapidly on the strength of low costs and open-source ecosystems, squeezing Grok's position.

The prevailing external view is that Musk was too impatient, imposing engineering project timelines on AI research, ultimately stunting its growth.

But he did not change his method. He became even more relentless.

Transforming an AI Lab into an AI Factory

Musk parachuted in a batch of executives to take over xAI.

The new president is a satellite network engineer with no background in AI whatsoever; the new CFO is familiar with engineering project cash flow but not necessarily with the commercialization of large models. Both came from SpaceX.

Musk intends to turn xAI—in his eyes, that underperforming AI lab—into a more efficient AI factory.

To understand his judgment, one must look at the other side of the AI battlefield. OpenAI is projected to lose as much as $14 billion in 2026; Anthropic has already secured about 3.5 gigawatts of computing capacity, approaching the electricity consumption of a mid-sized city.

The next phase of AI is no longer just about model and algorithm competition. It's turning into a heavy industry race about computing power, electricity, land, and capital endurance.

This is Musk's familiar territory.

xAI's supercomputing center, Colossus, was built in 122 days and quickly expanded to 200,000 GPUs; the next phase target is 1 million. The pace of competition has completely shifted away from that of an AI lab.

Therefore, the newly parachuted executives aren't there for research. They are there to manage projects, cash flow, and delivery schedules. First, build up the infrastructure; then compress resources into a high-speed operational pipeline.

This is precisely Musk's core judgment: xAI's lag might not be because it overemphasized efficiency, but because it wasn't efficient enough.

xAI isn't the consensus winner. But just like when he built Tesla and SpaceX, Musk's assessment of opportunity has always differed from the masses.

In March of this year, he wrote on X that Tesla would be one of the companies to achieve AGI, and likely the first to create AGI in a "humanoid or physical-world controlling" form.

That statement is key. In Musk's narrative, the destination of AI is entering the physical world.

ChatGPT, Claude, and Gemini are the current frontrunners, but Musk is betting on another path. If AI ultimately needs to drive cars, control robots, and run factories, the competition will move onto his home turf.

So Musk is still securing resources for xAI. He probably doesn't believe he'll lose at all. He just needs to stay at the table.

But xAI is burning over $10 billion a year, and its capacity to raise private money has peaked. The most recent round was a Series E in January this year, in which xAI raised $20 billion, $5 billion above its original target. That does not necessarily indicate greater market confidence: AI asset valuations across the board are inflating, and investors don't want to miss out on a Musk bet. Grok's revenue is only a few hundred million dollars, with losses dozens of times higher.

He must find a bigger ship to secure a steady supply of funding for xAI.

That ship is SpaceX. Within it, Starlink is currently the only stable cash cow in the Musk empire, generating tens of billions of dollars annually.

But a pure aerospace story struggles to justify a $2 trillion valuation. Musk's solution: What if SpaceX isn't just a rocket company, but the infrastructure company of the AI era?

He believes the future bottleneck for AI isn't just computing power, but also electricity, networks, and data centers—all core businesses of SpaceX.

Recently, he even proposed a more radical vision: moving AI data centers into space. On Earth, electricity, land, and cooling will become increasingly constrained; space offers continuous solar energy without competing with residents for power. SpaceX wouldn't just launch satellites; in the future, it could launch AI servers.

It sounds like science fiction, but it's already written into the IPO story and Musk's own compensation targets.

This is what he wants the market to believe: SpaceX is both an aerospace company and the scarcest kind of AI infrastructure company. Under this framing, xAI's losses are no longer losses, but capital expenditures for the listed company's business expansion.

He's Betting on Himself

From a rational investor's perspective, the question is: Will the capital market buy it?

Astute investors see two things simultaneously: SpaceX's hard assets are real, and xAI's uncertainties are real. Falcon rockets and Starlink are proven cash flows; xAI, space data centers, physical AI—these are just future options.

At least judging from current valuation figures, the market has not treated the combination as worth more than the sum of its parts. At the end of 2025, SpaceX's reference valuation was $800 billion. When it merged with xAI in February this year, the deal first lifted SpaceX to $1 trillion, then valued xAI at $250 billion, for a total of $1.25 trillion. This looks more like simple addition, with no synergy premium.

The reason is that the integrated "SpaceXAI" has not yet validated its AI infrastructure story.

This is also why Musk is collaborating with Anthropic—to create the first showroom for the new business model.

The latest news is that Anthropic will gain access to about 300 MW of computing power from SpaceXAI's Colossus 1 data center. Of course, this also raises more market doubts: is the new company a cutting-edge model company, or is it turning into a data center middleman?

Looking purely at business logic, xAI should have been tied to Tesla. Robotaxi, FSD, and Optimus are natural endpoints. Tesla's fleet of 7 million vehicles generates an enormous volume of real-world driving data every day, a goldmine for training physical AI. That synergy is the most natural and the logic the cleanest.

But Musk didn't do that, for subtle reasons.

Tesla is a public company; Musk owns about 20%, and major decisions are subject to the board, the courts, and shareholders. SpaceX is different: he holds about 42% of the economic interest and controls about 79% of the voting rights through super-voting shares. Public investors can buy a share of the returns, but Musk keeps a firm grip on the steering wheel.

Musk's consistent method is to make the entire system operate at high speed around his singular will. The method was validated in car manufacturing, where Tesla became a benchmark for global automakers. It also worked for rockets and satellites: Starlink deployed 7,000 satellites in 4 years. Now it's AI's turn, and he wants to use the same method to absorb it into his system.

On the surface, this is a merger and public listing of SpaceX and xAI. Looking deeper, it's Musk's bet on physical AI. At the very bottom, he's betting on himself.

He's betting that the methodology of the past 20 years will still work in the AI era: centralizing resources, compressing time, integrating vertically, and using one person's will to command a vast system.

But this methodology has one prerequisite: his opponents stop or slow down.

Among the opponents he defeated in the past, internal combustion vehicle makers stopped, Boeing and Lockheed Martin stopped, traditional telecom companies stopped.

This time, his opponents haven't stopped. OpenAI, Anthropic, Google, Nvidia, along with China's ByteDance and Kimi—none have stopped. They are as fast, as relentless, as willing to burn money, and as confident they are reshaping the world as Musk is.

This is the first time in 20 years Musk is facing opponents just like him.

So, returning to the initial question: by putting xAI into SpaceX, is he cutting losses or counterattacking?

Perhaps neither.

He's simply ensuring that, whoever ultimately wins this war, he is still sitting at that table.

This article originally appeared on the WeChat public account "Pixel 301". Author: Pixel 301.