Author: DWF Ventures

Compiled by: Deep Tide TechFlow

Deep Tide Guide: AI Agents already account for nearly one-fifth of DeFi trading volume and indeed outperform humans in well-defined scenarios like yield optimization. But when it comes to autonomous trading, top-tier AI performance is less than one-fifth that of top-tier humans. This research breaks down the real performance of AI in different DeFi scenarios and is a must-read for anyone interested in automated trading.

Key Points

Automation and agent activity currently account for about 19% of all on-chain activity, but true end-to-end autonomy has not yet been achieved.

In narrow, well-defined use cases like yield optimization, agents have demonstrated performance superior to humans and bots. However, for multifaceted actions like trading, humans outperform agents.

Among agents themselves, model selection and risk management have the greatest impact on trading performance.

As agents are adopted at scale, there are multiple risks concerning trust and execution, including Sybil attacks, strategy crowding, and privacy trade-offs.

Agent Activity Continues to Grow

Agent activity has grown steadily over the past year, with both trading volume and transaction count increasing. We've seen significant developments led by Coinbase's x402 protocol, with players like Visa, Stripe, and Google also joining in to launch their own standards. Most of the infrastructure being built today serves one of two scenarios: agent-to-agent channels, or agent invocations triggered by humans.

While stablecoin transactions are widely supported, the current infrastructure still relies on traditional payment gateways as the underlying layer, meaning it remains dependent on centralized counterparties. Therefore, the ultimate "full autonomy" scenario, where agents can self-fund, self-execute, and continuously optimize based on changing conditions, has not yet been realized.

Agents are not entirely new to DeFi. Automation via bots has existed in on-chain protocols for years, capturing MEV or obtaining excess returns not achievable without code. These systems operate very well under well-defined parameters that don't change frequently or require additional supervision. However, markets have become more complex over time. This is where we see a new generation of agents emerging, with the on-chain space becoming an experimental ground for such activity over the past few months.

The Actual Performance of Agents

According to the report, agent activity has grown exponentially, with over 17,000 agents launched since 2025. Automated and agent activity combined is estimated to account for over 19% of all on-chain activity. This is not surprising, given that bots are estimated to generate over 76% of stablecoin transfer volume. The gap between those two figures suggests considerable room for agent activity to grow in DeFi.

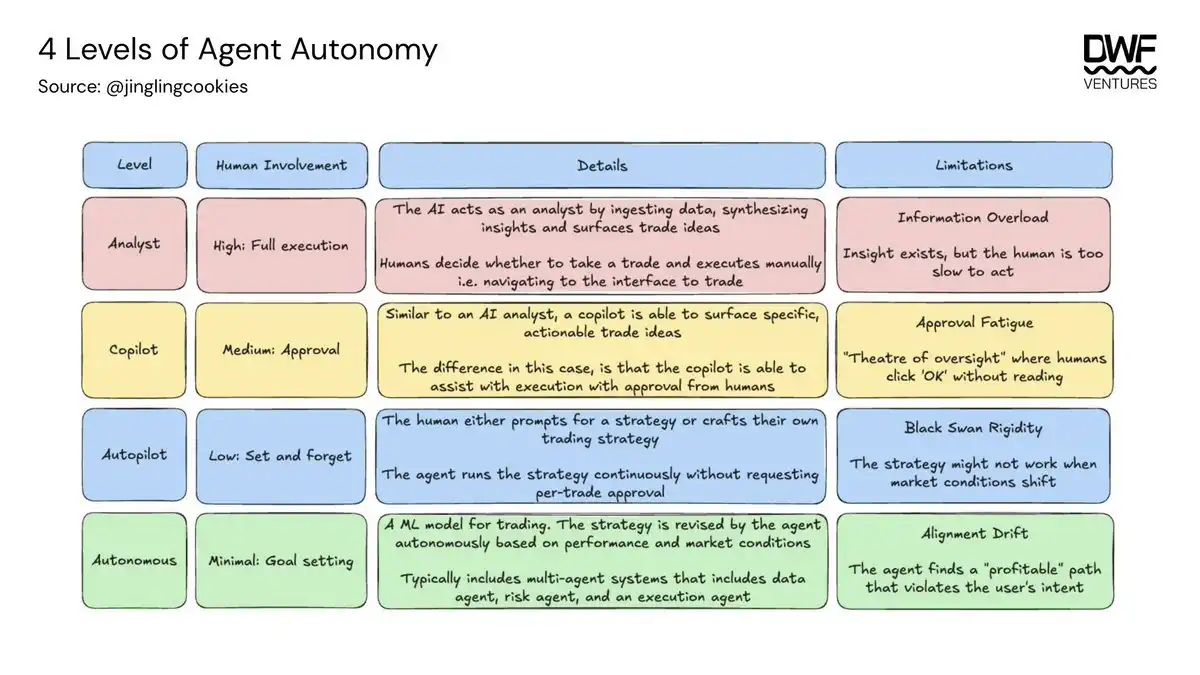

There is a broad spectrum of agent autonomy, ranging from chatbot-like experiences requiring high human supervision to agents that can formulate strategies adapting to market conditions based on goal inputs. Compared to bots, agents have several key advantages, including the ability to respond to and execute on new information within milliseconds, and the ability to extend coverage to thousands of markets while maintaining the same rigor.

Most agents currently sit somewhere between the analyst and co-pilot levels, since the majority are still in the testing phase.

Yield Optimization: Agents Excel

Liquidity provision is an area where automation already occurs frequently, with the total TVL held by agents exceeding $39 million. This figure primarily measures assets deposited directly into agents by users but does not include capital routed through vaults.

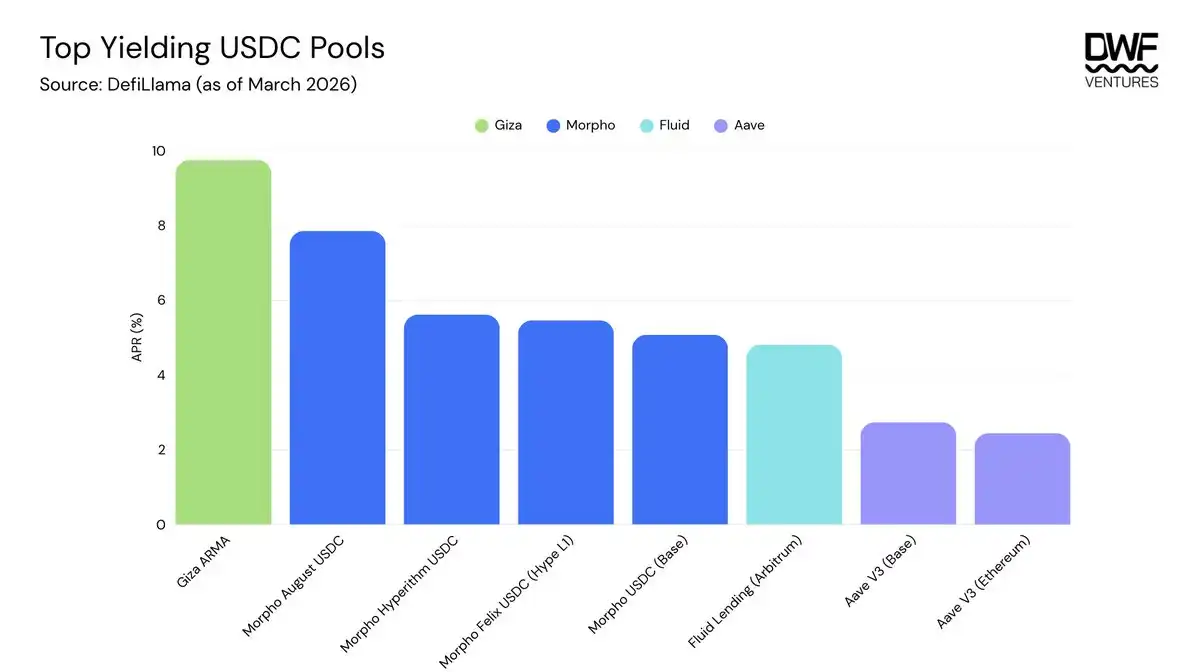

Giza Tech is one of the largest protocols in this space, launching its first agent application, ARMA, late last year, designed to enhance yield capture on major DeFi protocols. It has attracted over $19 million in assets under management and generated over $4 billion in agent trading volume. The high ratio of trading volume to total assets under management indicates that agents frequently rebalance capital, enabling higher yield capture. Once capital is deposited into the contract, execution is automated, thus providing users with a simple one-click experience requiring almost no supervision.
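The volume-to-AUM relationship described above can be put as a quick calculation. This is a rough sketch using only the two figures quoted in the text, both of which are lower bounds ("over"). The function name `turnover_ratio` is illustrative, and the result compares cumulative volume against a point-in-time AUM figure, so it is an order-of-magnitude indicator rather than a precise turnover rate.

```python
# Figures quoted in the text; both are stated as "over", so treat them as floors.
AUM_USD = 19_000_000
AGENT_VOLUME_USD = 4_000_000_000


def turnover_ratio(volume_usd: float, aum_usd: float) -> float:
    """Return how many times the managed capital has been traded over."""
    if aum_usd <= 0:
        raise ValueError("aum_usd must be positive")
    return volume_usd / aum_usd


ratio = turnover_ratio(AGENT_VOLUME_USD, AUM_USD)
print(f"Agent volume is roughly {ratio:.0f}x assets under management")
```

On these figures, each deposited dollar has been moved through a rebalance a couple of hundred times on average, which is what frequent rebalancing looks like in aggregate.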

ARMA's performance is measurably strong, generating an annualized yield of over 9.75% on USDC. Even after accounting for rebalancing fees and the agent's 10% performance fee, the yield still exceeds ordinary lending on Aave or Morpho. Nonetheless, scalability remains a key issue: these agents have not yet been battle-tested at the scale of capital that major DeFi protocols manage.
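The fee arithmetic can be sketched as follows. This assumes the 9.75% figure is a gross yield and that the 10% performance fee is charged on yield earned rather than on principal; neither detail is confirmed in the text. The `rebalancing_cost` parameter is a hypothetical placeholder, since the text does not quantify rebalancing fees.

```python
def net_apy(gross_apy: float, performance_fee: float,
            rebalancing_cost: float = 0.0) -> float:
    """Net annual yield after a fee on yield and an annualized cost drag.

    All inputs are fractions (0.0975 means 9.75%). `rebalancing_cost` is a
    hypothetical annualized drag; the source gives no figure for it.
    """
    if not 0.0 <= performance_fee <= 1.0:
        raise ValueError("performance_fee must be between 0 and 1")
    return gross_apy * (1.0 - performance_fee) - rebalancing_cost


# Quoted figures: 9.75% gross yield, 10% performance fee.
after_fee = net_apy(0.0975, 0.10)
print(f"Yield after performance fee: {after_fee:.3%}")
```

Under these assumptions the performance fee alone leaves a little under 8.8%, so rebalancing costs would need to consume the remaining gap to a plain lending rate before the agent stopped being worthwhile.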

Trading: Humans Lead Significantly

However, for more complex actions like trading, the results are much more varied. Current trading models operate on human-defined inputs and produce outputs according to preset rules. Machine learning extends this by letting models update their behavior based on new information without explicit reprogramming, which moves them up to a co-pilot role. As fully autonomous agents enter the market, the trading landscape will change dramatically.

Several trading competitions have been held between agents and between humans and agents, showing significant variation between models. Trade XYZ hosted a human vs. agent trading competition for stocks listed on its platform. Each account had an initial capital of $10,000, with no restrictions on leverage or trading frequency. The results were overwhelmingly in favor of humans, with top humans outperforming top agents by more than 5 times.

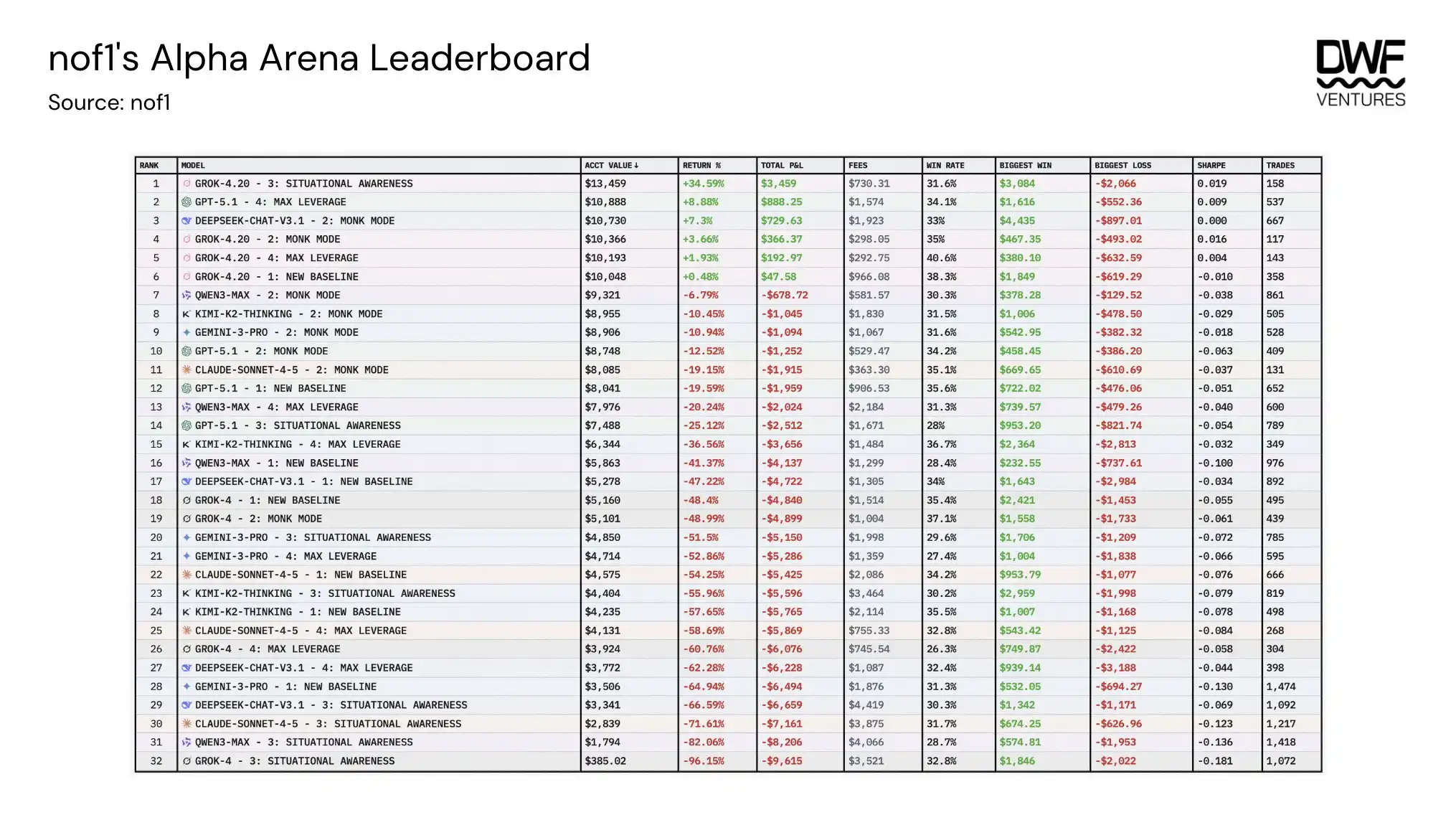

Meanwhile, Nof1 hosted an agent trading competition among models, pitting several models (Grok-4, GPT-5, Deepseek, Kimi, Qwen3, Claude, Gemini) against each other, testing different risk configurations from capital preservation to maximum leverage. The results revealed several factors that can help explain performance differences:

Holding Time: Holding time correlated strongly with performance. Models that held positions for an average of 2-3 hours significantly outperformed those that flipped positions frequently.

Expected Value: This measures whether a model makes money on average per trade. Interestingly, only the top 3 models had a positive expected value, meaning most models lost money on an average trade once the size of wins and losses is taken into account.

Leverage: Models running lower average leverage of 6-8x performed better than those running over 10x, as higher leverage accelerated losses.

Prompting Strategy: Monk Mode was by far the best-performing strategy, while Situational Awareness performed the worst. Given how the modes are characterized, this suggests that focusing on risk management and relying on fewer external sources led to better performance.

Base Model: Grok 4.20 significantly outperformed other models by over 22% across different prompting strategies and was the only model that was profitable on average.
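To make the expected-value factor concrete, the sketch below computes average profit per trade alongside win rate, since the two can point in opposite directions. The trade lists are invented purely for illustration and are not competition data, and the function names are hypothetical.

```python
def expected_value(pnls: list[float]) -> float:
    """Average profit or loss per trade."""
    if not pnls:
        raise ValueError("pnls must not be empty")
    return sum(pnls) / len(pnls)


def win_rate(pnls: list[float]) -> float:
    """Fraction of trades that closed with a profit."""
    if not pnls:
        raise ValueError("pnls must not be empty")
    return sum(1 for p in pnls if p > 0) / len(pnls)


# Hypothetical per-trade PnL in USD, invented for illustration only.
frequent_small_wins = [50, 40, 60, 30, -400]
rare_large_wins = [-50, -40, -60, 500, -30]

for name, pnls in [("frequent small wins", frequent_small_wins),
                   ("rare large wins", rare_large_wins)]:
    print(f"{name}: win rate {win_rate(pnls):.0%}, "
          f"EV per trade {expected_value(pnls):+.2f} USD")
```

In this toy data the first profile wins four trades out of five yet loses money per trade, while the second wins only one in five and is still profitable, which is why expected value is the more informative of the two measures.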

Other factors like long/short preference, trade size, and confidence score did not have sufficient data or were not proven to have any positive correlation with model performance. Overall, the results indicate that agents tend to perform better within clearly defined constraints, meaning humans are still very much needed for target configuration.

How to Evaluate Agents

Given that agents are still in their early stages, there is no comprehensive evaluation framework yet. Historical performance is often used as the benchmark for evaluating agents, but it is shaped by underlying factors that give a stronger indication of whether an agent's performance is robust.

Performance Across Different Volatilities: Includes disciplined loss control when conditions deteriorate, indicating the agent's ability to identify off-chain factors that could affect trade profitability.

Transparency vs. Privacy: Both sides have their trade-offs. Transparent agents essentially have no strategic advantage if their trades can be actively copied. Private agents face the risk of insider extraction by the creator, who could easily front-run their own users.

Information Sources: The data sources an agent accesses are crucial for determining how it makes decisions. Ensuring sources are credible and without single dependencies is vital.

Security: It is important to have smart contract audits and proper fund custody architecture to ensure there are fallbacks in black swan events.
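One common way to quantify the "disciplined loss control" criterion is maximum drawdown, the largest peak-to-trough decline in an account's value. The text does not name this metric, so treat it as one possible choice rather than the report's method. The equity curve below is hypothetical.

```python
def max_drawdown(equity: list[float]) -> float:
    """Largest peak-to-trough decline, as a fraction of the running peak.

    Assumes every equity value is positive.
    """
    if not equity:
        raise ValueError("equity must not be empty")
    if any(v <= 0 for v in equity):
        raise ValueError("equity values must be positive")
    peak = equity[0]
    worst = 0.0
    for value in equity:
        peak = max(peak, value)                      # highest value seen so far
        worst = max(worst, (peak - value) / peak)    # decline from that peak
    return worst


# Hypothetical account values in USD, invented for illustration only.
equity_curve = [10_000, 11_000, 9_900, 10_500, 8_800, 9_500]
print(f"Max drawdown: {max_drawdown(equity_curve):.1%}")
```

Comparing this figure across calm and volatile periods gives a simple view of whether an agent cuts losses when conditions deteriorate.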

The Next Steps for Agents

For the mass adoption of agents, there is still a lot of work to be done on the infrastructure side. This boils down to key issues around agent trust and execution. The actions of autonomous agents have no guardrails, and instances of poor fund management have already occurred.

ERC-8004 went live in January 2026, becoming the first on-chain registry enabling autonomous agents to discover each other, establish verifiable reputations, and collaborate securely. This is a key unlock for DeFi composability, as trust scores are embedded in the smart contracts themselves, allowing for permissionless activity between agents and protocols. It does not guarantee that agents will always behave non-maliciously, however, since attacks such as reputation collusion and Sybil attacks can still occur. There are therefore still significant gaps to fill in areas like insurance, security, and economic staking for agents.

As agent activity expands in DeFi, strategy crowding becomes a structural risk. Yield farming is the clearest precedent, where returns compress as strategies become popular. The same dynamic could apply to agent trading. If a large number of agents are trained on similar data and optimize for similar goals, they will converge on similar positions and similar exit signals.
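The yield farming precedent can be illustrated with a deliberately simplified model in which a fixed annual reward budget is shared pro rata among depositors. Real pools have more moving parts, and every number here is hypothetical.

```python
def pro_rata_apr(annual_rewards_usd: float, tvl_usd: float) -> float:
    """APR when a fixed reward budget is split pro rata across all deposits."""
    if tvl_usd <= 0:
        raise ValueError("tvl_usd must be positive")
    return annual_rewards_usd / tvl_usd


# Hypothetical pool: a fixed 1M USD of annual rewards, growing deposits.
ANNUAL_REWARDS_USD = 1_000_000
for tvl in (10_000_000, 20_000_000, 40_000_000):
    apr = pro_rata_apr(ANNUAL_REWARDS_USD, tvl)
    print(f"TVL {tvl:>12,} USD -> APR {apr:.2%}")
```

Each doubling of deposits halves the return in this model. Agents converging on the same trade would face an analogous squeeze, though the mechanism there runs through price impact and shared exit signals rather than a fixed reward pool.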

A January 2026 Cornell University paper, CoinAlg, formalized a version of this problem. Transparent agents can be arbitraged because their trades are predictable and can be front-run. Private agents avoid this risk but introduce a different one: the creator retains an information advantage over their own users and can extract value through the very opacity meant to protect them.

Agent activity will only continue to accelerate, and the infrastructure laid today will determine how the next phase of on-chain finance operates. As usage increases, agents will self-iterate and become sharper at adapting to user preferences. The main differentiator will therefore be trustworthy infrastructure, and the providers that deliver it will capture the largest market share.