Solana picked up an infrastructure vote of confidence on Wednesday after Alibaba Cloud used a Hong Kong keynote to demo “high-performance” Solana RPC connectivity, framing the work as part of its broader push to fuse AI tooling with Web3 developer workflows.

According to a clip shared by Solana’s official X account, the demo came during an Accelerate APAC 2026 keynote titled “Fueling Web3 Innovation with AI on Cloud,” delivered by Zhao Qingyuan of Alibaba Cloud Intelligence Group. The pitch was straightforward: reduce latency and operational overhead for builders who rely on fast, reliable RPC access, especially in trading-heavy use cases where milliseconds can matter.

Alibaba Flexes Solana RPC Throughput

Zhao framed the talk as a practical example of how large language models can compress development cycles. In the keynote, he said he recently migrated a Solana archive node away from a Google Bigtable setup to an Alibaba Cloud in-house database implementation over a weekend, leaning on AI-assisted coding despite limited prior familiarity with Solana’s usual developer stack.

“Just this weekend, I spent two days — I migrated the Solana archive node from a Google Bigtable implementation to Alibaba Cloud’s in-house database implementation,” Zhao said. “I haven’t even learned Rust before, and I just used web coding to do this in two days. And it will download the data from Hugging Face for the historical slots, and it will synchronize the data with the mainnet, and it can provide the RPC service.”

The remarks landed alongside Alibaba Cloud’s broader messaging around its Qwen family of models, positioned by the company as a general-purpose LLM stack that can be used for coding, assistants, and multimodal workflows.

The more market-relevant part of the demo was the latency claim. Zhao described a setup where users connect to RPC nodes through Alibaba Cloud’s backbone network rather than via general public internet routes.

In a table shown during the talk, he said latency for a “get slot” RPC call fell from roughly 25 milliseconds to about 10 milliseconds under the backbone-network approach, calling it “a huge reduction.” For “get block,” described as a 4MB block payload, he said latency fell from “more than 200 milliseconds” to “less than 200 milliseconds,” while emphasizing stability and suitability for low-latency workloads.
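For readers who want to sanity-check figures like these against their own endpoint, the following is a minimal sketch of how round-trip latency for a `getSlot` call might be timed over Solana's JSON-RPC interface. It is not Alibaba Cloud's benchmark method, which the clip does not describe. The endpoint URL, the helper names (`build_request`, `http_send`, `time_calls`, `percent_reduction`) and the sample count are illustrative assumptions.

```python
import json
import statistics
import time
import urllib.request
from typing import Callable, List, Optional

# Assumption: the public mainnet endpoint is only a placeholder here;
# swap in whichever RPC endpoint you actually want to measure.
RPC_URL = "https://api.mainnet-beta.solana.com"


def build_request(method: str, params: Optional[list] = None) -> bytes:
    """Encode a JSON-RPC 2.0 request body for a Solana RPC method."""
    body = {"jsonrpc": "2.0", "id": 1, "method": method, "params": params or []}
    return json.dumps(body).encode("utf-8")


def http_send(payload: bytes, url: str = RPC_URL, timeout: float = 10.0) -> dict:
    """POST the payload and return the decoded JSON response.

    Each call opens a fresh connection, so the timing includes connection
    setup and will read higher than a benchmark that reuses connections.
    """
    req = urllib.request.Request(
        url, data=payload, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read())


def time_calls(
    method: str,
    send: Callable[[bytes], dict] = http_send,
    samples: int = 20,
    params: Optional[list] = None,
) -> List[float]:
    """Return the round-trip latency in milliseconds for each of `samples` calls."""
    payload = build_request(method, params)
    latencies = []
    for _ in range(samples):
        start = time.perf_counter()
        send(payload)
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def percent_reduction(before_ms: float, after_ms: float) -> float:
    """Relative latency improvement, expressed as a percentage of the baseline."""
    return (before_ms - after_ms) / before_ms * 100.0


if __name__ == "__main__":
    results = time_calls("getSlot")
    print(f"getSlot median: {statistics.median(results):.1f} ms over {len(results)} calls")
    # The figures quoted in the keynote: roughly 25 ms down to about 10 ms.
    print(f"25 ms -> 10 ms is a {percent_reduction(25, 10):.0f}% reduction")
```

Taken at face value, the quoted drop from roughly 25 milliseconds to about 10 milliseconds works out to a reduction of about 60%. Numbers from a script like this depend heavily on where the client runs relative to the node, so they are not directly comparable with the keynote table.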

JUST IN: Alibaba, the world’s largest ecommerce company, demos high-performance Solana RPCs pic.twitter.com/wwqVLelqUv

— Solana (@solana) February 11, 2026

Alibaba Cloud also leaned into geography. Zhao pointed to the firm’s global footprint, highlighting regions such as Frankfurt, the US, and multiple Asia-Pacific hubs including Tokyo, Singapore, and Hong Kong, as a “perfect match” for Solana’s builder base and latency-sensitive applications.

While the clip stops short of announcing a formal partnership or a productized Solana RPC offering with pricing or SLAs, the optics are notable: a major cloud provider using a Solana ecosystem stage to publicly benchmark RPC latency improvements, and explicitly tying that to trading and “co-location for the high frequency calls.”

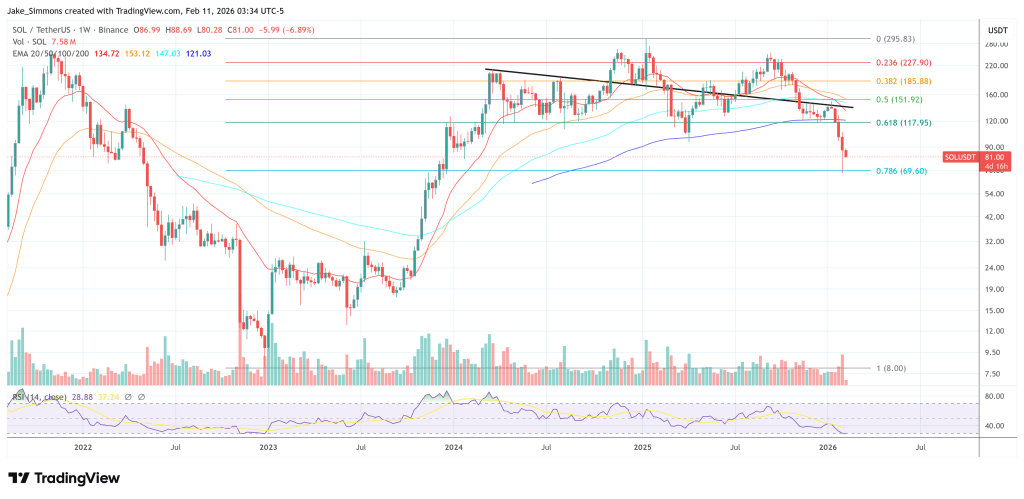

At press time, SOL traded at $81.