In crypto, timing can decide whether a new launch succeeds or fails. Many in the NFT community expected OpenSea’s long-awaited $SEA token to launch on the 30th of March.

However, OpenSea CEO Devin Finzer has delayed the launch, saying,

“Market conditions are challenging across crypto right now, and $SEA only launches once.”

The reason behind the $SEA token delay

Elaborating on the issue, Finzer took to X and noted,

“@openseafdn is pushing back the timeline. a delay is a delay. i’m not going to dress it up, and i know how it lands.”

Instead of rushing the launch during a weak market, OpenSea is taking several steps to keep users engaged.

First, the platform will cut trading fees to 0% for 60 days starting on the 31st of March. This move aims to boost activity and attract users to its mobile app and perpetual futures platform.

Second, OpenSea is offering fee refunds to users who traded during Rewards Waves 3 to 6. However, users who claim the refund must give up the “Treasures” points they earned.

Third, OpenSea will end the “Waves” rewards system, moving away from constant point-farming campaigns in favor of a clearer timeline set by the OpenSea Foundation.

OpenSea CMO supports the decision

Standing in support of Finzer, Adam Hollander, CMO of OpenSea, added,

“I’ve been a CEO before. the hardest decisions are those which are painful in the short-term and require deep conviction in your vision...there aren’t many CEOs who, when presented with the same situation, would decide to give back millions rebuild trust with their users.”

OpenSea’s decision to delay the $SEA token is closely linked to the current state of the NFT market. The industry has matured, but it is also much smaller and less liquid than it was during the 2021 boom.

The NFT market faces weak market sentiment

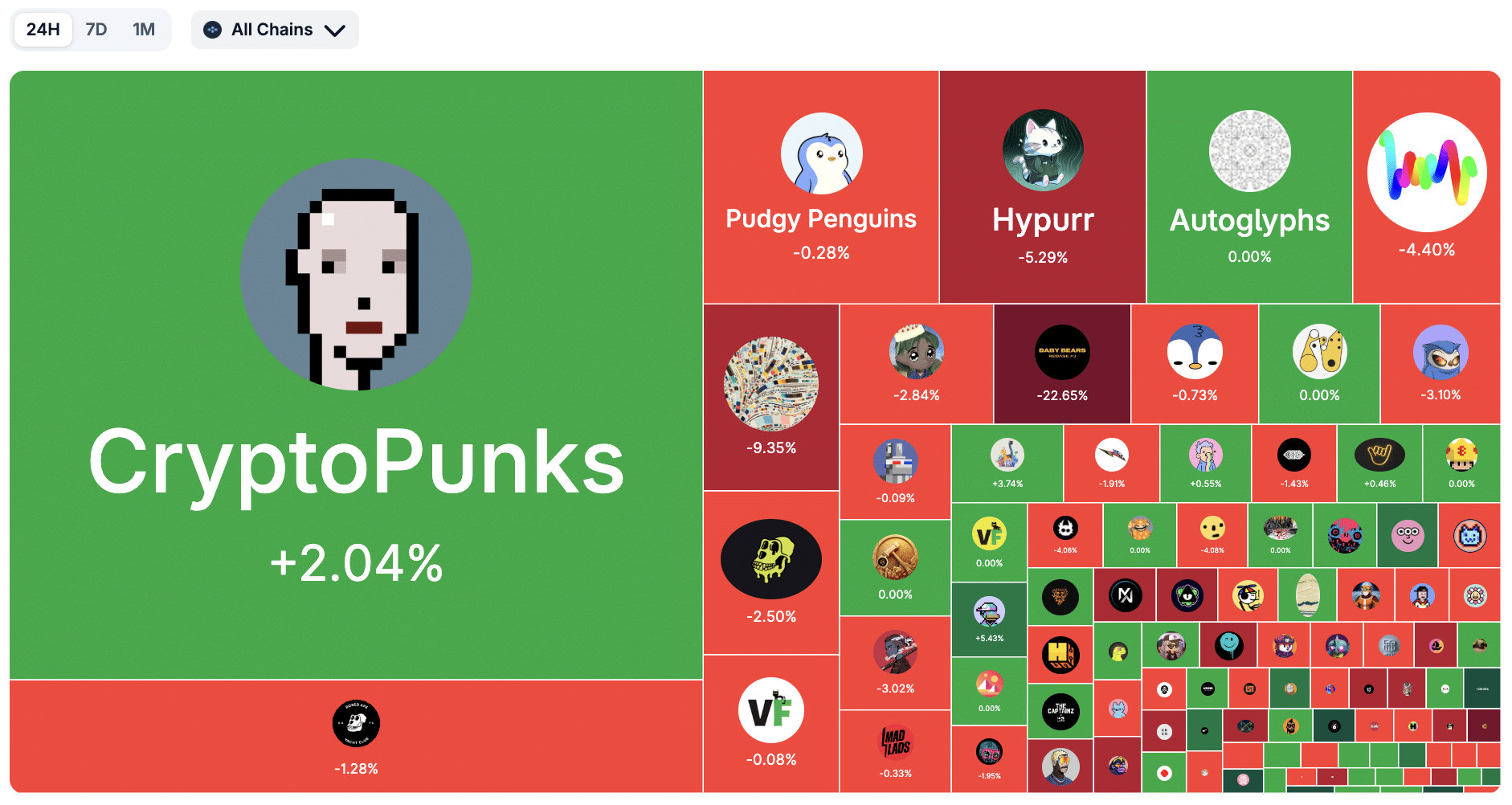

At present, the global NFT market cap is around $1.75 billion, far below the levels seen at the peak of the NFT craze.

The NFT market cap has risen by about 4% in a day, but daily sales volume of around $1.73 million shows that trading activity remains limited.

Although trading volume has increased by 39.1%, most activity remains concentrated in a few well-known NFT collections.

As a result, the market shows fast trading but limited depth, with only a small number of assets attracting strong demand.
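To put the two figures above side by side, daily sales volume can be expressed as a share of market cap. The short Python sketch below does that arithmetic using the approximate numbers quoted in this article. The function name `turnover_ratio` and the constant names are illustrative only, and the ratio is a rough back-of-the-envelope gauge, not an official market metric.

```python
def turnover_ratio(daily_volume_usd: float, market_cap_usd: float) -> float:
    """Return daily sales volume as a fraction of market cap."""
    if market_cap_usd <= 0:
        raise ValueError("market cap must be positive")
    return daily_volume_usd / market_cap_usd


# Approximate figures quoted in the article (assumed to be in USD).
NFT_MARKET_CAP_USD = 1.75e9    # about $1.75 billion
NFT_DAILY_VOLUME_USD = 1.73e6  # about $1.73 million

ratio = turnover_ratio(NFT_DAILY_VOLUME_USD, NFT_MARKET_CAP_USD)
print(f"Daily turnover: {ratio:.3%}")  # prints "Daily turnover: 0.099%"
```

On these numbers, roughly a tenth of one percent of the market's value changes hands in a day, which is consistent with the thin liquidity described above.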

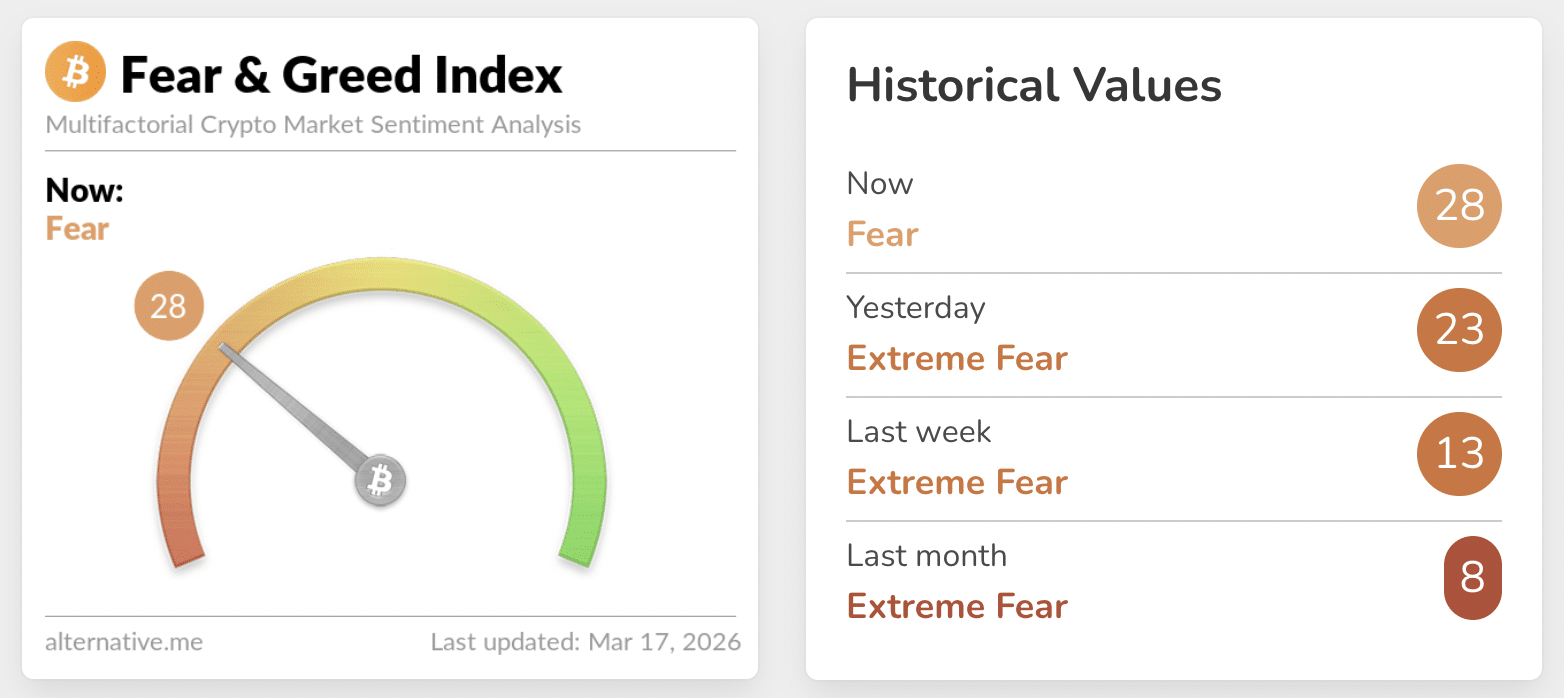

This trend is not limited to NFTs. The broader crypto market is also facing a slowdown. The crypto Fear and Greed Index reflects this cautious sentiment: it remains in the ‘fear’ zone, although it has recovered slightly from earlier ‘extreme fear’ levels.

By postponing the launch, OpenSea may be trying to avoid releasing a major token at a time when there is not enough buying demand in the market.

Simply put, OpenSea plans to launch $SEA only when both the platform and market conditions improve.

Final Summary

- OpenSea’s decision to delay the $SEA token shows the importance of launching a token when market demand is strong.

- Compared to the boom of 2021, the NFT sector is now smaller and less liquid, forcing platforms to rethink their strategies.