Blockchain network Polygon has rolled out its latest protocol upgrade, the Madhugiri hard fork, which aims to increase network throughput by 33% and cut block consensus time to one second.

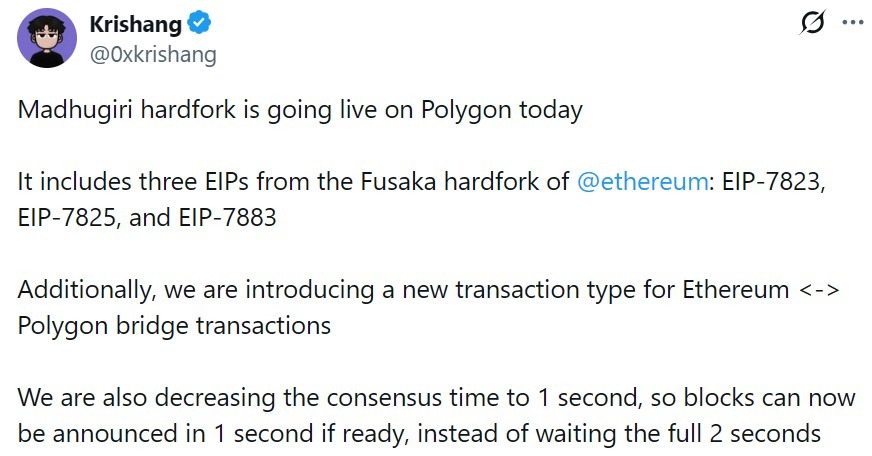

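Polygon core developer Krishang Shah said on X that the update includes support for three Ethereum Improvement Proposals (EIPs) from Ethereum's Fusaka upgrade: EIP-7823, EIP-7825 and EIP-7883. Two of them target heavy mathematical operations: EIP-7823 sets upper bounds on the input sizes of the MODEXP (modular exponentiation) precompile, and EIP-7883 raises its gas cost, making such operations safer and more predictable to execute.

The third, EIP-7825, caps the amount of gas a single transaction can consume, preventing any one transaction from using excessive computing power and helping the network run more smoothly and predictably.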
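To make the two hard limits concrete, here is a minimal Python sketch of the checks that EIP-7825 and EIP-7823 describe. It assumes Polygon adopts the constants from the Ethereum EIP texts (a cap of 2^24 = 16,777,216 gas per transaction and a 1,024-byte cap on each MODEXP operand); the function names are hypothetical, and EIP-7883's gas repricing is not modeled.

```python
# Constants as specified in the Ethereum EIP texts. Whether Polygon's
# Madhugiri fork uses identical values is an assumption of this sketch.
MAX_TX_GAS_LIMIT = 2**24          # EIP-7825: 16,777,216 gas per transaction
MAX_MODEXP_INPUT_BYTES = 1024     # EIP-7823: 8,192 bits per MODEXP operand


def tx_gas_limit_ok(gas_limit: int) -> bool:
    """Hypothetical EIP-7825 check: a transaction whose gas limit exceeds the cap is invalid."""
    return gas_limit <= MAX_TX_GAS_LIMIT


def modexp_input_ok(base_len: int, exp_len: int, mod_len: int) -> bool:
    """Hypothetical EIP-7823 check: MODEXP fails if any operand length exceeds 1,024 bytes."""
    return all(n <= MAX_MODEXP_INPUT_BYTES for n in (base_len, exp_len, mod_len))


if __name__ == "__main__":
    print(tx_gas_limit_ok(30_000_000))     # False: a 30M-gas transaction is over the cap
    print(tx_gas_limit_ok(16_777_216))     # True: exactly at the cap
    print(modexp_input_ok(256, 32, 256))   # True: RSA-2048-sized operands fit
    print(modexp_input_ok(2048, 32, 256))  # False: the base exceeds 1,024 bytes
```

Both checks are simple upper bounds, which is the point: a node can reject an oversized transaction or MODEXP call before doing any expensive work.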

The upgrade introduces a new transaction type for Ethereum-to-Polygon bridge traffic and adds a built-in flexibility feature for future upgrades. Polygon previously said that the update makes throughput increases as easy as “flipping a few switches.”

“We are also decreasing the consensus time to 1 second, so blocks can now be announced in 1 second if ready, instead of waiting the full 2 seconds,” Shah wrote.

New update reinforces Polygon for stablecoins and RWAs

With Madhugiri now live, Polygon aims to reinforce its infrastructure while materially improving its performance. Both are prerequisites for high-frequency, high-trust use cases such as real-world asset (RWA) tokenization and stablecoins.

Aishwary Gupta, the global head of payments and RWAs at Polygon Labs, previously forecasted a “stablecoin supercycle.”

Gupta said there will be a surge of “at least 100,000 stablecoins” in the next five years. However, he said the trend would not be only about minting tokens; the stablecoins must also offer corresponding utility, such as yield.

Gupta also advocated for more transparency and accountability in the RWA sector. He previously argued that RWA numbers are meaningless if the assets cannot be audited, settled or traded.

“When transparency and accountability are established, RWAs will reach even greater heights, unlocking trillions in institutional capital,” he wrote.


Hard fork follows major Heimdall upgrade

The upgrade follows a series of rapid earlier improvements. On July 10, Polygon deployed Heimdall 2.0, which Polygon Foundation CEO Sandeep Nailwal called the network’s “most technically complex” hard fork since its launch.

The update cut transaction finality from between one and two minutes to roughly five seconds.

However, on Sept. 10, the network experienced a significant disruption when a bug caused finality delays of 10 to 15 minutes, affecting validator sync, remote procedure call (RPC) services and third-party tooling. Despite this, the team assured the community that blocks were still being produced.

On Sept. 11, the Polygon Foundation announced that the consensus and finality functions had been restored through a hard fork. With the update, nodes were no longer stuck, while checkpoints and milestones were finalized as expected.
