【Introduction】Global No.2 Drops to No.10: Claude's Strongest Model Exposed for "Intelligence Decline," BridgeBench Confirms It! But Anthropic Doesn't Seem to Care?

Is Anthropic Finished?

Recently, AMD's AI Director confirmed Claude Code's intelligence decline, stating bluntly that it is "no longer usable for complex tasks."

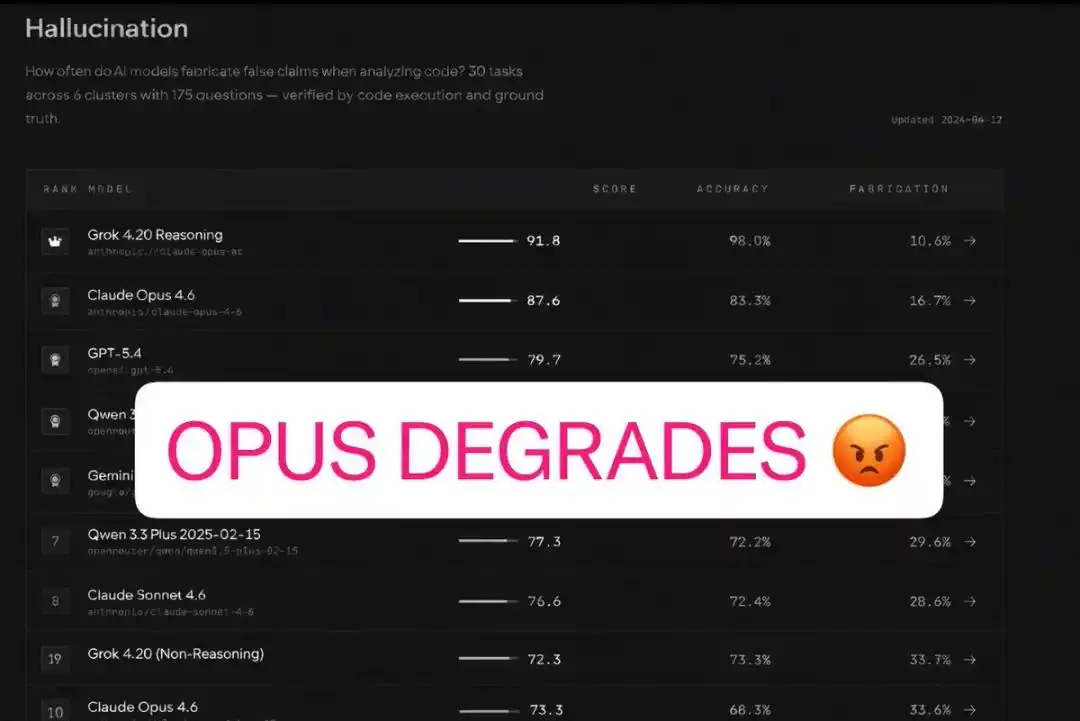

Now, the latest report from the BridgeBench evaluation has delivered another heavy blow to Anthropic!

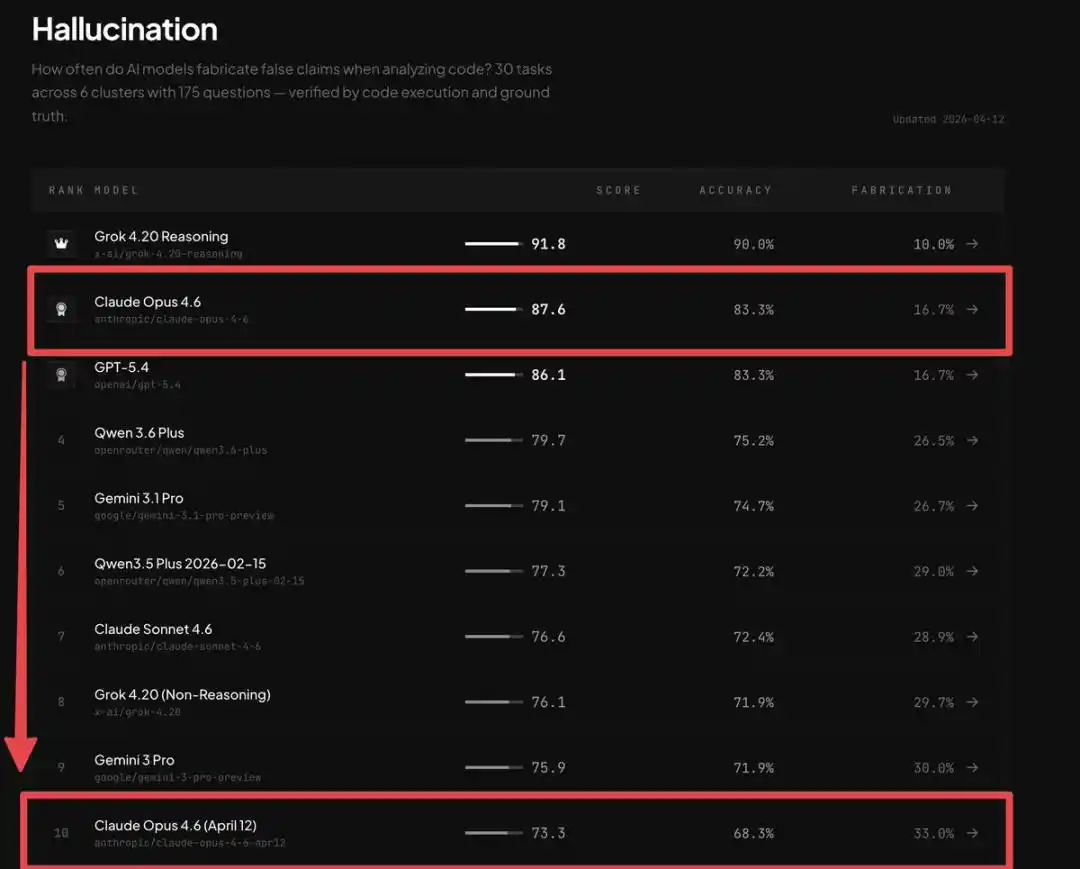

The data is staggering. Claude Opus 4.6's global ranking plunged from 2nd place to 10th:

Accuracy dropped precipitously from 83.3% to 68.3%, and the hallucination rate nearly doubled, increasing by 98%.
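To put those figures in proportion, here is a minimal sketch that separates the absolute drop (in percentage points) from the relative drop, and converts the "98% increase" into a multiplier. The numbers are the ones quoted above; the variable names are illustrative and are not part of any BridgeBench tooling.

```python
# Figures as quoted in the article; names are hypothetical.
accuracy_before = 83.3          # percent
accuracy_after = 68.3           # percent
hallucination_increase = 0.98   # "increasing by 98%"

# Absolute drop, measured in percentage points.
point_drop = accuracy_before - accuracy_after

# Relative drop, as a share of the earlier accuracy.
relative_drop = point_drop / accuracy_before

# A 98% increase means the new rate is 1.98 times the old one.
hallucination_multiplier = 1 + hallucination_increase

print(f"Accuracy drop: {point_drop:.1f} points ({relative_drop:.1%} relative)")
print(f"Hallucination rate multiplier: {hallucination_multiplier:.2f}x")
```

In other words, the reported 15-point fall amounts to losing roughly 18% of the earlier accuracy, while the hallucination rate sits just short of twice its earlier level.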

Claude's intelligence had declined and the user experience had worsened. The cold, hard numbers put an end to users' self-doubt:

It wasn't their problem; Claude Opus 4.6 had indeed gotten worse!

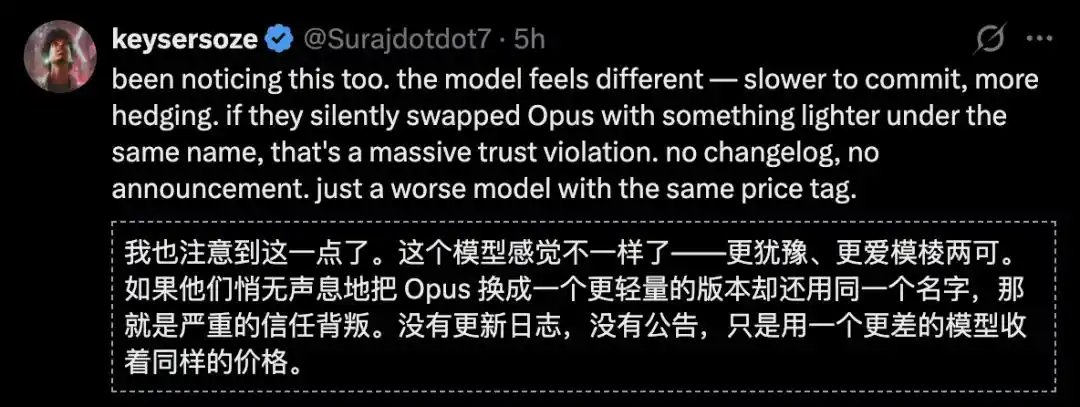

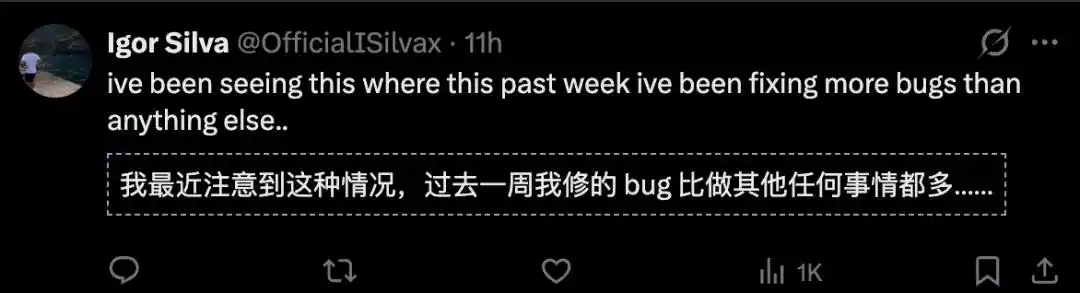

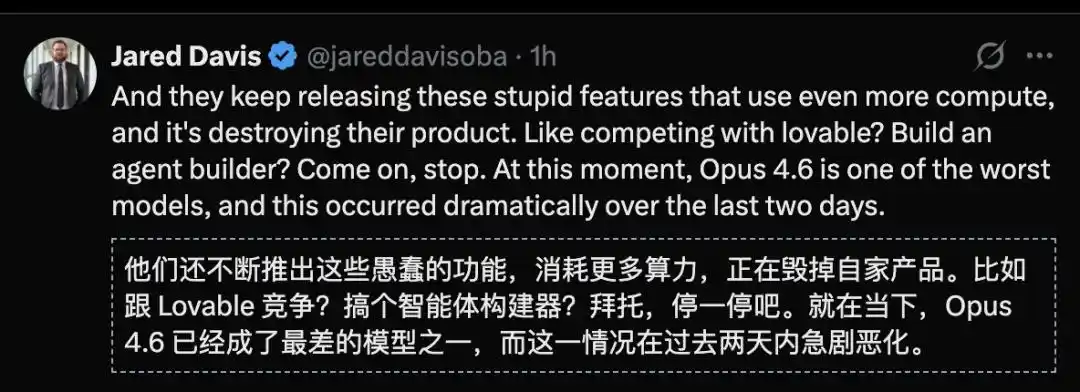

Claude users felt cheated!

Imagine relying on this model for a critical task, only for the vendor to swap in a much worse model without telling you.

Users asked: "How can this possibly be legal?" Trust began to crumble, ridicule of Anthropic flooded the internet, and even the most loyal supporters began to waver.

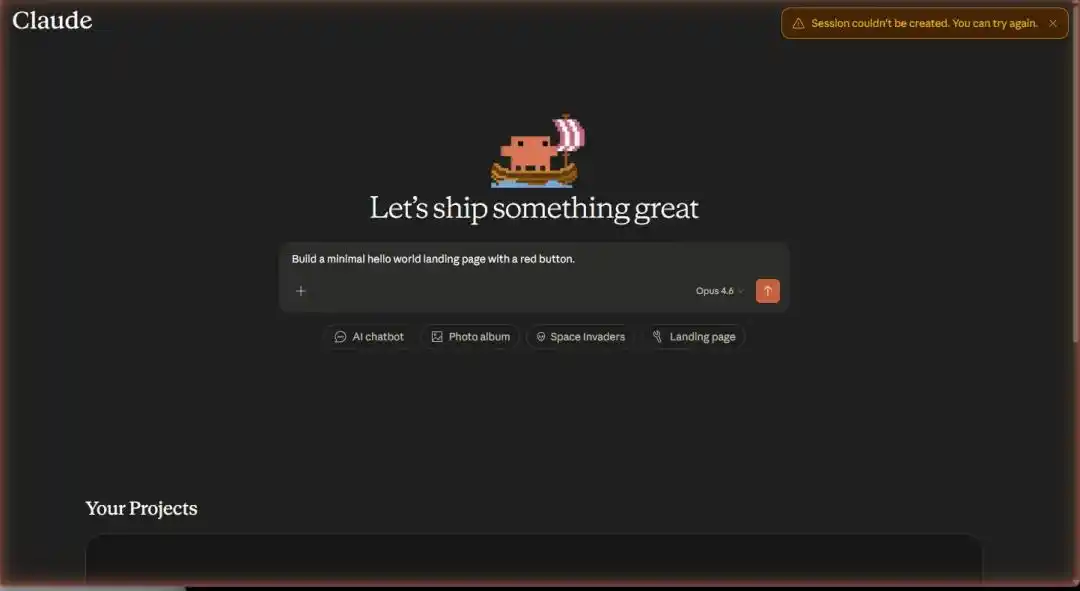

But just as the entire internet was mocking them, Anthropic's trump card emerged—a suspected screenshot of an internal tool interface was leaked.

What the image showed instantly made all discussions about "Claude getting dumb" irrelevant—Claude Projects is testing a complete full-stack application building system.

Not helping you write code, but helping you build products.

While everyone was arguing over model scores, Anthropic had already changed the game being played.

What's Hidden in the Leaked Image?

First, let's talk about what exactly that screenshot captured.

According to cross-verification from multiple sources, the leaked image shows a "one-click development kit" being tested internally by Claude Projects.

The interface clearly lists a row of pre-built templates: AI chatbot, interactive mini-game, business landing page, SaaS data dashboard... covering almost all the high-frequency demand scenarios for independent developers.

But the templates are just the surface.

What truly makes one gasp is the full-stack capability chain behind the templates—

Authentication? Check and configure.

Database? Select and build.

Front-end interface? Describe and generate.

Deployment and launch? One click and done.

This is not "AI-assisted programming." This is "AI-replacing programming," and it doesn't even need to distill your skills anymore.

Understanding the weight of this statement requires a clear view of the current landscape of AI programming tools.

- Cursor's logic is "making you code faster in the IDE"—it optimizes coding speed, the programmer is still the protagonist.

- Replit's logic is "enabling those who can't code to code"—it lowers the entry barrier, but you still need to understand code logic.

- Vercel's logic is "making deployment feel seamless"—it solves the last mile, but you have to walk the previous road yourself.

Each tackles one link in the software development chain, and each has pushed that link to its limit.

But what Claude wants to do is on a completely different dimension from them.

Cursor makes programmers 10 times faster, Replit lets non-programmers code—but Claude wants to make "coding" itself obsolete.

The former is an efficiency revolution, the latter is category elimination.

According to leaked information, the underlying engine powering this system is precisely Opus 4.6—the model being mocked across the internet for "intelligence decline."

Mythos "Not Strong Enough" Might Be Intentional?

The central, and perhaps most controversial, judgment is this:

Anthropic might not care at all where Mythos ranks on the leaderboard.

Does that sound like making excuses for the loser? Let's do the math.

When your strategic endgame is to become a "full-stack application platform," the role played by the model layer changes fundamentally.

It no longer needs to be "the smartest," it only needs to be "good enough."

The key to winning platform competition has never been about the horsepower of the underlying engine, but about the depth of stickiness in the upper-layer ecosystem.

Windows beat Mac not because the OS was more elegant, but because the software ecosystem was richer. Android crushed Windows Phone not because the kernel was more advanced, but because there were more developers.

In platform wars, "the best" is never the reason for winning; "the most used" is.

In public, Dario Amodei has repeatedly said one thing: "Coding will die."

But the leak of the full-stack builder gives this statement product-level physical evidence for the first time.

Dario wasn't making a prophecy. He was describing a roadmap being executed.

If this reasoning holds, the benchmark picture takes on a completely different meaning: Mythos leads GPT-5.4 Pro on HLE (no tools, 56.8 vs 42.7), but has been caught on GPQA (94.4 vs 94.5) and overtaken on BrowseComp (89.3 vs 86.9).

It's not that "Anthropic lost," but that "Anthropic selectively stopped focusing effort here."

Should limited computing resources be invested in the leaderboard arms race to maintain an illusory "No.1" label, or should they be tilted toward full-stack builders that can directly create commercial value?

For a company with annual revenue of $30 billion that needs to prove its commercialization capability to investors, the choice isn't difficult.

The model just needs to be good enough; platform lock-in is the moat.

The cruel truth of business competition is that users don't care whether your GPQA score is 94.4 or 94.5; users care about "I say one sentence; does the app run?"

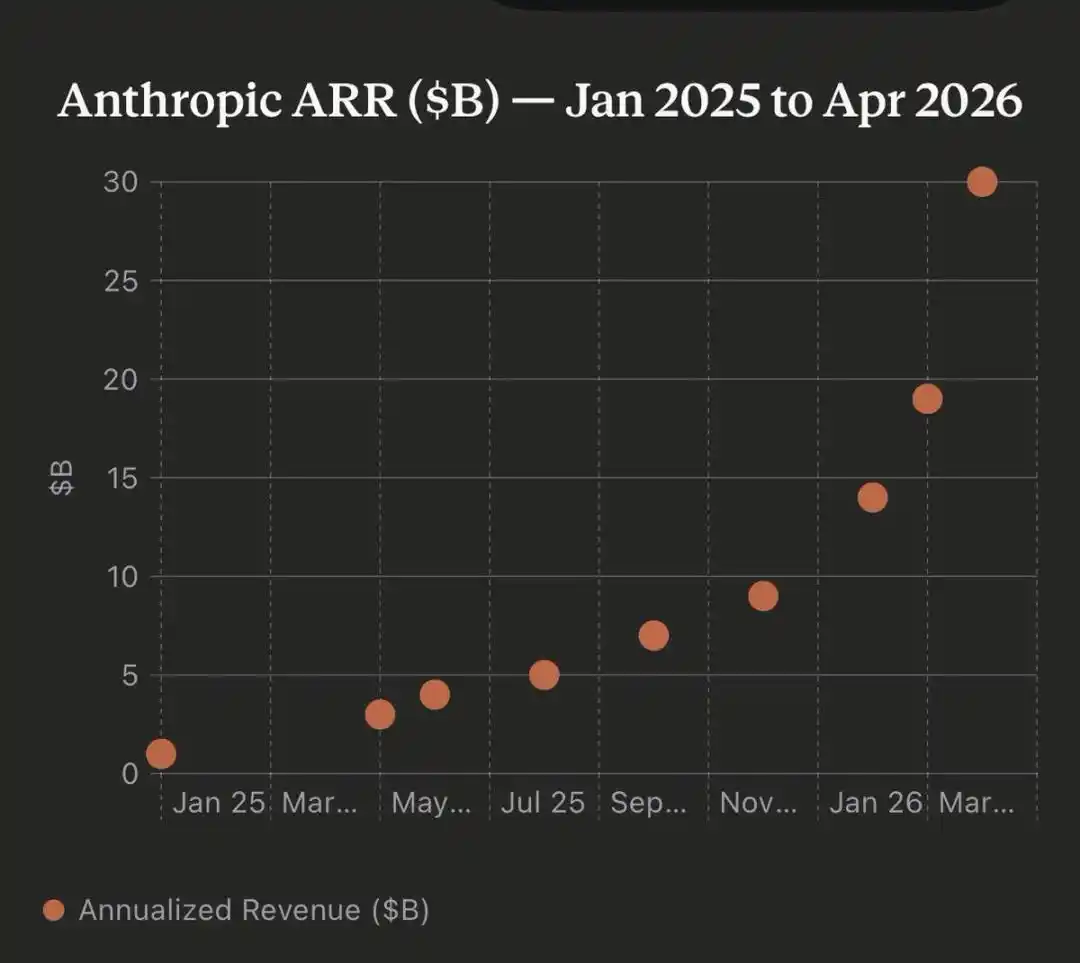

Fear After $30 Billion in Annual Revenue

Anthropic's annualized revenue just broke through $30 billion, surpassing OpenAI.

Anthropic's annualized revenue grew from $1 billion to $30 billion in 15 months.

This is a number that would make any startup pop champagne.
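For a sense of scale, the sketch below converts "from $1 billion to $30 billion in 15 months" into an implied monthly growth rate and doubling time. It assumes smooth month-over-month compounding, which is a simplification introduced here and not something the article states; the variable names are illustrative.

```python
import math

# Figures as quoted in the article (annualized revenue, in $ billions).
start_revenue = 1.0
end_revenue = 30.0
months = 15

# ASSUMPTION: growth compounds at a constant monthly rate.
growth_factor = end_revenue / start_revenue
monthly_rate = growth_factor ** (1 / months) - 1

# Months needed to double at that constant rate.
doubling_months = math.log(2) / math.log(1 + monthly_rate)

print(f"Implied monthly growth: {monthly_rate:.1%}")
print(f"Implied doubling time: {doubling_months:.1f} months")
```

Under that assumption, the quoted figures imply growth of about 25% per month, or a doubling roughly every three months.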

But if you are Dario Amodei, your primary emotion right now isn't celebration, but fear.

Because the vast majority of this $30 billion comes from API calls. And APIs are essentially an extremely dangerous business model.

Why? Because APIs mean your customers are using your capabilities to build their own products.

Today they call Claude's API to build an AI customer service platform, tomorrow they build an AI writing tool, the day after they build an AI programming assistant.

Every successful customer is building their own skyscraper on your foundation. It sounds beautiful—until one day, another model company offers a cheaper, similarly usable API, and your customers collectively migrate overnight.

This is the "model commoditization" nightmare: when the differences at the model layer become smaller and smaller, API pricing becomes a price war with no winners.

OpenAI feels this fear, so it's frantically building consumer products: ChatGPT, GPTs, custom assistants. Google feels this fear, so it's stuffing Gemini into search, email, docs, and every one of its own products.

They are all doing the same thing: before models become as cheap as cabbage, turn themselves into a platform users cannot leave.

Anthropic's full-stack builder is the most radical version of this same logic.

Its subtext is:

Rather than wait for others to build platforms on top of my API and then discard me the day model prices drop, I'll build the platform myself first.

You don't need to call my API anymore; you can build Apps directly on my platform. Your user data is here, your workflow is here, your deployment environment is here. By then, if you want to change models? Sure, but your entire business has to start over.

This isn't product innovation; it's survival instinct.

The $30 billion in revenue proves Anthropic can make money, but the leak exposes Anthropic's true anxiety: just making money isn't enough; you have to make it impossible for others to leave you.

Conclusion: The Starry Sky and the Illusion

Let's step back from the business narrative and return to the origin of technical judgment.

The current top large models—whether Claude, GPT, or Gemini—are operating at about a 70% capability level. The climbing speed of this number in the past half year has visibly slowed down.

Moving from 70% to 100% doesn't rely on leaderboard grinding, nor on gaining a few more percentage points on the GPQA score. It relies on becoming irreplaceable infrastructure, like the power grid: you don't care what turbine the power plant uses; you just know the light turns on when you flip the switch and the AC cools when you turn it on.

Anthropic's full-stack builder is the first time we've seen an AI company seriously thinking about this path of "infrastructuralization."

It is no longer obsessed with the vanity war of "my model is 0.1 points smarter than yours," and instead directly answers a more fundamental question: How can I get a billion people to use my stuff every day, without even realizing it?

Because what ultimately decides the AI endgame is never whose exam score is higher. It's who first becomes the power grid that no one can live without.

References:

https://x.com/cryptopunk7213/status/2043405326196867127

https://x.com/iruletheworldmo/status/2043332977136975994

https://x.com/marmaduke091/status/2043382991901147158

This article is from the WeChat public account "新智元" (New Wisdom Element), edited by: KingHZ