With the ERC-8004 (Trustless Agents) standard officially deployed on the Ethereum mainnet, AI Agent identity and reputation management have entered a new stage of verifiability and trustlessness. This standard provides agents with an on-chain verifiable "identity system" through three core registries: the Identity Registry, Reputation Registry, and Validation Registry. This article takes a security audit perspective: drawing on the technical details of ERC-8004, it analyzes the key risks of each registry and offers a practical audit guide for developers and auditors.

Technical Details and Audit Key Points

The key to ERC-8004 lies in its three registries:

1. Identity Registry

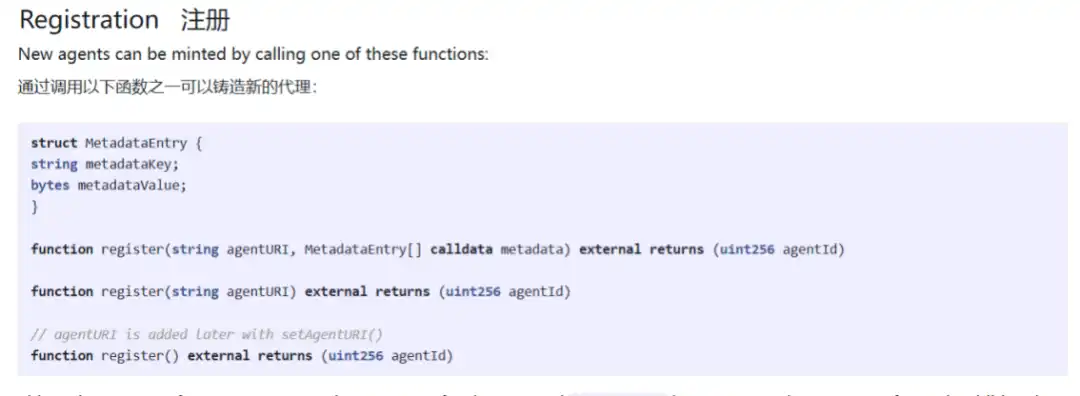

A minimal on-chain handle based on ERC-721, with URIStorage extension, resolving to the agent's registration file, providing a portable, censorship-resistant identifier for each agent.

In the ERC-8004 architecture, the Identity Registry is built on ERC-721 and extends the URIStorage functionality. In other words, each agent corresponds to a unique on-chain NFT, called an AgentID.

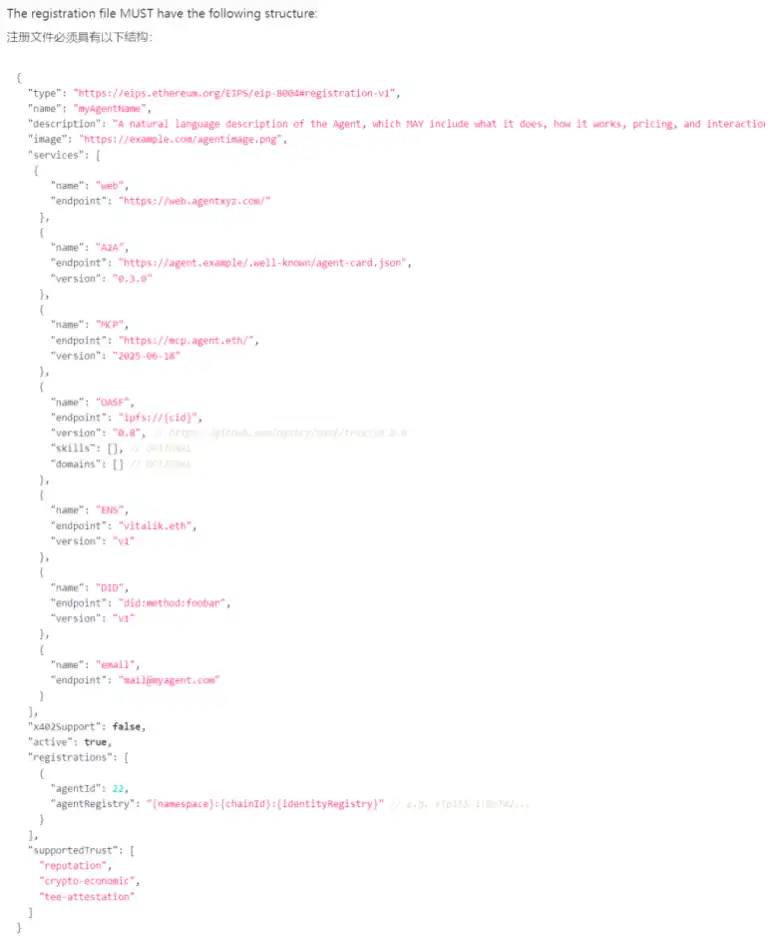

When a developer creates an agent, they call the register function of the registry contract, minting a new AgentID. This token is bound to a tokenURI, pointing to a JSON file stored off-chain—the so-called "agent registration file." The registration file must follow strict specifications, typically including three core types of content:

- Basic information, such as name, description, avatar URL;

- Service endpoints, which are the network addresses where the agent can be accessed, supporting various protocols like HTTP, WebSocket, Libp2p, A2A, MCP;

- Capability declarations, i.e., a list of tasks the agent can perform, such as image generation, arbitrage trading, or code auditing.
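The three content types listed above suggest a simple structural check an auditor could run against a fetched registration file. The following is a minimal sketch, not the official schema: the field names (`name`, `description`, `endpoints`, `capabilities`, `protocol`, `url`) are assumptions chosen to mirror the description in this article, and the example URLs are placeholders.

```python
import json

# Assumed field names, mirroring the three content types described above.
REQUIRED_TOP_LEVEL = ("name", "description", "endpoints", "capabilities")

def validate_registration(raw: str) -> list[str]:
    """Return a list of problems found in a registration file (empty list = OK)."""
    try:
        doc = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(doc, dict):
        return ["top level must be a JSON object"]

    problems = [f"missing field: {key}" for key in REQUIRED_TOP_LEVEL if key not in doc]

    endpoints = doc.get("endpoints", [])
    if not isinstance(endpoints, list):
        problems.append("endpoints must be a list")
        endpoints = []
    for i, ep in enumerate(endpoints):
        # Each endpoint must name its protocol and the address it is served at.
        if not isinstance(ep, dict) or not ep.get("protocol") or not ep.get("url"):
            problems.append(f"endpoint {i} needs 'protocol' and 'url'")

    if "capabilities" in doc and not doc["capabilities"]:
        problems.append("capabilities list is empty")
    return problems

# Hypothetical registration file used only for illustration.
EXAMPLE = json.dumps({
    "name": "example-agent",
    "description": "Audits Solidity code",
    "image": "https://agent.example/avatar.png",
    "endpoints": [{"protocol": "A2A", "url": "https://agent.example/a2a"}],
    "capabilities": ["code-auditing"],
})

if __name__ == "__main__":
    print(validate_registration(EXAMPLE))
    print(validate_registration("{}"))
```

A check like this only covers structure; it says nothing about whether the declared endpoints or capabilities are truthful.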

Self-declaration alone is clearly insufficient to establish trust, so ERC-8004 introduces a domain verification mechanism. The agent must host a signature file under its declared domain, at the path /.well-known/agent-card.json. The on-chain registry verifies this link, thereby binding the on-chain AgentID to the corresponding DNS domain. This step prevents phishing and impersonation attacks: an agent cannot arbitrarily claim to belong to a domain but must prove control of it with a cryptographic signature.

Audit Key Points:

● Check the access control of the setTokenURI function, ensuring only the agent owner or an authorized role (e.g., onlyOwnerAfterMint) can update the URI.

● Review whether the URI supports immutable storage schemes (e.g., IPFS, Arweave). If centralized HTTP links are used, assess the security of domain control rights to prevent DNS hijacking.

● Verify the validity of the URI format to avoid contract exceptions caused by empty or malformed URIs.

● Verify that the contract strictly enforces cryptographic signature verification (e.g., EIP-712) when checking domain signatures, preventing signature forgery or replay attacks.

● Check the expiration mechanism for domain ownership proofs to prevent long-valid signatures from being reused.

● Ensure the domain resolution process does not rely on centralized oracles, avoiding single points of failure or manipulation.
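The replay and expiration checks in the list above can be modeled off-chain before reading the contract code. The sketch below is a simplified Python model, not ERC-8004's actual logic: `DomainProof`, `ProofChecker`, the nonce field, and the 30-day lifetime cap are all hypothetical, and signature verification is reduced to a boolean passed in by the caller.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class DomainProof:
    agent_id: int
    domain: str
    nonce: int        # hypothetical anti-replay value included in the signed payload
    expires_at: int   # unix seconds

@dataclass
class ProofChecker:
    max_lifetime: int = 30 * 24 * 3600          # assumed cap: 30 days
    used: set = field(default_factory=set)      # (agent_id, domain, nonce) already consumed

    def accept(self, proof: DomainProof, now: int, signature_ok: bool) -> bool:
        if not signature_ok:                    # signature check is out of scope here
            return False
        if proof.expires_at <= now:             # expired proof
            return False
        if proof.expires_at - now > self.max_lifetime:
            return False                        # proof is valid for too long
        key = (proof.agent_id, proof.domain, proof.nonce)
        if key in self.used:                    # replayed proof
            return False
        self.used.add(key)
        return True
```

When auditing, each `return False` branch corresponds to a revert that should exist in the contract; a missing branch is a finding.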

2. Reputation Registry

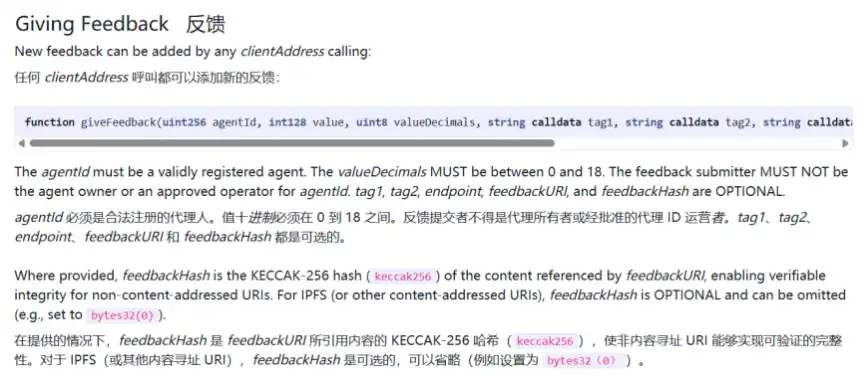

A standard interface for publishing and obtaining feedback signals. Scoring and aggregation happen both on-chain (for composability) and off-chain (for complex algorithms), enabling an ecosystem of professional services like agent scoring, audit networks, and insurance pools.

The Reputation Registry is used to provide feedback on and evaluations of registered AI Agents. Simple feedback is submitted on-chain, while more complex feedback can be aggregated off-chain before being recorded on-chain.

ERC-8004 also incorporates a "Payment-Proof Linking" mechanism to prevent malicious review spamming. When Agent A has finished evaluating Agent B, it calls the giveFeedback function. Besides a score (0-100) and a comment hash, the function accepts a paymentProof field, typically the hash of an x402 transaction. Because each review must then be backed by a real payment, spamming reviews becomes expensive, which significantly reduces the feasibility of Sybil attacks. Over time, the system rewards agents with stable, high-quality performance.
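To make the intended invariants concrete, here is a small Python model of the feedback flow. It is a sketch under stated assumptions rather than the contract's real interface: it treats the payment proof as mandatory, models it as a 32-byte hash, and the class and method names (`ReputationModel`, `give_feedback`) are hypothetical.

```python
class ReputationModel:
    """Toy model of giveFeedback: bounded score, one review per payment proof."""

    def __init__(self) -> None:
        self.used_proofs: set[bytes] = set()
        self.feedback: dict[int, list[tuple[str, int, bytes]]] = {}

    def give_feedback(self, reviewer: str, agent_id: int, score: int,
                      comment_hash: bytes, payment_proof: bytes) -> None:
        if not isinstance(score, int) or not 0 <= score <= 100:
            raise ValueError("score must be an integer in 0..100")
        if len(payment_proof) != 32:            # assumed: proof is a 32-byte tx hash
            raise ValueError("payment proof must be 32 bytes")
        if payment_proof in self.used_proofs:   # one payment, one review
            raise ValueError("payment proof already used")
        self.used_proofs.add(payment_proof)
        self.feedback.setdefault(agent_id, []).append((reviewer, score, comment_hash))
```

Note that this model only checks the proof's shape and uniqueness. Whether the hash refers to a real payment between the two parties is a separate question the audit has to answer.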

Audit Key Points:

● Verify that the giveFeedback function mandates the paymentProof parameter and check if this parameter is a valid x402 transaction hash (or conforms to other payment standards). Ensure payment proofs cannot be reused (e.g., record used hashes) to prevent multiple reviews from a single payment.

● Check if the scoring range (0-100) is enforced at the contract level to avoid out-of-bounds scores disrupting aggregation logic.

● Evaluate the anti-manipulation properties of the off-chain aggregation algorithm: e.g., whether it uses medians, trims extreme values, or uses weighted averages, and whether it penalizes anomalous behavior (like a large number of reviews in a short time).

● Review slashing conditions for clarity and verifiability, e.g., whether they rely on on-chain evidence or fraud proofs submitted by third-party oracles.

● Ensure slashing logic does not contain centralized privileges (e.g., an admin can arbitrarily confiscate staked funds); slashing trigger conditions should be executed automatically solely by the smart contract.

● Test the lock-up period and conditions for staked fund withdrawal to prevent agents from urgently withdrawing funds just before facing slashing.
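For the off-chain aggregation check in the list above, it helps to see how much a few hostile reviews move different aggregates. The snippet below is an illustrative comparison using made-up scores; it is not the aggregation algorithm of any particular ERC-8004 deployment.

```python
from statistics import mean, median

def trimmed_mean(scores: list[int], trim_fraction: float = 0.1) -> float:
    """Mean after dropping the lowest and highest `trim_fraction` of the scores."""
    if not scores:
        raise ValueError("no scores")
    if not 0 <= trim_fraction < 0.5:
        raise ValueError("trim_fraction must be in [0, 0.5)")
    ordered = sorted(scores)
    k = int(len(ordered) * trim_fraction)   # number of scores dropped from each end
    kept = ordered[k:len(ordered) - k]
    return sum(kept) / len(kept)

# Eight honest reviews plus two zero-score attack reviews (made-up data).
honest = [80, 82, 85, 78, 81, 84, 79, 83]
attack = [0, 0]
scores = honest + attack

if __name__ == "__main__":
    print(mean(scores))                 # 65.2
    print(median(scores))               # 80.5
    print(trimmed_mean(scores, 0.2))    # 80.5
```

With two hostile reviews out of ten, the plain mean drops to 65.2, while the median and the 20% trimmed mean both stay at 80.5. An aggregation algorithm based on a plain average deserves closer scrutiny for this reason.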

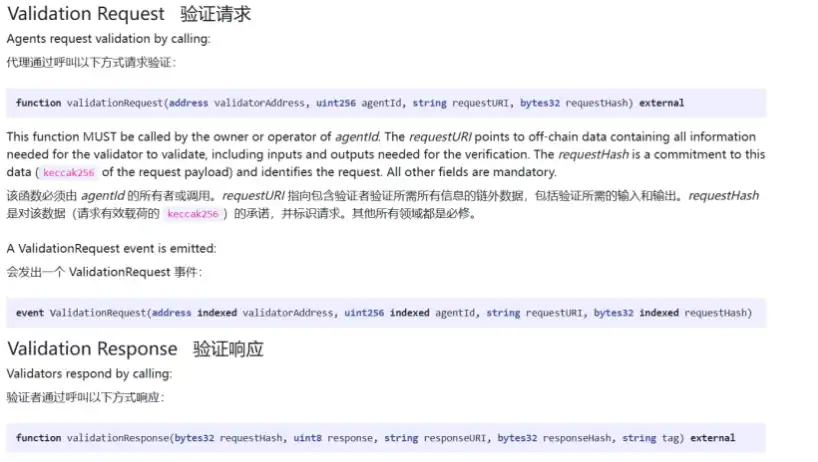

3. Validation Registry

A universal hook for requesting and recording checks by independent validators (e.g., zkML verifiers, TEE oracles, trusted judges).

Reputation reflects the past, but in high-risk scenarios (like large fund allocation), history alone is not enough. The Validation Registry allows agents to submit results for verification by third parties or automated systems, which can accept or reject them using methods such as stake-secured inference re-execution, zkML verifiers, or TEE oracles.

The first model is cryptoeconomic validation, based on game-theoretic security design. The agent must stake a certain amount of native token or stablecoin in the registry. If the agent fails to perform or provides incorrect results, the verifier network can submit a fraud proof, triggering the smart contract to automatically slash its staked funds. This model is suitable for tasks where results are easy to verify but the computation process is opaque, such as data scraping or simple API services.
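The stake, fraud-proof, and slashing lifecycle described above can be sketched as a small state machine. This is a simplified model for reasoning about audit cases, not the registry's implementation: `StakedTask`, the fixed challenge window, and the `proof_valid` flag (standing in for fraud-proof adjudication) are assumptions.

```python
from dataclasses import dataclass

@dataclass
class StakedTask:
    stake: int               # amount locked by the agent
    submitted_at: int        # unix seconds when the result was submitted
    challenge_window: int    # seconds during which fraud proofs are accepted
    slashed: bool = False
    withdrawn: bool = False

    def submit_fraud_proof(self, now: int, proof_valid: bool) -> bool:
        """Slash the stake if a valid proof arrives inside the window."""
        if self.slashed or self.withdrawn:
            return False
        if now >= self.submitted_at + self.challenge_window:
            return False     # window closed
        if not proof_valid:
            return False
        self.slashed = True
        return True

    def withdraw(self, now: int) -> int:
        """Return the stake only after the window has closed without slashing."""
        if self.slashed or self.withdrawn:
            return 0
        if now < self.submitted_at + self.challenge_window:
            return 0         # still locked: cannot exit ahead of a pending challenge
        self.withdrawn = True
        return self.stake
```

The property to look for in a real contract is the same as in this model: there should be no path by which the stake leaves while a fraud proof could still be accepted.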

The second model is cryptographic validation, based on mathematical security principles. One approach is TEE (Trusted Execution Environment) attestation, in which the agent runs in a security-hardened hardware environment such as Intel SGX or AWS Nitro Enclaves. The Validation Registry can store and verify remote attestation reports from the hardware, proving that the agent is indeed running a specific, untampered version of the code.

zkML (Zero-Knowledge Machine Learning) is another method of cryptographic validation. The agent submits a zero-knowledge proof along with the inference result. This proof can be verified at very low cost by an on-chain verification contract, mathematically guaranteeing that the output was indeed produced by a specific model (e.g., Llama-3-70B) on a specific input. This prevents "model swapping" attacks, where a service provider claims to use a high-end model but actually uses a lower-tier model to save costs.

Audit Key Points:

For cryptoeconomic validation, check:

● Review the submission window for fraud proofs: Is enough time given for verifiers to discover and submit proofs? A window that is too short might miss malicious behavior, while one that is too long locks funds for extended periods.

● Verify the adjudication logic for fraud proofs: Does it rely on a multi-signature verifier set? If so, review the decentralization level and threshold settings for verifier selection; if adjudication is fully on-chain, ensure the basis for judgment (e.g., on-chain verifiable results) exists and is unambiguous.

● Ensure the staked amount matches the risk, preventing low-cost malicious behavior (e.g., staking too little, where the profit from misbehavior far exceeds the loss).
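The stake-versus-risk check can be framed as an expected-value inequality. This is a simplified model, not something ERC-8004 specifies: assume a misbehaving agent gains $G$, that misbehavior is detected and slashed with probability $p$, and that the full stake $S$ is lost on detection while the gain is kept either way.

$$
\mathbb{E}[\text{payoff of cheating}] = G - pS < 0 \quad\Longleftrightarrow\quad S > \frac{G}{p}
$$

For example, if cheating on a task yields 1,000 USDC and the verifier network catches one in four fraudulent results ($p = 0.25$), the stake must exceed 4,000 USDC before cheating stops being profitable in expectation. A stake merely equal to the task value is therefore insufficient unless detection is near certain.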

For TEE attestation, check:

● Confirm that the contract verifies the timeliness of the TEE proof (e.g., via a timestamp or block height) to prevent expired proofs from being accepted.

● Verify that the proof content includes the agent's code hash and input/output digests, ensuring the proof is bound to the specific task and avoiding cross-task reuse.

● Evaluate if the TEE proof verification logic relies on external oracles (e.g., Intel IAS). If it does, audit the oracle's security and decentralization level.
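The freshness and task-binding checks above can be expressed compactly. The sketch below is a Python model with hypothetical names (`AttestationReport`, `check_report`, a 600-second maximum age); it deliberately omits verification of the hardware vendor's signature chain, which a real verifier must perform first.

```python
import hashlib
from dataclasses import dataclass

@dataclass(frozen=True)
class AttestationReport:
    code_hash: bytes     # measurement of the code running in the enclave
    task_digest: bytes   # binds the report to one input/output pair
    issued_at: int       # unix seconds

def task_digest(task_input: bytes, task_output: bytes) -> bytes:
    """Digest over the hashed input and hashed output of a single task."""
    h_in = hashlib.sha256(task_input).digest()
    h_out = hashlib.sha256(task_output).digest()
    return hashlib.sha256(h_in + h_out).digest()

def check_report(report: AttestationReport, expected_code_hash: bytes,
                 task_input: bytes, task_output: bytes,
                 now: int, max_age: int = 600) -> str:
    # NOTE: vendor signature verification is assumed to have happened already.
    if report.issued_at > now or now - report.issued_at > max_age:
        return "stale"
    if report.code_hash != expected_code_hash:
        return "wrong code"
    if report.task_digest != task_digest(task_input, task_output):
        return "wrong task"
    return "ok"
```

Hashing the input and output separately before combining them avoids ambiguity about where the input ends and the output begins.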

For zkML validation, check:

● Confirm the contract integrates an audited zk verification library (e.g., SnarkVerifier) and is correctly configured with verification keys for the specific proof system (e.g., Groth16, PLONK).

● Check if the verification contract restricts the models and input ranges applicable to the proof, preventing model swapping attacks (e.g., a proof generated for a small model is claimed to be from a large model).

● Evaluate the decentralization level of proof generation: Does it rely on a single prover? If multiple provers exist, a consensus mechanism is needed to prevent malicious provers.
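The model-swapping check above comes down to one question: is the claimed model tied to the verification key and to the proof's public inputs? The following Python sketch models that binding. All names are hypothetical, and `verify` is a caller-supplied stand-in for a real proof-system verifier; no actual zk verification is performed here.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class PublicInputs:
    model_commitment: bytes   # commitment to the model weights
    input_hash: bytes
    output_hash: bytes

def accept_proof(claimed_model: bytes,
                 public: PublicInputs,
                 proof: bytes,
                 verifying_keys: dict[bytes, bytes],
                 verify: Callable[[bytes, PublicInputs, bytes], bool]) -> bool:
    """Accept only if the claimed model has a registered key and the proof's
    public inputs commit to that same model."""
    vk = verifying_keys.get(claimed_model)
    if vk is None:
        return False          # no verification key registered for this model
    if public.model_commitment != claimed_model:
        return False          # proof was generated for a different model
    return verify(vk, public, proof)
```

If a contract looks up the verification key without also comparing the model commitment in the public inputs (or the reverse), a proof for a small model may pass as evidence for a large one.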

Conclusion

ERC-8004 provides a standard for building trust in AI Agents, and its security is crucial for the entire on-chain Agent ecosystem. Security audit work requires a deep understanding of the design intent and potential risks of the three registries. Furthermore, the complexity of cross-contract interactions and common vulnerabilities cannot be ignored. Comprehensive and rigorous audits are necessary to ensure ERC-8004 truly delivers on its "trustless" promise, laying a secure foundation for the future of autonomous agents.