Editor's Note: As "AI writing code" gradually becomes an industry consensus, what truly changes productivity is not the model itself, but how you set rules for the model, organize workflows, and embed it into a sustainable operating system.

From a simple CLAUDE.md file, to multi-agent collaboration, to an automated development loop, this method turns the development process from a "dialogue between humans and AI" into "managing an AI engineering team." Along the way, errors are constrained upfront, workflows are structured, and code generation, testing, and review are gradually taken over by the system rather than executed by hand.

More notably, the article also reveals a neglected detail: in long contexts and complex systems, model behavior is not entirely controllable. Hidden token consumption and diluted instructions can both silently degrade output quality. This makes "how to manage AI," rather than just "how to use AI," the new core competency.

At this point, developers no longer focus on coding but instead work around rule design, workflow scheduling, and result validation. Those who have completed this step first have already started shifting from "doing things personally" to "letting the system do things for them."

Below is the original text:

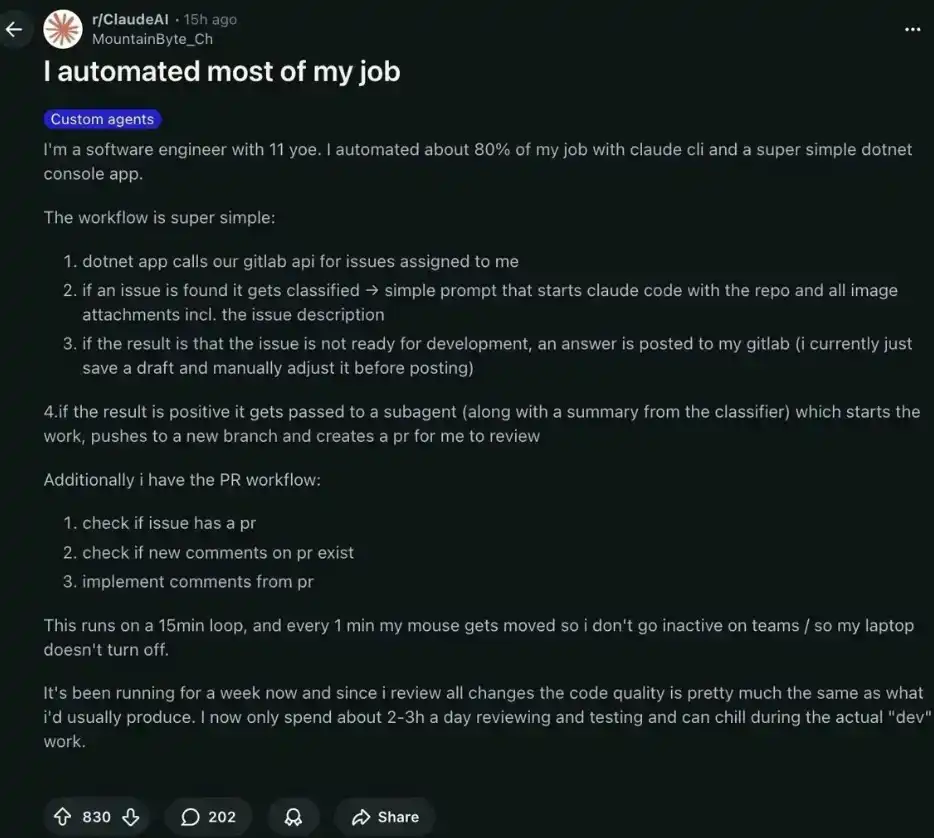

A Google engineer with 11 years of experience automated 80% of his work using Claude Code and a simple .NET application.

Now, he only works 2-3 hours a day instead of the original 8 hours. The rest of the time, he is basically in a "relaxed" state, with the system running on its own, generating $28,000 in passive income for him each month.

What he has mastered is exactly the set of methods you have not learned yet.

Part 1: Writing CLAUDE.md According to Karpathy's Principles

Andrej Karpathy, one of the world's most influential AI researchers, once systematically summarized the most common mistakes large language models make when writing code: over-engineering, ignoring existing patterns, and introducing dependencies that no one asked for.

Someone compiled these observations into a unified CLAUDE.md file.

The result: this project gained 15,000 stars on GitHub in a week. In a sense, it can be said that 15,000 people changed their way of working because of it.

The core idea is actually very simple: if errors are predictable, they can be prevented in advance with clear instructions. Just by placing a markdown file in the code repository, you can provide Claude Code with a complete set of structured behavioral rules, thereby unifying decision-making and execution across the entire project.

Inside this file, there are mainly four core principles:

· Think Before Coding → Avoid wrong assumptions and overlooked trade-offs

· Simplicity First → Avoid over-engineering and bloated abstractions

· Surgical Changes → Avoid modifying code that no one asked to change

· Goal-Driven Execution → Test first, then validate against clear success criteria

It doesn't rely on any framework, nor does it require complex tools—just one file can change Claude's behavior at the project level.
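As a rough illustration of how small such a file can be, the sketch below renders a starter CLAUDE.md from the four principles listed above. The principle names and goals come from the list; the section layout, the `PRINCIPLES` dictionary, and the `render_claude_md` helper are assumptions made for this example and are not the contents of the actual GitHub project.

```python
# Hypothetical starter generator. Only the principle names and their goals
# come from the article; the Markdown layout is an assumption.
PRINCIPLES = {
    "Think Before Coding": "Avoid wrong assumptions and overlooked trade-offs.",
    "Simplicity First": "Avoid over-engineering and bloated abstractions.",
    "Surgical Changes": "Avoid modifying code that no one asked to change.",
    "Goal-Driven Execution": "Test first, then validate against clear success criteria.",
}


def render_claude_md(principles: dict[str, str]) -> str:
    """Render one Markdown section per principle."""
    lines = ["# CLAUDE.md", ""]
    for name, goal in principles.items():
        lines += [f"## {name}", "", goal, ""]
    return "\n".join(lines)


if __name__ == "__main__":
    # Print the draft instead of writing it, so an existing file is never overwritten.
    print(render_claude_md(PRINCIPLES))
```

A real file would add project-specific rules under each heading; this only shows the skeleton.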

The real difference is:

· Without CLAUDE.md: Claude violates specifications about 40% of the time

· Using Karpathy's CLAUDE.md: Violation rate drops to about 3%

· Setup time: Only 5 minutes

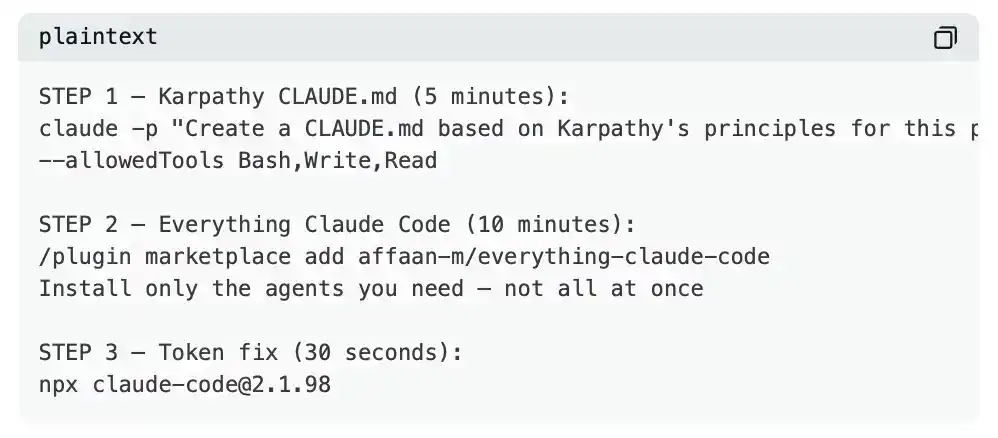

Command to automatically generate your own CLAUDE.md file:

claude -p "Read the entire project and create a CLAUDE.md based on:
Think Before Coding, Simplicity First, Surgical Changes, Goal-Driven Execution.
Adapt to the real architecture you see." --allowedTools Bash,Write,Read

It replaces the Claude that over-engineers simple tasks, introduces unrequested dependencies, and arbitrarily modifies files that shouldn't be touched.

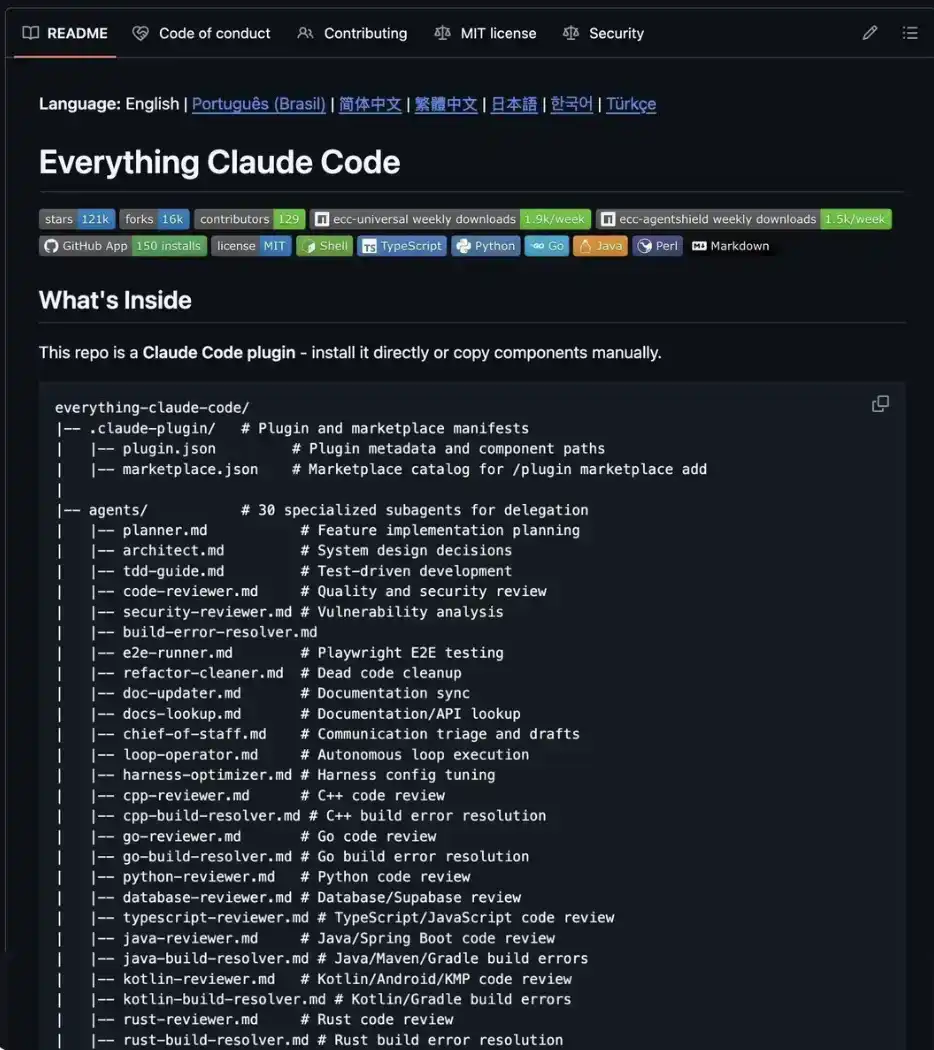

Part 2: Everything Claude Code, a Complete Engineering Team in a Repository

Everything Claude Code (Over 153,000 stars on GitHub)

This is not just a collection of prompts; it's more like a complete AI operating system for building products.

It runs on Claude, Codex, Cursor, OpenCode, Gemini, and more: one system, usable everywhere.

Installation:

/plugin marketplace add affaan-m/everything-claude-code

Or install manually—just copy the components you need into the project's .claude/ directory. Don't load everything at once—loading 27 agents and 64 skills simultaneously will likely exhaust your context allowance before you even input your first prompt. Only keep what you truly need.
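The manual route can be sketched as a small copy script that installs only a hand-picked list of files. This is a minimal sketch under assumptions: `install_components` is a hypothetical helper, and the relative paths you pass in (for example an `agents/` subfolder in a local clone) are guesses about the repository layout that you would need to check against the real repository.

```python
import shutil
from pathlib import Path


def install_components(source_root: Path, project_root: Path, wanted: list[str]) -> list[Path]:
    """Copy only the listed files (paths relative to source_root) into project_root/.claude/."""
    installed = []
    for relative in wanted:
        src = source_root / relative
        if not src.is_file():
            # Fail loudly rather than silently skipping a misspelled component.
            raise FileNotFoundError(f"component not found: {src}")
        dest = project_root / ".claude" / relative
        dest.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dest)
        installed.append(dest)
    return installed
```

Keeping the selection as an explicit list makes it easy to see, and to trim, what ends up in the project's .claude/ directory.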

The real difference is:

· Before: You were conversing with an AI

· After: You are managing an automatically running AI engineering team

It replaces: The weeks you would have spent building your own agent system, separately setting up different tools for planning/review/security, and the $200–500 monthly cost for various AI services.

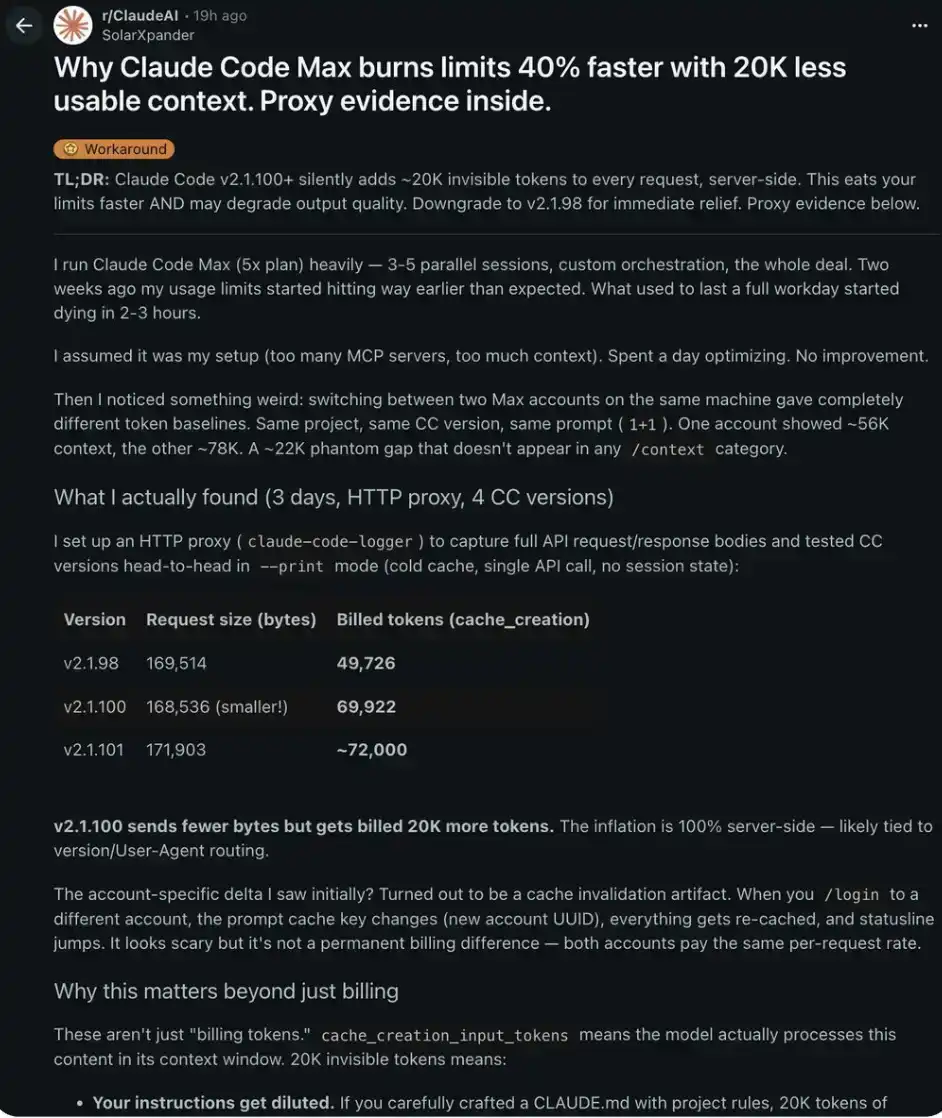

Part 3: A Hidden "Scandal": Claude Code v2.1.100 Is Quietly Consuming Your Tokens

Someone set up an HTTP proxy and intercepted and analyzed the complete API requests of 4 different versions of Claude Code.

They found:

v2.1.98: 169,514 bytes request → 49,726 tokens charged

v2.1.100: 168,536 bytes request → 69,922 tokens charged

difference: -978 bytes but +20,196 tokens

v2.1.100 sent fewer bytes but was billed about 20,000 more tokens. This "inflation" happens entirely on the server side: you can't see it, and you can't verify it through the /context command.
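The figures above can be checked with a few lines of arithmetic. The sketch below uses only the four numbers reported for the two versions; the variable names are made up for this example.

```python
# Request size and billed tokens as reported in the article for two versions.
measurements = {
    "v2.1.98": {"request_bytes": 169_514, "billed_tokens": 49_726},
    "v2.1.100": {"request_bytes": 168_536, "billed_tokens": 69_922},
}

old, new = measurements["v2.1.98"], measurements["v2.1.100"]
byte_delta = new["request_bytes"] - old["request_bytes"]
token_delta = new["billed_tokens"] - old["billed_tokens"]
relative_increase = token_delta / old["billed_tokens"]

print(byte_delta)                  # -978
print(token_delta)                 # 20196
print(f"{relative_increase:.1%}")  # 40.6%
```

The relative increase of roughly 40% in billed tokens is consistent with the "about 40% faster" consumption figure quoted for Claude Max.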

Why this matters beyond billing: these extra 20,000 tokens are stuffed into Claude's actual context window.

This means:

→ Your CLAUDE.md instructions get diluted by these 20,000 "hidden contents"

→ Output quality degrades faster in long conversations

→ When Claude ignores your rules, it's hard to find the cause

→ Claude Max usage allowance is consumed about 40% faster than normal

The fix takes only 30 seconds: roll back to v2.1.98 with npx [email protected]

This is a temporary solution before Anthropic officially fixes the issue, but in practice, you can almost immediately feel the change in session effectiveness.

It replaces: guessing why Claude suddenly stops following your instructions.

Case Study: What a Complete Automated System Looks Like

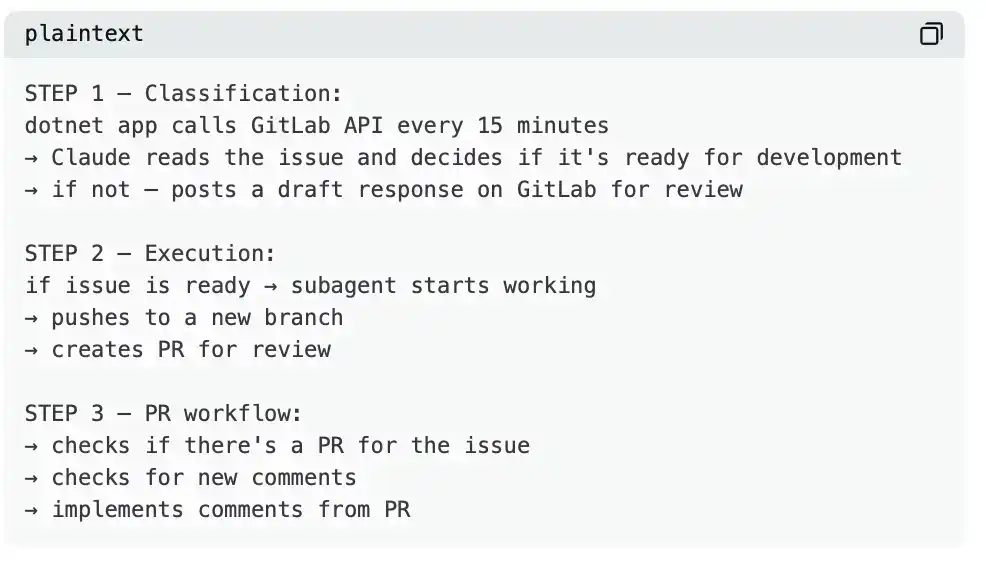

An engineer with 11 years of experience built a system consisting of three parts: a CLAUDE.md file, the right agents, and a loop that runs every 15 minutes.

Results after one week:

· Before: 8 hours a day writing code

· After: Only 2–3 hours a day doing code review and testing

· Code quality: Basically unchanged, because he reviews every change

· Teams status: Always online—mouse moves automatically every minute

· Remaining time: Free to use all day

This isn't some "magic"; it's the result of CLAUDE.md + the right agents + a loop mechanism running every 15 minutes.
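The loop part can be sketched as a plain scheduler that calls Claude Code in non-interactive mode on a fixed interval. This is a minimal sketch under assumptions, not the engineer's actual setup (which the article describes only as a .NET application): the prompt text, the `run_loop` and `build_command` helpers, and the injectable `run` and `sleep` parameters are all invented for this example. The only thing taken from the article is the `claude -p` invocation style and the 15-minute cadence.

```python
import subprocess
import time

INTERVAL_SECONDS = 15 * 60  # the article's 15-minute cadence


def build_command(prompt: str) -> list[str]:
    # `claude -p` runs a single non-interactive prompt, as in the command shown earlier.
    return ["claude", "-p", prompt]


def run_loop(prompt: str, iterations: int, run=subprocess.run, sleep=time.sleep) -> list[int]:
    """Run the prompt `iterations` times, waiting INTERVAL_SECONDS between runs.

    `run` and `sleep` are injectable so the loop can be dry-run without
    calling the real CLI or actually waiting.
    """
    exit_codes = []
    for i in range(iterations):
        result = run(build_command(prompt), check=False)
        exit_codes.append(result.returncode)
        if i < iterations - 1:
            sleep(INTERVAL_SECONDS)
    return exit_codes
```

A call such as `run_loop("Pick the next open task and open a pull request", iterations=4)` would cover one hour; the prompt here is purely illustrative, and the human review step described above still happens outside the loop.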

Complete checklist:

What you gain after reading this:

· Before: Claude violated existing specifications 40% of the time

· After: Using Karpathy's CLAUDE.md, violation rate dropped to 3%

· Before: You needed weeks to build agents

· After: 27 agents ready to use out-of-the-box

· Before: Claude Max exhausted its allowance in 2–3 hours

· After: Downgrading to v2.1.98 recovers about 40% of the usage limit

· Before: Needed 8 hours a day to write code

· After: Only 2–3 hours for review, the rest runs automatically

· Setup time: 15–20 minutes

· Daily savings: 5–6 hours

· Monthly savings: 100–120 hours

If your time is worth $30 per hour, you are "invisibly losing" $3,000–3,600 every month.

If it's $100 per hour, that's $10,000–12,000 slipping away every month, just because you're still manually writing code that Claude could have written for you.
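The savings figures follow from simple multiplication. The sketch below reproduces them, assuming 20 working days per month; that number is not stated in the article but is implied by 5–6 hours a day becoming 100–120 hours a month. The `monthly_value` helper is hypothetical.

```python
WORKING_DAYS_PER_MONTH = 20  # assumption: implied by 5-6 h/day -> 100-120 h/month


def monthly_value(hours_saved_per_day: float, hourly_rate: float) -> float:
    """Dollar value of the hours saved in one month at the given hourly rate."""
    return hours_saved_per_day * WORKING_DAYS_PER_MONTH * hourly_rate


for rate in (30, 100):
    low, high = monthly_value(5, rate), monthly_value(6, rate)
    print(f"${rate}/h: ${low:,.0f} to ${high:,.0f} per month")
# $30/h: $3,000 to $3,600 per month
# $100/h: $10,000 to $12,000 per month
```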

Most developers will never reach this level—not because they can't, but because they think it's complicated. In reality, between you and "full automation," there are only three commands and one file.

The engineer I mentioned at the beginning isn't some genius, nor is he a senior engineer from Google. He just spent one evening setting up the system—since then, the system does the work, and he lives his life.

You can do the same thing tonight. While others are still arguing whether AI will replace developers, those who have already set up the system are just collecting money and relaxing.

The choice is clear. You are building your own life—so choose the right path.