Every time prediction markets get caught up in controversy, we circle the same question without ever truly confronting it:

Are prediction markets really about truth?

Not accuracy, not utility, not whether they outperform polls, journalists, or trends on social media. But truth itself.

Prediction markets price events that haven't happened yet. They are not reporting facts; they are assigning probabilities to a future that is still open, uncertain, and unknowable. Somehow, we've started treating these probabilities as a form of truth.

For most of the past year, prediction markets have been taking a victory lap.

They beat the polls, they beat cable news, they beat experts with PhDs and PowerPoints. During the 2024 U.S. election cycle, platforms like Polymarket reflected reality faster than almost any mainstream forecasting tool. This success solidified into a narrative: prediction markets are not only accurate, but superior—a purer way to aggregate truth, a more genuine signal of people's beliefs.

Then, January arrived.

A new account appeared on Polymarket and bet around $30,000 that Venezuelan President Nicolás Maduro would be ousted by the end of the month. At the time, the market priced that outcome at a single-digit probability. It looked like a bad trade.

A few hours later, U.S. forces arrested Maduro and flew him to New York to face criminal charges. The account closed its position, profiting over $400,000.

The market was right.

And that's exactly the problem.

People often tell a comforting story about prediction markets:

Markets aggregate dispersed information. People with different views back their beliefs with money. As evidence accumulates, prices move. The crowd gradually converges on the truth.

This story assumes one crucial thing: that the information entering the market is public, noisy, and probabilistic, like tightening polls, candidate gaffes, shifting storm tracks, or missed earnings.

But the Maduro trade wasn't like that. It felt less like reasoning and more like precise timing.

At that moment, prediction markets stopped looking like a clever forecasting tool and started to seem like something else: a place where proximity trumps insight, and access trumps interpretation.

If a market is accurate because someone knows something the rest of the world does not and cannot know, then the market isn't discovering truth; it's monetizing information asymmetry.

This distinction matters far more than the industry is willing to admit.

Accuracy Can Be a Warning Sign

When faced with criticism, prediction market proponents often repeat the same line: if there's insider trading, the market reacts earlier, which helps everyone else. In this telling, insider trading speeds up the emergence of truth.

This argument sounds clean in theory, but in practice, its logic unravels.

If a market is accurate because it incorporates leaked military operations, classified intelligence, or internal government timelines, then it is no longer an information market in any publicly meaningful sense. It becomes a shadow venue for trading secrets. There is a fundamental difference between rewarding better analysis and rewarding proximity to power. Markets that blur this line will eventually attract regulatory scrutiny—not because they aren't accurate enough, but precisely because they are *too* accurate in the wrong way.

"They made over $100k a day on the Maduro play. I've seen this pattern too many times to doubt it: insiders always win. Polymarket just makes it easier, faster, more visible. Wallet 0x31a5 turned $34k into $410k in 3 hours."
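The arithmetic behind a payoff like this is simple. Below is a minimal sketch that assumes a standard binary contract paying $1 per share if the outcome occurs and $0 otherwise, and ignores fees and slippage. A stake buys `stake / price` shares, so a low entry price multiplies the payout. The function names are hypothetical. The only inputs are the figures quoted above, which imply an average entry price of roughly 8 cents, consistent with a single-digit probability.

```python
def binary_payout(stake: float, entry_price: float, exit_price: float = 1.0) -> float:
    """Value of a YES position in a binary contract that pays $1 per share.

    stake       -- dollars spent buying YES shares
    entry_price -- average price paid per share, read as the market's
                   implied probability at entry (must be between 0 and 1)
    exit_price  -- price per share when the position is closed; 1.0 means
                   the contract resolved YES (fees and slippage ignored)
    """
    if not 0 < entry_price < 1:
        raise ValueError("entry_price must be strictly between 0 and 1")
    shares = stake / entry_price
    return shares * exit_price


def implied_entry_price(stake: float, final_value: float) -> float:
    """Average entry price implied by a stake and its value at $1 per share."""
    return stake / final_value


# Hypothetical illustration using the figures in the quote above.
price = implied_entry_price(34_000, 410_000)
print(f"implied entry price: {price:.3f}")
print(f"value at resolution: {binary_payout(34_000, price):,.0f}")
print(f"multiple on stake: {410_000 / 34_000:.1f}x")
```

The same formula shows why the price reads as a probability. A buyer at 8 cents collects roughly twelve times their stake if they are right, so paying that price makes sense only if they think the event is at least about 8% likely. An insider who already knows the outcome faces no such uncertainty.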

What's unsettling about the Maduro incident isn't just the scale of the payoff, but the context in which these markets are exploding.

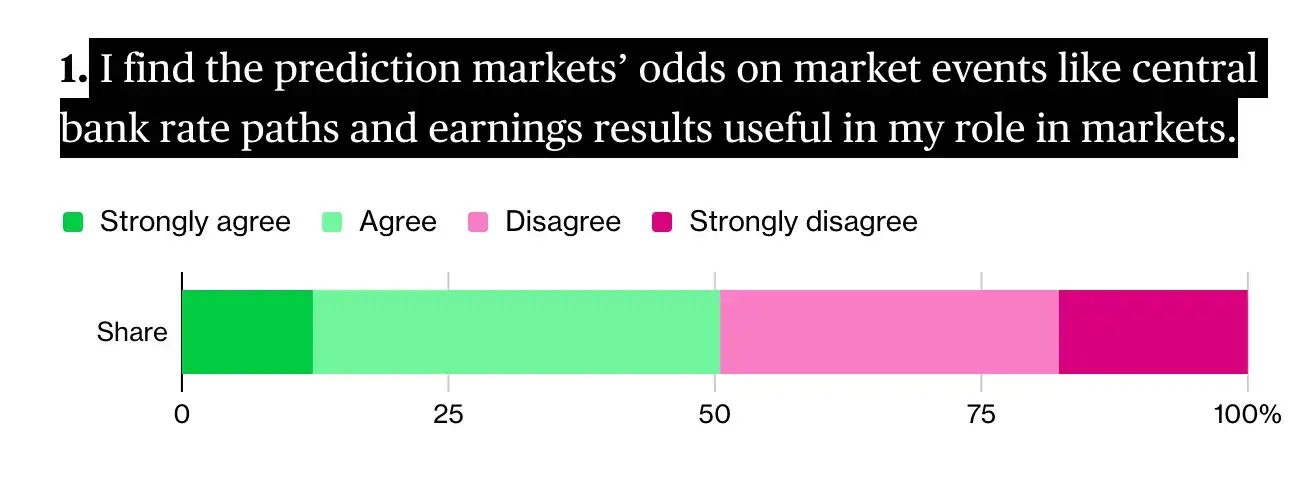

Prediction markets have evolved from fringe novelties into a financial ecosystem in their own right, one that Wall Street takes seriously. According to a Bloomberg Markets survey last December, traditional traders and financial institutions see prediction markets as financial products with staying power, though they also acknowledge that these platforms expose the blurry line between gambling and investing.

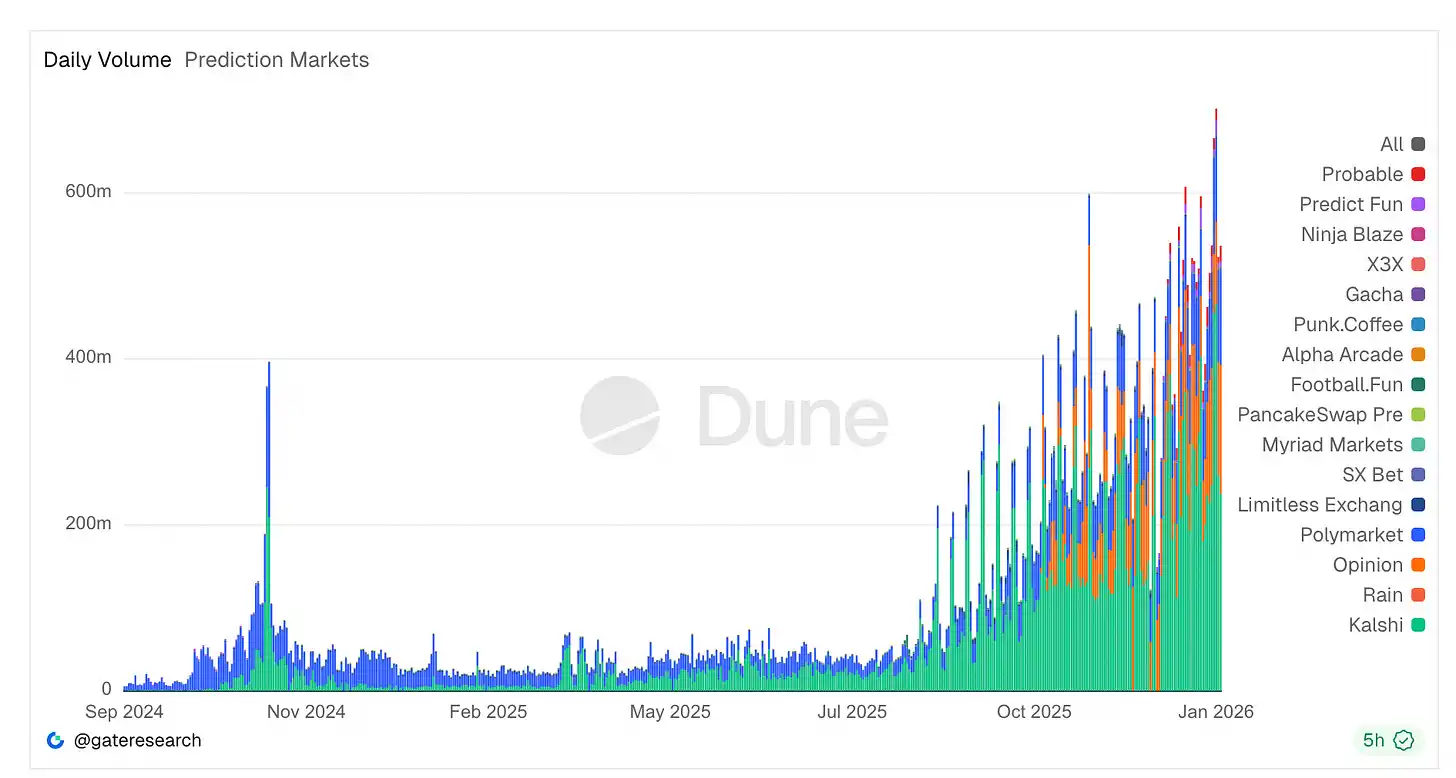

Trading volume is surging. Platforms like Kalshi and Polymarket now handle tens of billions of dollars in annual notional volume. Kalshi alone processed nearly $24 billion in 2025, and daily records keep being broken as political and sports contracts attract liquidity on an unprecedented scale.

Despite the scrutiny, daily trading volume hit an all-time high of around $700 million. Regulated platforms like Kalshi dominate volume, while crypto-native platforms remain culturally central. New terminals, aggregators, and analytics tools appear every week.

This growth has also attracted heavyweight financial capital. Intercontinental Exchange, the owner of the New York Stock Exchange, committed up to $2 billion in strategic investment in Polymarket's corporate entity at a valuation of around $9 billion. The deal signals Wall Street's belief that these markets can compete with traditional trading venues.

Yet this boom is colliding with regulatory and ethical ambiguity. Early on, Polymarket was barred from the U.S. market for operating unregistered and paid a $1.4 million CFTC fine. It only recently regained conditional U.S. approval. Meanwhile, following the Maduro payoff, lawmakers like Rep. Ritchie Torres have introduced bills aimed specifically at banning government insiders from trading. They argue that the timing of such bets looks more like front-running than informed speculation.

Still, despite legal, political, and reputational pressure, participation hasn't declined. In fact, prediction markets are expanding beyond sports betting into areas such as corporate earnings metrics. Traditional gambling firms and hedge fund desks now employ specialists to exploit arbitrage and pricing inefficiencies.

Taken together, these developments suggest prediction markets are no longer on the fringe. They are deepening ties to financial infrastructure, attracting professional capital, and spurring new laws, all while their core mechanism remains fundamentally a bet on an uncertain future.

The Overlooked Warning: The Zelensky Suit Incident

If the Maduro incident exposed the insider problem, the Zelensky suit market revealed a deeper issue.

In mid-2025, Polymarket opened a market on whether Ukrainian President Volodymyr Zelensky would wear a suit by July. It drew massive volume: hundreds of millions of dollars. It looked like a joke market and turned into a governance crisis.

Zelensky appeared in a black coat and trousers by a well-known menswear designer. The media called it a suit. Fashion experts called it a suit. Anyone with eyes could see what happened.

But the oracle vote ruled: not a suit.

Why?

Because a few large token holders had bet heavily on the opposite outcome and held enough voting power to push through a resolution in their favor. Buying the oracle cost less than what they stood to win.

This wasn't a failure of the decentralized ideal but a failure of incentive design. The system worked exactly as its rules were written: a human-led oracle is only as honest as lying is expensive. In this case, lying was clearly cheaper.
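The "cost of lying" argument reduces to a single comparison. Below is a deliberately simplified sketch that assumes a vote-based oracle in which whoever controls enough voting tokens decides the outcome. A holder who has bet on the false outcome gains from corrupting the vote whenever their winnings exceed the cost of buying the votes they lack plus any expected penalty. The class, its fields, and the numbers in the usage lines are all hypothetical. Real oracle designs add mechanisms such as dispute bonds and voter penalties that this model folds into a single `penalty` term.

```python
from dataclasses import dataclass


@dataclass
class OracleAttack:
    """Hypothetical parameters for a large holder deciding whether to corrupt a vote."""
    position_payout: float   # extra winnings if the false outcome is certified
    votes_needed: float      # voting tokens required to control the result
    votes_held: float        # voting tokens the attacker already controls
    token_price: float       # market price of one voting token
    penalty: float = 0.0     # expected loss from slashing, bans, or token price drop

    def cost_of_lying(self) -> float:
        """Cost of buying the missing votes plus any expected penalty.

        Simplification: purchased tokens are treated as fully sunk, even
        though an attacker could try to resell them afterward.
        """
        missing = max(0.0, self.votes_needed - self.votes_held)
        return missing * self.token_price + self.penalty

    def lying_pays(self) -> bool:
        """True when corrupting the resolution is the profitable move."""
        return self.position_payout > self.cost_of_lying()


# Hypothetical numbers, not the actual Zelensky market.
cheap = OracleAttack(position_payout=5_000_000, votes_needed=10_000_000,
                     votes_held=6_000_000, token_price=0.5)
costly = OracleAttack(position_payout=5_000_000, votes_needed=10_000_000,
                      votes_held=6_000_000, token_price=0.5, penalty=4_000_000)
print(cheap.cost_of_lying(), cheap.lying_pays())
print(costly.cost_of_lying(), costly.lying_pays())
```

Under this model the designer's job is to keep `cost_of_lying()` above the largest payout any voter could capture. That gets harder as market size grows while the value of the voting token does not.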

It's easy to dismiss these events as edge cases, growing pains, or temporary glitches on the way to a more perfect prediction system. But I think that reading is wrong. These aren't accidents. They are the inevitable result of three elements combining: financial incentives, ambiguously worded rules, and immature governance mechanisms.

Prediction markets don't discover truth; they arrive at a settlement outcome.

What matters isn't what most people believe, but what the system ultimately deems a valid result. That determination often sits at the intersection of semantic interpretation, power, and money. And when significant money is involved, that intersection gets crowded quickly.

Once you understand this, controversies like these cease to be surprising.

Regulation Doesn't Come from Nowhere

The legislative response to the Maduro trade was predictable. A bill moving through Congress would ban federal officials and staff from trading on political prediction markets while possessing material non-public information. This isn't radical; it's a basic rule.

The stock market figured this out decades ago. That government officials shouldn't profit from privileged access to state power is not a controversial view. Prediction markets are only discovering it now because they've insisted on pretending to be something else.

I think we've overcomplicated this.

Prediction markets are places where people bet on outcomes that haven't happened yet. If the event goes their way, they make money; if not, they lose it. Everything else we say about them is post hoc framing.

It doesn't become something else just because the interface is cleaner or the odds are expressed as probabilities. It doesn't become more serious just because it runs on a blockchain or economists find the data interesting.

The incentives are what matter. You get paid not for having insight, but for correctly predicting what happens next.

What I find unnecessary is our persistent effort to make this activity sound nobler. Calling it prediction or information discovery doesn't change the risk you're taking or why you're taking it.

To some extent, we seem reluctant to admit something simple: people want to bet on the future.

Yes, they do. And that's fine.

But we should stop pretending it's anything else.

The growth of prediction markets is fundamentally driven by demand for betting on 'narratives'—whether elections, wars, cultural events, or reality itself. This demand is real and enduring.

Institutions use these markets to hedge uncertainty, retail traders use them to act on their beliefs or for entertainment, and the media treats them as a barometer. None of this requires dressing up the activity.

In fact, it's this disguise that creates friction.

When platforms brand themselves as 'truth machines' and claim the moral high ground, every controversy feels like an existential crisis. When a market settles in a disturbing way, the event gets elevated into a philosophical dilemma, rather than what it is—a dispute over settlement terms in a high-stakes betting product.

This misalignment of expectations stems from the dishonesty of the narrative itself.

I'm not against prediction markets.

They are one relatively honest way humans express belief amidst uncertainty, often surfacing unsettling signals faster than polls. They will continue to grow.

But we do ourselves a disservice by romanticizing them into something loftier. They are not epistemological engines; they are financial instruments tied to future events. Recognizing this distinction is what will make them healthier: clearer regulation, sharper ethics, and more sensible design will all follow from it.

Once you admit you're operating a betting product, you stop being surprised when betting behavior shows up.