ByteDance's Volcano Engine recently added GLM-5.1 to its Coding Plan, describing the release in its official statement as "aligned with the original full capabilities, no purchase limits." Until then, the Coding Plan had long offered only older models such as GLM-4.7. The update brought in not only GLM-5.1 but also several of the latest domestic large models, including MiniMax M2.7, Kimi K2.6, and DeepSeek-V3.2.

This means developers can call several leading models under a single subscription fee. Market feedback indicates that this "bundled model" significantly lowers developers' trial-and-error costs. The Lite plan currently costs 40 yuan per month and the Pro plan 200 yuan per month, which has left many developers willing to "buy a spot first."

Zhipu's GLM-5.1 itself showed impressive engineering capabilities in an update in early April 2026. Two official videos released by Zhipu, "Building a Linux Desktop from Scratch in 8 Hours" and "655 Iterations, Increasing Query Throughput of the Vector Database to 6.9 Times the Initial Official Version," reshaped public expectations of what "8 hours of effective execution" by a large model can look like.

Journalist's On-the-Ground Visit to Developer Community: Majority of Users Report "Not Durable"

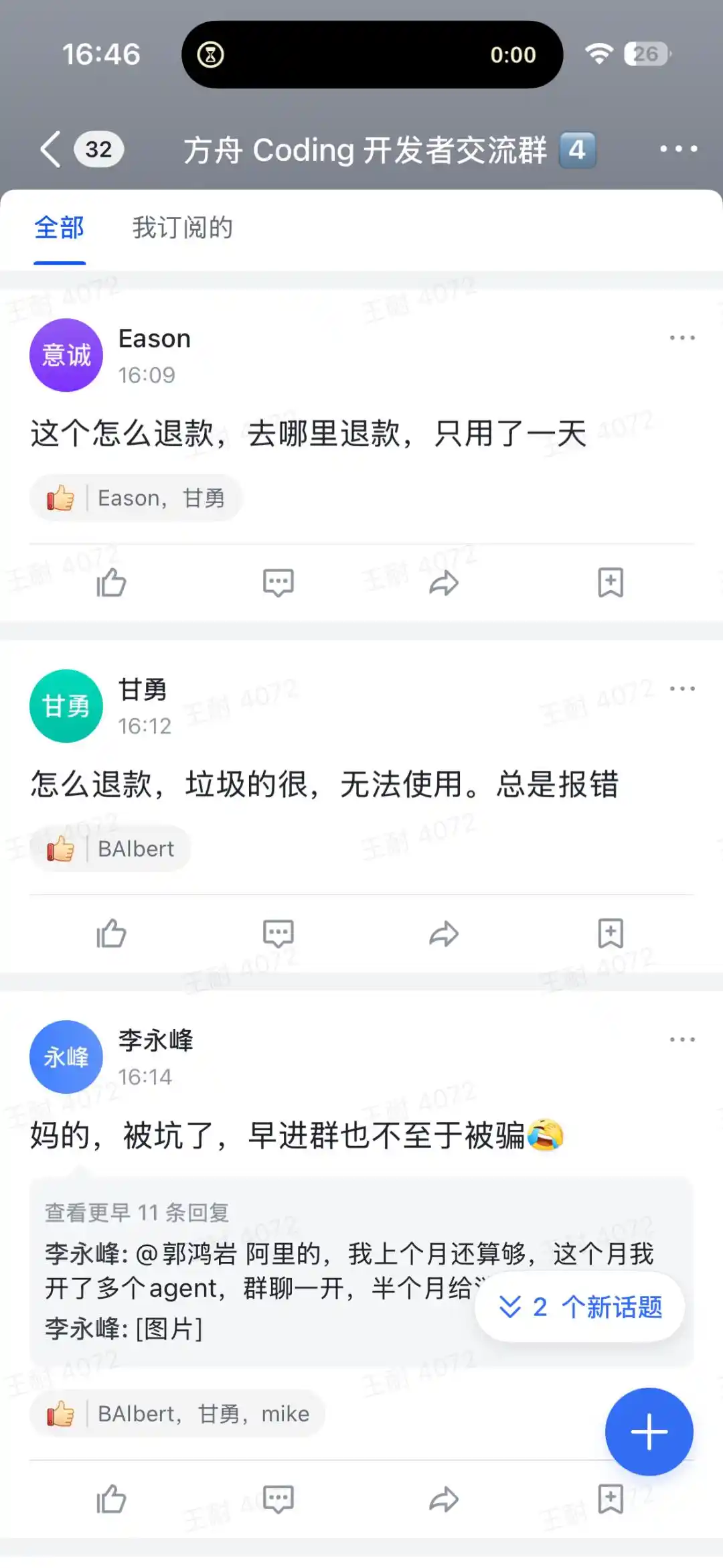

After joining a Volcano Coding developer exchange group, the journalist found that, alongside posts sharing usage feedback, a large number of users reported a gap between expectations and actual experience. A few pages of scrolling turned up numerous posts complaining and requesting refunds, with many users saying they "felt cheated."

The complaints center on two points:

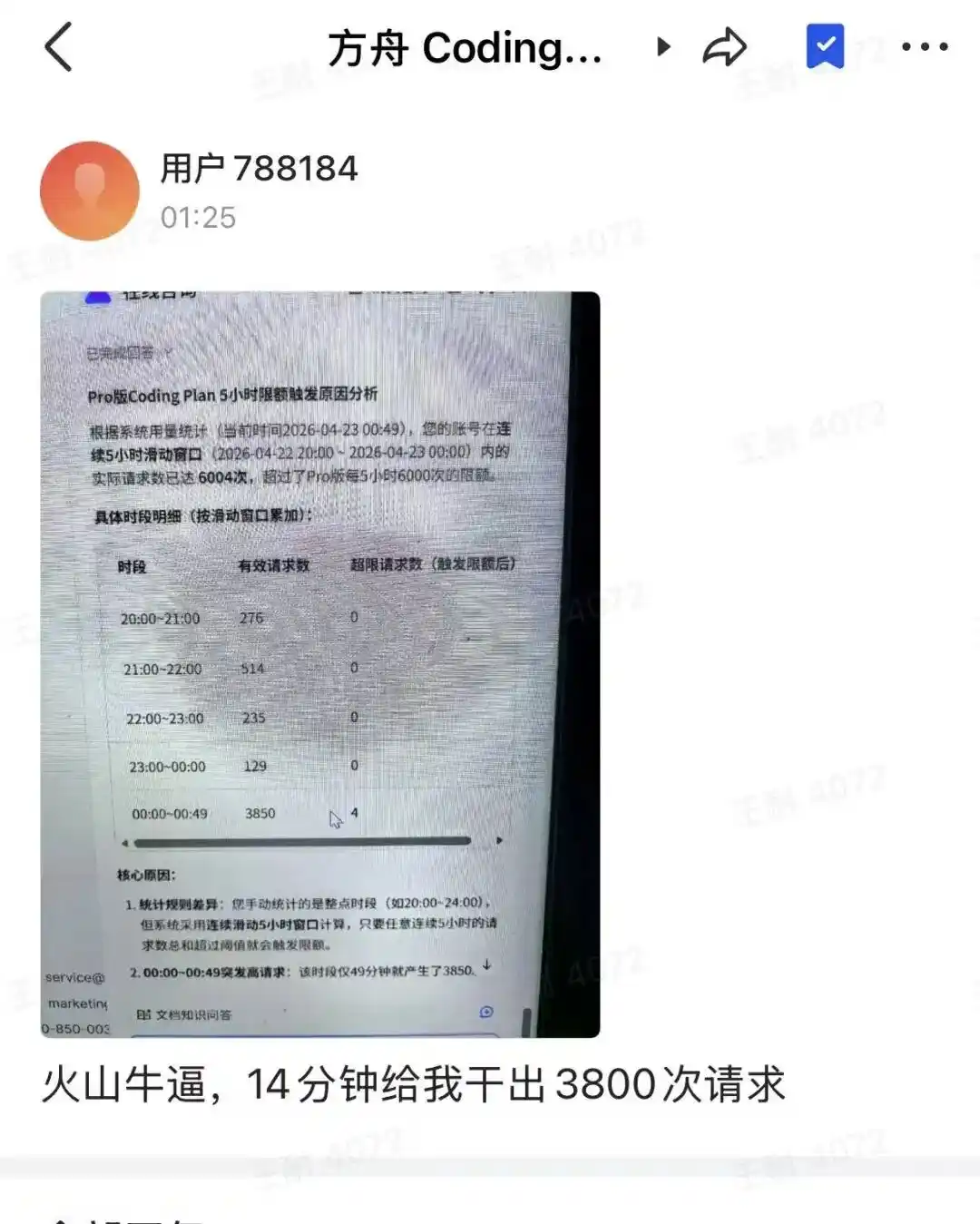

The first is that usage limits are consumed too quickly. A user named "Hakimi" posted that "a few rounds of dialogue in one task and the 5-hour limit is almost used up." Another user explained why their "5-hour limit was triggered": the account is metered on a continuous 5-hour sliding window, and the actual number of requests within that window had exceeded 6004, surpassing the system limit.

The second is the decline in experience due to computational resource scheduling pressure. Many users reported encountering 429 errors (too many requests) and "first-character delays of over one minute during peak hours being the norm." One user bluntly stated: "The 5-hour limit triggers too frequently, making it unusable for serious development."
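The "5-hour sliding window" that users describe can be illustrated with a minimal sketch. This is not Volcano Engine's implementation; the class name, the tiny limit of 3, and the timestamps are all assumptions chosen only to show how a rolling window rejects requests once the count inside the window reaches the cap, and frees capacity as old requests age out.

```python
from collections import deque

WINDOW_SECONDS = 5 * 60 * 60  # the 5-hour window users describe


class SlidingWindowQuota:
    """Hypothetical rolling-window request counter (illustration only)."""

    def __init__(self, limit, window_seconds=WINDOW_SECONDS):
        self.limit = limit                  # assumed cap; the real value is not public here
        self.window_seconds = window_seconds
        self._timestamps = deque()          # times of accepted requests, oldest first

    def allow(self, now):
        # Drop requests that have aged out of the window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.limit:
            return False  # a real service would typically answer HTTP 429 here
        self._timestamps.append(now)
        return True


quota = SlidingWindowQuota(limit=3)  # tiny assumed limit for illustration
results = [quota.allow(t) for t in (0, 60, 120, 180)]  # fourth request is rejected
later = quota.allow(WINDOW_SECONDS + 1)  # first request has aged out, so this passes
```

Under such a scheme the quota never resets all at once; capacity returns gradually, which may be why heavy agentic sessions keep hitting the limit.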

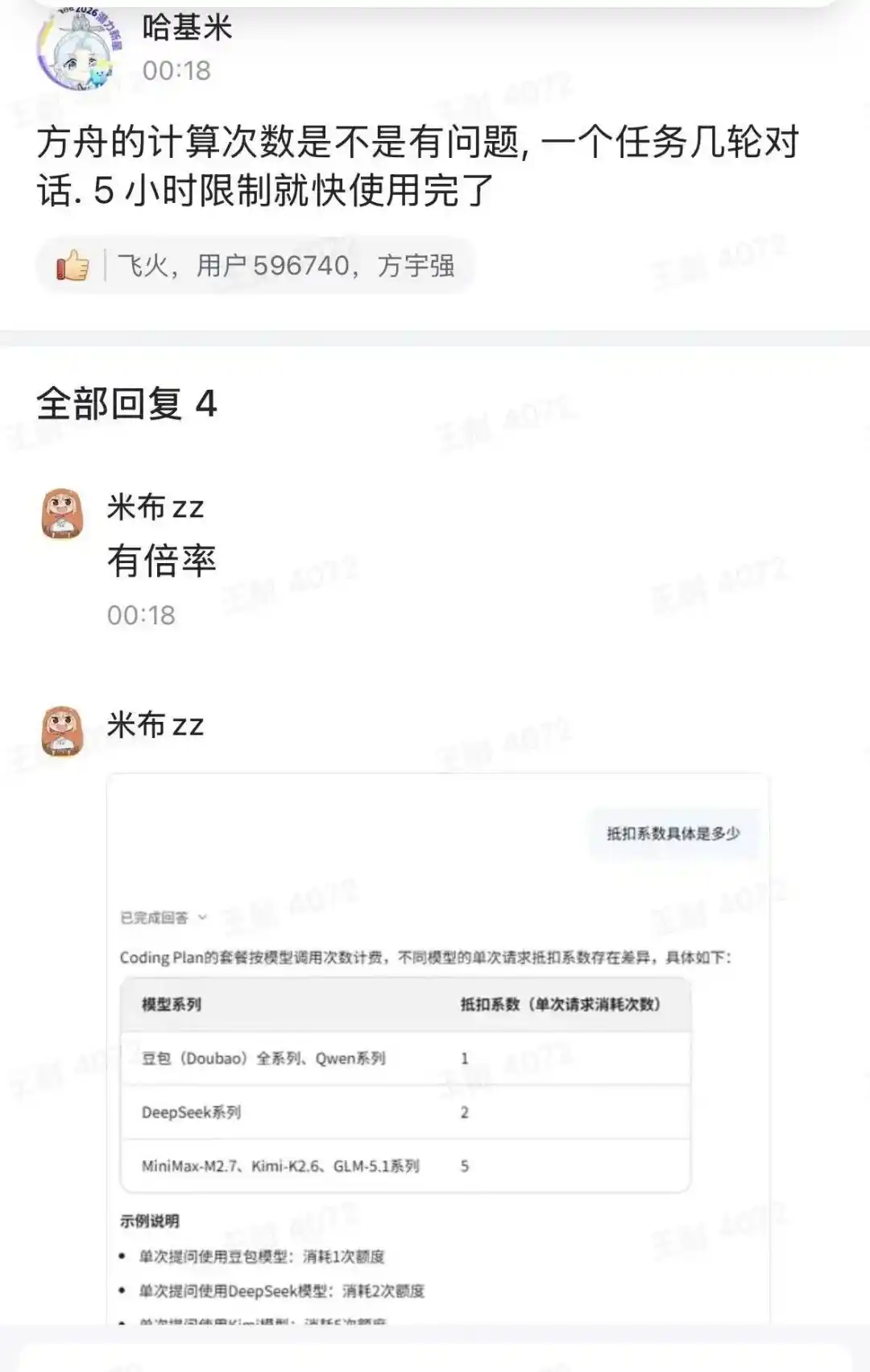

At the same time, the low price of 40 yuan per month conceals an "undercurrent": within the plan, "a single call request" is deducted at different coefficients depending on the model. One user posted an image in the developer exchange group showing the "differences in deduction coefficients for calling different models." According to that image, the Doubao and Qwen series have a deduction coefficient of 1, the DeepSeek series 2, and the MiniMax-M2.7, Kimi-K2.6, and GLM-5.1 series 5.
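To see what these coefficients mean in practice, the arithmetic can be sketched as follows. The coefficients are the ones reported in the user's screenshot; the quota of 1,000 units and the assumption that each call deducts exactly its coefficient from a shared quota are hypothetical, used only for illustration.

```python
# Deduction coefficients as reported in the user's screenshot.
DEDUCTION_COEFFICIENTS = {
    "Doubao": 1,
    "Qwen": 1,
    "DeepSeek": 2,
    "MiniMax-M2.7": 5,
    "Kimi-K2.6": 5,
    "GLM-5.1": 5,
}

ASSUMED_QUOTA = 1000  # hypothetical quota units; not an official figure


def effective_calls(quota_units, model):
    """Calls available if every call deducts the model's coefficient (assumption)."""
    return quota_units // DEDUCTION_COEFFICIENTS[model]


calls = {model: effective_calls(ASSUMED_QUOTA, model) for model in DEDUCTION_COEFFICIENTS}
# Doubao/Qwen: 1000 calls, DeepSeek: 500 calls, coefficient-5 models: 200 calls
```

Under these assumptions, the same quota stretches only one-fifth as far on GLM-5.1 as on a coefficient-1 model, which is consistent with users' sense that the limit is "not durable."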

All of this shows that building a "model supermarket" is not as easy as imagined. Developers are drawn in by the "cost-effectiveness," but early shortcomings in areas such as computational resource scheduling have made many of them hesitate after trying the service. These are the growing pains of the "bundled model" in its early stages: as users flock in, the carrying capacity of the computing platform is put to the test. Finding a sustainable balance between attracting users with low prices and maintaining service quality will be a long-term challenge for Volcano Engine and for those who follow its lead.

Cloud Vendors Collectively Shift to "Model Supermarkets": Initial Signs of Stratification and Solidification

This "integrative" update by Volcano Engine's Coding Plan is not an isolated incident.

Since early 2026, mainstream cloud vendors including Alibaba Cloud, Baidu Intelligent Cloud, and Tencent Cloud have all been pushing multi-model integration. Alibaba Cloud, an industry pioneer, launched its multi-model subscription package "Bailian Coding Plan" earlier; it currently supports the Qwen series, kimi-k2.5, glm-5, MiniMax-M2.5, and other models. The Pro package is priced at 200 yuan per month, while the Lite package stopped accepting new purchases on March 20th and stopped renewals and upgrades on April 13th.

Tencent Cloud's large model Coding Plan subscription service was fully updated in March 2026 and supports multiple latest models, including Tencent HY 2.0 Instruct, GLM-5, Kimi-K2.5, and MiniMax-M2.5. Baidu Qianfan officially launched its AI coding subscription service, Coding Plan, in February 2026, making it one of the relatively early domestic cloud vendors to offer such a service.

The "model supermarket" is not one company's choice but a track on which cloud vendors are racing to stake out positions. Look past the aggregation strategy itself, however, and the new core competitive factors come into view: who can provide more stable services, more transparent quota rules, and more flexible disaster recovery mechanisms; who can extend beyond programming into broader enterprise-level services; and whose renewal rates can keep up.

Internationally, Amazon Bedrock and Microsoft Azure's model aggregation service platforms, though different in scenarios from the domestic Coding subscription model, belong to the same integration trend.

Overall, industry competition is shifting from a contest of "single model capability" to one of "platform integration capability + ecosystem service capability," and industry concentration is expected to rise rapidly.

Wang Kai, Chief Asset Allocation Analyst at Guosen Securities, told reporters that although industry differentiation is accelerating, it may be slightly premature to call this a period of consolidation. "More accurately, this is the refinement and iteration of the division of labor along the industry chain. Model vendors focus on algorithms, cloud vendors focus on engineering delivery, each leveraging their main business advantages." He believes that regardless of whether other cloud vendors follow suit, the competitive landscape will evolve from individual efforts to ecological niche differentiation.

Increased Pressure for Large Model Companies to Become "Pipelined"?

So-called "pipelining" does not mean model companies disappear, but rather that they lose product premium, user connection rights, and discourse power, with profits shifting towards the computing platform side, becoming a "dominated" role.

Under the aggregation wave of cloud vendors, "pipelining" is becoming a Sword of Damocles hanging over independent large model companies. In this silent contest, leading players such as Zhipu AI, Moonshot AI (Kimi), and MiniMax have not chosen passive compromise; each has drawn on its own strengths to chart a different breakout path.

Zhipu AI CEO Zhang Peng, in a public dialogue on April 8th, clearly stated that Zhipu's ultimate goal is never to become a "replaceable calling tool" but to build a fully autonomous agent. This positioning attempts to upgrade Zhipu from a "model supplier" to a "task executor," thereby bypassing the low-price trap of pure API pipelines.

Moonshot AI (Kimi) has adopted a strategy of "decentralized layout + deep cultivation of long text." It is available simultaneously on multiple mainstream cloud platforms such as Volcano Engine and Alibaba Cloud, which gives it multi-source computing supply, avoids lock-in to a single channel, and helps ensure service stability and cost control. Kimi K2.6, launched in April 2026, adopts a Mixture of Experts (MoE) architecture with a standard context window of 256K tokens.

MiniMax concentrates its core investment on vertical fields such as content creation, intelligent customer service, education, enterprise services, and social entertainment, with a particular focus on scenarios such as game AI, digital humans, and multimodal interaction, creating "customized capabilities difficult for cloud platforms to replace."

Will platform integration by major vendors accelerate the "pipelining" of model companies? Wang Kai, Chief Asset Allocation Analyst at Guosen Securities, believes it is necessary to distinguish between short-term and long-term perspectives.

"In the short term, distribution channels being controlled by the platform, partial ceding of pricing power, and profits of model vendors shifting to the entry point side are business norms. But in the long run, general models are prone to homogenization; deep learning models in vertical scenarios like finance, healthcare, and law have professional barriers that cannot be erased simply by centralized aggregation," he said.

As for responding to the risk of being platformized, the strategies of OpenAI and Anthropic offer a reference. On one hand, they strengthen channels that face end-users directly, such as the independently operated ChatGPT and Claude, which in effect establish user connections that bypass platforms. On the other hand, the speed of technological iteration and user brand recognition are two effective moats, so model companies need to balance R&D investment with productization.

The final outcome of this game of "pipelining vs. platformization" might not be about who eats whom, but a further clarification of the division of labor. Cloud vendors act as pipes, model companies focus on technology, and both sides gradually find their respective survival boundaries in the game.

At this stage, the story is far from over.

This article is from the WeChat public account "Sci-Tech Innovation Board Daily," author: Wang Nai