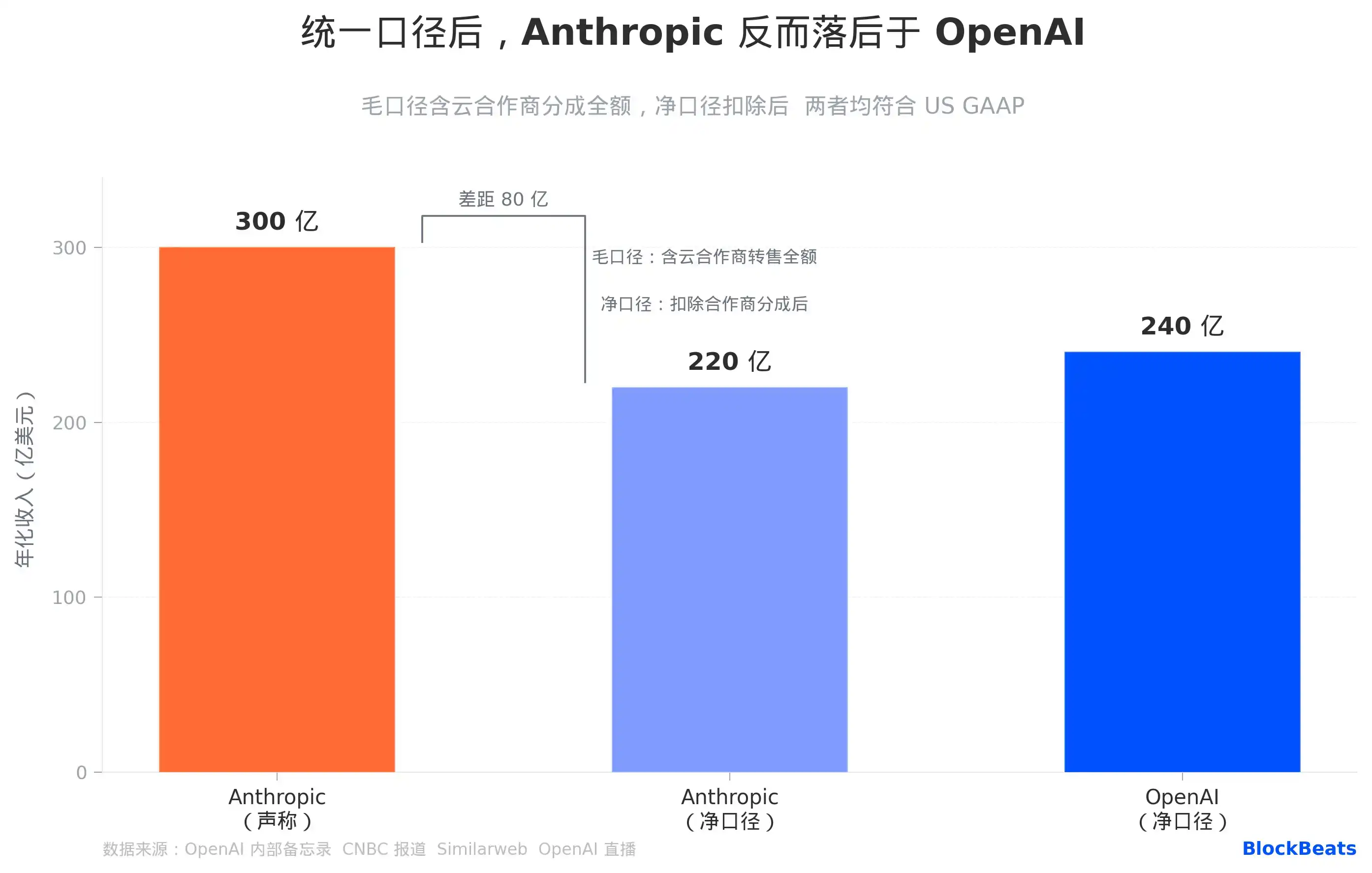

According to Anthropic's books, its annualized revenue is $30 billion, but by OpenAI's conversion, the same set of sales figures is worth only $22 billion. Neither number is fabricated. This is the opening jab thrown by OpenAI's Chief Revenue Officer, Denise Dresser, in a four-page internal letter that the media exposed on April 13.

The story starts with an employee memo obtained by The Information. In the letter, Dresser did three things at once: praised the new Amazon collaboration as having "astoundingly high demand," admitted that the Microsoft partnership "has limited our reach to customers," and spent considerable space deconstructing Anthropic's revenue figures. The letter leaked just one week after Anthropic announced it had passed the $30 billion annualized revenue milestone.

On the surface it is an internal company memo; in substance it is a carefully constructed information war. The most direct way to understand it is through three dimensions: revenue accounting methodology, the competitive landscape on the enterprise side, and the roadmap of the compute arms race. All three can then be placed within the same cloud partnership structure.

Where does the $8 billion accounting gap come from

Anthropic reports $30 billion in annualized revenue; OpenAI says the actual figure is $22 billion. The $8 billion difference stems from the starkly different choices the two companies made in how they recognize revenue.

Anthropic uses gross accounting: when a company purchases usage credits for Claude through AWS, Anthropic records the full amount as top-line revenue, then treats the platform share paid to Amazon as a cost. OpenAI does the opposite: it records only the net amount it actually receives from Microsoft, so Microsoft's share never enters the top line.

Both methods comply with U.S. Generally Accepted Accounting Principles (GAAP). Anthropic's logic is that it is the "principal" in customer transactions, with cloud vendors merely serving as distribution pipelines. OpenAI's logic is that it treats Microsoft as an "agent," booking only the portion that actually reaches its hands. The root of the divergence lies not in who is fabricating numbers, but in who is more aggressively asserting a dominant position in the sales chain.

Dresser wrote in the memo that Anthropic "uses an accounting method that makes the revenue figure appear larger," including booking the full gross amount of revenue shared with AWS and Google as top-line revenue. The subtext is not hard to read: when Anthropic submits its S-1 prospectus to the SEC, auditors will rule on this accounting basis, and at that point the company may need to make adjusted disclosures on a unified basis. Converted to the same basis, Anthropic stands at $22 billion and OpenAI at $24 billion, and the leader changes places.
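The gross-versus-net arithmetic can be sketched in a few lines. This is a minimal illustration using only the figures quoted in the article; the variable names are hypothetical, and the implied partner-share rate is a derived number that assumes the entire $8 billion gap is revenue shared with cloud partners. It is not a figure either company has reported.

```python
# Figures in billions of USD, taken from the article text.
ANTHROPIC_GROSS = 30.0  # Anthropic's reported annualized revenue (gross basis)
ANTHROPIC_NET = 22.0    # the memo's restatement of that figure on a net basis
OPENAI_NET = 24.0       # OpenAI's figure on its own net basis


def implied_partner_share(gross: float, net: float) -> float:
    """Amount that leaves the top line when switching from gross to net."""
    return gross - net


partner_share = implied_partner_share(ANTHROPIC_GROSS, ANTHROPIC_NET)

# Assumption: the whole gap is partner revenue share, so this rate is
# derived from the article's numbers rather than reported by anyone.
implied_rate = partner_share / ANTHROPIC_GROSS

# Headline comparison mixes bases: Anthropic gross vs. OpenAI net.
leader_as_reported = "Anthropic" if ANTHROPIC_GROSS > OPENAI_NET else "OpenAI"
# Like-for-like comparison: both on a net basis.
leader_net_basis = "Anthropic" if ANTHROPIC_NET > OPENAI_NET else "OpenAI"

print(f"Implied partner share: ${partner_share:.0f}B ({implied_rate:.0%} of gross)")
print(f"Leader as reported: {leader_as_reported}; on a net basis: {leader_net_basis}")
```

The point of the sketch is that no sales figure changes between the two comparisons; only the basis does, and that alone is enough to flip the ranking.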

It should be said that Anthropic's revenue growth is itself already historic. According to data from Bloomberg, Sacra, and other outlets, its annualized revenue grew from about $9 billion at the end of Q4 2025 to the current $30 billion, more than tripling in less than five months. That growth is driven primarily by real customer procurement and cannot be explained by changes in accounting basis. The core of this accounting controversy is not that Anthropic is shrinking, but that OpenAI is using the accounting-basis knife to redraw the boundaries.

The catch-up speed on the enterprise side is faster than most people anticipated

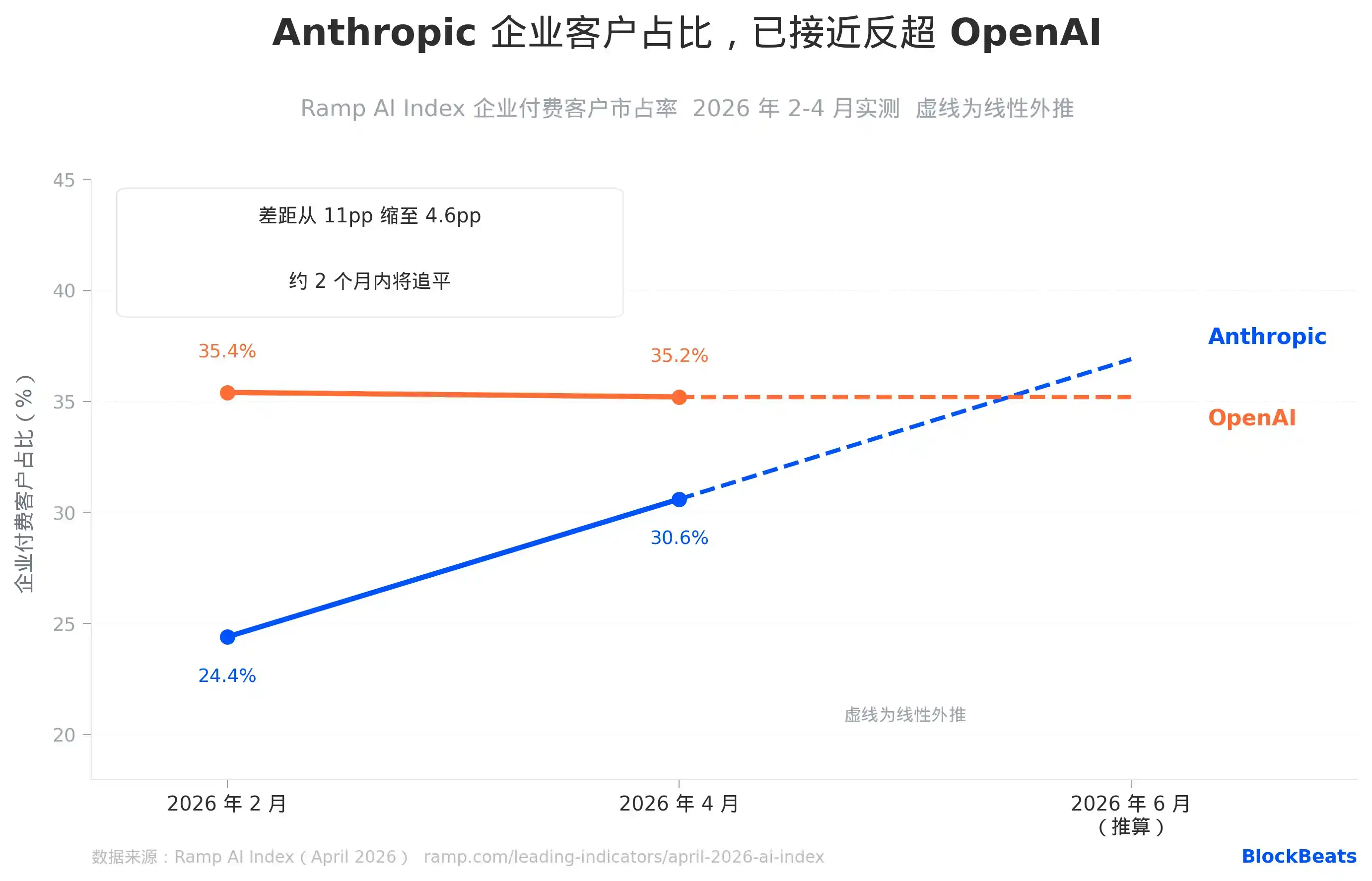

Ramp tracks the actual AI spending behavior of thousands of companies on its platform, making it a first-hand data source for judging real choices on the enterprise side.

Ramp AI Index data for April: Anthropic's share among paying enterprise customers rose to 30.6%, against 35.2% for OpenAI, and the gap narrowed from 11 percentage points in February to 4.6 percentage points. Based on Anthropic's average monthly gain of 6.3 percentage points over the past two months (itself a record monthly increase for this metric), it will overtake OpenAI on this metric in approximately two months.
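A rough way to sanity-check the "approximately two months" estimate is a straight-line extrapolation of the gap. The sketch below uses only the February and April gap figures quoted above and assumes the gap keeps closing at the same average pace, which is a simplification for illustration and not Ramp's methodology. The names are hypothetical.

```python
import math

# Figures from the article, in percentage points of paying enterprise customers.
GAP_FEB_PP = 11.0      # OpenAI's lead over Anthropic in February
OPENAI_APR = 35.2      # OpenAI's share in April
ANTHROPIC_APR = 30.6   # Anthropic's share in April
MONTHS_ELAPSED = 2     # February to April

# Round to one decimal to avoid floating-point noise in the subtraction.
gap_apr_pp = round(OPENAI_APR - ANTHROPIC_APR, 1)

# Assumption: the gap closes at a constant rate (linear extrapolation).
closing_rate_pp = (GAP_FEB_PP - gap_apr_pp) / MONTHS_ELAPSED
months_to_parity = gap_apr_pp / closing_rate_pp

# The index is read monthly here, so round up to whole monthly readings.
monthly_readings_needed = math.ceil(months_to_parity)

print(f"Gap in April: {gap_apr_pp} pp, closing at {closing_rate_pp:.1f} pp/month")
print(f"Parity in about {months_to_parity:.1f} months "
      f"({monthly_readings_needed} monthly readings)")
```

Under this assumption the crossover lands between the first and second monthly readings after April, which is consistent with the two-month estimate. Any slowdown in Anthropic's gains would push it later.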

More notable are the structural signals. In three high-purchasing-power industries, Anthropic's lead is already a fact: in Information Technology/Software (63% vs. 54%), Financial Services (52% vs. 46%), and Professional Services (47% vs. 44%), it is ahead of OpenAI. These three industries happen to be where enterprise AI budgets are most concentrated and procurement decisions are most professional. In other words, the companies with the most say in the AI purchasing chain have already begun leaning collectively towards Anthropic.

In a rare admission, Dresser acknowledged in the memo that Anthropic "holds a significant lead among enterprise customers," citing programming capabilities. Coming from within OpenAI, this statement carries a weight completely different from that of external evaluations; it is one company telling its own employees that the opponent has won on the core battlefield. She added a warning at the same time: "You do not want to be a single-product company in a platform war." This reminds employees that Claude's advantage in programming, if it cannot extend to the platform layer, is ultimately just a ticket, not a boarding pass.

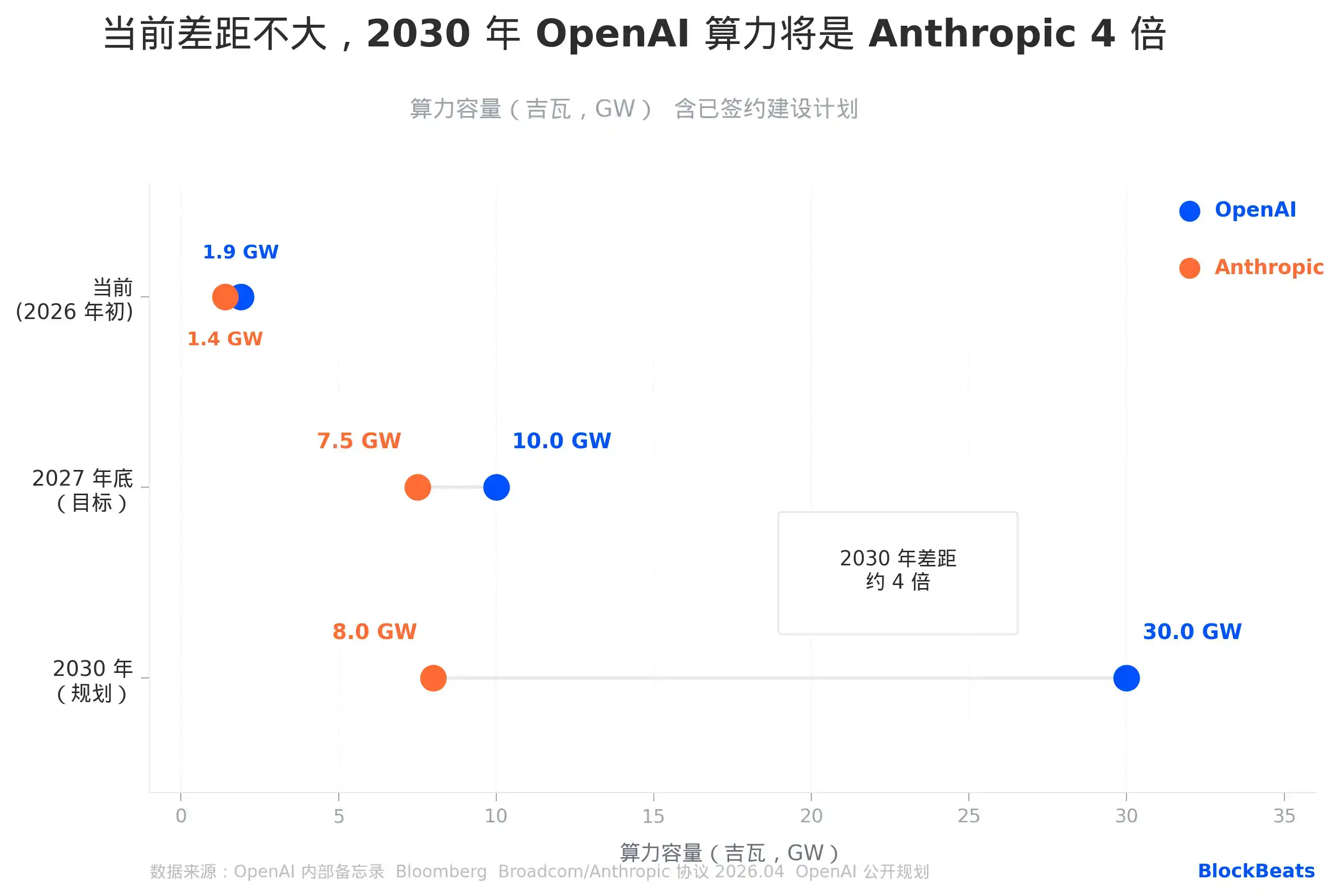

Compute gap: Similar today, fourfold by 2030

Compute capacity is the competitive gap that AI companies find hardest to close in the short term, because construction cycles are measured in years and funding thresholds in tens of billions of dollars.

Current numbers look close: OpenAI has about 1.9 gigawatts (GW) and Anthropic about 1.4 GW, a difference of about 35%. Dresser described Anthropic in the memo as "operating on a meaningfully smaller curve," and against current capacity that statement isn't particularly exaggerated; the gap is real, just not yet decisive.

The real fork is after 2027. OpenAI plans to reach 30 GW of compute by 2030, backed by a $30 billion five-year cloud computing contract with Oracle, the entire Stargate infrastructure project, and a total construction commitment of $1.4 trillion.

Anthropic's path relies on a Broadcom custom chip agreement with a capacity of 3.5 GW, deployed through Google Cloud and effective from 2027, plus existing training clusters on AWS, targeting 7-8 GW by the end of 2027.

Even if Anthropic fully delivers on its 2027 target, a roughly fourfold gap remains between it and OpenAI's 2030 plan. This chasm is not technically insurmountable; if improvements in model efficiency let each unit of compute yield more output, Anthropic could build good enough products with less compute.

But that only works if Claude's momentum on the enterprise side continues, with sustained subscription revenue supporting its compute procurement costs: according to Sacra estimates, Anthropic will pay cloud partners about $1.9 billion this year, rising to about $6.4 billion in 2027.
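The ratios behind this section can be checked directly. The sketch below takes the capacity and payment figures from the text and, as the article does, compares OpenAI's 2030 plan against Anthropic's end-of-2027 target, so the "fourfold" figure is not a same-year comparison. Variable names are hypothetical.

```python
# Capacity figures in gigawatts (GW), taken from the article text.
OPENAI_NOW_GW = 1.9
ANTHROPIC_NOW_GW = 1.4
OPENAI_2030_GW = 30.0
ANTHROPIC_2027_RANGE_GW = (7.0, 8.0)  # low and high ends of the stated target

# Sacra estimates of Anthropic's payments to cloud partners, in billions of USD.
CLOUD_PAYMENTS_THIS_YEAR = 1.9
CLOUD_PAYMENTS_2027 = 6.4

# Today: how much more capacity OpenAI has than Anthropic.
current_ratio = OPENAI_NOW_GW / ANTHROPIC_NOW_GW

# Future: OpenAI's 2030 plan over Anthropic's 2027 target (mixed years).
low_target, high_target = ANTHROPIC_2027_RANGE_GW
future_ratio_best_case = OPENAI_2030_GW / high_target   # Anthropic reaches 8 GW
future_ratio_worst_case = OPENAI_2030_GW / low_target   # Anthropic reaches 7 GW

# Growth multiple of Anthropic's cloud payments between this year and 2027.
payment_multiple = CLOUD_PAYMENTS_2027 / CLOUD_PAYMENTS_THIS_YEAR

print(f"Current capacity ratio: {current_ratio:.2f}x")
print(f"Planned ratio: {future_ratio_best_case:.2f}x to {future_ratio_worst_case:.2f}x")
print(f"Cloud payment growth: {payment_multiple:.2f}x")
```

On these numbers the current ratio sits near 1.36x, and the planned ratio falls between 3.75x and about 4.29x, which is where the "about 35%" and "fourfold" descriptions come from.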

Amazon, betting on two competitors simultaneously

The most intriguing sentence in this memo is Dresser's direct characterization of the Microsoft partnership: she writes that it "has also limited our ability to reach enterprises where they are."

OpenAI's move towards Amazon is already very clear: according to CNBC, in February this year Amazon announced a $50 billion investment in OpenAI and at the same time obtained exclusive third-party cloud distribution rights for Frontier, OpenAI's enterprise agent management platform.

This is a deliberate switch from the Microsoft track to the Amazon track. The logic behind it is straightforward: many enterprise customers' AI infrastructure is already built on AWS's Bedrock platform, and Microsoft's exclusivity terms make it difficult for OpenAI to sell there directly.

But the other side of Amazon's role in this competition is equally noteworthy: it is currently Anthropic's largest cloud infrastructure partner and strategic investor, with cumulative investments of $8 billion. Their joint Project Rainier cluster deploys about 500,000 Trainium 2 chips. Amazon's total bet across the AI race therefore amounts to $58 billion, flowing simultaneously to two opponents that are battling head-on in the enterprise market.

This isn't just a hyperscale cloud vendor's diversified betting; it's a more precise structure: Amazon is both Anthropic's "strategic ally and largest backer" and the new cloud foundation OpenAI is using to "replace Microsoft."

When the two companies compete for the same pool of enterprise customers, the channel they are fighting over happens to be Amazon's Bedrock platform, which distributes models from both companies. Whichever company converts better on Bedrock, Amazon profits, while OpenAI and Anthropic take share from each other.

Under pressure from a steadily eroding enterprise market share and structural cracks in the Microsoft partnership, OpenAI chose to rebuild the narrative with a carefully calculated numbers war while using Amazon to rebuild its distribution pipeline. Once the three sets of numbers are taken apart, this competition turns out to be more complex than either side wants you to see.