Author: Rand Group (@cryptorand)

Compiled by: Deep Tide TechFlow

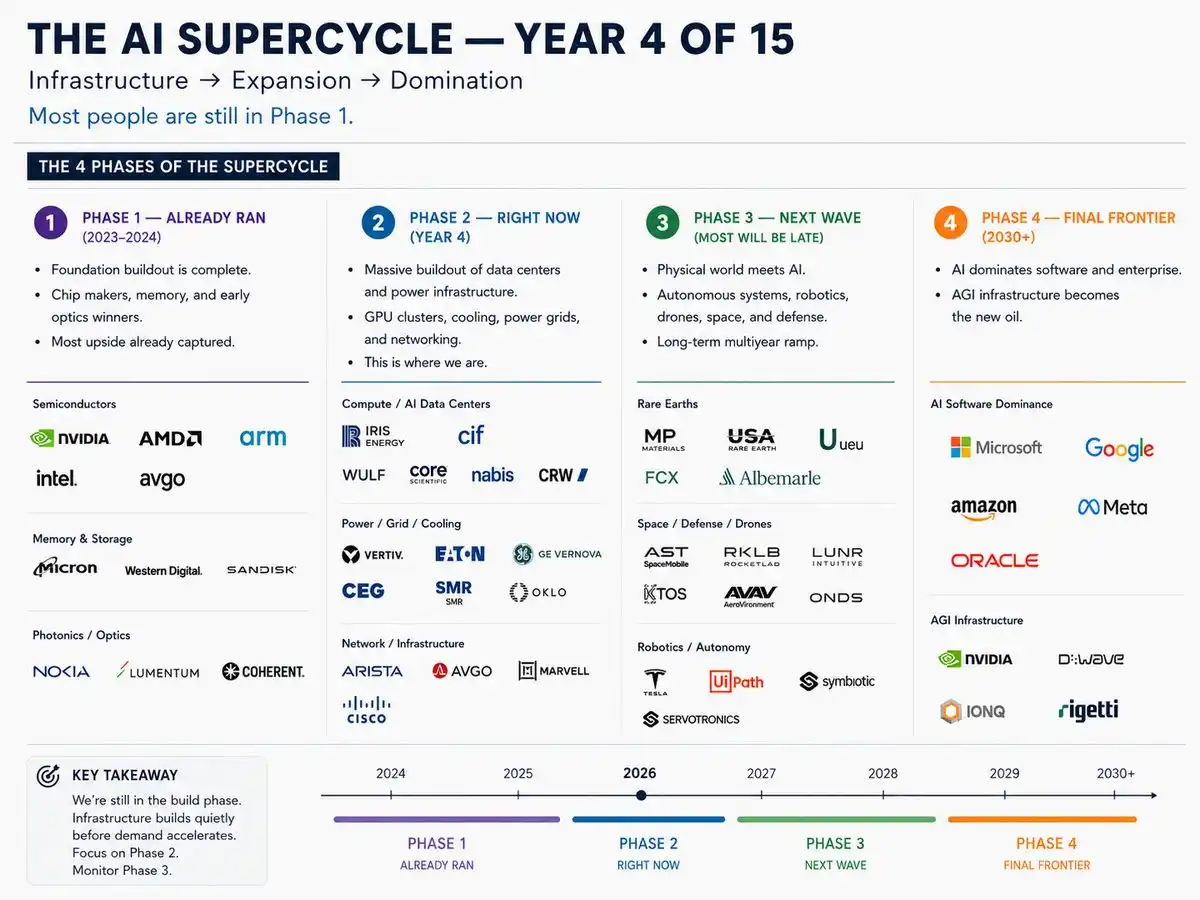

Deep Tide Intro: Crypto KOL Rand Group breaks the AI supercycle into four stages, from chips to infrastructure to robots to platform software, and identifies the key tickers and risk-reward profile for each. His judgment: Stage 1 (semiconductors) is over, Stage 2 (power, cooling, networking) is being priced in, and the true asymmetric opportunity lies in Stage 3: robotics, space, defense, and nuclear energy.

The AI supercycle will last 15 years. This is year three.

Most investors are still buying Stage 1 stocks, but smart money is already rotating into Stage 3.

I've broken the entire cycle into four stages, with the most important tickers labeled for each.

The AI supercycle is the biggest investment theme of this generation. Bigger than mobile internet, bigger than cloud computing. It is a 15-year structural shift that will reshape every industry in the global economy. Hyperscalers just committed $725 billion in capex for 2026, nearly double last year's figure. Microsoft, Google, Amazon, and Meta are each spending over $100 billion on their own.

This is not speculation.

🔴 Stage 1: Over (2023-2025)

The foundation layer is complete. AMD rose 78% in 2025 and NVDA rose 39%. Intel just delivered a blowout Q1, pushing the Philadelphia Semiconductor Index above 10,000 for the first time. Chips still drive every stage, but the historic entry opportunity is gone and the risk-reward has compressed.

Tickers: NVDA, AMD, ARM, INTC, AVGO, MU, GLW

Sectors: Semiconductors, Memory, Photonics/Optics

Status: Foundation complete, still growing, but priced in.

🟡 Stage 2: Buildout Peak (2025-2027)

This is the stage most investors are just waking up to. CEG is acquiring Calpine to become the largest private U.S. power producer at 55 GW. GEV is up over 200% in a year. VRT is co-designing cooling for NVIDIA's Rubin architecture. GLW is up 74% YTD on fiber demand. Nuclear SMRs (small modular reactors) are the biggest dark horse: OKLO, SMR, and BWXT are positioning to supply power directly to data centers.

Still upside, but the most obvious names have moved.

Tickers: CEG, GEV, VRT, VST, TLN, ANET, GLW, MOD, EQIX, OKLO, SMR, BWXT, NNE

Sectors: Power/Grid, Cooling, Networking, Nuclear SMR

Status: Buildout peak.

Note: Nuclear SMR is the hidden major opportunity.

🟡 Stage 3: Positioning Window (2026-2028)

The stage where AI leaves the data center and enters the physical world. Most will be late.

Tesla is converting its Fremont factory into an Optimus robot production line, with $25 billion in capex and mass production targeted for H2 2026. Rocket Lab posted record revenue of $602M, with a backlog of $1.85B. LUNR is up 47% YTD with $943M in contracts. KTOS's Valkyrie drone was selected by the Marine Corps.

The positioning window is open now.

Tickers: TSLA, RKLB, LUNR, KTOS, AVAV, PATH, ISRG, MP, FCX, ALB, ASTS

Sectors: Robotics/Autonomy, Space/Defense/Drones, Rare Earths

Judgment: The asymmetric risk-reward is here.

🟢 Stage 4: Endgame (2028+)

The endgame. Microsoft's capex is $190B, Alphabet's $190B, Amazon's $200B, and Meta's $145B, which together sum to the $725B capex figure for 2026. Google Cloud's backlog exceeds $460B. They are building AI software dominance and AGI infrastructure. Quantum computing is early, but IONQ and D-Wave are laying the groundwork.

The platforms controlling the software layer win the entire supercycle.

Tickers: MSFT, GOOGL, AMZN, META, ORCL, IONQ

Sectors: AI Software Dominance, AGI Infrastructure

Status: Decade-long thesis.

Strategy: Buy the dips.

Key Conclusions

- Stage 2 is confirmed ($725B in hyperscaler capex)

- Stage 3 is where smart money is positioning — robotics, space, defense, nuclear

- Nuclear SMR is the core trade from 2026 to 2028

- Most will rotate into these names 12 months late

A 15-year supercycle. Not a single trade. Stage 1 is over, Stage 2 is being priced, Stage 3 is where you should be.