Author: a16z

Compiled by: Deep Tide TechFlow

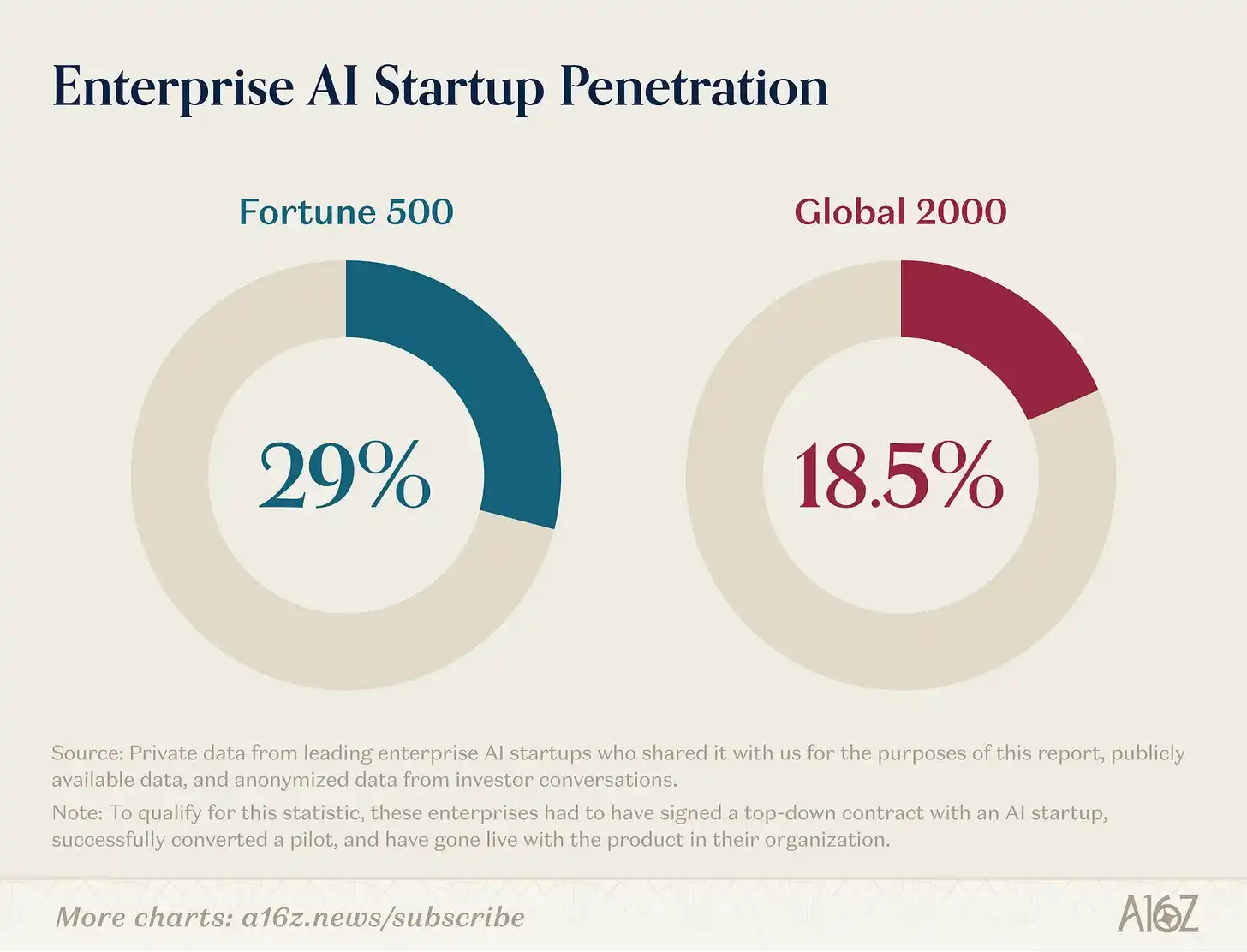

Deep Tide Introduction: MIT claims that 95% of enterprise generative AI pilot projects fail to convert, but a16z counters this with firsthand data from its portfolio companies. 29% of the Fortune 500 and 19% of the Global 2000 are already paying customers of leading AI startups. Coding tools have boosted the efficiency of top engineers by 10-20 times. This 23,928-word report, based on internal data, reveals which AI scenarios are truly generating value and which are still conceptual hype.

There is much speculation about how much progress AI has made in large enterprises, but most existing information consists only of self-reported AI usage or surveys capturing qualitative buyer sentiment rather than hard data. Furthermore, the few existing studies assert that AI performs poorly in enterprises, most notably an MIT study claiming that 95% of generative AI pilots fail to convert.

Based on our internal data and conversations with enterprise executives, we find this statistic hard to believe. We have been closely tracking where AI is seeing the most adoption and where ROI is clear, and have compiled hard data on what actually works in enterprise AI.

AI Penetration in Enterprises

According to our analysis, 29% of the Fortune 500 and approximately 19% of the Global 2000 are active paying customers of leading AI startups.

To qualify for this statistic, these enterprises must have signed top-down contracts with AI startups, successfully converted pilots, and deployed the products within their organizations.

Reaching this level of penetration in such a short time is remarkable, as Fortune 500 companies are not known for being early technology adopters. Historically, many startups had to first sell to other startups to gain early momentum; it took years before a startup could sign its first enterprise contract, and even more revenue and time before finally landing Fortune 500-scale clients.

AI has upended this norm. OpenAI launched ChatGPT in November 2022, immediately demonstrating AI's potential to both consumers and enterprises. The launch unleashed a storm of interest that no previous generation of technology had triggered, making large enterprises more willing than ever to bet on new products early. The result: just over 3 years later, almost one-third of the Fortune 500 and one-fifth of the Global 2000 have real enterprise AI deployments within their organizations.

What Works in Enterprise AI

Where is this adoption happening fastest, and how does it map to the kinds of work that models are inherently better at?

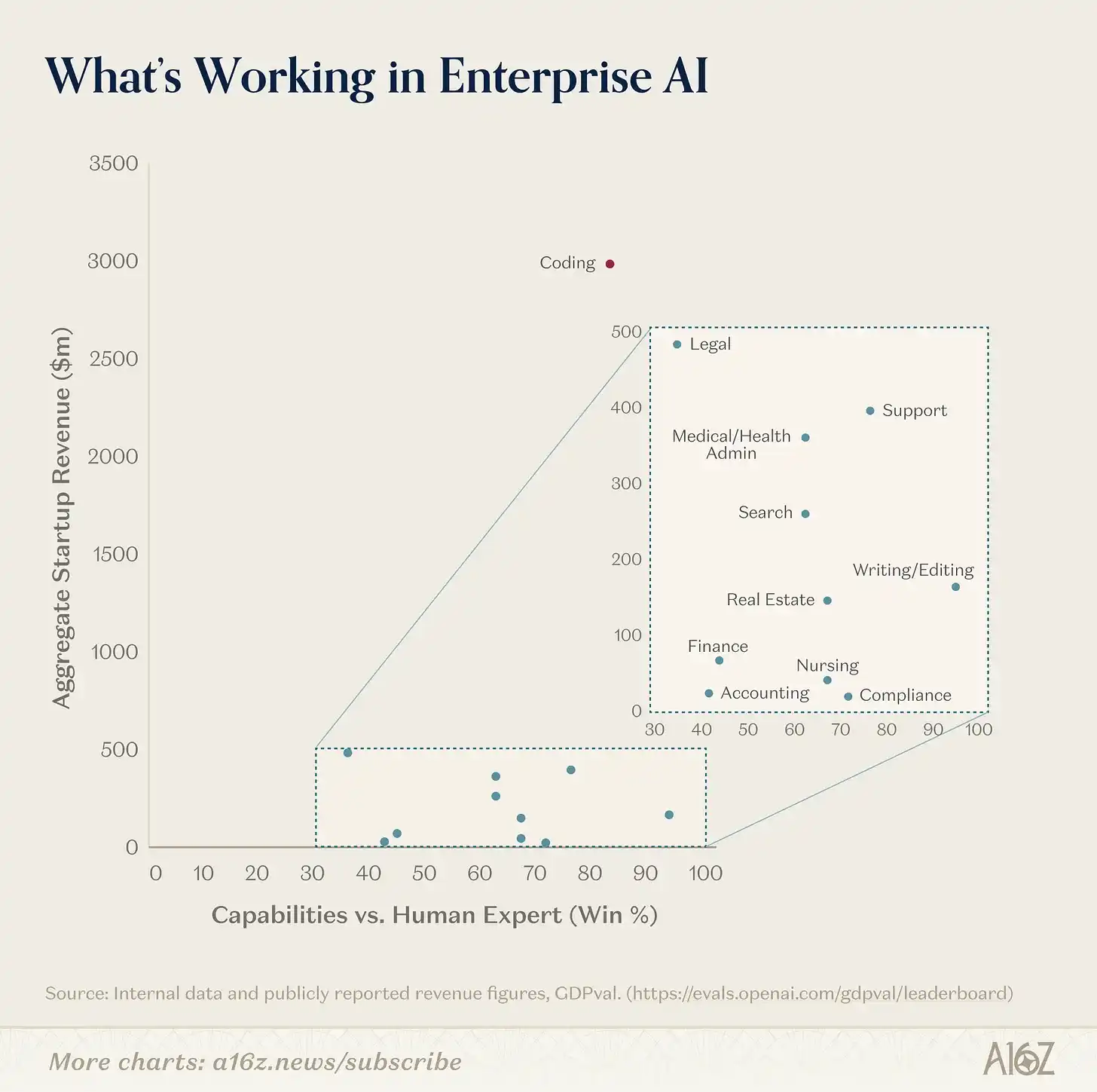

We found that the most telling way to assess this is to overlay the revenue momentum of various use cases onto models' theoretical capabilities as measured by GDPval, a well-known OpenAI benchmark that evaluates a model's ability on economically valuable real-world tasks. For us, these two factors together capture both how good models can be and how much value they are proven to provide today. That makes them very informative about where AI adoption stands today, where it might be headed, and where there is still an AI overhang: adoption that lags despite maturing model capabilities.

Where is Enterprise AI Providing the Most Value Today?

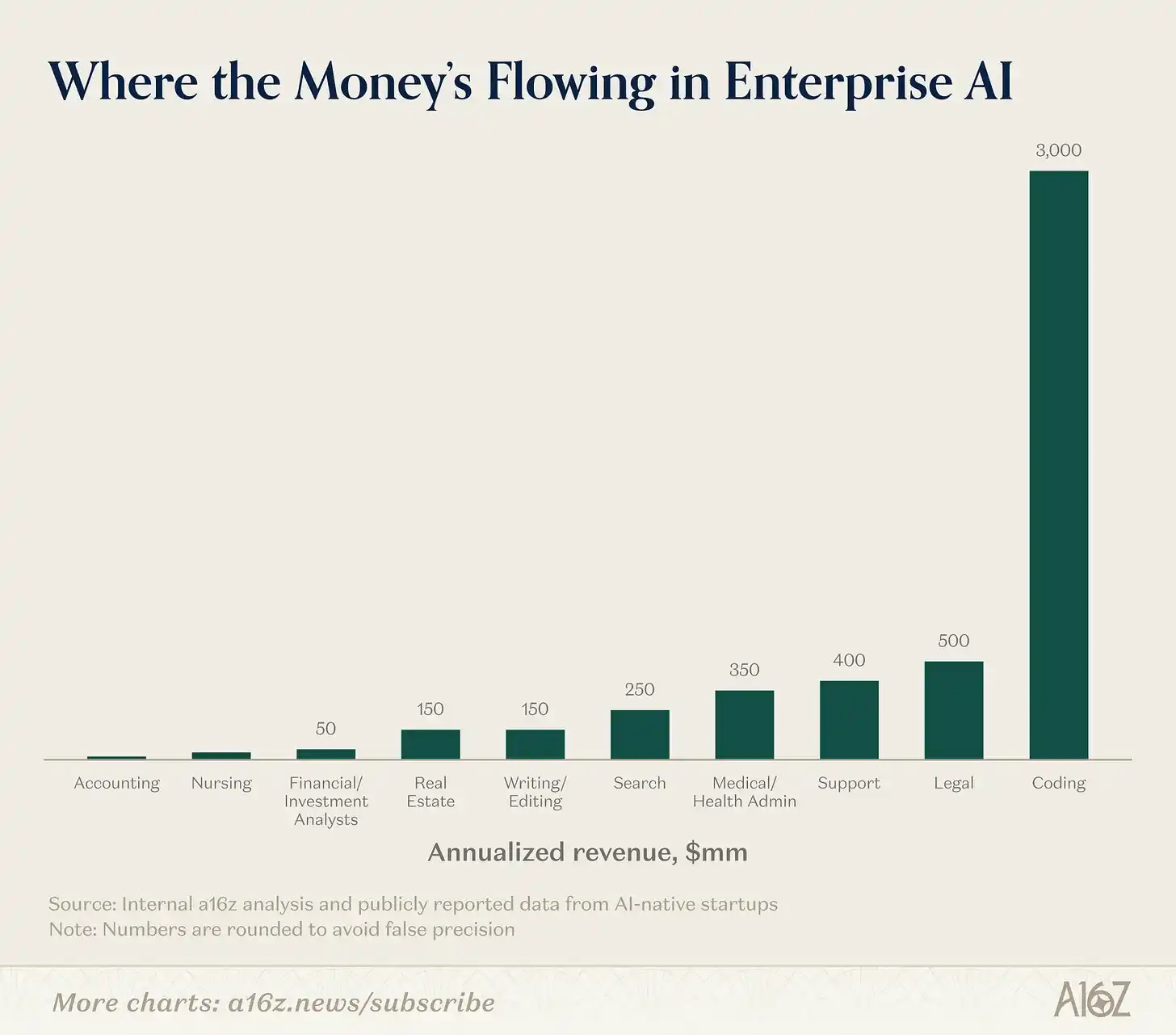

In terms of revenue momentum, enterprise adoption of AI is dominated by a clear set of use cases and industries. Programming, Support, and Search represent the majority of use cases so far (Programming is even an order-of-magnitude outlier in this group), while the Technology, Legal, and Healthcare sectors are the most eager industries to adopt AI.

Programming: Programming is the dominant AI use case, by almost an order of magnitude. This is evident in the explosive growth reported by companies like Cursor and the rapid growth of tools like Claude Code and Codex. These growth rates exceed almost everyone's most optimistic predictions, and the vast majority of Fortune 500/Global 2000 adoption of AI tools so far is in code.

In many ways, programming represents the ideal AI use case, both in terms of technical capability and enterprise market acceptance. Code is data-intensive, meaning there is a vast amount of high-quality code online for model training. It is also text-based, making it easy for models to parse. It is precise and unambiguous, with strict syntax and predictable results. Crucially, it is verifiable: anyone can run it and know if it works, creating a tight feedback loop for model learning and improvement.

From a business perspective, it's also a great application. We consistently hear from portfolio companies that the productivity of their best engineers has increased by 10-20 times with AI coding tools. Hiring engineers has always been difficult and expensive, so anything that increases their productivity has a clear ROI, and the magnitude of the boost provided by AI coding tools creates a massive incentive for adoption. Engineers also tend to be early adopters who demand the best tools. Because programming is a more solitary task than most enterprise work, they can simply find the best tool and adopt it without being bogged down by the coordination and bureaucracy that plague many other enterprise functions.

Furthermore, programming tools do not need to be 100% end-to-end task completers to have additive value, as any acceleration (e.g., finding bugs, generating boilerplate code) still saves time and is useful. Since programming has tight human-in-the-loop workflows, with developers still overseeing the development process today, these tools accelerate output while still leaving room for human judgment to review, edit, and iterate. This both increases enterprise confidence and makes the adoption path smoother.

Programming capabilities are improving exponentially, with every lab explicitly focused on winning code as a use case. This has huge implications. Code is upstream of all other applications, as it is the core building block of any software, so AI's acceleration of code should accelerate every other field. The lowered barrier to building in these fields opens up new problems to solve with AI, but the same accessibility makes building a lasting competitive advantage more critical than ever for startups.

Support: Support is at the other end of the barbell, opposite code. While software engineering typically gets the most investment and attention within organizations, support is often overlooked. The work in support organizations is back-office, entry-level work, often outsourced to offshore companies or Business Process Outsourcing (BPO) firms because companies find it too cumbersome and complex to manage themselves.

AI has proven to excel at managing this work for several reasons. First, the nature of most support interactions is time-bound, with constrained intent (e.g., issuing a refund), providing agents with clearly defined problems to handle. Support is also one of the only functions where the tasks involved in the role are clearly defined. Support teams are large and have high turnover, so there is a need to train new representatives quickly and in a standardized way. To do this, they have clearly articulated Standard Operating Procedures (SOPs) that guide each representative's work. These SOPs create clear rules and guidelines that AI agents can mimic. This distinguishes it from most other enterprise work, which is often longer-lasting, less clearly defined, and involves more stakeholders beyond the customer and service representative.

Support is also one of the enterprise functions where ROI is easiest to demonstrate. Support runs on quantifiable metrics: number of tickets answered, customer satisfaction (CSAT) scores, and resolution rates. Any A/B test of the status quo versus an AI agent would yield favorable results for the AI agent: it would answer more tickets, increase resolution rates, and improve customer satisfaction scores, all at a lower cost. Since most support is already outsourced to BPOs, adopting an AI solution requires limited change management, making the adoption path easier.

Support also does not require 100% accuracy to be useful, as it has a natural escalation path to humans (e.g., "I'm escalating you to a manager"). This allows sales cycles to move faster and makes piloting an AI support agent relatively low-risk; in the worst case, 100% of cases would simply be escalated and resolved by a human.

Finally, support is inherently transactional. Customers are indifferent to who is actually on the other end, meaning support does not require any interpersonal touch that AI struggles to replicate. These characteristics explain why companies like Decagon and Sierra are growing so fast, along with more vertical-specific support players like Salient, HappyRobot, etc.

Search: The last horizontal category with clear enterprise market pull is Search. A primary use case of ChatGPT itself is search, so the impact of search is likely heavily baked into ChatGPT's revenue and usage and may be significantly underestimated here.

AI search as a category is so broad that it has enabled many independent large startups to emerge. A major pain point inside many enterprises is simply enabling employees to locate and extract relevant information across their different systems. Glean has thrived as a leading startup vendor for this use case. Many large industries also operate based on very specific industry information (internal and external), and companies like Harvey (started with legal search) and OpenEvidence (started with medical search) have thrived by building core products around this.

Industries

Technology: By far the most common industry adopting AI is the technology industry. ChatGPT itself reports that 27% of its business users are from tech, and many early customers of companies like Cursor, Decagon, and Glean are tech companies. Given that tech is almost always an early adopter and is the industry that spawned the AI wave, this is not surprising at all.

More surprising is that markets historically not considered early adopters are proving eager this time.

Legal: Legal is, surprisingly, one of the leading industries in AI adoption. Legal has historically been considered a difficult market for software, with lengthy sales cycles and less tech-savvy buyers.

This is because traditional enterprise software offered limited value to lawyers: static workflow tools did not accelerate the unstructured, nuanced work lawyers typically do. But AI has made the value proposition of technology for lawyers much clearer. AI excels at parsing dense text, reasoning over large amounts of text, and summarizing and drafting responses – all work lawyers do frequently. AI now often acts as a copilot to increase individual lawyer productivity but has begun expanding beyond this: in some cases, it can actually generate revenue by allowing law firms to handle more cases (as in the case of Eve, which specializes in plaintiff law).

The results are clear. Harvey reportedly reached about $200 million in Annual Recurring Revenue (ARR) within 3 years of founding, and companies like Eve have signed more than 450 clients and reached a $1 billion valuation this fall.

Healthcare: Healthcare is another market responding to AI in a way traditional software never did. Companies like Abridge, Ambience Healthcare, OpenEvidence, and Tennr are growing revenue very rapidly based on discrete use cases like medical transcription, medical search, or back-office automation of the Byzantine rules governing how healthcare is delivered and paid for.

Healthcare has historically been a slower market for software adoption because 1) the high-skill and complex work mapped poorly to the problems traditional workflow software could solve, and 2) the dominance of systems of record like Epic for EHR squeezed out brand new software vendors. However, with AI, companies are able to take on discrete manual labor jobs that bypass the system of record, either by replacing administrative work (e.g., medical scribes) or augmenting the higher-value work doctors are doing. This work is discrete enough that it doesn't require ripping and replacing the EHR, allowing these companies to scale quickly without needing to displace existing software vendors.

Notes on the Analysis

These figures are best estimates. They likely understate the revenue generated in each category and overstate model capabilities.

We likely underestimate revenue because:

The revenue analysis is purely based on which departments and use cases succeeded enough to generate large, independent enterprise AI businesses, and excludes the long tail of use cases other startups are tackling.

Many of these markets also have significant non-startup players generating significant revenue (e.g., Codex/Claude Code in code, Thomson Reuters' CoCounsel in legal), but we focused the analysis on independent startup players.

Many of the work tasks articulated in our analysis might be baked into the core products of model companies (e.g., search in ChatGPT and OpenAI) but are not broken out and included in this analysis.

This analysis focuses on enterprise businesses rather than consumer or prosumer businesses. There are successful businesses (e.g., Replit and Gamma in app generation and design) that have a considerable number of business users but primarily focus on consumers or prosumers today. Given that this analysis focuses on enterprise AI and where enterprises get value, we excluded consumer-led businesses.

On the capability side, measuring AI's impact on different sectors of the economy is extremely difficult, although many economists are trying. Jobs are inherently ill-defined and long-tailed, making them extremely difficult to fully automate. It's unclear today how much value enterprises can derive from partial automation – if AI can only do 50% of a human task, the importance of the non-automatable tasks might rise as they become the bottleneck, increasing their relative value. Therefore, we likely overstate the capability state today, as each incremental 1% of capability does not translate to 1% of economic value, but noting the relative capabilities and how they improve with each new model release is still illustrative.
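One way to make the bottleneck argument concrete is an Amdahl's-law-style calculation. This framing is our illustrative assumption and is not part of the original analysis; the function names and the example numbers below are hypothetical. The sketch shows that if AI handles a fraction of a job's time, the overall speedup is capped by the part humans still do, and that the human share of the remaining time grows as AI gets faster.

```python
import math


def overall_speedup(automated_fraction: float, ai_speedup: float) -> float:
    """Amdahl's-law-style speedup for a job that is partly automated.

    automated_fraction: share of the original job time that AI can take over (0..1).
    ai_speedup: how many times faster AI completes that share (> 0, may be math.inf).
    Both parameters are illustrative assumptions, not figures from the report.
    """
    if not 0.0 <= automated_fraction <= 1.0:
        raise ValueError("automated_fraction must be between 0 and 1")
    if ai_speedup <= 0:
        raise ValueError("ai_speedup must be positive")
    # Time left after automation, as a fraction of the original job time.
    remaining_time = (1.0 - automated_fraction) + automated_fraction / ai_speedup
    return math.inf if remaining_time == 0 else 1.0 / remaining_time


def human_share_of_remaining_time(automated_fraction: float, ai_speedup: float) -> float:
    """Fraction of the post-automation job time still spent on human-only work."""
    human_time = 1.0 - automated_fraction
    remaining_time = human_time + automated_fraction / ai_speedup
    return 0.0 if remaining_time == 0 else human_time / remaining_time


if __name__ == "__main__":
    # Hypothetical scenario: AI is 10x faster on whatever share it can automate.
    for fraction in (0.25, 0.50, 0.75, 0.90):
        speedup = overall_speedup(fraction, ai_speedup=10.0)
        share = human_share_of_remaining_time(fraction, ai_speedup=10.0)
        print(f"automated={fraction:.0%}  speedup={speedup:.2f}x  human share={share:.0%}")
```

Under these assumptions, automating 50% of a job can never deliver more than a 2x overall speedup, however fast the AI is, because the non-automatable half becomes the bottleneck. That is the sense in which an incremental 1% of capability does not map to 1% of economic value.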

AI is Entering All Markets

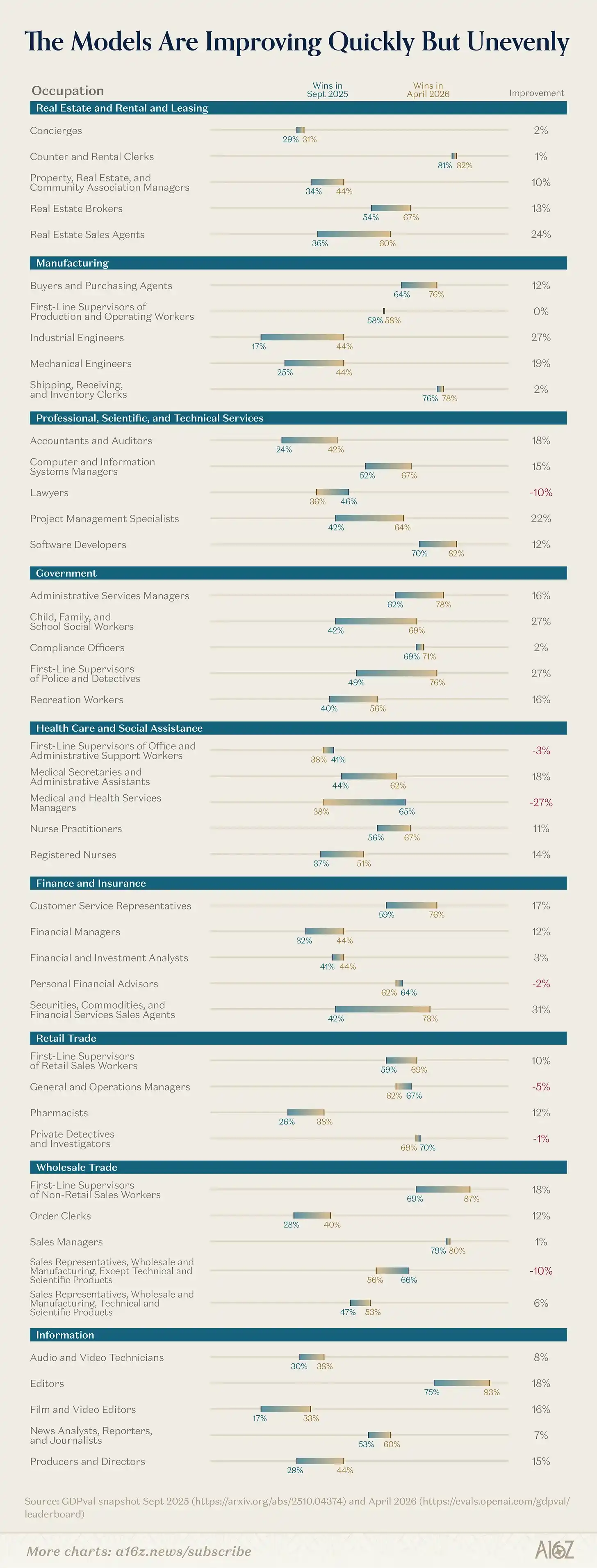

This analysis measures the win rate of top evaluation models against human experts based on the GDPval benchmark. Based on this, it's clear that models have become significantly better at economically valuable work since the fall of 2025.

So why don't we see the same type of revenue momentum in all industries that rank high on this assessment?

The industries that have enthusiastically adopted AI so far share several similarities: they are text-based, involve mechanical and repetitive work, have natural human-in-the-loop participation to inject human judgment, have limited regulation, and have clearly verifiable final outputs (e.g., running code, resolved support ticket). Many industries lack these attributes. They either deal with the physical world, rely heavily on interpersonal relationships, have significant coordination costs among many stakeholders, impose regulatory or compliance barriers, or lack verifiable outcomes. While revenue momentum and model capability are clearly correlated, in areas where model capability is theoretically below a 50% win rate against humans (as was the case with legal), companies like Harvey were still able to quickly gain market share with copilot products that augment individual legal work, and then continuously improve their core product as models evolved.

The most notable finding here is that model capabilities are improving rapidly. Several areas have shown huge improvements in the past 4 months: Accounting and Auditing showed a nearly 20% jump on GDPval, and even areas like Police/Detective work showed nearly a 30% improvement. We expect these jumps to yield compelling new products and companies in their relevant fields. Furthermore, model companies have explicitly announced their intention to improve core capabilities on economically valuable work: handling core spreadsheet and financial workflows, using computers to take on thorny work in legacy systems and industries, and making meaningful improvements on long-horizon tasks, which opens up a whole new class of work that cannot easily be cut into small, digestible pieces.

Implications for Builders

Understanding where enterprises get value and how they think about ROI, and which sectors are clearly seeing pull versus which are still on the way, allows us to think more clearly about where the opportunities lie for AI builders.

Serving Tech, Legal, and Healthcare buyers is clearly fertile ground now, but we don't believe there will be one "winner" in each category. For example, in the legal field, there are many types of lawyers – in-house counsel, law firms, patent lawyers, plaintiff lawyers, etc. – all with different workflows and different needs that companies can address. The same is true for healthcare given the patchwork of different types of doctors, healthcare facilities, etc.

Beyond these sectors, another productive approach is to look at places where capabilities are getting stronger but breakout companies have not yet emerged on the revenue side. Many current businesses were built before model capabilities truly unlocked the product, but they have built enough technical infrastructure and customer and market awareness that they are best positioned when the model unlock arrives.

Finally, it's important to pay attention to where labs are focusing their latest research efforts on economically valuable work. With rapid improvements in long-horizon agents, heavy investment in computer use, and research into reliable interfaces for modalities beyond text (e.g., spreadsheets, presentations), there is a whole new class of startups that will soon have the enabling infrastructure needed to generate meaningful enterprise value.

Data Methodology: This data is aggregated from leading enterprise AI startups, including private data shared with us by companies for the purposes of this report, publicly available data, and anonymized data analyzed from the thousands of conversations we at a16z have had with startups and large enterprises.