Qianxun AI has once again accelerated its funding pace.

On April 7, 2026, Qianxun AI announced the completion of a new round of financing, raising 1 billion yuan. This round was jointly led by Shunwei Capital and Yunfeng Capital, with significant participation from Dawnview Capital, a leading RMB fund, Galaxy Yuanhui, Turing Fund, New Ding Capital, Gengxin Capital, and others.

This is its second major funding round within 30 days. In February, the company completed a financing of nearly 2 billion yuan, bringing the combined total to 3 billion yuan.

Even more interestingly, this round featured an extremely buzzworthy combination: Lei Jun (Shunwei) + Jack Ma (Yunfeng) co-leading an investment in the embodied AI track for the first time.

In the past, each has bet on key cycles such as mobile internet, e-commerce, smart hardware, and cloud computing. Their joint investment in robotics, and specifically in the still-early field of embodied AI, indicates that this direction is moving from technological imagination to capital consensus, and is entering a knockout stage in which giants and highly concentrated capital determine the rankings.

Qianxun AI was founded in January 2024 by serial robotics entrepreneur Han Fengtao, top AI scientist Gao Yang, and robotics overseas expansion pioneer Zheng Lingyin.

Founder and CEO Han Fengtao previously served as Co-founder and CTO of Rokae Robotics, leading the delivery of nearly a hundred robot models, possessing profound engineering and mass production capabilities. Co-founder Gao Yang graduated from the University of California, Berkeley, studying under computer vision master Trevor Darrell, and is currently an assistant professor at the Tsinghua University Institute for Interdisciplinary Information Sciences. The team he leads open-sourced the Spirit v1.5 model, which surpassed the US leading model Pi0.5 on the RoboChallenge leaderboard, becoming the first Chinese open-source embodied model to top the list. Co-founder Zheng Lingyin is a pioneer in the overseas expansion of industrial robots, having built an overseas division from 0 to 1, leading the team to deeply cultivate multiple overseas markets and quickly achieve commercial results.

The three founders cover the three core capabilities of AI, robotics, and commercialization respectively, together forming a rare "hexagonal warrior" (all-rounder) team in the industry. This is also the underlying confidence behind securing 3 billion yuan in funding within 30 days and the heavy investment from Shunwei Capital and Yunfeng Capital. Such a combination has given Qianxun AI world-class technological foresight and commercialization genes from its inception.

Han Fengtao once pointed out that in 2026, the competition is about data scale and model performance. The most important thing this year is not expanding scenarios, but making the embodied model rank among the global Top 3. To achieve this, there must be enough money in the bank.

Therefore, the blitzkrieg-style continuous financing is essentially trading capital density for a time advantage: quickly piling up resources, widening the performance gap, and locking in a leading position early. Meanwhile, existing shareholders continuing to invest in this round means that investors have shifted from wait-and-see validation to accelerated betting.

So, what exactly did Qianxun AI rely on to get this accelerated entry pass? How deep has its moat been dug?

The Underlying Logic of Capital Betting: A Path More Like Large Models is Validated

Why is capital willing to keep investing? The reason: the model has delivered interim results.

In January of this year, Qianxun AI open-sourced the embodied model Spirit v1.5. In public evaluations, this model directly surpassed the then strongest open-source model, Pi0.5.

But what most impressed capital was the inflection point of the capability curve.

Spirit v1.5 has already demonstrated relatively stable zero-shot generalization capabilities—it can complete a series of complex operations like wiping, opening/closing hinges, and handling flexible objects without additional training.

In other words, robots are beginning to not just learn one task, but have acquired the ability to transfer across tasks, showing the potential for embodied AI to liberate human productivity.

Behind this lies a technical path highly similar to Large Language Models (LLM): make the model larger, feed it enough data, iterate continuously, and then believe in the "emergence" of capabilities.

Specifically, Spirit v1.5 is an end-to-end VLA (Vision-Language-Action) unified model. It does not obsess over reconstructing every detail of the world, nor does it emphasize an explicit intermediate world-simulation layer; instead, it directly learns the mapping from perception to action.

The training method is also very LLM-like, except that text data is replaced with robot data. Massive internet video is first used for pre-training to establish a basic understanding of the world, and real interaction data is then used for alignment: generalization ability first, specific tasks second.

As a result, it achieved stronger generalization performance with less computing power and a smaller parameter scale.

Just a few days ago, this path also received "synchronized resonance" from Silicon Valley counterparts.

On April 3, Silicon Valley embodied AI company Generalist AI released the foundation model GEN-1, using 500,000 hours of real physical interaction data to validate the Scaling Law in the field of embodied AI. How powerful is the effect?

These robots raised the average success rate across multiple physical tasks from 64% to 99%. Their execution speed is almost as fast as a human's, about 3 times that of the most advanced existing systems, and they can also improvise on the spot. Even more striking, acquiring each capability required only about 1 hour of robot data.

Company CEO Pete Florence pointed out that what is happening now in robotics is similar to when people opened GPT-3 and asked it to write a brand new limerick.

Similar observations have also been verified by the Qianxun team. "Our team also discovered the Scaling Law in the field of embodied AI; for every 10x increase in data, the result gains an extra '9'," Gao Yang once said, describing the steepness of this curve. "We are in the Scaling Law moment of embodied AI. Because robot data is harder to obtain, I think the GPT-4 for robots will take longer, maybe 4-5 years."
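Gao Yang's rule of thumb can be written down as simple arithmetic. The sketch below is an illustration only, not Qianxun's actual fitted curve: it assumes that "one extra 9 per 10x data" means the failure rate shrinks in inverse proportion to data volume, and that the rule interpolates smoothly between 10x steps. The function names `nines` and `projected_success` are hypothetical.

```python
import math


def nines(success_rate: float) -> float:
    """Count the 'nines' in a success rate: 0.9 -> 1, 0.99 -> 2, 0.999 -> 3."""
    if not 0.0 <= success_rate < 1.0:
        raise ValueError("success_rate must be in [0, 1)")
    return -math.log10(1.0 - success_rate)


def projected_success(base_success: float, data_multiplier: float) -> float:
    """Project the success rate after scaling data by `data_multiplier`.

    Assumption (not from the article): one extra nine per 10x data is
    equivalent to the failure rate falling as 1 / data_multiplier.
    """
    if data_multiplier <= 0:
        raise ValueError("data_multiplier must be positive")
    failure = (1.0 - base_success) / data_multiplier
    return 1.0 - failure


# A model at 90% success (one nine) with 10x the data reaches two nines.
print(round(projected_success(0.90, 10), 4))  # 0.99
print(round(nines(0.99), 2))                  # 2.0
```

Under this reading, each additional nine costs ten times as much data as the previous one, which is why the cost and scalability of data collection matter so much on this path.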

It can be said that capital is betting on a technical route that has been preliminarily validated and that also offers higher cost-effectiveness and greater potential for expansion.

Data Engine: The Key to the Path's Success

In the field of embodied AI, almost everyone has a consensus: data collection is a fundamental bottleneck.

Large models can consume the vast corpus available on the internet, but robots cannot: in the world of physical labor, there is no Wikipedia. On the surface everyone is competing on models, but the deeper competition is over the data engine. "To achieve scaling, we will spare no expense," Pete Florence said bluntly.

If one believes in the Scaling Law, what kind of data system can acquire data at low cost, expand continuously, and offer sufficient diversity?

Previously, general robot models with success rates above 90% relied on large-scale teleoperation datasets that are extremely expensive and difficult to scale (as at Physical Intelligence). Generalist AI, by contrast, developed "data hands": a two-finger wearable device worn on the wrist that turns a human hand into a robot-like gripper and thereby collects visual and sensory data.

As a result, the progress of GEN-0 and GEN-1 verified that this data engine can also achieve high-level mastery—they did not use robot data, but only used data generated by humans wearing low-cost wearable devices performing millions of activities.

Qianxun AI is also advancing a Scaling route centered on diversity.

In terms of hardware, Qianxun also chose a wearable solution, but went further. To let the model learn human-level fine manipulation, they adopted a three-finger design: the complete intelligent unit has 26 degrees of freedom, integrates a force sensor in each joint, and is equipped with a three-finger dexterous hand. The technical challenges also increased significantly, however: in wearable data collection, the three-finger structure involves more degrees of freedom, finer force-control requirements, and more complex action mapping.

Currently, Qianxun's wearable device has iterated to the fifth generation, with data usability increasing from 30% to 95%, while the cost has been compressed to about one-tenth of teleoperation.
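The usability figure can be turned into a quick back-of-the-envelope calculation. This minimal sketch assumes that "data usability" means the fraction of collected hours that can actually be used for training, which the article does not define; the function names are hypothetical, and the "one-tenth of teleoperation" cost claim is not modelled because its baseline is not given.

```python
def usable_hours(collected_hours: float, usability: float) -> float:
    """Hours of training-ready data obtained from a given collection effort."""
    if not 0.0 < usability <= 1.0:
        raise ValueError("usability must be in (0, 1]")
    return collected_hours * usability


def usable_gain(old_usability: float, new_usability: float) -> float:
    """How many times more usable data each collected hour now yields."""
    if old_usability <= 0:
        raise ValueError("old_usability must be positive")
    return new_usability / old_usability


# From the article: usability rose from 30% to 95% across device generations.
print(round(usable_gain(0.30, 0.95), 2))  # 3.17
```

In other words, at a constant cost per collected hour, the cost per usable hour would fall to roughly a third of its earlier level from the usability improvement alone.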

It is important to note that, unlike Generalist AI's complete reliance on wearable data, Qianxun is building a multi-source fusion data engine.

In the pre-training phase, in addition to a large amount of wearable data, Qianxun AI also integrates internet videos to acquire common sense and basic capabilities. Teleoperation data from real machines is then introduced for fine-grained SFT (supervised fine-tuning) to improve the model's performance on actual tasks. Finally, the model is further optimized through reinforcement learning: it continuously rolls out in real environments, generating new data that is fed back into the model.
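The three-stage recipe described above can be summarized as an ordered configuration. This is only a schematic sketch of the stage ordering and data sources named in the article; the `Stage` class, `PIPELINE` list, and `run_pipeline` helper are hypothetical names, and nothing here reflects Qianxun's actual training code.

```python
from dataclasses import dataclass
from typing import Any, Callable, Sequence, Tuple


@dataclass(frozen=True)
class Stage:
    name: str
    data_sources: Tuple[str, ...]
    purpose: str


# Stage order and data sources as described in the article.
PIPELINE = (
    Stage("pre-training", ("wearable data", "internet videos"),
          "common sense and basic capabilities"),
    Stage("SFT", ("real-machine teleoperation data",),
          "performance on actual tasks"),
    Stage("reinforcement learning", ("roll-outs in real environments",),
          "continuous optimization on newly generated data"),
)


def run_pipeline(model: Any,
                 train_stage: Callable[[Any, Stage], Any],
                 stages: Sequence[Stage] = PIPELINE) -> Any:
    """Pass the model through each stage in order; each stage builds on the last."""
    for stage in stages:
        model = train_stage(model, stage)
    return model


# Usage with a stand-in "model" that simply records the stages it has seen.
history = run_pipeline([], lambda m, s: m + [s.name])
print(history)  # ['pre-training', 'SFT', 'reinforcement learning']
```

The point of the ordering is that broad, cheap data comes first and narrow, expensive data comes last, mirroring the "generalization first, specific tasks second" logic of LLM training.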

So far, Qianxun has acquired over 200,000 hours of real interaction data, sourced from internet videos, teleoperation, wearable collection, and other channels, and this number is still growing rapidly, expected to exceed 1 million hours in 2026. By April 2026, Qianxun AI's data collection team will also reach a scale of one thousand people.

It is worth mentioning that Qianxun's understanding of data has also undergone a fundamental shift.

They are no longer fixated on the meticulously crafted scripted data that is mainstream in the industry, and have turned to a more open and diverse collection paradigm: rather than strictly prescribing the action path, collection centers on the task goal and lets the execution process unfold naturally, allowing failures, spills, and interruptions before the task is carried through to completion.

The change this brings is fundamental: the model learns not how to do one specific thing, but how to handle similar situations. At the same data scale, this data distribution significantly improves the model's transfer efficiency while reducing its reliance on computing power.

"Laying Eggs Along the Way": Real Scenario Data Feeds Back into the Model

In Qianxun's data engine, what truly determines whether the flywheel can spin is not just the data source, but the ability to continuously roll out in real environments.

Han Fengtao once summarized that moving towards real scenes is to obtain the fuel (data) for model evolution. Commercialization is to make this acquisition process sustainable and scalable.

Behind this is a clear divergence between the Chinese and US paths. In the US, some companies can invest long-term in the foundation model itself, trading time for a higher capability ceiling; in China, without a demo or signs of real-world deployment, it is difficult to obtain continuous financing. Most companies that survive, or even thrive, tend to choose a middle path.

The road to general AI is all the more a long slope with thick snow; you can't wait for the model to mature before finding applications. Only by first putting robots into real production environments and having them take part in real business operations can the massive amounts of data those operations generate be fed back into the model for continuous evolution.

As the first domestic embodied AI company to take the diverse data collection route from theory to engineering and scale, and to complete dual verification in real business scenarios, Qianxun AI insists on "laying eggs along the way". It starts with controllable scenarios, prioritizing the industrial and service sectors, two areas with relatively stable structures, clear task boundaries, high profits, and willingness to pay, so as to verify model capabilities while supporting company operations.

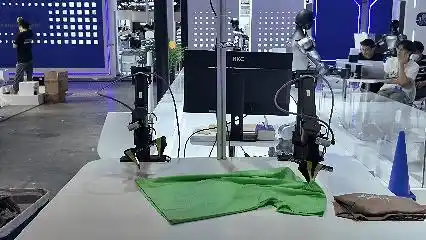

For example, in retail scenarios, cooperation with JD.com (also an investor) is deepening. "Xiao Mo" has entered JD MALL and started work as a barista. While completing service tasks, the robot also collects multi-modal perception data, joint motion trajectories, and fine-grained force feedback.

These "expert-level data" from real retail environments will be directly used for the training and fine-tuning of the embodied model, forming a positive closed loop of "data collection — model iteration — capability improvement".

Qianxun AI robots have officially started working at JD MALL, serving as baristas.

The two parties also plan to further expand embodied AI to more retail sub-sectors, including digital appliance shopping guides, automated inspection and tour guidance, and automated cleaning. Meanwhile, JD Pharmacy is also regarded as a core breakthrough point; robots will participate in high-precision tasks like automatic sorting and precise dispensing, exploring unmanned smart pharmacy solutions.

Before entering JD MALL, Qianxun had already completed a round of verification in industrial environments. "Xiao Mo" has joined CATL's power battery pack production line, where it handles the final functional test before packs come off the line. So far, it has completed plugging operations for over 1,000 batteries with a success rate holding above 99%, and its cycle time is approaching that of skilled workers.

"Xiao Mo" has already walked onto the power battery pack production line

Embodied AI will not, in the short term, reach a moment when deployment alone decides winners and losers. But a clearer trend has already emerged: competition is no longer just about who has more data, but is shifting towards who can obtain real scenario data more efficiently, and who can build a data-model flywheel that turns at a higher frequency.

Having completed an interim leap in valuation, Qianxun AI will on the one hand bet on the model's generalization ability, and on the other continue to amplify its data scale advantage, using high-frequency feedback from the real world to accelerate model iteration.

Looking back, GPT-2 in 2019 might not have seemed special, but as scale continued to expand, the returns from generalization ability grew rapidly. Now, the same inflection point is being replayed in the field of robotics.

This article is from the WeChat public account "机器之心" (ID: almosthuman2014), author: Sia