Zhejiang University Research Team Proposes New Approach: Teaching AI How the Human Brain Understands the World

A research team from Zhejiang University published a paper in *Nature Communications* challenging the prevailing notion that larger AI models inherently think more like humans. They found that while model performance on recognizing concrete concepts improved as parameters increased (from 74.94% to 85.87%), performance on abstract concept tasks slightly declined (from 54.37% to 52.82%) in models like SimCLR, CLIP, and DINOv2.

The key difference lies in how concepts are organized. Humans naturally form hierarchical categories (e.g., grouping a swan and an owl into "birds"), enabling them to apply past knowledge to new situations. Models, however, rely heavily on statistical patterns in data and struggle to form stable, abstract categories.

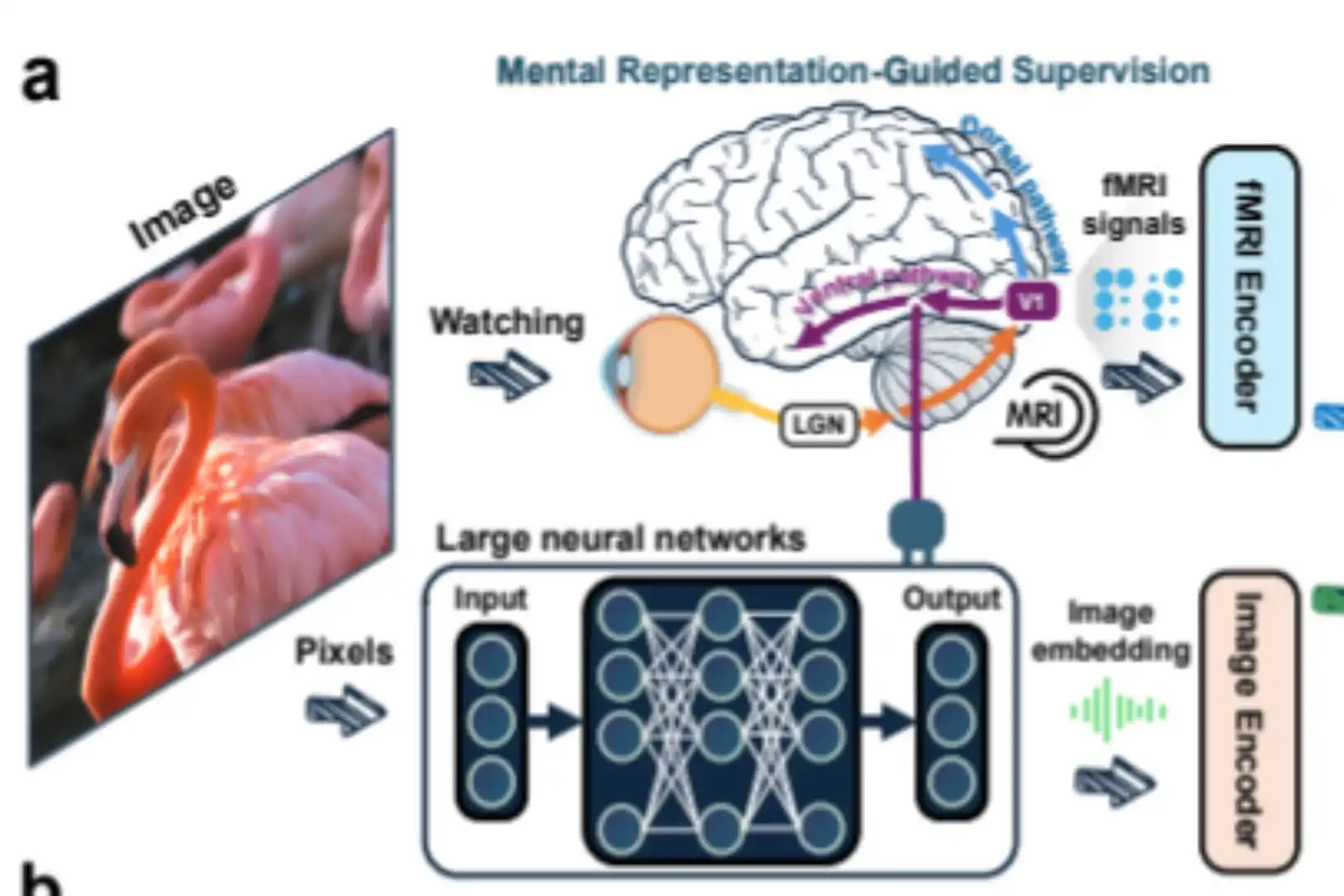

The team proposed a novel solution: using human brain signals (recorded when viewing images) to supervise and guide the model's internal organization of concepts. This method, termed transferring "human conceptual structures," helped the model learn a brain-like categorical system. In experiments, the model showed improved few-shot learning and generalization, with a 20.5% average improvement on a task requiring abstract categorization like distinguishing living vs. non-living things, even outperforming much larger models.

This research shifts the focus from simply scaling model size ("bigger is better") to designing smarter internal structures ("structured is smarter"). It highlights a new pathway for developing AI that possesses more human-like abstract reasoning and adaptive learning capabilities.

marsbit04/05 04:41