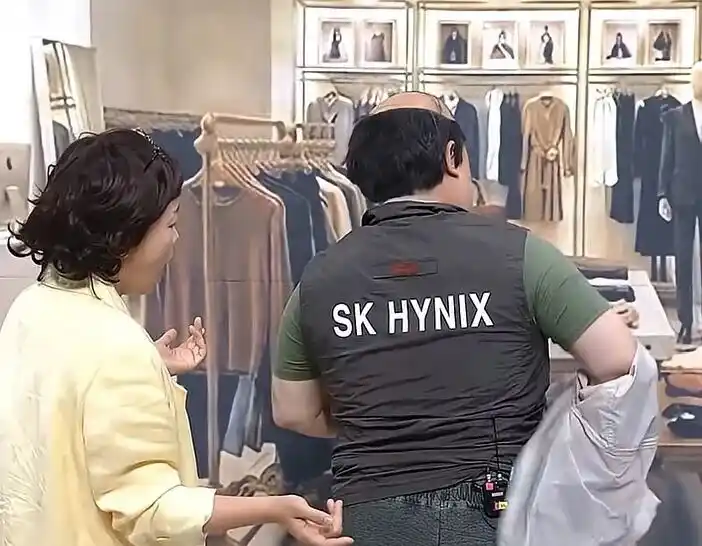

The King of Blind Date Attire in Korea: How SK Hynix Made a Comeback Against Samsung

In South Korea's dating scene, SK Hynix employees are now highly sought after, a status shift fueled by the company's astronomical profits and by employee bonuses that are projected to reach up to 6.1 million RMB per person by 2027. This marks a dramatic reversal for the long-time second-place player in memory semiconductors, which has now surpassed its rival Samsung in annual operating profit.

The turnaround story began in 2008, when a struggling Hynix, emerging from bankruptcy restructuring, took a risky bet by agreeing to develop High Bandwidth Memory (HBM) with AMD. At the time, HBM was a costly, complex technology with no clear market beyond high-end graphics cards. Major players such as Samsung, which was pursuing its own HMC technology, declined. For Hynix, whose only core business was memory, it was a gamble born of necessity.

The pivotal moment came in 2012 when SK Group Chairman Chey Tae-won acquired Hynix. Defying industry downturns, he invested heavily in R&D and fabrication, sustaining the HBM project through over a decade of commercial uncertainty and internal challenges. A key break occurred around 2016-2017 when Samsung faced production issues supplying HBM2 for Google's TPU, allowing SK Hynix to gain a crucial foothold in the data center market.

The AI explosion that followed ChatGPT's launch in 2022 was the catalyst, turning HBM into a critical bottleneck for AI accelerators like NVIDIA's GPUs. By 2025, SK Hynix had captured 62% of the global HBM market, leaving Samsung at 17%. For the first time, SK Hynix's annual operating profit exceeded Samsung's.

Analysts point to the "innovator's dilemma" to explain Samsung's miss: its vast, successful business portfolio made it risk-averse, preventing an all-in bet on the initially niche HBM technology. In contrast, SK Hynix, as a challenger with its back against the wall, had no choice but to commit fully.

The story highlights how Korea's chaebol system allows for ultra-long-term bets beyond quarterly pressures. However, SK Hynix's lead isn't guaranteed. Samsung is aggressively catching up on HBM4, and challenges like customer concentration (heavy reliance on NVIDIA) and technical hurdles in advanced packaging remain. The narrative underscores a market truth: the greatest alpha often comes from betting on uncertain, long-term directions others dismiss, much like HBM in 2008.

marsbit 05/11 11:08