Claude is literally putting a knife to users' throats this time!

Claude starts mandatory real-name verification: identity check activated, ID submitted, selfie taken, account ban risk immediately reaches new heights!

Many people originally held onto a sliver of hope, thinking the platform would leave some wiggle room, that there was still some cover.

No need to guess anymore: Anthropic has blocked that path in plain sight!

For users, the most terrifying part of this wave isn't the hassle of the process or the extra few minutes spent.

The truly fatal part is that account risk has instantly shifted from something vague to cards laid face up! The platform has put verification, review, and enforcement all on the table!

In one sentence, they're not even pretending anymore!

The official announcement spells it all out

Every move targets 'high-risk users'

Anthropic's official announcement appears calm on the surface, even carrying a tone of standardized compliance.

Things like preventing abuse, enforcing usage policies, fulfilling legal obligations – the wording seems fine.

But what users see isn't these nice words.

What they see is that the platform has publicly pushed its identity recognition capabilities a huge step forward!

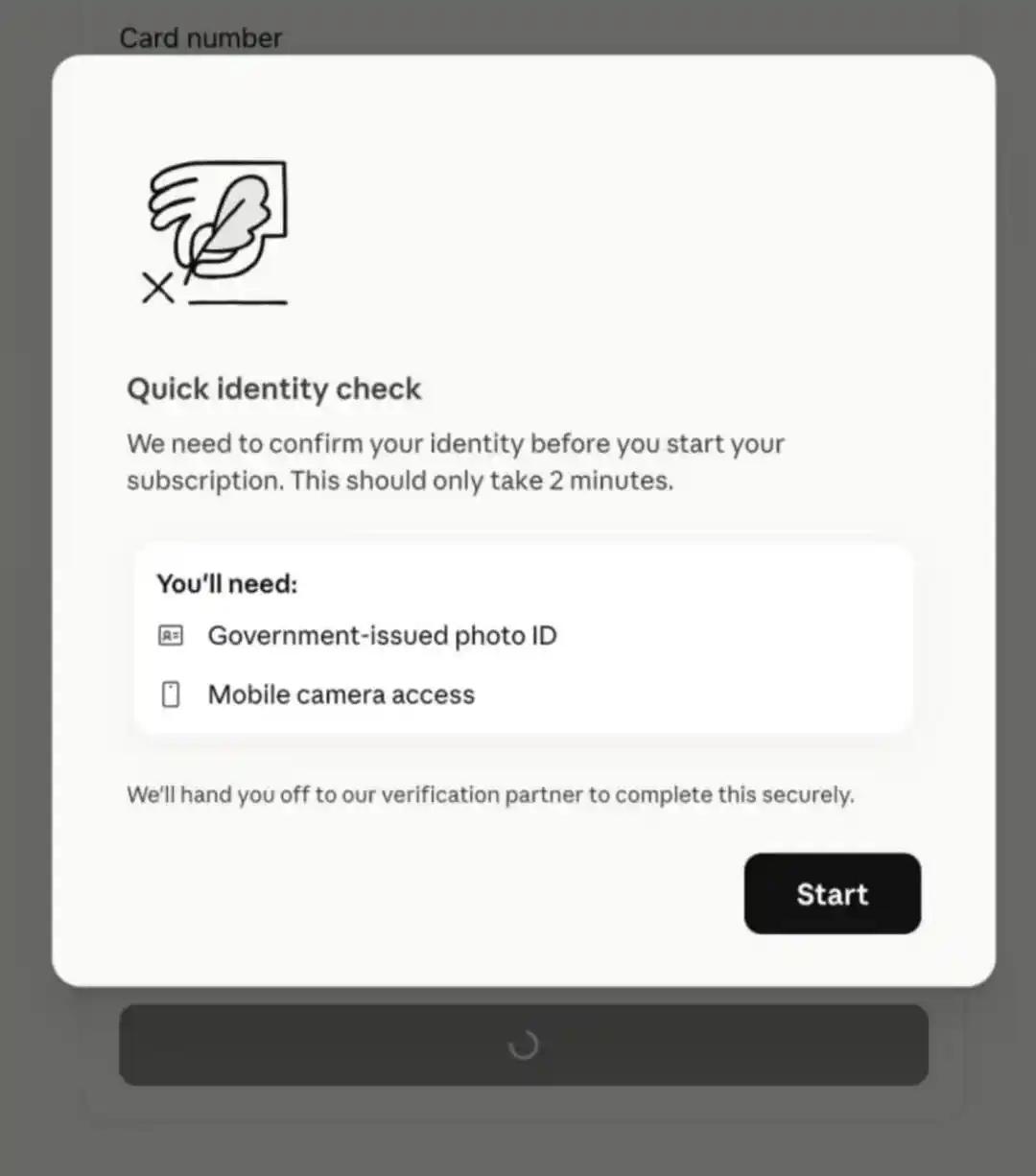

The announcement clearly states that identity verification may be triggered for some use cases, certain features, platform integrity checks, and other safety and compliance measures.

The required materials are also stated without any ambiguity: a government-issued photo ID, plus a real-time selfie, and it must be the physical original.

Screenshots won't work, scans won't work, photos of photos won't work, digital IDs won't work either!

With these requirements laid out, many people's hearts have already sunk.

What's even harsher comes next.

Anthropic itself writes that accounts may still be disabled even after completing verification.

Reasons include repeated violations of usage policies, creating accounts from unsupported locations, violating Terms of Service, and use by those under 18.

This passage is devastating!

First, they ask for your identity documents, then immediately lay out the reasons for banning accounts.

Everyone understands what this means.

The platform is no longer satisfied with simply identifying whether you are a person; it wants stronger confirmation capabilities, more convenient review capabilities, and more direct enforcement capabilities!

And the most infuriating part is that the boundaries are deliberately written vaguely.

What are 'some use cases'? What are 'certain features'? What are 'other safety and compliance measures'?

Trigger this today, trigger that tomorrow; how far the scope expands and how much the intensity increases, users have no certainty at all!

This is what infuriates users the most!

The platform now holds a stronger set of identification tools, and the risk of account bans hanging over users' heads skyrockets accordingly.

The knife that was once at a distance is now right in your face!

Once Persona gets involved

The situation changes completely

Many people's first reaction upon seeing Persona might be: just an identity verification vendor that's easy to bypass.

That's thinking too simply.

The work Persona does is far beyond just creating a page, receiving a photo, and running a process.

It builds complete identity verification infrastructure, responsible for setting up the entire chain of "Who are you," "Is it really you," "Are you worth letting through," "Can we track you down if something happens"!

Once this type of company is integrated, the meaning is completely different.

Public information shows Persona has long worked with other leading organizations, including large-model companies like OpenAI.

This isn't its first time handling high-intensity identity reviews, nor its first time serving high-risk, high-compliance business scenarios.

Anthropic's choice this time sends a clear signal: what Claude is deploying is not a temporary verification, not a small-scale test, but a mature gate system that can be expanded and enforced at scale!

This is why many people got nervous immediately.

In the past, many things could survive in the cracks; many identification actions were costly, executed poorly, and the systems weren't that strong.

Now it's different. Once mature infrastructure is integrated, the areas that could still be handled vaguely, delayed, or slipped through using gaps will be gradually crushed!

Then there's KYC.

This term used to appear mostly in finance, payments, and trading platforms. Many felt it was the playbook of banks and exchanges, far removed from AI products.

Now Claude is heading straight down this path.

KYC boils down to one sentence: first, nail down who you are, then discuss what you can do, then decide how to handle you if problems arise!

This is why everyone is so angry.

Claude originally felt, at the very least, like a model platform, a tool platform, a productivity platform.

With this identity verification system now in place, the platform's logic is clearly changing.

It cares more and more about who you are, more and more about how you use it, more and more about whether you fit its definition of safety and compliance!

For users, this change is too dangerous!

Because once it reaches this step, the subsequent tightening will only become more and more convenient!

Look at the user comments

You'll know how furious users are this time!

A few comments in the material almost completely convey the emotion.

Someone starts by cursing:

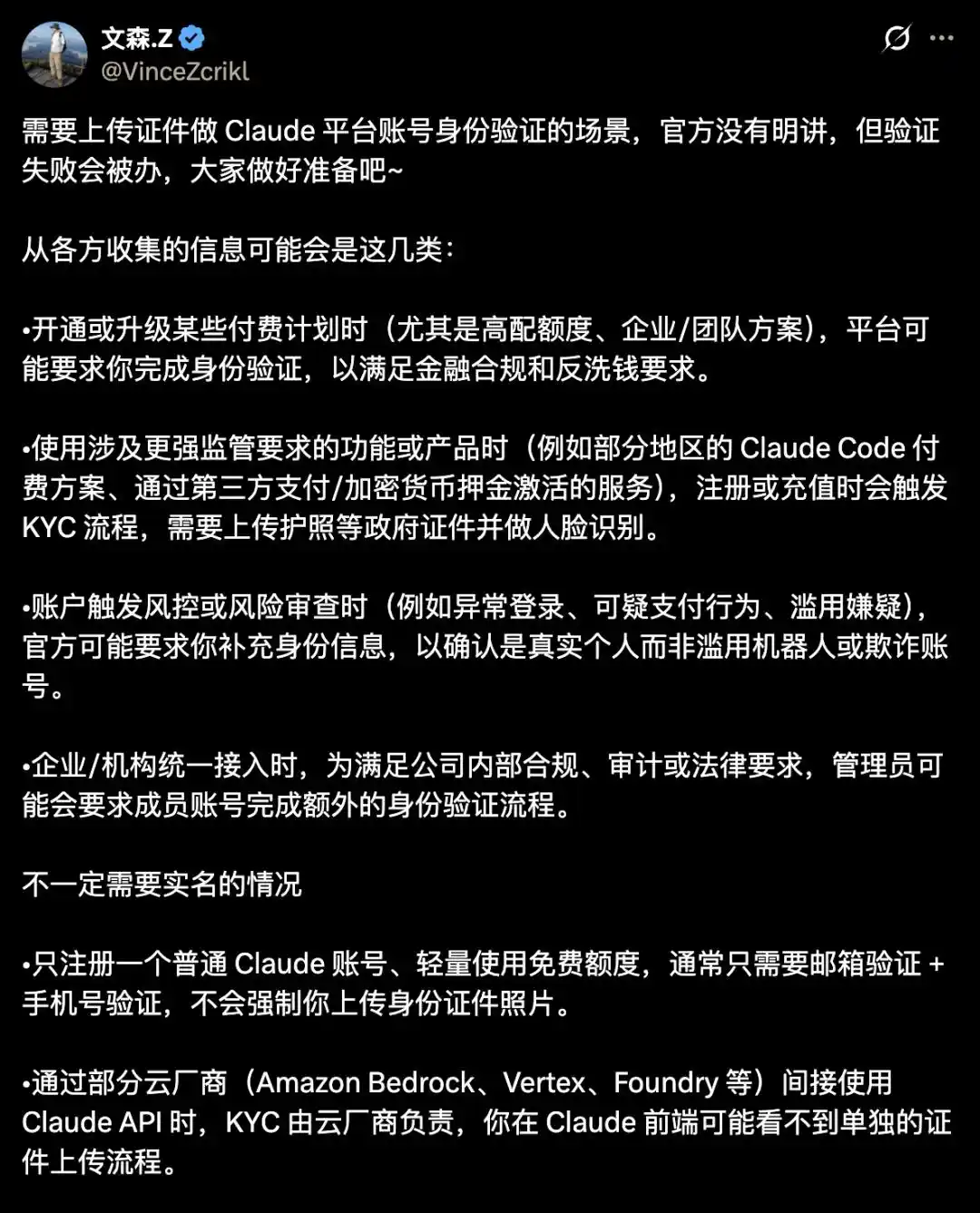

Anthropic is starting to act inhuman: it wants to roll out identity verification and requires a passport plus a selfie to use some features.

https://x.com/yangyi/status/2044299899777495249

What people fear is that after submitting the materials, the risk doesn't decrease but actually goes up!

The platform holds more complete information, making subsequent risk control, identification, and enforcement even harsher!

This is basically a nightmare for users in mainland China.

Their access was already precarious, and now they have to submit IDs as well.

Verification from unsupported regions will likely fail, and even if passed, it might not be safe, still liable to be banned later for various reasons.

This is the reality.

Many people understood it in their hearts before, even if they didn't say it: Claude never truly gave users stable expectations.

Now even this ambiguous space is being compressed; who wouldn't explode with anger!

Look at another prophetic comment from half a year ago:

Just add KYC already.

And now it's really added.

https://x.com/realNyarime/status/2044307566432530694

What annoys everyone the most isn't just the platform tightening up.

What annoys everyone the most is that resentment over Anthropic's long-standing pattern of intermittent availability, repeatedly raised thresholds, and constant user anxiety has been building for far too long!

This identity verification rollout has set that fire fully ablaze!

Others judge the situation realistically: only accepting government documents from supported countries and regions means a large number of relay (proxy) services will likely run into trouble next.

https://x.com/m1ssuo/status/2044300993547120784

This isn't alarmist.

Many of the practices along the ecosystem chain were built on a fragile foundation.

With Claude now dropping the real-name verification gate, many services living in the gaps will be severely hit!

https://x.com/VinceZcrikl/status/2044335219432677556

Another comment is particularly short:

Max is in danger.

https://x.com/VinceZcrikl/status/2044336700957298818

These four words carry significant weight.

Because those most afraid of this move are precisely the heavy users, high-volume users, those who use Claude as their core productivity tool.

The more deeply you use it and the more you invest, the more you fear the platform suddenly popping up a real-name verification and then casually wiping out your entire workflow in one go!

Others say:

A steady stream of customers is being handed to the competition. Opus 4.6 has already been acting brain-damaged lately, and now Codex looks even more appealing.

https://x.com/SeptenAI/status/2044308994370744432

The platform increases pressure, degrades experience, and makes users anxious about account security.

Then why should users stick around?

Voting with feet has always been the fastest!

Don't wait for the Sword of Damocles to fall

Cut losses now if you need to!

Pretending not to see it now, and continuing to tie your entire workflow, client projects, and core data to a single Claude account, is ridiculously high risk!

First, reducing the risk of being banned.

The Anthropic help center is very clear: subscription plans like Free, Pro, Max, Team, Enterprise serve Claude's native applications and normal usage scenarios.

You are buying permission to use the official product, not a universal traffic pool, let alone an interface for external distribution or connecting to various third-party projects!

If you want to connect third-party software, open-source projects, or services, the official path is also clear: use API keys, use Claude Console, or use supported cloud platforms.

This sentence is particularly crucial, many people must read it carefully!

Because the easiest way to get into trouble is using personal subscriptions as a universal interface.

Do not use personal Claude subscriptions to run reverse proxies, do not touch shared pools, do not engage in sub2api, do not run tasks for multiple people, do not run tasks for clients.

Especially do not proxy Claude subscription plans into projects like OpenClaw or Hermes Agent to burn through quota!

These practices are now high-risk areas!

Anthropic's attitude is clear: disguising identity, routing third-party traffic through subscription quotas, and violating terms and policies are all prohibited and may be subject to enforcement action.

This is the point many people need to wake up to the most!

You can put away the wishful thinking.

Not getting caught before doesn't mean it won't happen in the future.

Now that the platform is pushing identity verification and risk control forward, many operations that could previously slip through will become precision targets!
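For anyone moving a third-party integration off a personal subscription, the API-key route mentioned above can be sketched roughly as follows. This is a minimal illustration, not Anthropic's official client: it only builds a request for the public Messages API endpoint using the Python standard library and does not send it. The function name `build_request` is made up for this example, and the model ID is left as a parameter because valid IDs have to be looked up in the official documentation.

```python
import json
import urllib.request

# Public Messages API endpoint; authentication uses an API key, not a subscription login.
API_URL = "https://api.anthropic.com/v1/messages"


def build_request(prompt: str, api_key: str, model: str) -> urllib.request.Request:
    """Build (but do not send) a Messages API request authenticated with an API key.

    `build_request` is a hypothetical helper for illustration only.
    `model` must be a valid model ID taken from the official documentation.
    """
    body = {
        "model": model,
        "max_tokens": 256,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,               # key created in the Console
            "anthropic-version": "2023-06-01",  # required API version header
            "content-type": "application/json",
        },
        method="POST",
    )
```

To actually send it, one would read the key from an environment variable (for example `ANTHROPIC_API_KEY`) rather than hard-coding it, and pass the request to `urllib.request.urlopen`. Usage billed this way runs against API credit, separate from any Free, Pro, or Max subscription quota.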

Next, reducing losses if banned.

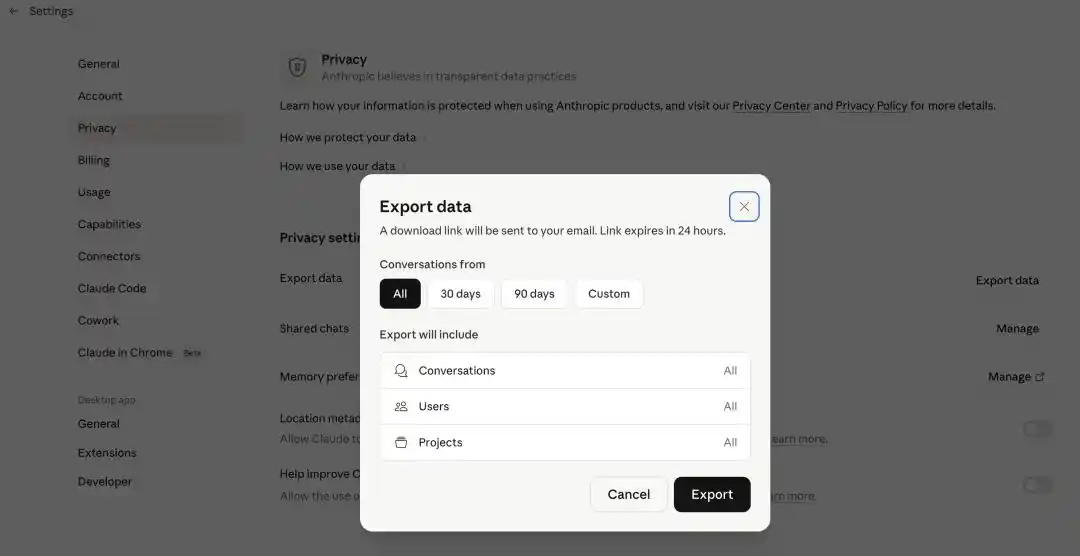

Don't hesitate on this action, do it now, immediately, export all Claude data that can be exported!

Conversation history, prompts, project context, client communication content, long-accumulated work traces – anything important, back it up!

This is what people most easily neglect, always assuming that as long as the account is there, the data is there.

When you really get banned, you realize the real pain isn't losing the subscription fee; the real pain is all historical context being wiped clean and the workflow having its power cut on the spot!

That's the loss that will truly break people!
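Once the export has been downloaded and unpacked, it is worth keeping a dated copy somewhere outside the account. The sketch below is one possible way to do that and makes no assumption about what files the export contains: it simply zips whatever is in a folder and adds a checksum list so later corruption can be detected. The function names, the archive name `claude-export-<timestamp>.zip`, and the `SHA256SUMS` manifest are choices made for this example, not anything the platform defines.

```python
import hashlib
import zipfile
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def archive_export(export_dir: Path, backup_dir: Path) -> Path:
    """Zip every file under export_dir into a timestamped archive in backup_dir.

    Hypothetical helper for illustration. Keep backup_dir outside export_dir,
    otherwise older archives would be swept into the new one.
    """
    backup_dir.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    archive = backup_dir / f"claude-export-{stamp}.zip"

    files = sorted(p for p in export_dir.rglob("*") if p.is_file())
    manifest = []
    with zipfile.ZipFile(archive, "w", zipfile.ZIP_DEFLATED) as zf:
        for path in files:
            rel = path.relative_to(export_dir).as_posix()
            zf.write(path, rel)
            manifest.append(f"{sha256_of(path)}  {rel}")
        # Checksum list stored inside the archive, one "<digest>  <path>" per line.
        zf.writestr("SHA256SUMS", "\n".join(manifest) + "\n")
    return archive
```

Calling `archive_export(Path("claude-export"), Path("backups"))` after each export leaves a series of dated archives; copying them to a second drive or another storage provider means a ban on the account does not take the history with it.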

Time to reconsider ChatGPT too

Ultimately, users need tools that work, not having to anxiously serve the platform's whims every day.

The questions that matter: who is more stable, who can provide long-term support, whose business logic is clearer, and who is more worthy of holding your core workflows?

OpenAI has at least one realistic point.

Altman is a realist, a businessperson.

Markets that can make money, he serves.

Users that can be served, he accepts.

The business logic is on the table; making money is making money, the product is the product, without all the extra posturing drama!

This is already very important for users.

What everyone wants is stable delivery, long-term availability, clear rules, and not having to worry every second after paying whether something will go wrong!

Now look at the models themselves.

Claude's recent swings in reputation are visible to all.

Complaints about the model getting dumber keep growing, and Opus 4.6 has drawn a lot of criticism; users are already starting to lose confidence in it in many scenarios.

Conversely, GPT-5.4 Pro now looks more and more like the more stable, stronger option with a lower hallucination rate.

For actually getting work done, handling complex tasks, and serving as a long-term workhorse, it's becoming more appealing!

This is also the dumbest part of Anthropic's move this time.

On one hand, it is implementing real-name verification, making users furious and anxious. On the other hand, the model experience is still losing its reputation.

Then users switching to ChatGPT is almost natural!

It's perfectly normal for many people to reposition ChatGPT as their main tool next.

This is also the most realistic risk-avoidance action!

Every move Claude makes this time may ultimately hit its own market share, "lifting a rock only to drop it on one's own feet"!

Appendix: Full Text of Claude's Chinese Announcement

《Identity Verification on Claude》

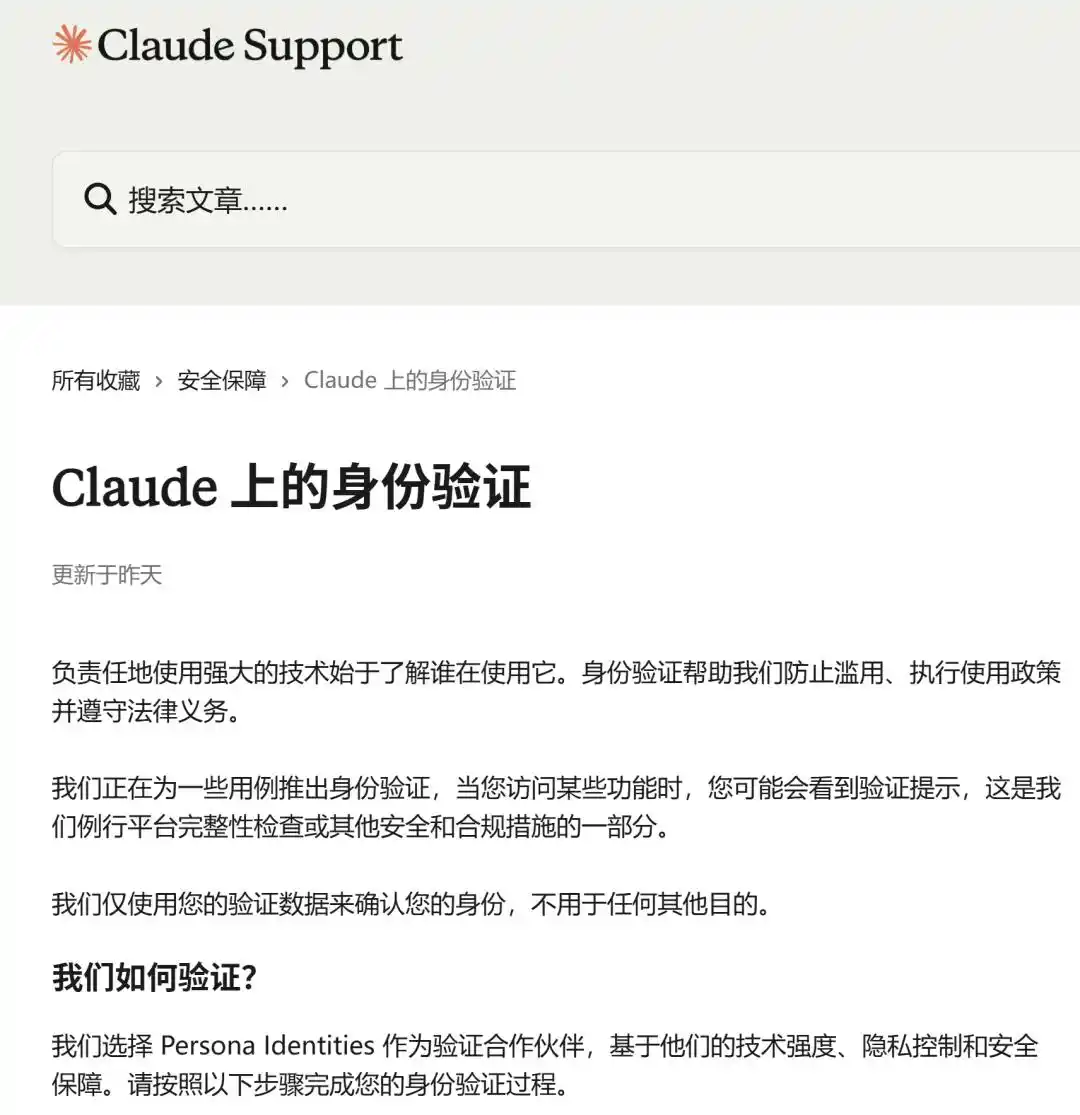

Responsible use of powerful technology starts with knowing who is using it. Identity verification helps us prevent abuse, enforce usage policies, and comply with legal obligations.

We are rolling out identity verification for some use cases. You may see a verification prompt when accessing certain features, as part of our routine platform integrity checks or other safety and compliance measures.

We only use your verification data to confirm your identity, not for any other purpose.

How do we verify?

We selected Persona Identities as our verification partner based on their technical strength, privacy controls, and security safeguards. Please follow the steps below to complete your identity verification process.

What you need to prepare

Before you start, please have the following ready:

Valid government-issued photo ID: Physical document, on hand

Phone or computer with camera: You may need to take a live selfie with your phone or use a webcam

A few minutes: Verification typically takes less than five minutes

Accepted ID types

We accept original, physical government-issued photo IDs from most countries. Common examples include:

Passport

Driver's license or state/provincial ID

National ID card

Your ID must be government-issued, clearly legible, in good condition, and contain your photo.

We do NOT accept:

Copies, screenshots, scans, or photos of photos

Digital or mobile IDs (e.g., mobile driver's license)

Non-government IDs: Student ID, employee badge, library card, bank card

Temporary paper IDs

How your data is protected

We know submitting an ID is a significant request, and we designed this process to protect your information at every step.

Anthropic is the data controller for your verification data. This means we set the rules for how data is used and retained. Persona processes data on our behalf, following our instructions.

Your ID and selfie are collected and stored by Persona, not on Anthropic's systems. Anthropic can access verification records via Persona's platform when needed—for example, to review an appeal—but we do not copy or store these images ourselves.

Persona is contractually restricted in how they use your data: only to provide and support verification, and to improve their fraud prevention capabilities. They must use industry-standard security controls to protect data and delete it per the retention periods we set and applicable law.

All data transmitted to Persona is encrypted in transit and at rest.

For full details on how we handle personal data, see our Privacy Policy.

What we are NOT doing

We do NOT use your identity data to train our models. Verification data is used only to confirm your identity and meet our legal and safety obligations.

We do NOT collect more information than we need. We only request the minimum information needed to verify your identity.

We do NOT share your identity data with anyone. Verification data remains only between you, Persona, and Anthropic, unless we are legally required to respond to valid legal process. Your verification data is never shared with third parties for marketing, advertising, or any purpose unrelated to verification and compliance.

What if my verification fails?

Verification can fail for many reasons: blurry photo, unclear document, expired ID, or technical issues.

If your verification is unsuccessful:

Retry. You will have multiple attempts during the verification process—most failures can be resolved by retaking the photo in better light or using a different government-issued photo ID.

Check your document. Ensure your ID is in good condition and clearly legible.

Contact us. If you've used all attempts and still cannot verify, please contact us via this form, and we will review.

Why was my account disabled after verification?

As part of our security process, we may disable accounts for various reasons:

Repeated violations of our Usage Policies

Creating an account from an unsupported location

Violation of Terms of Service

Use by someone under 18

If you believe your account was disabled in error, please fill out the appeal form and provide your account information so our security team can further investigate why your account was disabled.

Questions?

If you have any questions about identity verification, your data, or the verification process, please contact us via this form.

Reference:

https://support.claude.com/zh-CN/articles/14328960-claude-%E4%B8%8A%E7%9A%84%E8%BA%AB%E4%BB%BD%E9%AA%8C%E8%AF%81

This article is from the WeChat public account "新智元" (New Zhiyuan), author: 新智元, editor: 艾伦 (Allen)