Original Author: 0xJeff

Original Compilation: AididiaoJP, Foresight News

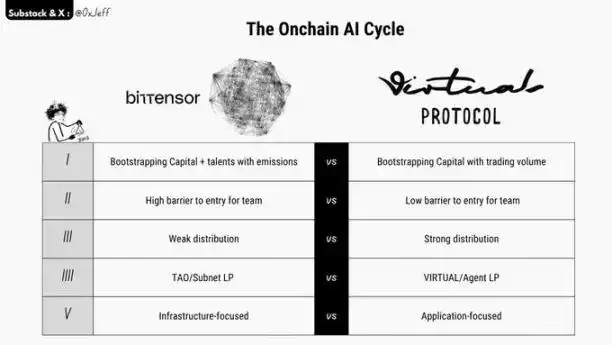

This article briefly compares Bittensor subnets and Virtuals agents to clarify their respective flywheel mechanisms, where they differ, and where they are alike.

I. Bootstrapping Capital and Talent Through Emissions vs. Bootstrapping Capital Through Trading Volume

Bittensor bootstraps subnet development through TAO emissions. Subnets bring in the most innovative projects (or revenue-generating businesses) and compete for a share of the 3,600 TAO distributed each day.

Subnets, in turn, incentivize their contributors (miners who perform tasks and validators who verify the miners' work) by emitting their own alpha tokens. Emissions and incentive alignment across stakeholders have been built into the design from the project's inception.
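To make the idea of subnets competing for a share of a fixed daily emission concrete, here is a minimal sketch of a pro-rata split. The 3,600 TAO figure comes from the article; the subnet names and the `weights` values are purely hypothetical, and the real Bittensor allocation rule may differ from a simple proportional split.

```python
DAILY_TAO_EMISSION = 3_600  # daily figure quoted in the article


def split_emissions(weights, daily_total=DAILY_TAO_EMISSION):
    """Split a fixed daily emission pro rata to each subnet's weight.

    `weights` is a hypothetical score per subnet; how Bittensor actually
    derives such scores is not modeled here.
    """
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return {name: daily_total * w / total for name, w in weights.items()}


# Hypothetical subnets and weights, for illustration only.
weights = {"subnet_a": 5.0, "subnet_b": 3.0, "subnet_c": 2.0}
shares = split_emissions(weights)

for name, tao in shares.items():
    print(f"{name}: {tao:.0f} TAO/day")
```

Because the daily total is fixed, one subnet can only increase its share at the expense of the others, which is what makes the competition zero-sum.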

Virtuals adopts a model similar to pump.fun, bootstrapping development through trading volume: high trading activity translates into capital accumulated by the agent project. Agent teams can also run their own emissions mechanisms to incentivize user participation.

In market cycles with strong speculative demand for tokens, this model has a significant advantage: teams can quickly accumulate capital and attract attention and market interest, which helps a project launch and grow.

II. High Entry Barrier vs. Low Entry Barrier (For Teams)

Launching a subnet on Bittensor requires substantial investment. Currently, acquiring a subnet slot requires 871 TAO (approximately $300,000), with the price fluctuating based on demand and the auction mechanism. This means subnet teams typically need mature ideas, clear plans, and solid execution capabilities.

To operate a subnet successfully, owners must ensure that the tasks or objectives they set actually advance the R&D of their AI product or solution, prevent miner fraud, make sure validators perform their verification duties effectively, generate revenue through business development and customer partnerships, and maintain investor confidence through buyback mechanisms.

The subnet token price needs to keep trending upward to attract more TAO inflow, increase the subnet's share of emissions, and thereby attract higher-caliber contributors to mine on it.

In contrast, launching an AI agent token on Virtuals has a lower barrier: there is no upfront cost, so new ideas can be tested with less capital.

Virtuals also has a "60-day program" that allows founders to test new ideas and issue tokens during this period. If product-market fit is not found within 60 days, the relevant funds are reclaimed, and investors can retrieve a portion of their invested capital.

III. Weaker Distribution Capability vs. Stronger Distribution Capability

Bittensor runs on its own blockchain, built with the Polkadot Substrate framework. Cross-chain bridging is difficult, DeFi infrastructure components are lacking, and the chain is not built on widely used environments such as the Ethereum Virtual Machine or Solana.

This results in a high entry barrier for the Bittensor ecosystem. The available learning materials are also full of complex terminology, which makes it harder for new users to get oriented. Consequently, its community consists mostly of technical professionals willing to invest time in deep research, with relatively low retail participation.

In comparison, Virtuals is easier to understand. Its team excels at marketing, branding, and distribution, and retail users can grasp concepts like AI agents, agent payments, and bots fairly intuitively.

Because Virtuals is deployed on Base, buying AI agent tokens is convenient. The path from learning about a project, to forming a bullish view, to making a purchase is short, which is a key reason Virtuals gained popularity so quickly from late 2024 into 2025 (earlier than Bittensor).

Bittensor is now gradually moving into the mainstream spotlight, helped by promotion from Jason, Chamath, Barry Silbert (DCG & Yuma), and the community. However, the process of buying subnet tokens remains relatively complex, and that problem has not been fundamentally resolved.

IV. TAO/Subnet Liquidity Pool vs. VIRTUAL/Agent Liquidity Pool

Bittensor and Virtuals share a key similarity in their liquidity pool flywheel mechanisms.

Investors who want to buy subnet alpha tokens must first hold TAO, so rising demand for alpha tokens pushes up the price of TAO.

Similarly, within the Virtuals ecosystem, rising demand for AI agent tokens will drive up the price of VIRTUAL.

If the core token (TAO or VIRTUAL) keeps circulating within the ecosystem rather than flowing out (for example, when project teams trade goods and services with one another and so retain value), the advantages of this mechanism become more pronounced.
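The pairing of a core token with each subnet or agent token can be illustrated with a toy liquidity pool. The sketch below assumes a fee-free constant-product pool (x * y = k), which is a common AMM design but is only an assumption here; the article does not specify the pricing rule used by either protocol. The `Pool` class and all reserve figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Pool:
    """Toy constant-product pool; an assumed model, not either protocol's code."""

    core: float   # reserve of the core token (TAO or VIRTUAL)
    token: float  # reserve of the subnet alpha token or agent token

    @property
    def price(self) -> float:
        """Spot price of one subnet/agent token, in core tokens."""
        return self.core / self.token

    def buy(self, core_in: float) -> float:
        """Deposit core tokens, withdraw subnet/agent tokens, keep x * y = k."""
        if core_in <= 0:
            raise ValueError("core_in must be positive")
        k = self.core * self.token
        new_core = self.core + core_in
        new_token = k / new_core
        received = self.token - new_token
        self.core, self.token = new_core, new_token
        return received


# Hypothetical reserves, for illustration only.
pool = Pool(core=1_000.0, token=100_000.0)
price_before = pool.price
received = pool.buy(100.0)   # a buyer spends 100 core tokens
price_after = pool.price

print(f"received {received:.2f} tokens")
print(f"price moved from {price_before:.4f} to {price_after:.4f} core per token")
```

In this toy model, every purchase of a subnet or agent token moves core tokens into the pool and raises the token's price. The sketch does not model the market for the core token itself; the effect on TAO or VIRTUAL described above comes from buyers first having to acquire the core token elsewhere.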

V. Infrastructure-Oriented vs. Application-Oriented

Bittensor subnets mostly focus on infrastructure or capital-intensive businesses, such as decentralized computing, inference, training, drug discovery, and quantum experiments.

Since Bittensor can provide over $10 million annually in funding for quality subnets and attract high-end talent, its model is suitable for driving ambitious, high-difficulty, high-investment ideas.
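As a rough sanity check, the figures quoted in this article can be combined to see what $10 million a year means in TAO terms. The sketch below uses only numbers from the text (871 TAO at approximately $300,000, 3,600 TAO emitted daily, $10 million annually). It assumes the TAO price implied by the slot cost holds all year and treats "over $10 million" as exactly $10 million, so the result is an order-of-magnitude estimate only.

```python
# Figures quoted in the article.
SLOT_COST_TAO = 871
SLOT_COST_USD = 300_000          # approximate
DAILY_TAO_EMISSION = 3_600
ANNUAL_FUNDING_USD = 10_000_000  # "over $10 million", used as a lower bound

# Assumption: the TAO price implied by the slot cost stays constant.
implied_price = SLOT_COST_USD / SLOT_COST_TAO

tao_per_year = ANNUAL_FUNDING_USD / implied_price
tao_per_day = tao_per_year / 365
share = tao_per_day / DAILY_TAO_EMISSION

print(f"implied TAO price: ${implied_price:,.2f}")
print(f"TAO needed per day: {tao_per_day:.1f}")
print(f"share of daily emission: {share:.2%}")
```

Under these assumptions, $10 million a year works out to roughly 80 TAO per day, or a little over 2% of the daily emission.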

Virtuals agent teams, by contrast, mostly focus on the application layer and consumer-facing agent products. Because agent tokens start at a low price, a team that ships a quality consumer product can use the token's market momentum to attract attention quickly and drive the project forward.

Thanks to Virtuals' advantages in distribution, the AI agent token flywheel grew faster and produced larger price increases during periods of extreme market activity (such as late 2024 to early 2025).