Source: LEGION (@legiondotcc)

Original Title: What prediction markets reveal about ICOs

Compiled and Edited by: BitpushNews

Prediction Markets in the Token World

Polymarket's token launch prediction markets have cumulatively processed nearly $250 million in trading volume, and the platform claims an astonishing prediction accuracy rate: 100% for fundraising size predictions and over 90% for valuation predictions. In-depth analysis shows that these figures are severely misleading. The true market signal lies not in "what was predicted correctly" but in "how far off the predictions were."

By analyzing data from 231 prediction markets across 29 token launch events and comparing it with actual token performance from CoinGecko, we found that these markets are not reliable prediction tools but rather contrarian indicators reflecting market sentiment.

Key Findings:

One week before the prediction markets closed, the true prediction accuracy rate was only 66.7%. At critical moments, the crowd was wrong one out of every three times, and the errors systematically showed excessive optimism.

24-Hour Volatility Issue: Using CoinGecko's hourly data, we found that Polymarket's (PM) "FDV exceeding X 24 hours after listing" markets, which bet on a token's fully diluted valuation (FDV), were in effect bets on extreme volatility.

Average 24-hour change: ±23% | Best Case: Monad +54.8% | Worst Case: Trove -38.7%.

75% of tokens saw their prices drop within the first 24 hours of listing. PM's 24-hour FDV prediction accuracy rate: only 62.5%.
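As a rough illustration of the 24-hour measurement described above, the sketch below computes the percentage change over the first 24 hours from an hourly price series and checks an FDV threshold. The function names are hypothetical, the prices are made-up inputs, and FDV is assumed to be price multiplied by total supply; the article does not state which supply figure was used.

```python
def change_24h(hourly_prices):
    """Percent change from the first traded price to the price 24 hours later.

    Assumes hourly_prices[0] is the listing price and entries are one hour
    apart, so index 24 is the 24-hour mark.
    """
    if len(hourly_prices) < 25:
        raise ValueError("need at least 25 hourly points")
    start, end = hourly_prices[0], hourly_prices[24]
    return (end - start) / start * 100.0


def fdv_exceeds(price_24h, total_supply, threshold_usd):
    """True if FDV (assumed here to be price * total supply) is above the threshold."""
    return price_24h * total_supply > threshold_usd


# Hypothetical series: flat at $1.00, then $0.80 at the 24-hour mark.
prices = [1.00] * 24 + [0.80]
print(round(change_24h(prices), 1))
print(fdv_exceeds(prices[24], 1_000_000_000, 500_000_000))
```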

The Fallacy of Accuracy

When we track how market probabilities change over time, rather than just looking at the final outcome, a different picture emerges. The promise markets appear "100% accurate" because the final data leaks as the sale progresses. Insiders and observers update prices accordingly; this is merely price discovery after the fact.

Key Finding:

Both promise markets and FDV markets approach 100% accuracy at closing because they settle after the outcome is known. Promise markets close after the sale ends; FDV markets close after the 24-hour post-listing period ends. The only meaningful predictive metric is the accuracy rate one week before closing, when genuine uncertainty still exists. The 66.7% promise accuracy rate at that point means the market was wrong one-third of the time when it mattered.
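The difference between accuracy at closing and accuracy one week earlier can be sketched as follows. This is a minimal illustration, assuming a market counts as "correct" when the side priced above 50% at the snapshot matches the final resolution; the article does not spell out its scoring rule, and the probabilities below are invented for the example.

```python
def snapshot_accuracy(markets, threshold=0.5):
    """Share of markets whose favored side at the snapshot matched the outcome.

    markets: list of (yes_probability, resolved_yes) pairs.
    threshold: probability above which the market is read as predicting YES
               (0.5 is an assumption, not the article's stated rule).
    """
    if not markets:
        raise ValueError("no markets supplied")
    correct = sum((p > threshold) == resolved for p, resolved in markets)
    return correct / len(markets)


# Hypothetical odds for the same three markets at two points in time.
week_before = [(0.80, True), (0.70, False), (0.20, False)]
at_close = [(0.99, True), (0.01, False), (0.02, False)]

print(snapshot_accuracy(week_before))  # two of three favored sides were right
print(snapshot_accuracy(at_close))     # prices have converged to the outcome
```

The same markets score very differently depending on when the odds are sampled, which is why the choice of snapshot time matters more than the final figure.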

Where User Judgment Went Wrong

We examined every market where users had over 60% confidence in an outcome that did not occur. In every case, the error ran in the same direction: excessive optimism. The crowd believed the fundraising size would be larger and the valuation higher than they turned out to be.

This systematic bias suggests that the participants in these markets are optimistic speculators who are drawn there precisely because they are bullish on the token sales.

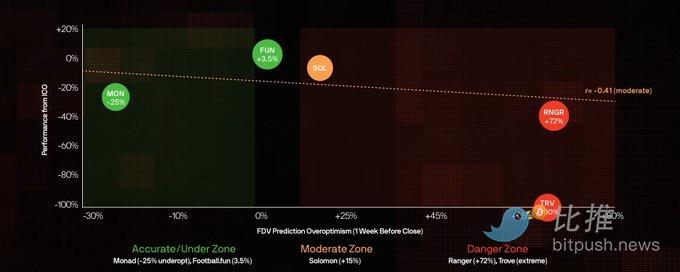

Excessive Optimism vs. Token Performance (Since ICO)

Methodology: This analysis only includes markets that had a public ICO and have issued tokens, using PM odds from one week before closing. Degree of Excessive Optimism = (PM Predicted FDV – Actual 24h FDV) / Actual 24h FDV. The Y-axis shows performance (return) since the ICO.
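The Degree of Excessive Optimism formula in the methodology can be written as a small function. The function name and the dollar figures are hypothetical; the inputs are chosen only to show a positive and a negative result, and are not the actual FDVs of any token in the dataset.

```python
def excess_optimism(predicted_fdv, actual_fdv_24h):
    """(PM predicted FDV - actual 24h FDV) / actual 24h FDV.

    Positive values mean the market expected a higher valuation than the
    token reached; negative values mean the market underestimated it.
    """
    if actual_fdv_24h <= 0:
        raise ValueError("actual FDV must be positive")
    return (predicted_fdv - actual_fdv_24h) / actual_fdv_24h


# Illustrative inputs only.
print(excess_optimism(1_500_000_000, 1_000_000_000))  # market overshot
print(excess_optimism(750_000_000, 1_000_000_000))    # market undershot
```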

The data shows a moderate negative correlation (r = -0.41) between the degree of excessive optimism and ICO returns. Monad's FDV was underestimated by the market (-25%), yet the token has still fallen 24% since its ICO. Ranger was the most over-optimistically predicted (+72%) and has fallen 32% since its ICO. Only Football.fun remains above its ICO price (+1%).
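For readers who want to reproduce a figure like r = -0.41 from their own data, a Pearson correlation can be computed as in the sketch below. The article does not say which correlation measure was used, so Pearson is an assumption here, and the sample lists are generic placeholders rather than the article's dataset.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (spread_x * spread_y)


# Placeholder data: x would be excess optimism, y the return since ICO.
optimism = [0.1, 0.3, 0.5, 0.7]
returns = [-0.1, -0.2, -0.25, -0.4]
print(round(pearson_r(optimism, returns), 2))
```

With only a handful of tokens, a coefficient of this kind is sensitive to any single data point, so it is better read as a direction than as a precise estimate.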

Token Performance Ranking

The table below uses historical PM odds from one week before closing, revealing the true prediction accuracy.

The pattern is clear: extreme excessive optimism foretells disaster, and high PM trading volume is a contrarian indicator even when its predictions are accurate.

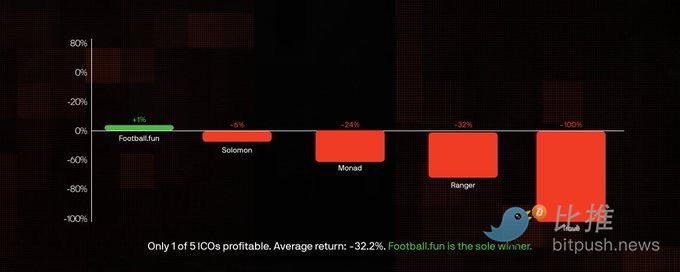

ICO FDV vs. Listing Performance

Key Finding: Among tokens with ICO data, 40% listed at a price below their ICO valuation. Average return since ICO: -32.2%. Only Football.fun is trading above its ICO price.

The pattern is stark: even tokens that listed above their ICO valuation (Monad, Solomon) eventually fell below the ICO price. Of the 5 ICO tokens in this dataset, Football.fun is the sole winner, currently trading 1% above its ICO price.

Key Conclusions

After analyzing 231 markets, $241.5 million in trading volume, and 8 listed tokens with verified 24-hour FDV data, several conclusions clearly emerge:

1. "100% accuracy" is meaningless. Markets close after the outcome is known (promise markets after the sale, FDV markets after 24 hours), so late-stage accuracy is trivially close to 100%. One week before closing, the true prediction accuracy of promise markets was only 66.7%. At critical moments, the crowd was wrong one-third of the time.

2. Systematic excessive optimism. Among the top 15 markets, 5 markets showed over 60% confidence in thresholds that were not met. Average FDV overestimation: +35%.

3. High PM trading volume is a contrarian indicator. Monad ($89M) and MegaETH ($67M) had the highest degrees of excessive optimism. The more money the crowd pours in, the more confident they become, and the more wrong they tend to be.

4. Conservative predictions = Better outcomes. Tokens where PM predictions were accurate (Monad, Football.fun) fell less. Low hype and accurate predictions appear to be bullish signals.

Trading Signals

Based on the analysis, we can extract actionable signals for evaluating future token sales. These are not precise rules, but they are patterns that appeared repeatedly in our dataset.

Bearish Signals

- PM Trading Volume > $50 Million
- FDV Excessive Optimism > 50%
- All FDV thresholds likely won't be met
- Promise Amount Excessive Optimism > 30%

Bullish Signals (Relatively)

- PM Trading Volume < $5 Million
- FDV Prediction Error within 20%
- Multiple FDV thresholds will be met
- Crowd expectations are conservative

This asymmetry is crucial. Bearish signals are strong indicators of poor outcomes, while bullish signals are relatively weaker—only suggesting that a token might "fall less" than its hyped counterparts. In a market where token prices have generally fallen from their highs, "falling less" is already the best-case scenario.
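The numeric thresholds in the two lists can be expressed as a simple screening function. This is a sketch under stated assumptions: the class and field names are hypothetical, the qualitative signals (thresholds met or not, conservative expectations) are left out because the article gives no numeric rule for them, and the optimism figures are taken as inputs since the article does not say how to estimate them before the outcome is known.

```python
from dataclasses import dataclass


@dataclass
class SaleSnapshot:
    pm_volume_usd: float       # total PM trading volume for the sale
    fdv_optimism: float        # (predicted - actual) / actual, as a fraction
    promise_optimism: float    # same ratio for the fundraising amount


def bearish_flags(s):
    """Return the bearish thresholds from the list that this sale trips."""
    flags = []
    if s.pm_volume_usd > 50_000_000:
        flags.append("PM volume > $50M")
    if s.fdv_optimism > 0.50:
        flags.append("FDV excessive optimism > 50%")
    if s.promise_optimism > 0.30:
        flags.append("promise excessive optimism > 30%")
    return flags


def bullish_flags(s):
    """Return the (weaker) bullish thresholds that this sale satisfies."""
    flags = []
    if s.pm_volume_usd < 5_000_000:
        flags.append("PM volume < $5M")
    if abs(s.fdv_optimism) <= 0.20:
        flags.append("FDV prediction error within 20%")
    return flags


# Hypothetical sales, not taken from the dataset.
hyped = SaleSnapshot(pm_volume_usd=80_000_000, fdv_optimism=0.60, promise_optimism=0.35)
quiet = SaleSnapshot(pm_volume_usd=3_000_000, fdv_optimism=0.10, promise_optimism=0.0)
print(bearish_flags(hyped), bullish_flags(hyped))
print(bearish_flags(quiet), bullish_flags(quiet))
```

Keeping the two checks as separate functions mirrors the asymmetry described above: a bearish flag is treated as a warning in its own right, while a bullish flag only suggests a smaller expected decline.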

Ultimately: Polymarket's token sale markets are essentially hype gauges. The true signal lies not in the prediction itself, but in the deviation between the predicted outcome and the actual performance. When public money floods in, betting that valuations will continue to climb, it is wise to be cautious.

History repeatedly proves: when market consensus peaks, it is often the moment when investors experience the most pain.

Data sources in the article: Polymarket CLOB API, CoinGecko, CMC (cross-verified), price data as of January 27, 2026.
