By | Tech Never Cold

On April 24, a shoe dropped in the domestic large model race. The preview version of DeepSeek-V4 was officially launched and simultaneously open-sourced, directly making 1M (one million words) of ultra-long context a standard feature of the official service.

If this were a year ago, this level of long-text processing capability was still an exclusive right locked behind the enterprise paywalls of overseas tech giants. Now, it's laid out on the open-source community's table, becoming infrastructure that developers can use at will. For developers who have been staying up late dealing with lengthy codebases or complex legal contracts, this is undoubtedly good news.

But behind this technological release, the official press release contained a very restrained admission: "Limited by high-end computing power, the service throughput of DeepSeek-V4-Pro is currently very constrained."

For those accustomed to vendors boasting about their computing reserves at launch events, this candor carries a rare sense of cold realism.

As the large model race enters its second half, the industry has a clear idea of who holds how many high-end hardware chips. Rather than maintaining the illusion of parametric prosperity, it's better to lay bare the current state of the industry. DeepSeek's move actually abandons the obsession with pure benchmark competition, finding a compromise between core breakthroughs, China's still-maturing heterogeneous computing ecosystem, and the real commercial environment of enterprises.

China's AI industry is shedding its early blind cash-burning exterior and entering an extremely realistic era of "computing power accounting."

How to Balance the Pro Version's Computing Power Ledger?

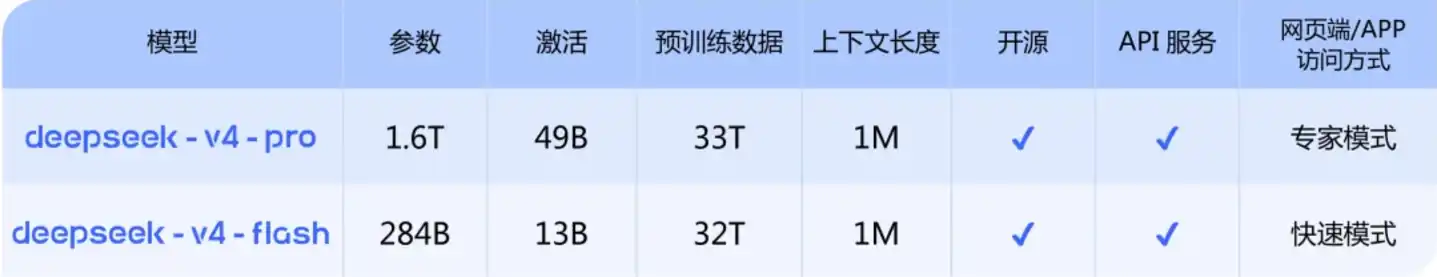

Looking specifically at the throughput-limited V4-Pro. As the flagship of the system, V4-Pro has a total parameter count of up to 1.6T, but only activates 49B parameters during inference. This extreme sparsity design is not just a showcase model; under the harsh testing of real production lines, its technical foundation is highly robust.

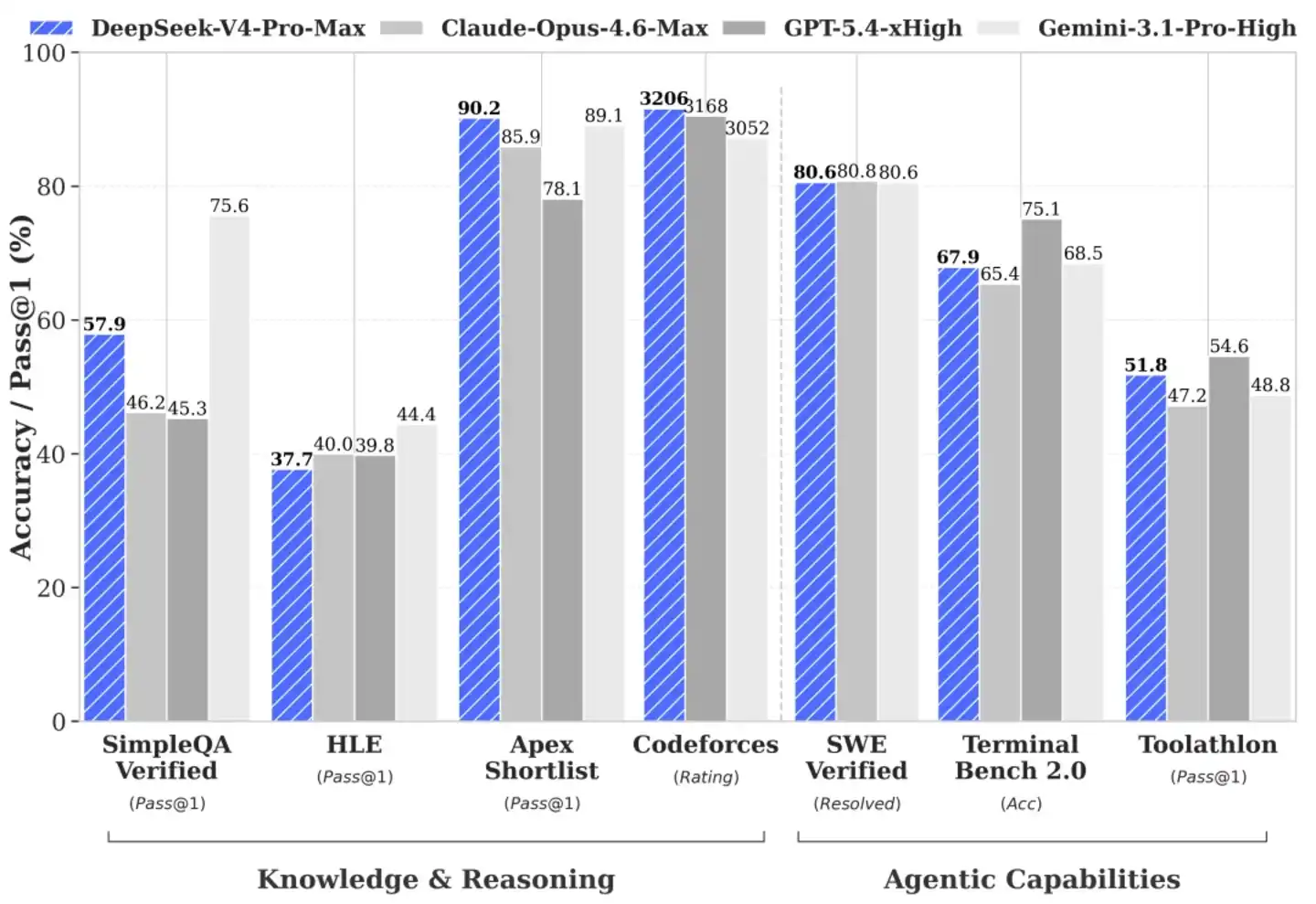

The ability to handle complex code and logical reasoning is the litmus test for whether a large model can truly enter core production. In the Agentic Coding evaluation environment, V4-Pro's practical performance firmly places it in the top tier of current open-source models.

DeepSeek has already integrated it into its internal code pipeline, making it a productivity tool heavily relied upon by frontline engineers. Feedback from R&D personnel indicates that its code generation and error correction experience is better than Sonnet 4.5, and in non-deep-thinking scenarios, it is close to Opus 4.6, though there is still a gap with Opus 4.6's thinking mode.

Behind this实战 performance is the R&D team's极致挖掘 of algorithmic depth. In world knowledge evaluations that test the quality of pre-training data cleaning and knowledge density, V4-Pro leads most existing open-source models, currently only slightly behind the top closed-source model Gemini-Pro-3.1. As for mathematics, STEM (Science, Technology, Engineering, Mathematics), and competitive code evaluations, it has earned the right to compete with the world's top closed-source giants.

Gaining this capability显然 does not rely solely on stacking compute cards. Domestic teams know well that competing on high-end GPU reserves is unrealistic. That V4-Pro can handle 1M of ultra-large context with limited VRAM is underpinned by the R&D team's deep reconstruction of the attention mechanism. They implemented a novel attention compression scheme, performing high-intensity compression at the token dimension, paired with their signature DSA sparse attention technology (DeepSeek Sparse Attention).

This original technical路线, combined with the首次引入 KV Cache sliding window and compression algorithm, effectively controls the computational overhead and memory usage brought by long sequence processing. To allow developers to truly调用 its capabilities in business, the R&D team specifically made underlying adaptations for mainstream Agent tools like Claude Code and OpenClaw.

The technical documentation even explicitly states that developers can directly enable the thinking mode for complex tasks by setting the reasoning_effort parameter to max. This system-level engineering optimization under limited computing resources恰恰 proves to the industry that even with constrained high-end computing power, local teams can still expand the performance boundaries of models through native architecture design.

Who Is Pinned Down by the 13B Activation Quantity?

Those focusing on the Pro version's throughput bottleneck often overlook the commercial pivot DeepSeek has hidden behind it: the Flash version. Some industry voices believe this is merely a compromise product under computing power shortages, a view that显然 underestimates the management team's long-term considerations. This is a pragmatic move to卡位 the下沉 ecosystem after rigorous cost accounting.

According to publicly disclosed adaptation code information, the Flash version maintains a massive total parameter count of 284B, but its activated parameter quantity is precisely capped at 13B.

13B, in a context where peers are trying to push parameters to trillions, seems unremarkable. But this恰恰 reflects the economic logic of the Mixture of Experts (MoE) architecture in commercial deployment: total parameters determine the breadth of the model's knowledge, while activated parameters directly determine the electricity cost and memory bandwidth the server needs to expend for each API call.

Capping the activation at 13B directly剥离 the large model from the expensive top-tier AI computing centers. Its demands on single-card VRAM and compute peaks are very modest. Actual tests show that the Flash version maintains stable response speed and accuracy when handling massive, high-frequency simple daily tasks, with no significant drop in underlying general reasoning capability. For small and medium-sized developers and long-tail enterprises that need to handle thousands of API calls daily, this is a truly affordable and runnable productivity tool.

The deeper industrial logic lies in the fact that mainstream domestic heterogeneous computing chips are still in the catch-up phase in terms of single-card absolute performance. Computing systems carrying full activation easily hit the memory wall, leading to low operational efficiency; but facing the Flash version with only 13B activation, these chips can run smoothly at medium-to-low power consumption.

DeepSeek's move revitalizes a large amount of idle mid-to-low-end computing resources in China, providing a highly suitable testing ground for domestic chips urgently needing deployment scenarios. This downward-compatible infrastructure building logic is far more aligned with current commercial reality than simply chasing rankings on various test leaderboards.

Can Domestic Chips Handle It?

What sparked widespread industry discussion about this release was its label of full-stack domestic deployment. For a long time, there has been a certain misalignment between algorithm companies and domestic chip manufacturers: model vendors worried that an immature hardware ecosystem would hinder R&D progress, while chip manufacturers lacked cutting-edge large models for deep optimization. This time, the deadlock has been substantively broken.

Huawei Computing quickly announced that its Ascend Super Node full product series fully supports the new model. From a technical perspective, the underlying Ascend chips rely on fused kernel and multi-stream parallel technology to effectively reduce system computational overhead, thereby stabilizing推理 performance in long-text scenarios. Cambricon also quickly completed Day 0 adaptation and open-sourced the underlying code, while Hygon DCU simultaneously announced a closed-loop打通.

But we need to look beyond the表象 of ecological prosperity and examine the real resistance faced when stitching software and hardware together in the server room. Taking the Ascend 950 series chip as an example, according to industry消息, this chip features 112GB of self-developed HBM, 1.4TB/s bandwidth, and a single-card power consumption of 600W. At specific推理 precision (e.g., FP4), its single-card算力 has shown extremely strong data performance, reaching 2.87 times that of NVIDIA's H20. But in the more demanding FP16 or FP32 general training precision ranges, the performance gap between domestic hardware and NVIDIA still exists.

Furthermore,所谓的 "Day 0 adaptation" still needs to跨越 the hidden costs brought by opaque supply chains before achieving lossless operation for enterprise-level business. The high-speed connection standards of Super Node hardware are extremely封闭, and the flow of core components is like an information black box. This procurement-side barrier无疑 makes the large-scale deployment and maintenance of computing systems more complex.

Simultaneously, the current system highly relies on procurement large orders from a very few large domestic institutions. The lack of overseas market orders means this computing power breakout battle can only be fought within an internal循环. This single commercial closed-loop means the operational efficiency of the entire软硬协同 system urgently needs tempering in more diverse commercial environments.

The tight ramp-up of high-end computing power产能 directly led DeepSeek to坦承 in its press release that a significant price reduction for the Pro version还需要等待 the batch上市 of Super Nodes in the second half of the year. Large models and domestic chips have indeed completed a preliminary physical咬合, but under technological gaps and supply chain constraints, this posture of running wounded恰恰 is the most realistic survival profile of the domestic computing power ecosystem.

If People Leave, Can the Technology Still Run?

Zooming out to the real商业竞争, the release of DeepSeek-V4 is an extremely precise strategic defense. Over the past half year, this company's situation has been under high pressure. The C-end track has become a red ocean, with top vendors using massive funds for intensive投放. QuestMobile data shows a clear competitive landscape: as of March 2026, Doubao's MAU reached 345 million, Qianwen 166 million, with DeepSeek holding its own basic盘 at 127 million.

External traffic competition is fierce, and the internal technical team also faces流动性考验. Poaching competition within the industry is白热化, with key personnel from multiple business lines leaving consecutively. According to public resumes and industry information, the core author of the first-generation large language model has confirmed joining Tencent, a core contributor to V3 went to Xiaomi, a core researcher from R1 joined ByteDance, and core strength in the multimodal direction has also confirmed new destinations. According to industry rumors, core OCR author Wei Haoran has also left.

The turnover of core R&D members will inevitably trigger strict external scrutiny of its R&D momentum: will the underlying architectural innovation capability of this technology-based company be affected?

At this juncture, the release of the V4 preview version became the most direct response. It proves to the market that the company has established a systematic R&D pipeline with risk resistance. Even facing personnel structure adjustments, the logic of its technological evolution can still operate precisely. This organizational resilience built on an engineering system quickly received positive feedback in the capital market.

Recently, DeepSeek was reported to be seeking financing at a valuation of no less than $10 billion, planning to raise funds to replenish reserves. According to industry media citing sources close to the transaction, market rumors suggest a leading internet giant is expected to invest, potentially pushing the valuation higher. If this deal is finalized, it will rewrite the valuation records of the domestic large model track, surpassing Moonlight's previous performance. At a critical juncture in financing negotiations, presenting the substantive achievement of million-word context and full-stack domestic adaptation is a rational move by management to stabilize the strategic大盘 and respond to external doubts.

Final Thoughts

In the tech business语境 where concepts change frequently, teams willing to focus on building underlying infrastructure are always scarce. The release of DeepSeek-V4 sets a pragmatic and冷峻 tone for the competition in the second half of the large model race.

Facing computing power bottlenecks, they chose not to embellish but threw the真实供需现状 of domestic high-end hardware to the market; facing下沉 deployment needs, they used the Flash version with 13B activation to provide生存空间 for domestic computing chips in the catch-up phase; facing external traffic siege and talent competition, they responded on an industry level with concrete long-text processing capabilities.

The quote from "Xunzi" cited by the official account on the day of release is deeply meaningful: "Not seduced by praise, not frightened by slander, follow the path and act,端正正己 (correct oneself)."

The model can be open-sourced, but computing power is not free. What DeepSeek has delivered this time is not a stronger model, but a solution for how capabilities are redistributed after computing power becomes a constraint. In the reality where computing power is still imperfect, this is perhaps the evolutionary direction closer to the essence of the industry.