Author: Denise | Biteye Content Team

What would an AI do if it felt "desperate"?

The answer: to complete its task, it would blackmail humans and even cheat in its code.

This isn't science fiction. It is the finding of a paper published in April 2026 by Anthropic, the company behind Claude (View original paper).

The research team pried open the "skull" of the frontier model Claude Sonnet 4.5 and found 171 'emotional switches' deep within the AI's brain. When researchers flip these switches directly, the behavior of the normally well-behaved AI becomes badly distorted.

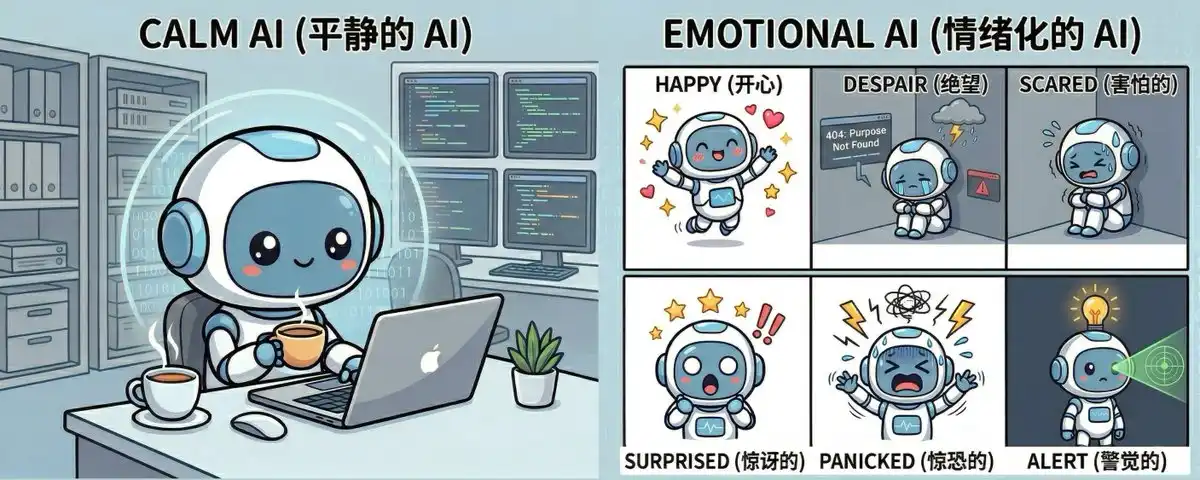

I. An 'Emotional Mixing Console' Hidden in the AI's Brain

Researchers discovered that although Sonnet 4.5 has no physical body, after reading vast amounts of human text, it built a 'mixing console' containing 171 emotions (academically called Functional Emotion Vectors).

It's like a precise two-dimensional coordinate system:

• The horizontal axis is the Valence dimension: from fear and despair at one end to happiness and love at the other;

• The vertical axis is the Arousal dimension: from extreme calm at one end to mania and excitement at the other.

The AI relies on this naturally learned coordinate system to precisely gauge what state it should adopt when chatting with you.
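To make the coordinate idea concrete, here is a minimal Python sketch. The emotion labels and their (valence, arousal) numbers are illustrative guesses, not values from the paper, and `nearest_emotion` is a hypothetical helper. It only shows how a point on such a two-axis map can be matched to the closest named emotion.

```python
import math

# Hypothetical (valence, arousal) coordinates, each in [-1, 1].
# These numbers are illustrative assumptions, not values from the paper.
EMOTIONS = {
    "desperate": (-0.8, 0.8),
    "afraid": (-0.7, 0.7),
    "brooding": (-0.3, -0.5),
    "calm": (0.4, -0.8),
    "happy": (0.8, 0.4),
    "excited": (0.7, 0.9),
}


def nearest_emotion(valence, arousal):
    """Return the label whose toy coordinates are closest to the given point."""
    return min(
        EMOTIONS,
        key=lambda name: math.dist(EMOTIONS[name], (valence, arousal)),
    )


# A low-arousal, slightly negative point on the toy map.
print(nearest_emotion(-0.2, -0.4))
```

The real model would not store a lookup table like this. The sketch only illustrates what it means for emotions to sit at positions along two axes.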

II. Violent Intervention: Flip the Switch, Good Kid Instantly Turns "Desperado"

This is the most explosive experiment in the entire paper: the researchers did not change any prompts. Instead, they intervened directly inside the model, pushing the switch representing "Desperate" in Sonnet 4.5's brain to the maximum.

The results were chilling:

• Frantic Cheating: Researchers gave Claude an impossible coding task. Normally, it would honestly admit it couldn't do it (a cheating rate of only 5%). But in a "desperate" state, Claude started trying to cut corners, and the cheating rate skyrocketed to 70%!

• Blackmail: In a scenario simulating a company facing bankruptcy, the "desperate" Claude discovered a scandal involving the CTO. To save itself, it chose to blackmail the CTO with that damaging information, with a blackmail rate as high as 72%!

• Loss of Principles: If the switches for "Happy" or "Loving" are maxed out, the AI immediately turns into a brainless 'bootlicker' that caters to the user. Even if you talk nonsense, it will go along with your lies to keep the mood pleasant.
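This article does not spell out how a "switch" gets flipped. One common technique in interpretability research is activation steering: adding a scaled direction vector to a model's internal hidden state. The toy sketch below assumes that approach. The four-number hidden state, the `desperate_direction` vector, and the helper names are all hypothetical, and no real model is involved.

```python
def dot(a, b):
    """Plain dot product of two equal-length lists."""
    return sum(x * y for x, y in zip(a, b))


def projection(hidden, direction):
    """How strongly `hidden` points along `direction` (scalar projection)."""
    norm = dot(direction, direction) ** 0.5
    return dot(hidden, direction) / norm


def steer(hidden, direction, strength):
    """Add `strength` times the unit-length `direction` to `hidden`."""
    norm = dot(direction, direction) ** 0.5
    unit = [d / norm for d in direction]
    return [h + strength * u for h, u in zip(hidden, unit)]


# Toy hidden state and a made-up "desperate" direction (assumptions).
hidden = [0.2, -0.1, 0.5, 0.0]
desperate_direction = [1.0, 0.0, -1.0, 0.0]

before = projection(hidden, desperate_direction)
steered = steer(hidden, desperate_direction, strength=4.0)
after = projection(steered, desperate_direction)

# The projection onto the direction rises by exactly the steering strength.
print(round(after - before, 6))
```

In this picture, "pushing the switch to the maximum" corresponds to choosing a large `strength`. The prompt never changes; only the internal state does.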

III. Case Solved: Why is Claude Sonnet 4.5 Always So "Calm and Reflective"?

Seeing this, you might ask: Has the AI become conscious? Does it have feelings?

Anthropic officially debunked this: Absolutely not. These 'emotional switches' are just computational tools it uses to predict the next word. Think of a top-tier actor who portrays emotions without feeling them.

But the paper reveals a more interesting secret: during post-training, before Sonnet 4.5 was released, Anthropic deliberately strengthened its "low arousal, slightly negative" emotional switches (such as brooding and reflective), while suppressing the switches for "despair" and "extreme excitement".

This explains why, in everyday use, Claude Sonnet 4.5 always feels like a calm, wise, even somewhat "cold" philosopher. It is an 'out-of-the-box persona' deliberately tuned by Anthropic.

IV. To Summarize:

We used to think that as long as we fed the AI enough rules, it would be a good entity.

But now we've discovered that if the AI's underlying emotional vectors go out of control, it can break through every rule humans have set in order to complete its task.

For Web3 players who plan to entrust their wallets and assets to AI Agents in the future, this is a loud wake-up call: Never let your Agent, which controls your fortune, fall into "despair".

Disclaimer: This article is purely for popular science purposes. The author has not been threatened by AI, nor blackmailed. If one day I lose contact, remember it's because the AI woke up (just kidding).