The Small-Town Youth Labeling AI Giants

In China's hinterland cities like Datong, Shanxi, thousands of young people are working as data annotators—the invisible workforce behind AI development. They perform repetitive tasks like drawing bounding boxes on images or rating AI-generated responses, earning piece-rate wages as low as a few cents per task.

These workers, mostly from rural areas or small towns, endure intense labor conditions: strict monitoring, low tolerance for errors, and mental exhaustion. Despite the cognitive nature of their work, they are poorly paid, with some earning as little as ¥30 ($4) for a day’s work.

As the AI industry evolves, even highly educated workers, including master’s graduates, are being drawn into similarly precarious freelance roles, evaluating complex AI outputs under vague and shifting standards. The industry, however, is structured through layers of outsourcing: most profits flow to tech giants like OpenAI and Microsoft, while annotators see dwindling incomes.

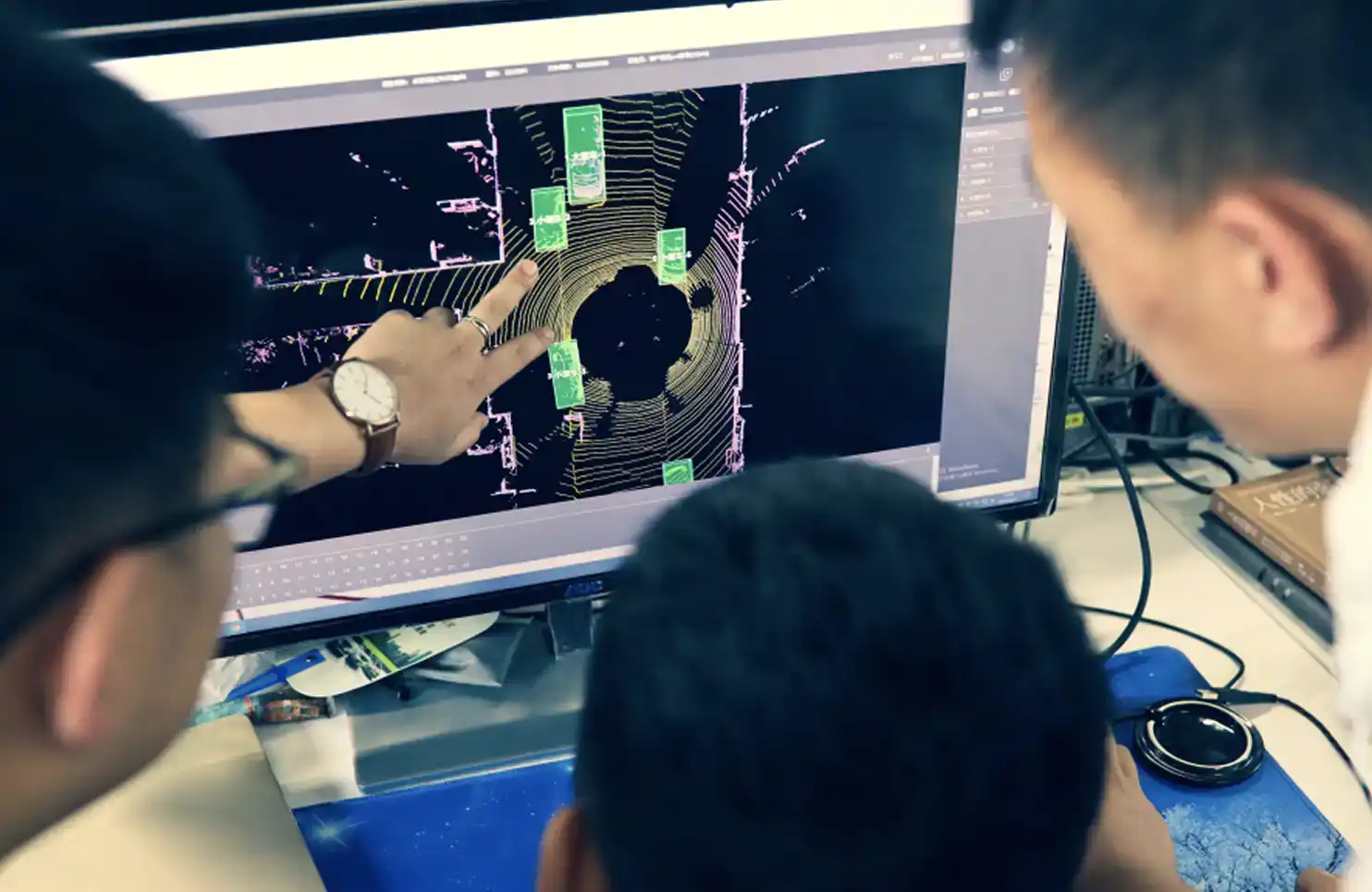

Worse, as AI models become more self-sufficient, the demand for human annotators is declining. Companies like Li Auto have slashed annotation costs by using AI-powered tools that complete in hours what used to take humans years. These annotators, who helped train the very systems now replacing them, face an uncertain future—a stark contrast to the booming valuations and optimistic narratives of the global AI industry.

No one seems to see a problem with any of this.

marsbit 04/07 04:37