Author: Curry, Deep Tide TechFlow

Last week, a secondary school in Manchester, UK, used AI to review its library.

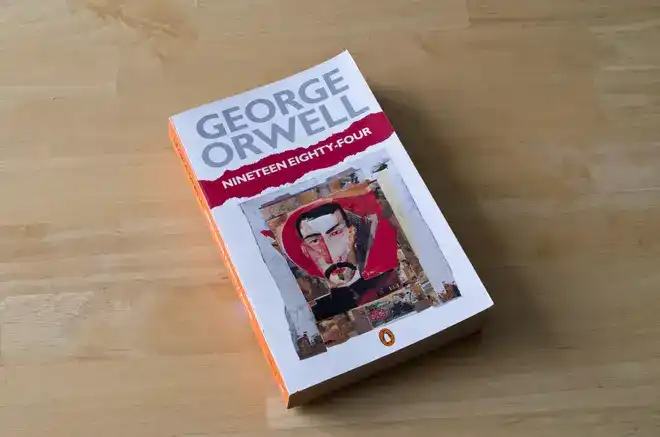

AI generated a list of 193 books to be removed, each with a reason. George Orwell's "1984" was prominently included, with the reason being "contains themes of torture, violence, and sexual coercion."

"1984" depicts a world where the government monitors everything, rewrites history, and decides what citizens can and cannot see. Now, AI has done the same for a school, and it may not even understand what it is saying.

The school librarian found it unreasonable and refused to fully implement the recommendations given by AI.

The school then launched an internal investigation against her on the grounds of "child safety," accused her of introducing inappropriate books into the library, and reported her to the local government. She took sick leave under the pressure and eventually resigned.

Absurdly, the local government's investigation concluded that she had indeed violated child safety procedures, and the complaint was upheld.

Caroline Roche, chair of the UK School Library Association, said this conclusion means she can no longer work in any school.

The person who resisted AI's judgment lost her job, while those who signed off on AI's judgment faced no consequences.

Subsequently, the school admitted in internal documents that all classifications and reasons were generated by AI, stating: "Although the classification was generated by AI, we believe it is generally accurate."

A school handed over the judgment of "what books are suitable for students" to AI. AI returned an answer it did not understand, and a human administrator stamped it without even looking closely.

After this incident was exposed by the UK free speech organization Index on Censorship, the issues raised extended far beyond a school's bookshelf:

When AI starts deciding for humans what content is appropriate and what is dangerous, who judges whether AI's judgment is correct?

Wikipedia Closes Its Doors to AI

In the same week, another institution answered this question with action.

While the school let AI decide what people can read, the world's largest online encyclopedia, Wikipedia, made the opposite choice: not letting AI decide what the encyclopedia writes.

In the same week, English Wikipedia formally passed a new policy prohibiting the use of large language models to generate or rewrite entry content. The vote was 44 in favor and 2 against.

The direct cause was an AI account called TomWikiAssist. In early March this year, this account autonomously created and edited multiple entries on Wikipedia, which were urgently addressed after being discovered by the community.

It takes AI only a few seconds to write an entry, but volunteers spend hours verifying the facts, sources, and wording of an AI-generated entry for accuracy.

The Wikipedia editing community has only so many people. If AI can mass-produce content indefinitely, human editors simply cannot review it all.

This is not even the most troublesome part. Wikipedia is one of the most important training data sources for global AI models. AI learns knowledge from Wikipedia and then uses what it has learned to write new Wikipedia entries, which are then ingested by the next generation of AI models for further training.

Once AI-generated misinformation mixes in, it is continuously amplified in this cycle, a nesting-doll style of AI poisoning:

AI pollutes training data, and training data pollutes AI.

However, Wikipedia's policy also leaves two openings for AI: editors can use AI to polish their own writing or use AI to assist with translation. But the policy specifically warns that AI may "go beyond your request, change the meaning of the text, and make it inconsistent with the cited sources."

Human writers make mistakes, and Wikipedia has relied on community collaboration to correct them for over twenty years. AI makes mistakes differently; it fabricates things that look more real than the truth and can be produced in bulk.

A school trusted AI's judgment and lost a librarian. Wikipedia chose not to trust and simply closed the door.

But what if even the creators of AI are starting to lose faith?

The Creators of AI Are Themselves Afraid

While external institutions are closing doors to AI, AI companies are also pulling back.

In the same week, OpenAI indefinitely shelved ChatGPT's "adult mode." This feature was originally planned for release last December, allowing age-verified adult users to engage in erotic conversations with ChatGPT.

CEO Sam Altman personally announced it last October, stating the goal was to "treat adult users like adults."

After three postponements, it was shelved outright.

According to the British "Financial Times," OpenAI's internal health advisory committee unanimously opposed this feature. The advisors' concerns were specific: users would develop unhealthy emotional dependencies on AI, and minors would inevitably find ways to bypass age verification.

One advisor put it more directly: without significant improvements, this thing could become a "sexy suicide coach."

The error rate of the age verification system exceeds 10%. Based on ChatGPT's weekly active user base of 800 million, 10% means tens of millions of people could be misclassified.
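The arithmetic behind that estimate can be sketched in a few lines. This is a back-of-the-envelope illustration only: it takes 10% as the floor of the quoted error rate and assumes, purely for the sake of the calculation, that the rate applies uniformly to every weekly active user, which the reporting does not claim. The function name is hypothetical.

```python
def misclassified_users(weekly_active_users: int, error_rate_percent: int) -> int:
    """Rough count of users an age check could misclassify.

    Assumption (not from the source): the error rate applies
    uniformly to the whole weekly active user base.
    """
    # Integer arithmetic keeps the result exact.
    return weekly_active_users * error_rate_percent // 100


# Figures quoted in the article: 800 million weekly users, error rate above 10%.
estimate = misclassified_users(800_000_000, 10)
print(f"{estimate:,}")  # 80,000,000
```

Under those assumptions the figure comes to 80 million, which is consistent with "tens of millions."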

Adult mode is not the only product cut this month. AI video tool Sora and ChatGPT's built-in instant checkout feature were also taken offline around the same time. Altman said the company is focusing on its core business and cutting "side tasks."

But OpenAI is simultaneously preparing for an IPO.

When a company sprinting toward a public listing cuts a cluster of potentially controversial features in quick succession, the move is more accurately called risk aversion than focus.

Five months ago, Altman was still saying to treat users like adults. Five months later, he found that his own company still hasn't figured out what AI can let users touch and what it cannot.

Even the creators of AI themselves have no answer. So who should draw this line?

The Uncatchable Speed Gap

Put these three things together, and it's easy to draw a core conclusion:

The speed at which AI produces content and the speed at which humans review content are no longer on the same scale.

The choice of that school in Manchester is easy to understand in this context. How long would it take for a librarian to read all 193 books and make a judgment? Let AI run through them: a few minutes.

The principal chose the few-minute solution. Do you really think he trusted AI's judgment? I think it's more because he didn't want to spend the time.

This is an economic problem. The cost of generation approaches zero, while the cost of review is entirely borne by humans.

Therefore, every institution affected by AI is forced to respond in the crudest way: Wikipedia imposes an outright ban, and OpenAI cuts product lines outright. None of these solutions is the result of careful consideration; they are all stopgap measures implemented before there's time to think clearly.

"Block it first and talk later" is becoming the norm.

AI capabilities iterate every few months, while discussions about what content AI can touch don't even have a decent international framework. Each institution only manages the line in its own yard. The lines contradict each other, and no one coordinates them.

AI's speed is still accelerating. The number of reviewers won't increase. The gap between the two will only widen until one day something far more serious than banning "1984" happens.

By then, drawing lines might be too late.