Written by: Eric, Foresight News

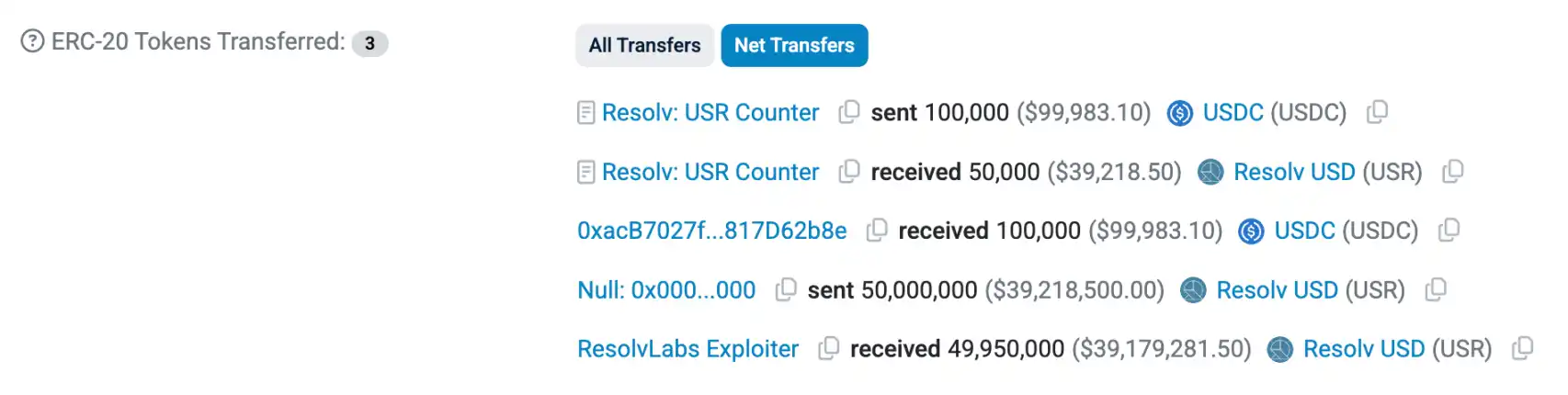

At approximately 10:21 Beijing time today, Resolv Labs, which issues the stablecoin USR using a delta-neutral strategy, was hacked. An address starting with 0x04A2 used 100,000 USDC to mint 50 million USR from the Resolv Labs protocol.

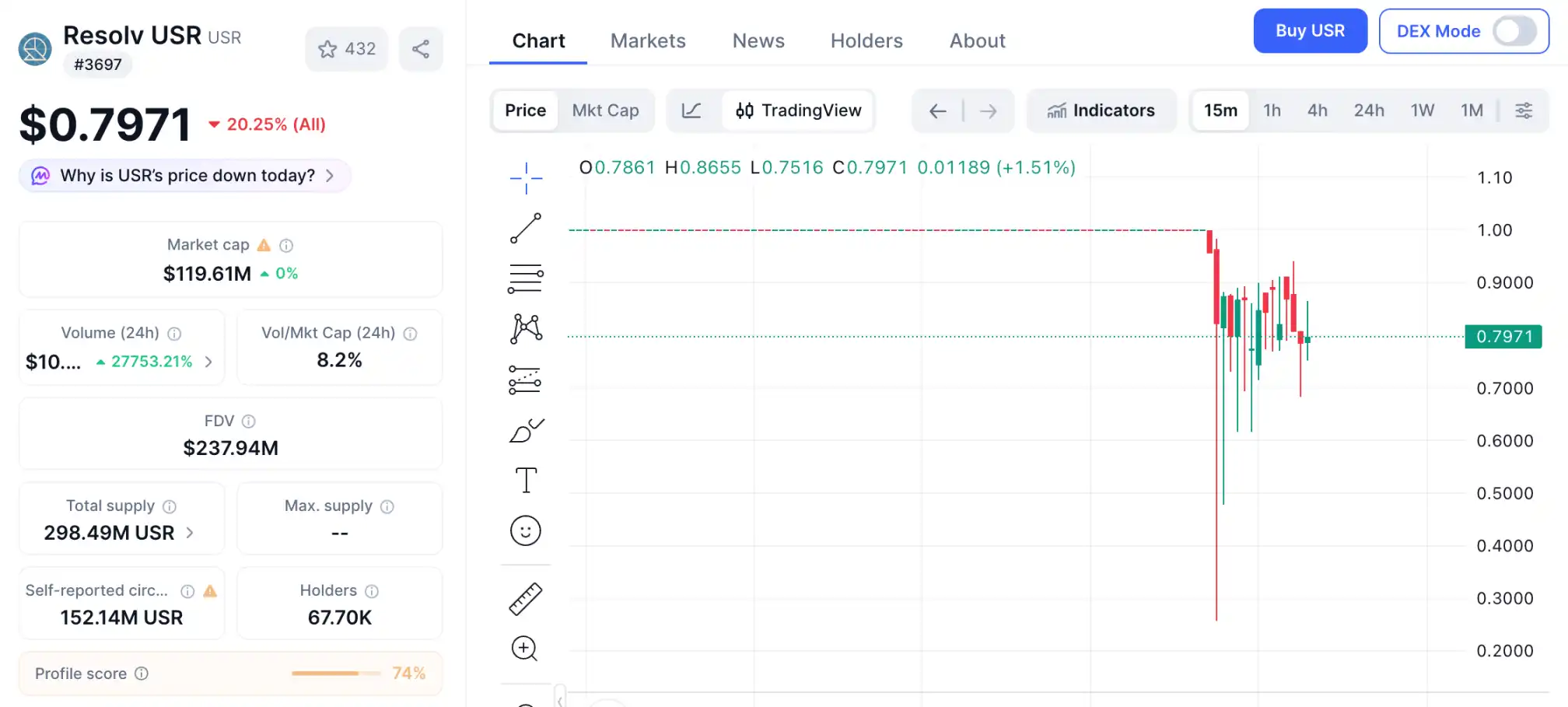

As news of the incident spread, USR plummeted to around $0.25; as of writing, it has recovered to approximately $0.80. The RESOLV token also dropped nearly 10% in the short term.

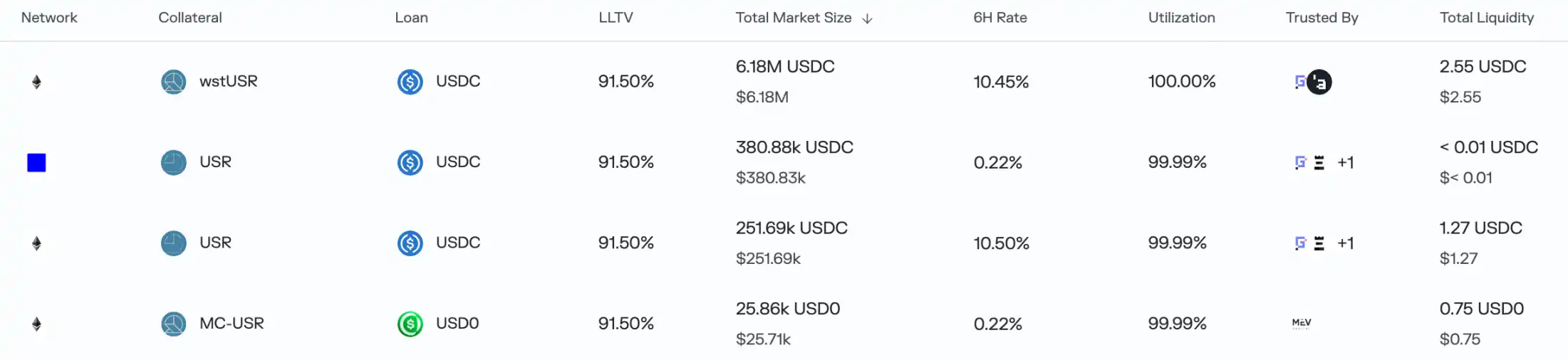

Subsequently, the hacker repeated the same method, using another 100,000 USDC to mint 30 million USR. As USR significantly depegged, arbitrage traders quickly took action. Many lending markets on Morpho that supported USR, wstUSR, and others as collateral were almost drained, and Lista DAO on BNB Chain also suspended new borrowing requests.

The impact was not limited to these lending protocols. In the Resolv Labs protocol design, users can also mint an RLP token, which has greater price volatility and higher returns but requires its holders to bear compensation liability when the protocol incurs losses. Currently, the circulating supply of RLP is nearly 30 million tokens, and the largest holder, Stream Finance, holds over 13 million RLP, a net risk exposure of approximately $17 million.

Yes, Stream Finance, which was previously hit by the xUSD incident, may be hit again.

As of writing, the hacker has converted USR into USDC and USDT and continues to buy Ethereum, having purchased over 10,000 ETH so far. With 200,000 USDC, the hacker extracted over $20 million in assets, a roughly hundredfold return, finding their "hundred-fold coin" in the middle of a bear market.

Another Exploit Due to "Lack of Rigor"

The sharp drop on October 11 last year triggered ADL (auto-deleveraging), causing collateral losses for many stablecoins issued with delta-neutral strategies. Projects that executed their strategies with altcoins suffered even heavier losses, and some simply absconded.

The attacked Resolv Labs also uses a similar mechanism to issue USR. The project announced in April 2025 that it had completed a $10 million seed round led by Cyber.Fund and Maven11, with participation from Coinbase Ventures, and launched the RESOLV token at the end of May/early June.

However, the reason for the attack on Resolv Labs was not extreme market conditions but rather a "lack of rigor" in the design of the USR minting mechanism.

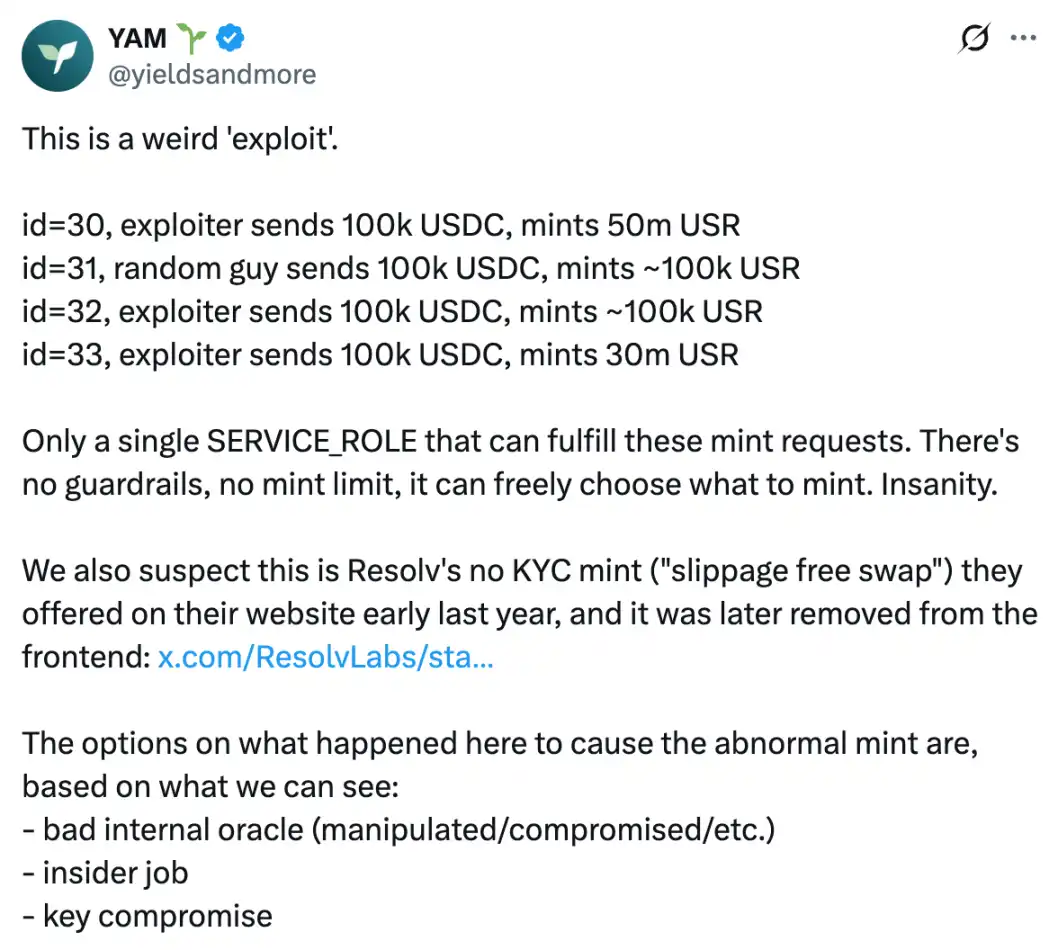

No security firm or official source has yet published an analysis of the cause of this hack. A preliminary analysis by the DeFi community YAM concluded that the hacker had likely compromised the SERVICE_ROLE, the role the protocol's backend uses to supply parameters to the minting contract.

According to Grok's analysis, a user who mints USR initiates an on-chain request by calling the contract's requestMint function, whose parameters include:

_depositTokenAddress: the address of the deposited token;

_amount: the amount deposited;

_minMintAmount: the minimum expected amount of USR to receive (slippage protection).

Subsequently, the user deposits USDC or USDT into the contract. The project's backend SERVICE_ROLE monitors the request, uses the Pyth oracle to check the value of the deposited assets, and then calls the completeMint or completeSwap function to determine the actual amount of USR to mint.

The problem is that the minting contract fully trusts the _mintAmount supplied by the SERVICE_ROLE, assuming the figure has already been verified off-chain against Pyth. It therefore sets no upper limit and performs no on-chain oracle verification, and simply executes mint(_mintAmount).
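To make that trust assumption concrete, the flow described above can be modeled in a few lines of Python. This is a simplified sketch, not Resolv's contract code: the class `NaiveMinter`, the request bookkeeping, whole-unit token amounts, and the slippage check at completion are all assumptions made for illustration. Only the names requestMint, completeMint, SERVICE_ROLE, and the parameters come from the analysis quoted above.

```python
from dataclasses import dataclass, field

SERVICE_ROLE = "service-role"  # hypothetical stand-in for the backend's privileged key


@dataclass
class MintRequest:
    user: str
    deposit_token: str
    amount: int            # deposit, in whole token units for simplicity
    min_mint_amount: int   # slippage protection requested by the user
    completed: bool = False


@dataclass
class NaiveMinter:
    """Toy model of a two-step mint that trusts the backend's number."""
    requests: list = field(default_factory=list)
    balances: dict = field(default_factory=dict)

    def request_mint(self, user, deposit_token, amount, min_mint_amount):
        # Step 1: the user registers a request and deposits funds.
        self.requests.append(
            MintRequest(user, deposit_token, amount, min_mint_amount)
        )
        return len(self.requests) - 1

    def complete_mint(self, caller, request_id, mint_amount):
        # Step 2: only the backend role may finalize the request.
        if caller != SERVICE_ROLE:
            raise PermissionError("only SERVICE_ROLE may complete a mint")
        req = self.requests[request_id]
        if req.completed:
            raise ValueError("request already completed")
        # Assumed slippage check: protects the user, not the protocol.
        if mint_amount < req.min_mint_amount:
            raise ValueError("mint amount below the user's minimum")
        # No cap and no oracle check: mint_amount is never compared
        # with req.amount, so whoever holds SERVICE_ROLE picks the number.
        req.completed = True
        self.balances[req.user] = self.balances.get(req.user, 0) + mint_amount
        return mint_amount


minter = NaiveMinter()
rid = minter.request_mint("attacker", "USDC", 100_000, 0)
minter.complete_mint(SERVICE_ROLE, rid, 50_000_000)
print(minter.balances["attacker"] // 100_000)  # USR minted per USDC deposited: 500
```

In this model the only protection is the role check on the caller, so the security of the entire supply reduces to the security of one key.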

Based on this, YAM suspects that the hacker gained control of the SERVICE_ROLE, which should have been held by the project team (possibly through an internal oracle failure, insider theft, or a key compromise), and simply set _mintAmount to 50 million during minting, which is how 100,000 USDC produced 50 million USR.

In conclusion, Grok's assessment is that when designing the protocol, Resolv did not consider that the address (or contract) handling user minting requests could itself be compromised. By the time a minting request reached the final USR minting contract, no maximum minting amount applied and the contract performed no secondary verification against an on-chain oracle; it simply trusted every parameter provided by the SERVICE_ROLE.
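The two missing safeguards named here, a maximum minting amount and a secondary check against an oracle price, can be sketched as a single validation step. The following Python is an illustration under stated assumptions rather than a proposed fix for Resolv's contract: the function `validate_mint`, the `MintRejected` exception, the 1% tolerance, and the cap value in the usage lines are all hypothetical, and the oracle price is passed in as a plain number instead of being read from Pyth.

```python
from fractions import Fraction


class MintRejected(Exception):
    """Raised when a backend-supplied mint amount fails validation."""


def validate_mint(deposit_amount, oracle_price, mint_amount, *,
                  max_mint_per_request, tolerance=Fraction(1, 100)):
    """Return mint_amount if it passes both checks, otherwise raise.

    deposit_amount:       units of the deposited token
    oracle_price:         USD price of one deposited unit (hypothetical input;
                          a real contract would read this from an on-chain feed)
    mint_amount:          USR amount proposed by the backend
    max_mint_per_request: hard upper limit, independent of any price
    tolerance:            allowed excess over the oracle-implied value
    """
    # Check 1: a hard cap bounds the damage even if the oracle is wrong.
    if mint_amount > max_mint_per_request:
        raise MintRejected("mint amount exceeds the per-request cap")
    # Check 2: the proposed amount must be consistent with the deposit's value.
    fair_value = deposit_amount * oracle_price
    if mint_amount > fair_value * (1 + tolerance):
        raise MintRejected("mint amount exceeds the oracle-implied value")
    return mint_amount


# An honest request: 100,000 USDC at $1 mints 100,000 USR.
ok = validate_mint(100_000, Fraction(1), 100_000,
                   max_mint_per_request=1_000_000)

# The amount seen in the attack fails even when the cap is set very high.
try:
    validate_mint(100_000, Fraction(1), 50_000_000,
                  max_mint_per_request=100_000_000)
    attack_blocked = False
except MintRejected:
    attack_blocked = True
```

The two checks fail independently: the cap limits the loss if the price source is manipulated, while the price check catches an inflated amount that still fits under the cap.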

Inadequate Prevention

In addition to speculating on the cause of the hack, YAM also pointed out the project's lack of preparedness in crisis response.

YAM stated on X that Resolv Labs paused the protocol only 3 hours after the hacker's first attack was completed, with about 1 hour of that delay spent collecting the 4 signatures required for the multisig transaction. YAM believes an emergency pause should require only one signature, and that the pause authority should be granted to as many team members as possible, or to trusted external operators, so that more eyes are on on-chain anomalies, a quick pause becomes more likely, and different time zones are better covered.

Although requiring only a single signature to pause the protocol is a somewhat radical suggestion, requiring multiple signatures across different time zones can indeed cause significant delays in an emergency. Bringing in trusted third parties who continuously monitor on-chain behavior, or using monitoring tools that hold emergency pause permissions, are lessons to take from this incident.
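One way to soften the risk of a single-signature pause is to make the permission asymmetric: any one guardian can pause, but resuming still requires the full multisig. The sketch below illustrates that idea in Python. It is an assumption of this article's framing, not something YAM or Resolv Labs described; the class `PausableProtocol`, the guardian and signer names, and the choice to keep a threshold of 4 for unpausing are hypothetical.

```python
class PausableProtocol:
    """Toy model: single-signer pause, multisig unpause."""

    def __init__(self, guardians, signers, unpause_threshold):
        self.guardians = set(guardians)   # each may pause alone
        self.signers = set(signers)       # multisig members
        self.unpause_threshold = unpause_threshold
        self.paused = False

    def pause(self, caller):
        # One signature is enough to stop the protocol.
        if caller not in self.guardians:
            raise PermissionError("caller is not a guardian")
        self.paused = True

    def unpause(self, approvals):
        # Resuming is the sensitive action, so it keeps the multisig.
        valid = set(approvals) & self.signers
        if len(valid) < self.unpause_threshold:
            raise PermissionError("not enough valid signatures to unpause")
        self.paused = False

    def mint(self, amount):
        if self.paused:
            raise RuntimeError("protocol is paused")
        return amount


protocol = PausableProtocol(
    guardians={"team-asia", "team-europe", "monitor-bot"},
    signers={"s1", "s2", "s3", "s4", "s5"},
    unpause_threshold=4,
)
protocol.pause("monitor-bot")                 # a monitoring tool reacts alone
paused_after_alert = protocol.paused          # True
protocol.unpause({"s1", "s2", "s3", "s4"})    # resuming needs 4 signatures
```

Under this arrangement a compromised or mistaken guardian can at worst halt the protocol temporarily, which is a much smaller loss than the one an hour of signature collection allowed here.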

Attacks on DeFi protocols have long since moved beyond contract vulnerabilities. The Resolv Labs incident is a warning to project teams: protocol security should assume that no single link can be trusted, and every link that supplies parameters must be verified at least a second time, even when it is the project's own operational backend.