Just before Google I/O, Google held an Android 17 pre-event at 1 AM on May 13. Unexpectedly, it used the event to unveil an entirely new product line: Android PCs. Unlike Chromebooks, Android PCs are positioned as higher-end machines with productivity as their core selling point. Google is no longer content with the entry-level market; it wants a larger share of the PC space than netbook-class devices can give it.

The AI PC concept has been popular in recent years. PC chip makers and device manufacturers alike stress the AI features of their products and tirelessly promote the changes AI brings to how PCs are used. The sudden arrival of Android PCs, however, shows the world a different kind of AI PC: one that no longer depends on a traditional desktop operating system, and in which cloud AI is not an accessory but the core from which all related functions derive.

(Image Source: Google)

If Android PCs succeed, cloud PCs may well turn out to be the definitive answer for the AI era.

Current AI PCs Are Still Not "AI" Enough

Today's AI PCs are closer to traditional PCs wrapped in an AI shell. On the chip side, both Intel and AMD have added dedicated AI computing units to their PC processors to strengthen on-device AI. On the system and ecosystem side, device manufacturers are building their own AI applications into the system, including their own PC manager utilities and agents, and integrating third-party large models.

However, these AI PCs are essentially still traditional Windows PCs, with AI serving merely as an add-on feature. Moreover, the vast majority of AI scenarios implemented on AI PCs rely on cloud AI, including document summarization and editing, image generation, and various "lobster" tools (OpenClaw-style agents).

Chip vendors keep promoting their chips' local AI capabilities and highlight scenarios in which open-source models are deployed across CPU, GPU, and NPU through heterogeneous computing. In reality, consumer-grade PC chips provide only limited AI computing power. After all, not every consumer has an RTX 5080 graphics card or a machine with 32GB of memory or more.

(Image Source: JD.com)

As a result, an ordinary consumer-grade PC can hardly run local models with large parameter counts and cannot really handle even moderately complex AI tasks.
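To make this memory ceiling concrete, here is a rough back-of-the-envelope sketch. It assumes that model weights dominate memory use and ignores the KV cache, activations, and the operating system's own footprint, so real requirements would be higher. The function names and the example model sizes (7B and 70B parameters) are illustrative assumptions, not figures from any vendor.

```python
def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate memory needed for model weights alone, in GB (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


def fits_in_memory(params_billions: float, bits_per_param: int, memory_gb: float) -> bool:
    """True if the weights alone fit within the given memory budget."""
    return weight_memory_gb(params_billions, bits_per_param) <= memory_gb


if __name__ == "__main__":
    # Hypothetical model sizes and precisions, checked against 16 GB and 32 GB machines.
    for params, bits in [(7, 4), (7, 16), (70, 4), (70, 16)]:
        need = weight_memory_gb(params, bits)
        print(
            f"{params}B at {bits}-bit: ~{need:.1f} GB | "
            f"fits in 16 GB: {fits_in_memory(params, bits, 16)} | "
            f"fits in 32 GB: {fits_in_memory(params, bits, 32)}"
        )
```

Under these assumptions, a 70B-parameter model quantized to 4 bits needs roughly 35 GB for its weights alone, which already exceeds a 32GB machine before any working memory is counted.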

Recently, OpenClaw went viral, causing the Mac mini to sell out and its price to rise. Yet the vast majority of people "raise lobsters," as running OpenClaw agents is nicknamed, on cloud models: deployment tutorials routinely discuss which AI provider's tokens are cheapest and how to reduce token consumption.

(Image Source: Gitbook)

This leads to a new question: If AI PCs still rely on cloud AI to implement AI scenarios, then what is the value of the AI PC hardware itself?

After all, a traditional PC that carries no AI-chip premium can in theory become an AI PC too, as long as it can go online and reach cloud AI.

We could go even further and drastically cut the PC's hardware configuration. As long as a device has a screen, a keyboard, and an internet connection, it can serve as a cloud AI computer. The rapid development and spread of AI seems to give the not-so-new "cloud PC" a chance to take off.

Cloud PC + AI: The Future of AI PCs?

Cloud PCs are not an unfamiliar concept. The cloud gaming craze of a few years ago essentially ran on cloud PCs. At the time, the broad rollout of 5G, with its low latency and high throughput, was seen as the cure-all that would bring cloud PCs to the mainstream.

But reality was harsh, and cloud gaming never really took off. Google's cloud gaming service Stadia launched in 2019 and was shut down in early 2023, a little over three years later. According to overseas media reviews and user feedback, Stadia needed extremely good network quality, such as wired high-speed home broadband, to deliver an experience close to that of a local gaming platform. Even Wi-Fi degraded the experience significantly, let alone 5G, which is a more volatile mobile network.

(Image Source: Google)

Cloud gaming is highly sensitive to network latency, whereas online AI is far more tolerant. Ordinary users have grown used to AI taking time to "think" when it answers questions or handles tasks; we do not demand the instant feedback that games require.

Ultimately, the bottleneck for AI response speed is not bandwidth but computing power. Even if you install a local large model, it still requires sufficient inference time to generate answers.
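The difference in latency tolerance can be illustrated with simple arithmetic. The sketch below uses hypothetical numbers (a 100 ms network round trip, a 500-token answer generated at 50 tokens per second, and a game streamed at 60 fps); none of them come from measurements or from any vendor, and the function names are made up for illustration.

```python
def ai_response_seconds(rtt_s: float, tokens: int, tokens_per_s: float) -> float:
    """Total wait for one AI answer: one network round trip plus generation time."""
    return rtt_s + tokens / tokens_per_s


def network_share(rtt_s: float, tokens: int, tokens_per_s: float) -> float:
    """Fraction of the total wait that is spent on the network."""
    return rtt_s / ai_response_seconds(rtt_s, tokens, tokens_per_s)


def frames_of_delay(rtt_s: float, fps: int) -> float:
    """Number of frame intervals that one round trip spans in a streamed game."""
    return rtt_s * fps


if __name__ == "__main__":
    rtt = 0.1  # assumed 100 ms round trip
    total = ai_response_seconds(rtt, tokens=500, tokens_per_s=50)
    share = network_share(rtt, tokens=500, tokens_per_s=50)
    print(f"AI answer: {total:.1f} s total, network share {share:.1%}")
    print(f"60 fps game: round trip spans {frames_of_delay(rtt, 60):.0f} frames")
```

With these assumed numbers, the network accounts for about 1% of the wait for an AI answer, while the same round trip spans about six frames of a 60 fps game. Streaming the answer token by token would shorten the perceived wait further, which this sketch does not model.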

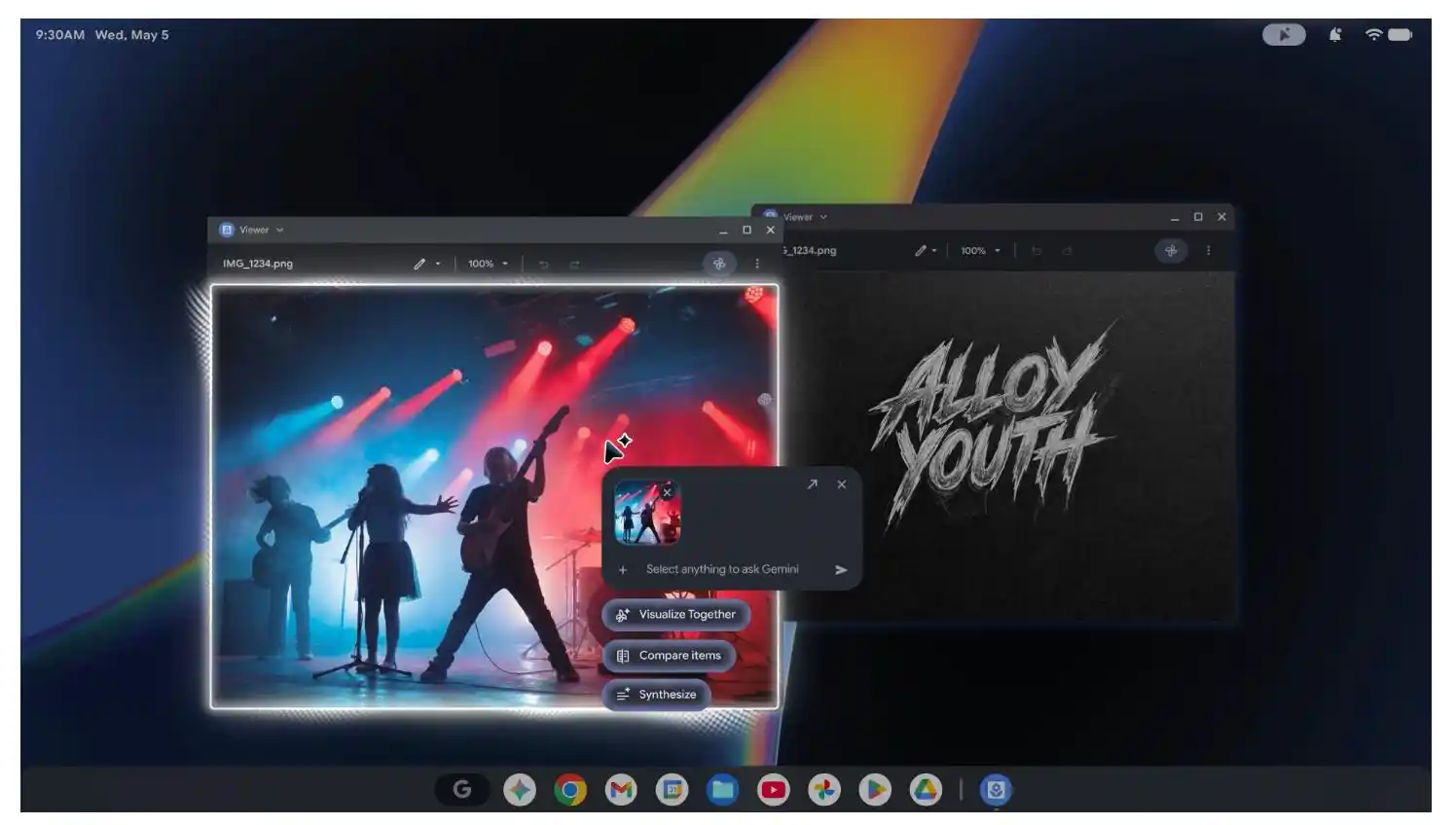

We therefore believe the cloud PC form is a natural fit for AI PCs, and Google's Android PCs approach the AI PC in a way that differs from traditional PCs. On Android PCs, AI is not an accessory but a core function. Google said that most AI tools today are standalone apps that require users to copy data into the AI interface before they can use it, whereas Android PCs integrate AI throughout the system. Most visibly, the AI follows the mouse cursor wherever it moves, capturing and directly processing or acting on text, images, code, and other information near the cursor.

(Image Source: Google)

Furthermore, Android PCs can be implemented in many ways. Google mainly provides the product concept and the form it should take; the hardware itself still has to be built by partner manufacturers. Google's announced partners fall into two categories: chip makers and device makers. The former include Intel, Qualcomm, and MediaTek; the latter include HP, Lenovo, Acer, Asus, and Dell.

The list of chip brands makes it clear that Google does not care which architecture an Android PC uses: x86 is fine, and so is ARM. After all, AI scenarios on Android PCs currently still rely heavily on cloud-based Gemini, which makes local computing power relatively less important.

Additionally, internet and cloud service providers have been offering cloud PC services and are evolving toward AI PCs.

Take Alibaba as an example. In 2024, it launched the Wuying AI Cloud PC, which offers not only powerful cloud hardware configurations but also strong support for large models. In 2026, the Wuying AI Cloud PC was upgraded further with comprehensive support for "raising lobsters" with OpenClaw, including one-click deployment, direct access to Qianwen, and integration with communication tools such as DingTalk, Feishu, and WeChat.

(Image Source: Alibaba Cloud)

Another point worth noting: AI giants are locked in a frenzied arms race to build AI infrastructure, which has made them the "culprit" behind rising storage prices, and there is no sign of those prices falling in the short term. This will further hold back configuration upgrades for consumer-grade PCs. If AI PCs continue to follow the traditional PC iteration model, progress will be difficult. Rather than stacking up local AI hardware that is costly and has an obvious computing power ceiling, it may be better simply to hand AI tasks over to the cloud.

The Times Are Changing. How Should PC Manufacturers Respond?

The shift of PCs toward AI is an irreversible long-term trend. Players across the PC industry chain are racking their brains over how to get on board the AI PC ship. Their roles differ, and so do their approaches to advancing AI PCs.

First are the chip manufacturers. They continue to emphasize the AI computing power of consumer-grade chips and to build AI scenarios around it. More importantly, Intel and AMD keep pushing into the server market, continuously competing for orders from AI giants.

After all, as AI companies build AI infrastructure, they naturally need to purchase large quantities of AI chips. Apart from Nvidia, the main players able to fulfill these orders are traditional CPU brands like Intel and AMD.

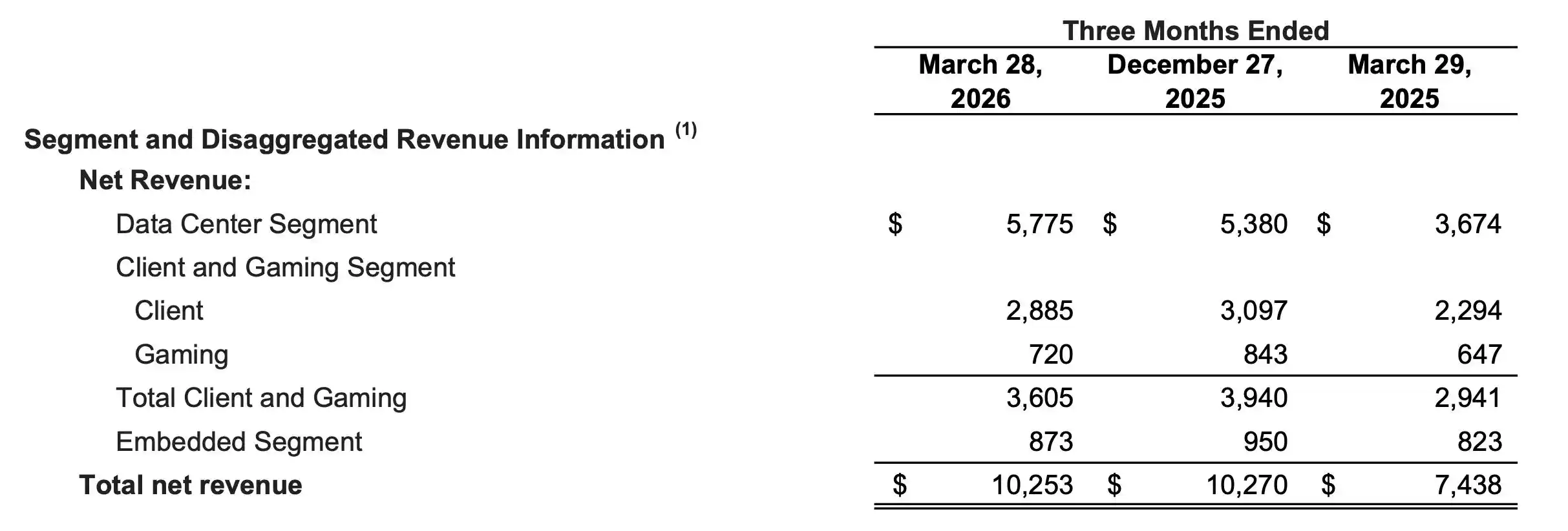

AMD's latest financial report shows that its Data Center segment contributed $5.8 billion in revenue in Q1, more than half of the company's total. Moreover, neither Intel nor AMD can meet the order volume with its current capacity; beyond TSMC, AMD is already seeking help from other wafer foundries such as Samsung.

(Image Source: AMD)

Next are the device manufacturers, which include traditional PC brands such as Lenovo, Asus, and HP as well as emerging brands such as Huawei, Xiaomi, and Honor. For now, their approach to AI PCs is still mainly based on the traditional combination of Intel or AMD chips and Windows, with AI capabilities added through software such as PC manager utilities and agents.

Smartphone brands have an additional advantage in the AI PC field: they can connect their PCs with the other devices in their own hardware ecosystems, such as phones, car infotainment systems, wearables, and smart home devices, so that AI capabilities flow seamlessly across devices. Xiaomi's Super XiaoAI, for example, combines an agent, an AI assistant, a voice assistant, and other capabilities, and can appear on various devices within the Xiaomi ecosystem.

(Image Source: Xiaomi)

Apple is a special case in the AI PC field. Apple Intelligence was announced early, but its rollout has been slow, which leaves the Mac's AI transition in an awkward position. Apple's advantage in the PC field remains its unparalleled hardware-software integration and its absolute control over M-series chips and macOS.

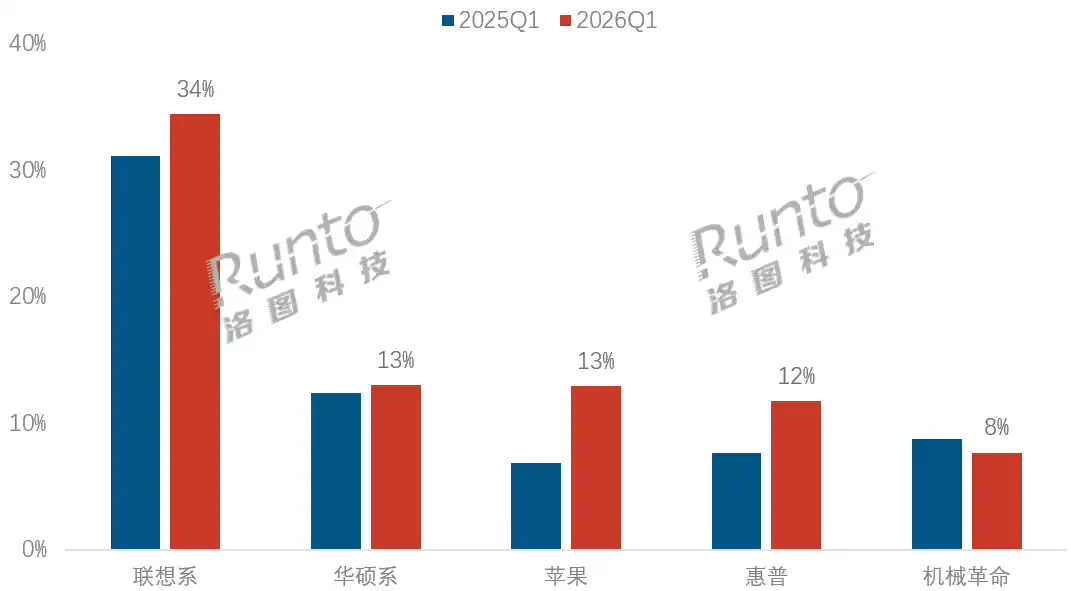

Recently, Apple increased MacBook Neo production from 5 million to 10 million units and is willing to keep producing the A18 Pro chip at high cost. Thanks to this laptop's success, Apple became the second-largest PC brand by market share in China, behind only Lenovo, according to Q1 online notebook market data released by Luotu.

(Image Source: Luotu)

Against the backdrop of soaring storage prices, the low-cost MacBook Neo has shown surprising appeal. Frankly, the MacBook Neo was not viewed favorably at first; it looked more like a way to use up A18 Pro inventory. Its success nonetheless shows that Apple can build a winning budget PC. Once Apple has a solid user base, MacBooks powered by Apple Intelligence have the potential to come from behind in the AI PC era.

Finally, Microsoft, as the dominant player in PC systems, cannot be ignored. Microsoft's actions regarding AI PCs mainly focus on three areas: defining AI PC hardware standards, system reconstruction, and hardware architecture diversification.

Microsoft requires AI PCs to have over 40 TOPS of computing power and at least 16GB of memory. It introduced the Windows Copilot Runtime into the underlying layer of Windows, integrating multiple small models. At the same time, Windows provides AI features such as Live Captions and Recall.

(Image Source: Microsoft)

One crucial point is that Copilot draws on GPT's large model technology and Bing's web search capability, and is deeply integrated into Windows, the Edge browser, and Office 365, making full use of Microsoft's ecosystem advantage. All of this still relies primarily on cloud AI.

In Conclusion

The emergence of Android PCs challenges the long-solidified traditional PC form. It represents another product philosophy for PC development in the AI era: light on local hardware, heavy on the cloud.

Today, with storage costs remaining high and local consumer-grade computing power hitting bottlenecks, a solution that breaks through hardware barriers and hands core productivity directly to large models in the cloud undoubtedly leaves more room for imagination.

Of course, the revolution in PC form sparked by AI has only just begun. Microsoft and traditional PC manufacturers will not simply stand by. They still emphasize the importance of on-device computing power, but they have also fully embraced cloud AI. Apple will likewise keep taking market share with its integrated hardware-software ecosystem and its market penetration strategy. The coming PC market will not be a simple battle of hardware specs but a comprehensive competition spanning cloud leverage, AI rebuilt into the underlying layer of the system, and cross-device ecosystems.

Whether Android PCs become the definitive answer for this era still depends on how they stand up to tests such as network stability, data privacy, and the migration of user habits. But one thing is certain: AI has fundamentally reshaped the definition of a PC.

The PC of the future might truly no longer need an expensive graphics card or large amounts of memory. A screen and a network connection to the cloud could be enough to unleash productivity. A whole new era of AI cloud PCs is approaching.

This article is from "Lei Technology".