Author: Eli5DeFi

Compiled by: AididiaoJP, Foresight News

Seen in the rearview mirror, the Bitcoin mining industry of 2024 looked like a band of survivalists trudging through hard times, absorbing the Bitcoin halving while enduring the lingering chill of the "crypto winter".

But by early 2026, that impression was completely overturned. The industry had undergone a fundamental transformation, evolving from speculative outposts of computing power into the cornerstone of a new era—the "AI factory".

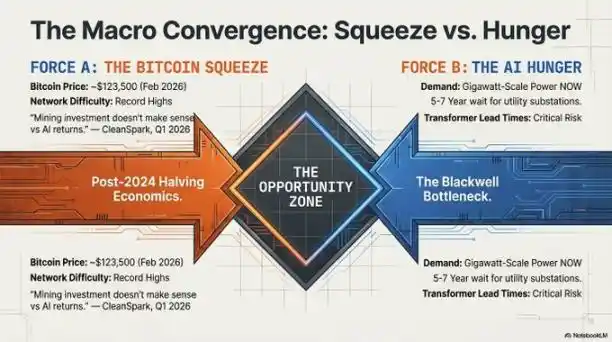

Driving this change was a brutal battle for resources.

As global demand for AI computing power reached a fever pitch, the bottleneck had shifted from "not enough chips" to "not enough power". High-performance computing requires something that cannot be downloaded or manufactured quickly: land that is already connected to the electrical grid.

Bitcoin miners, once mocked as volatile and unreliable, turned the land and power they had secured around 2021 into the scarce, near-monopoly infrastructure of 2026, reinventing themselves as indispensable "landlords" in the AI gold rush.

The Great Compute Flip

In the landscape of 2026, electricity became the new scarce resource.

The primary "physical moat" protecting the industry's winners was the utility power interconnection point. With new substation construction now taking 5 to 7 years, old mining farms that were already energized and connected to the grid became the only sites that could meet the immediate demands of cutting-edge AI model training.

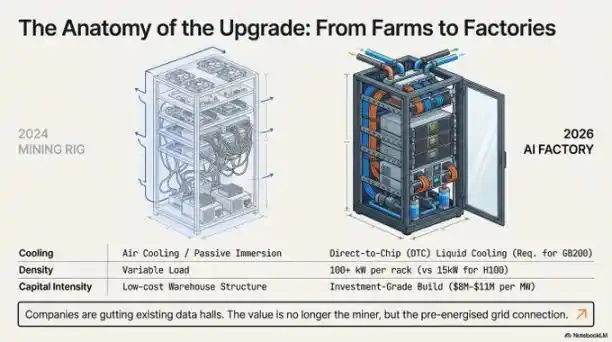

However, the barrier to entry had shifted from simple "land grabs" to capital-intensive fortresses. Due to high-density liquid cooling requirements and a global transformer shortage, the cost of building an AI-ready facility had skyrocketed to approximately $8 to $11 million per megawatt. This high capital expenditure threshold drew a clear line between the "execution leaders" and other players:

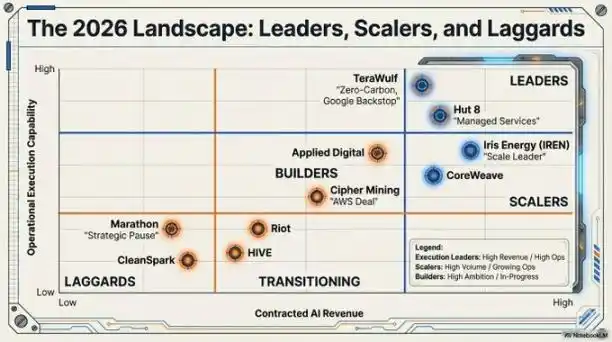

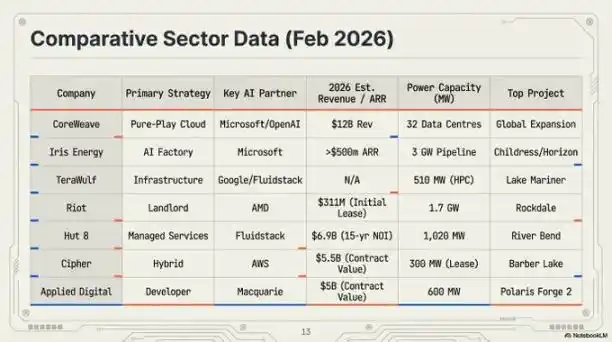

- Iris Energy (IREN): The industry scale leader, valued at $14 billion. It possesses a 2,910-megawatt power and land portfolio, underpinning its expanding "AI factory" footprint.

- Riot Platforms: Holds 1.7 gigawatts of approved power capacity. Riot transformed its "Texas Triangle" assets into strategic hosting centers, recently signing a landmark lease with AMD.

- TeraWulf and Hut 8: Recognized execution leaders. These companies secured contracts worth $6.7 billion and $7 billion respectively, successfully converting mining farms into high-value, investment-grade AI assets.
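To give a sense of scale for the per-megawatt cost cited above, here is a minimal sketch that turns the $8 to $11 million range into a rough build-out budget. The function name and the 100 MW site are hypothetical illustrations, not figures for any company in the list.

```python
def capex_range_usd(capacity_mw, low_per_mw=8_000_000, high_per_mw=11_000_000):
    """Return (low, high) build-cost estimates in USD for an AI-ready site.

    The per-MW defaults come from the article's $8M to $11M range; everything
    else (name, signature, linear scaling) is an illustrative assumption.
    """
    if capacity_mw < 0:
        raise ValueError("capacity_mw must be non-negative")
    return capacity_mw * low_per_mw, capacity_mw * high_per_mw


# Hypothetical 100 MW site, used only to show the order of magnitude.
low, high = capex_range_usd(100)
print(f"100 MW site: ${low / 1e9:.1f}B to ${high / 1e9:.1f}B")
```

Under these assumptions, even a mid-sized 100 MW conversion lands at roughly $0.8 to $1.1 billion, which is why the cost threshold separates well-capitalized operators from the rest.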

"Hyperscaler Guarantees" — The End of Crypto Volatility?

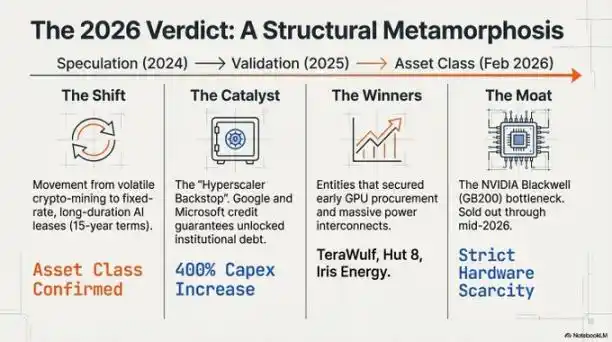

Perhaps the most profound change was the structural re-rating of the business model, enabled by "credit enhancement".

In the past, top financial institutions were reluctant to lend to miners due to Bitcoin's high price volatility. This changed with the advent of the "hyperscaler guarantee".

Through "take-or-pay agreements", industry giants like Google and Microsoft now provide financial guarantees for the rents paid by these former miners.

As a result, a lease once priced on a miner's credit risk was now priced on a tech giant's. The industry gained access to the bond market at favorable interest rates of around 7.125%. Companies like Cipher Mining and Hut 8 could obtain non-dilutive project financing covering up to 85% of project costs from J.P. Morgan and Goldman Sachs. This "landlord" model, with its "take-or-pay" clauses, attracted massive capital from institutions like Vanguard, Oaktree, and Citadel.
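The financing terms above can be made concrete with a short sketch. It assumes, purely for illustration, that the 7.125% rate applies to the full 85% debt portion and that the debt is interest-only; the article does not say the two figures describe the same instrument, and the $1 billion project size is hypothetical.

```python
def financing_split(project_cost_usd, debt_share=0.85, coupon=0.07125):
    """Split a project's cost into debt and equity and estimate yearly interest.

    debt_share and coupon default to the article's 85% and 7.125% figures.
    Applying the coupon to all of the debt, with no amortization, is a
    simplifying assumption made for this sketch.
    """
    if not 0.0 <= debt_share <= 1.0:
        raise ValueError("debt_share must be between 0 and 1")
    debt = project_cost_usd * debt_share
    equity = project_cost_usd - debt
    annual_interest = debt * coupon  # interest-only estimate (assumption)
    return debt, equity, annual_interest


# Hypothetical $1 billion project; not a figure from the article.
debt, equity, interest = financing_split(1_000_000_000)
print(f"debt ${debt / 1e6:,.1f}M, equity ${equity / 1e6:,.1f}M, "
      f"interest ${interest / 1e6:,.1f}M per year")
```

On these assumptions, a $1 billion build needs about $150 million of equity and carries roughly $60.6 million a year in interest, a cost that long-term guaranteed rent is meant to cover.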

Blackwell Reality and Subsea Data Centers

The technical requirements of AI in 2026 rendered the old air-cooled mining rig designs not only obsolete but entirely unusable for deploying high-density AI clusters.

The NVIDIA Blackwell GB200 NVL72 platform, consuming 120 kilowatts per rack, forced the industry to adopt direct-to-chip liquid cooling.

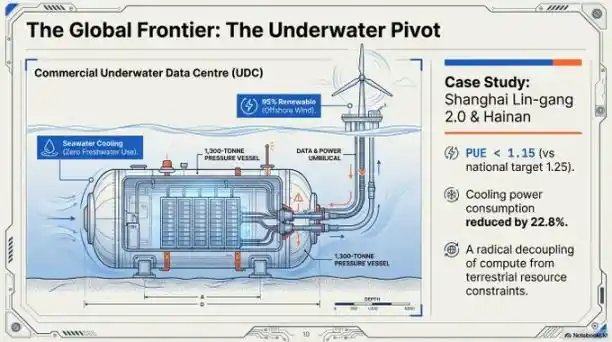

To address both cooling and land scarcity simultaneously, the industry turned its gaze to the "blue economy". Shanghai's Lingang 2.0 project is a prime example of a commercial-scale subsea data center.

- Technical Specs: The facility achieves a Power Usage Effectiveness (PUE) of 1.15, comfortably better than the national target of 1.25 (a lower PUE means less energy spent on overhead such as cooling). It utilizes seawater as the primary cooling source, reducing total power consumption by 40-60%.

- Precision Deployment: Using GPS-guided vessels like the "Sanhang Fengfan", these 1,300-ton subsea modules can be submerged with zero-error precision, powered by offshore wind farms, completely freeing them from terrestrial resource constraints.
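PUE is the ratio of total facility power to the power drawn by the IT equipment itself, so the rack and PUE figures above can be combined into a rough facility-level estimate. The sketch below is illustrative only: the function name and the 100-rack cluster are hypothetical, and it treats the 120 kW per rack as pure IT load.

```python
def facility_power_kw(racks, it_kw_per_rack=120.0, pue=1.15):
    """Estimate IT load, total facility draw, and overhead in kW.

    Uses the standard definition PUE = total facility power / IT power.
    The defaults come from the article (120 kW per GB200 NVL72 rack, PUE 1.15);
    treating the full 120 kW as IT load is an assumption of this sketch.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    it_load = racks * it_kw_per_rack
    total = it_load * pue
    overhead = total - it_load  # cooling, power conversion, and other non-IT draw
    return it_load, total, overhead


# Hypothetical 100-rack cluster, compared at the two PUE values in the text.
for pue in (1.15, 1.25):
    it_load, total, overhead = facility_power_kw(100, pue=pue)
    print(f"PUE {pue}: IT {it_load:,.0f} kW, total {total:,.0f} kW, "
          f"overhead {overhead:,.0f} kW")
```

For 100 racks the IT load is 12 MW; at a PUE of 1.15 the site draws about 13.8 MW, versus 15 MW at 1.25, so the non-IT overhead falls from roughly 3 MW to 1.8 MW.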

The "Blackwell Moat" and Hardware Holders

By 2026, a "supply chain wall" had solidified the industry's hierarchy. With NVIDIA's Blackwell architecture chips sold out until mid-2026, the orders a company had placed back in 2024 became its competitive moat.

Without chips, power is useless; without power, chips are just bricks. The winners were those who locked down both early.

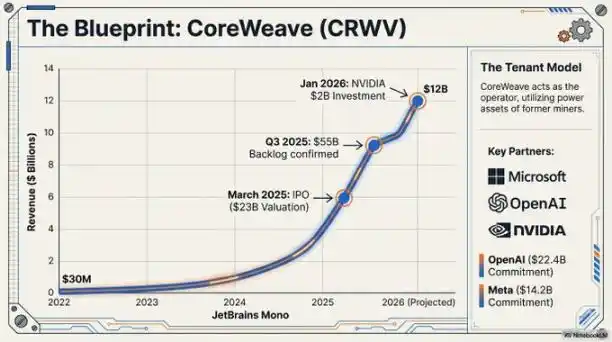

CoreWeave's preparation for a public listing with a $35 billion valuation is backed by its massive hardware orders, including a $22.4 billion commitment from OpenAI. Latecomers who failed to secure chips during the 2024 window were essentially locked out of the primary market for AI infrastructure.

"A backlog of 3.6 million units for the Blackwell architecture effectively locks latecomers out of the primary AI infrastructure market, a situation unlikely to change for the foreseeable future." — Jensen Huang, CEO of NVIDIA, 2026.

Beyond the Miner

The transition from "Bitcoin factory" to "AI digital infrastructure hub" marks the maturation of a once-marginal industry and its integration as a key component of global industrial policy.

The isolated, pure-play mining model is nearing its end. It is being replaced by industrial-scale energy transformation companies that treat computing, whether Bitcoin's SHA-256 hashing or large language model training, as an interchangeable output of their core power assets, allocated on demand.

As these gigawatt-scale "AI factories" become permanent fixtures of the power grid, we are compelled to ask:

Can a pure mining model without AI diversification survive given the vast disparity in revenue per megawatt? More importantly, how will global grids adapt as these facilities shift from flexible power consumers (mines) to steady, around-the-clock AI "foundation loads"? At that point, data centers will no longer be mere power customers but designers and architects of the grid itself.

The machines have changed, but this high-stakes game of energy arbitrage has only just begun.