Source: Kyle Samani

Compiled by: Odaily Planet Daily (@OdailyChina); Translator: Azuma (@azuma_eth)

Editor's Note: Kyle Samani, the former Multicoin Capital co-founder and the man who knows best how to promote Solana, made a very public exit from the crypto world not long ago. Now he is back!

Last night, Kyle Samani posted a long thread on his personal X account. In it, he once again showed off his highly persuasive "shilling" (not derogatory here), taking "efficiency", a weak point in the decentralization narrative, as his angle of attack. He explained in detail how PropAMM, which the Solana ecosystem is currently promoting, will catch up with or even surpass traditional centralized models in efficiency, and argued that PropAMM is one of the most important innovations in market microstructure in recent years, or even decades.

- Related articles: "The Man Who Knows Best How to Shill SOL Exits the Crypto World"; "Is There More to Kyle Samani's Exit?"

Below is the original content by Kyle Samani, compiled by Odaily Planet Daily.

PropAMM is one of the most important innovations in market microstructure in recent years, and perhaps even one of the most significant in decades.

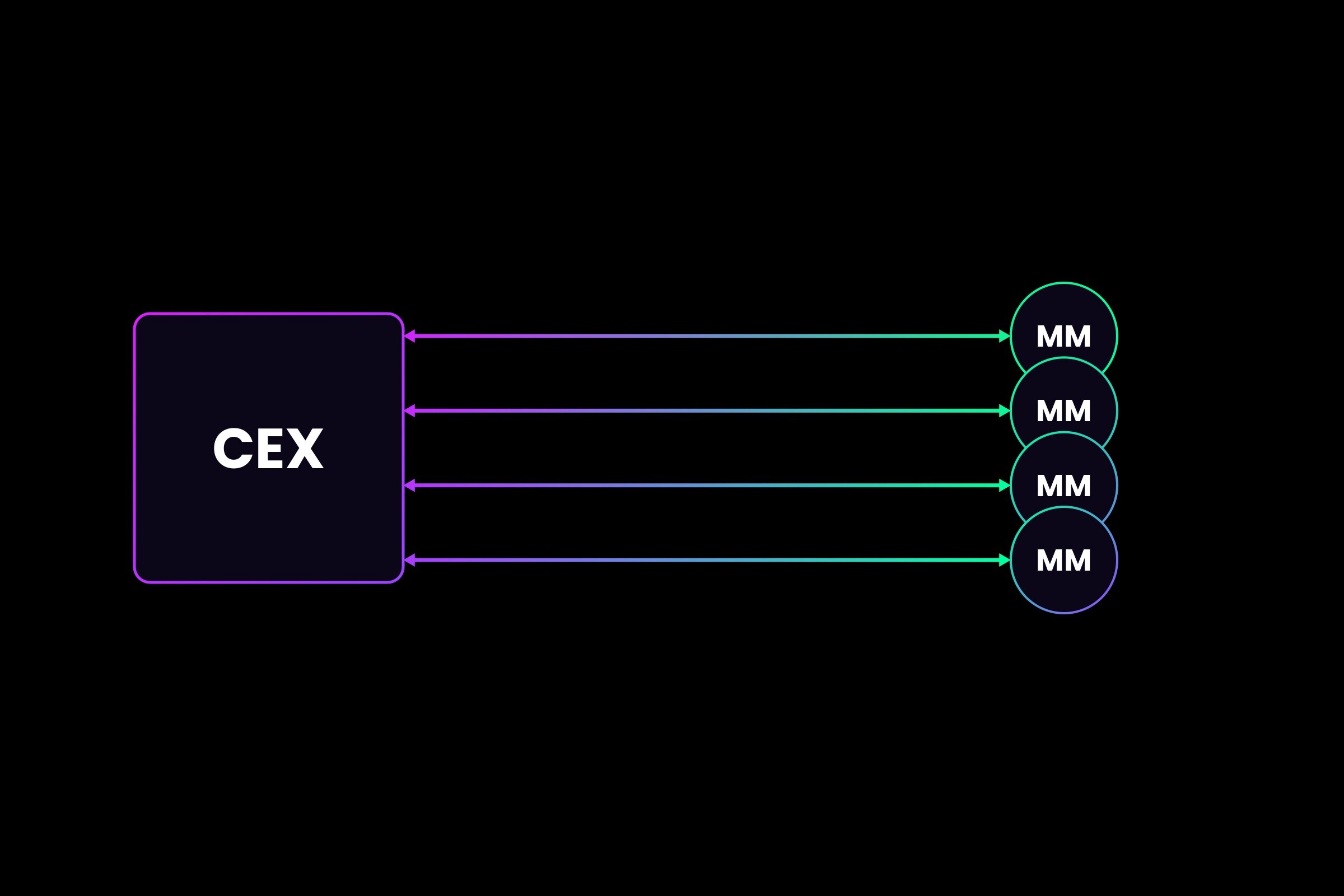

To help everyone understand this conclusion, let's first look at how market makers (MMs) quote prices on traditional centralized exchanges (CEXs).

Market makers typically co-locate physically with the exchange. Each market maker runs its algorithm on its own server, which connects to the server running the exchange's system over a network cable of uniform length (e.g., 50 meters), so that no participant gains a latency advantage from cable length.

A massive stream of data flows constantly back and forth between market makers and the exchange. Whenever a market maker sends an order to the exchange, whether a limit order, a cancellation, or a market order, the exchange must broadcast that information to all other market makers. Those market makers then resend their own orders based on the new information, and the cycle repeats indefinitely.

Here is a simple diagram.

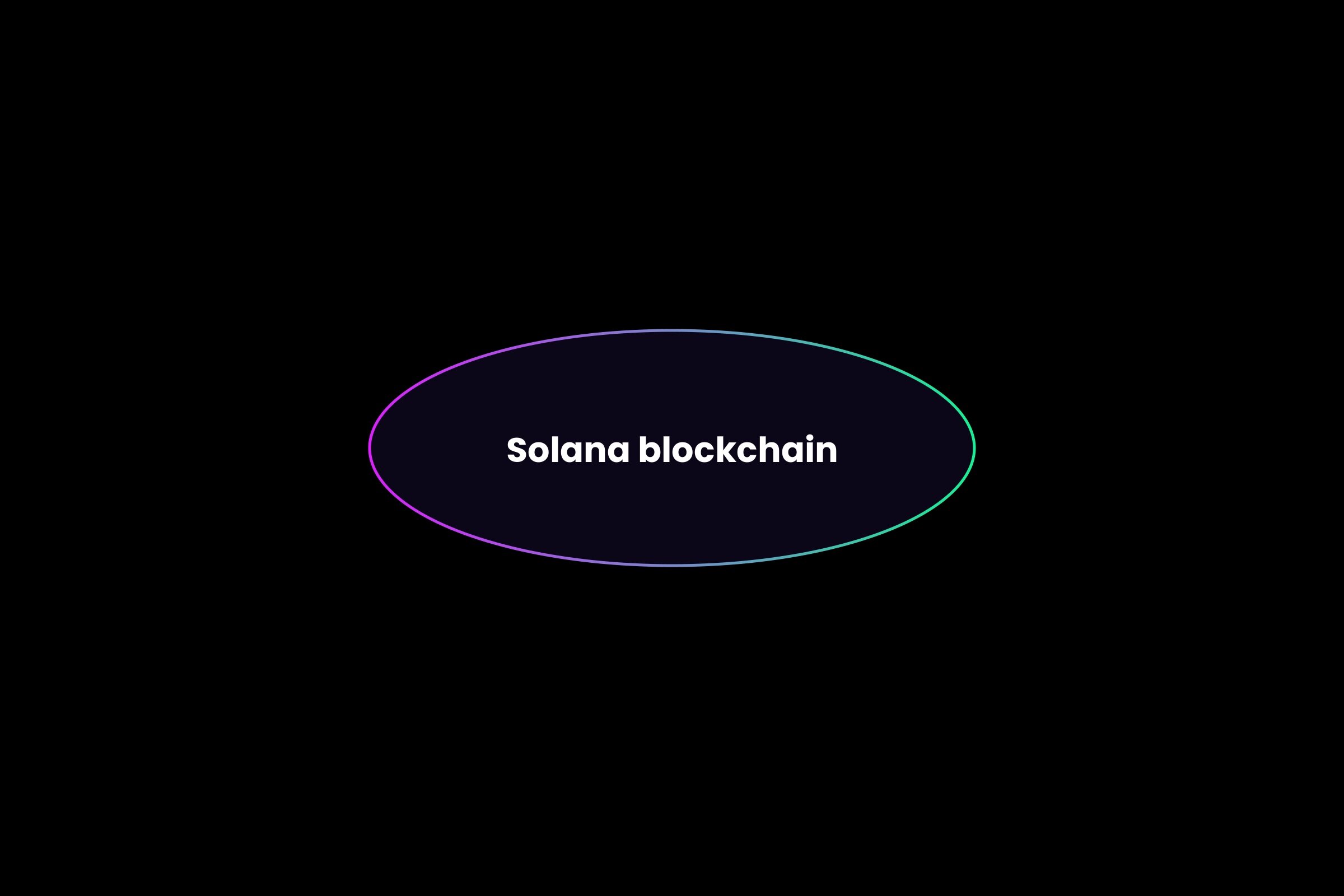
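To get a feel for how much traffic this loop generates, here is a minimal sketch of a toy model. It assumes (this is not from the original thread) that in each round every market maker sends exactly one order message and the exchange relays it to every other market maker. The function names are hypothetical and chosen only for illustration.

```python
def messages_per_round(num_makers: int) -> int:
    """Messages exchanged in one quoting round of a toy co-located exchange.

    Assumption (not from the article): every market maker sends exactly one
    order message per round, and the exchange relays it to every other maker.
    """
    if num_makers < 1:
        raise ValueError("need at least one market maker")
    inbound = num_makers                       # maker -> exchange
    outbound = num_makers * (num_makers - 1)   # exchange -> every other maker
    return inbound + outbound


def total_messages(num_makers: int, rounds: int) -> int:
    """Total traffic over `rounds` repetitions of the send/relay/requote cycle."""
    return rounds * messages_per_round(num_makers)


if __name__ == "__main__":
    for makers in (2, 10, 50):
        print(f"{makers} makers, 1000 rounds: {total_messages(makers, 1000)} messages")
```

Under these assumptions, per-round traffic grows with the square of the number of market makers, which is why the message count climbs so quickly as more participants join.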

Now, let's look at how propAMM works on the Solana mainnet.

The beauty of propAMM on Solana is that the blockchain itself directly "hosts" the market maker algorithms. This means the system no longer needs to send billions of messages back and forth between market makers and the exchange; the market-making algorithms will run directly on the same physical machine as the exchange.

The new diagram is as follows. (That's right, only the Solana blockchain is needed!)

There has long been a common view in the cryptocurrency industry that decentralized systems must be slower (have higher latency) than centralized systems because they require communication between global nodes.

But if you think about it differently, on-chain hosted algorithms could actually have lower latency than centralized exchanges in traditional finance.

Why is that? Because the only latency involved in a propAMM price update is that of electrons moving within the same physical piece of silicon. For example, if the last market order changes the SOL-USD price, that information is immediately visible to all propAMMs and is used to price the next market order. Everything happens within the same piece of silicon; there is no longer any need for two-way communication between servers.
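The idea can be illustrated with a minimal sketch. This is a toy model, not Solana code: `SharedState`, `make_quoter`, and `market_buy` are hypothetical names, and the quoting rule (a fixed spread around the last traded price) is an assumption made purely for illustration. The point is only that the quoting functions and the matching step read and write the same in-process state, so no messages are exchanged between them.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class SharedState:
    """Stands in for on-chain state that every hosted algorithm can read."""
    last_price: float


# A hosted quoting algorithm: reads shared state, returns (bid, ask).
Quoter = Callable[[SharedState], Tuple[float, float]]


def make_quoter(half_spread_bps: float) -> Quoter:
    """Hypothetical quoter that centers its quote on the last traded price."""
    def quote(state: SharedState) -> Tuple[float, float]:
        half = state.last_price * half_spread_bps / 10_000
        return state.last_price - half, state.last_price + half
    return quote


def market_buy(state: SharedState, quoters: List[Quoter]) -> float:
    """Fill a buy at the lowest ask, then record the fill in shared state."""
    fill_price = min(q(state)[1] for q in quoters)
    state.last_price = fill_price  # visible to every quoter on the next call
    return fill_price


if __name__ == "__main__":
    state = SharedState(last_price=100.0)
    quoters = [make_quoter(10), make_quoter(5)]
    first = market_buy(state, quoters)
    second = market_buy(state, quoters)  # priced off the updated state
    print(first, second)
```

The second buy is quoted against the state left behind by the first one, without any quoter having to receive a broadcast and send back a new order.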

It's worth noting that propAMM does require frequent oracle updates, but this is not a problem and does not change the overall fact I described above.

The most critical point remains that when the exchange (in this case, the Solana blockchain) directly hosts the propAMM algorithms, the market makers' prices update in real time within the same physical piece of silicon.

propAMM has already become the dominant mechanism for SOL-USDC spot quotes on Solana, with narrower spreads than all major CEXs. I expect this market structure to become the dominant model for on-chain trading this year, including spot, perpetual contracts (perps), and even prediction markets.

The biggest challenge for propAMM is that there is currently no way to ensure that the taker always gets the best execution, because:

- No propAMM algorithm is public (which is reasonable, as traditional market-making algorithms are also proprietary);

- When routing trades between multiple propAMMs, the result is non-deterministic.
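A minimal sketch of the routing problem, under stated assumptions: each venue is modeled as an opaque function that the router can call but not inspect, and the fee numbers are made up. `venue_a`, `venue_b`, and `route` are hypothetical names, not the API of any real aggregator. The sketch also shows one plausible reason the outcome is hard to guarantee: if the reference price moves between quoting and execution, the realized fill differs from the quote the router relied on.

```python
from typing import Callable, Dict, Tuple

# Hypothetical interface: a venue maps (amount_in, reference_price) to amount_out.
# The router can call it but cannot inspect how it computes the result.
Venue = Callable[[float, float], float]


def venue_a(amount_in: float, price: float) -> float:
    """Flat 2 bps fee regardless of size (made-up numbers)."""
    return amount_in * price * (1 - 0.0002)


def venue_b(amount_in: float, price: float) -> float:
    """1 bps fee plus a size penalty (made-up numbers)."""
    return amount_in * price * (1 - 0.0001 - 0.00001 * amount_in)


def route(venues: Dict[str, Venue], amount_in: float, price: float) -> Tuple[str, float]:
    """Sample a quote from every venue and pick the largest output."""
    quotes = {name: fn(amount_in, price) for name, fn in venues.items()}
    best = max(quotes, key=lambda name: quotes[name])
    return best, quotes[best]


if __name__ == "__main__":
    venues = {"venue_a": venue_a, "venue_b": venue_b}
    name, quoted = route(venues, amount_in=5.0, price=100.0)
    # If the reference price moves before execution, the fill differs from the quote.
    realized = venues[name](5.0, 99.9)
    print(name, quoted, realized)
```

In this toy setup the best venue also depends on trade size (the small order goes to `venue_b`, a large one to `venue_a`), so a router can only discover the best route by sampling, not by reasoning about the algorithms themselves.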

However, this problem can be solved. I expect the relevant aggregator teams, such as Jupiter and dFlow for spot and Phoenix for contracts, to launch solutions this year.

Today's propAMMs are still under-optimized and constrained by various limitations of the Solana blockchain itself. This year, Solana will roll out a series of major upgrades that will significantly enhance propAMM performance, including:

- Higher CU (Compute Unit) limits per transaction and larger transaction sizes;

- Higher CU limits per block;

- Alpenglow: Reducing slot time from 400ms to 100–150ms;

- DoubleZero: Reducing global network latency;

- Application-controlled execution;

- Multiple concurrent leaders.

If propAMMs on the Solana mainnet can already offer narrower quotes than all CEXs without these upgrades, imagine how powerful propAMMs will become as the upgrades are gradually implemented.