Editor's Note: On May 14, Cerebras officially listed on NASDAQ under the ticker symbol CBRS. Its shares closed their first trading day about 68% above the IPO price, making it one of the most notable AI hardware IPOs so far in 2026.

This article was written by Steve Vassallo, an early investor in Cerebras, who recounts his nearly nineteen-year relationship with Andrew Feldman, from SeaMicro to Cerebras. On the surface, it is a venture capital story that runs from term sheet to IPO. At its core, it chronicles how a frontier hardware company bet on a fundamental rebuilding of AI computing architecture at a time when the consensus was skeptical. From wafer-scale chips and memory bandwidth bottlenecks to a string of engineering problems in power delivery, cooling, and electrical continuity, Cerebras faced not a single technical challenge but the reinvention of an entire modern computing system.

The most noteworthy part of the story is not that Cerebras ultimately built a wafer-scale chip 58 times larger than conventional chips, but that from the outset the company chose a direction that ran against industry inertia: when GPUs had become the default answer for AI training, it set out to redefine what a computer designed for AI should actually be. Behind this lies not only technical judgment but also patient capital and, crucially, a long-term, non-transactional relationship of trust between investors and the founding team.

For today's AI hardware race, Cerebras is a reminder that the compute revolution isn't only about stacking more GPUs; it may also come from rethinking the computing architecture itself.

The following is the original text:

Friday, April 1, 2016. I emailed Andrew Feldman to tell him I would climb over the fence into his backyard and hand-deliver our term sheet for Cerebras.

It was April Fools' Day, but I wasn't joking.

Strictly speaking, this wasn't standard operating procedure for a venture capital firm. But by then I had known Andrew for nine years and had been discussing his next company with him for nearly two. I couldn't afford to lose this deal over a sentence in the term sheet that was still being revised on a Saturday afternoon.

I first met Andrew in October 2007. He and Gary Lauterbach had just founded SeaMicro. I didn't invest in that round, but we hit it off, and I especially admired their first-principles approach to problem-solving. I've followed them ever since.

Truly valuable relationships take time to mature, and so do truly valuable companies. From the outside, Cerebras looks like a ten-year-old company about to go public. To me, it is the culmination of a nineteen-year relationship that has finally reached the moment of ringing the bell.

Deep Relationships and Unreasonable Ambition

When AMD acquired SeaMicro in 2012, I had a hunch that Andrew wouldn't stay long at a big company. He has a fierce aversion to losing and a rebellious streak. By early 2014 he was already looking for a way out, and we began meeting frequently to talk about what might come next.

At the time, two ideas were far from consensus: first, that AI would actually become useful; second, that GPUs were not the best computing architecture for AI.

On the first point, many smart people I knew were skeptical too. After AlexNet appeared in 2012, parts of the research community had begun getting near-magical results with convolutional neural networks. But in the broader software industry, AI still sat somewhere between a marketing buzzword and a research project.

The second point, the hardware question, had barely been raised at all. GPUs had become the default for training neural networks largely because researchers stumbled on the fact that they were "less bad" than CPUs. Building a new computing system specifically for AI workloads meant challenging the architecture that researchers around the world were then using.

But Andrew, Gary, and their co-founders Sean, Michael, and JP saw a different path. Each brought decades of experience in chips and systems: Gary's background traced back to pioneering work on dataflow and out-of-order execution in the 1980s; Sean focused on advanced server architecture; Michael handled software and compilers; and JP was deeply versed in hardware engineering. They were an exceptionally rare group: outstanding individually, and far stronger together. They could imagine an entirely new kind of computer.

They believed that if AI truly lived up to its potential, the resulting market would be larger than all previous computing markets combined.

They also saw the GPU for what it was: a chip originally designed for graphics, pressed into service as an AI training tool on a new battlefield. It was indeed better at parallel processing than a CPU, but no one designing from scratch for AI workloads would arrive at an architecture like the GPU's. What truly limited neural networks was not raw compute but memory bandwidth. That meant the chip they set out to build would not primarily optimize matrix multiplication within isolated cores; it would optimize how efficiently data moves across the entire computational fabric.

Internally, investing in Cerebras was far from a consensus decision. Several of my partners had watched the previous wave of semiconductor investments end mostly in losses, and they were candid about their concerns. But in the end, we came together as a team. That weekend in April 2016, we told Andrew plainly that we wanted to be the first to give him a term sheet.

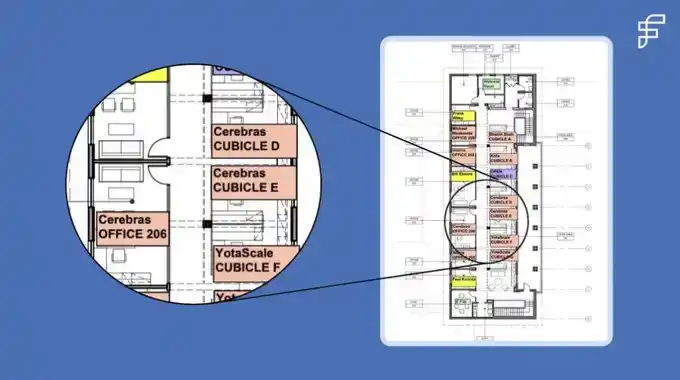

A few weeks later, Andrew, Gary, Sean, Michael, and JP moved into our entrepreneur-in-residence (EIR) office space on the second floor at 250 Middlefield. I still have the floor plan our office manager drew at the time. On that map, Cerebras sat next to a founder from Foundation, just a few doors down from Bhavin Shah, who later founded Moveworks. It was a good floor for growing startups.

Knowing Which Rules Can Be Bent, Which Must Be Broken

Before Cerebras, the largest chip in computing history was roughly 840 square millimeters, about the size of a postage stamp. The chip Cerebras built measures 46,000 square millimeters, 58 times larger than that previous record-holder.

Choosing a wafer-scale chip also meant taking on every downstream design challenge that came with it. In the nearly 80-year history of computing, no one had truly pulled this off, which also meant no one had systematically solved the problems it raised. How do you power a chip that large? How do you cool it? How do you maintain electrical continuity across tens of thousands of connection points?

To make wafer-scale computing work, Cerebras essentially had to reinvent nearly every facet of modern computing at once: semiconductors, systems, data structures, software, and algorithms. Each of these alone could have been a startup. Andrew and his team chose to attack the hardest technical problems first, and through intense, almost tireless effort, they solved them one by one.

Every six to eight weeks, we held a board meeting. The team would walk us through what they had tried since the last one: a new system design variant, a new power delivery scheme, or a thermal management adjustment. By confronting these systemic challenges head-on, again and again and from every angle, they developed a hard-won clarity in how they explained them. They would tell us where they thought things had gone wrong and what they planned to try next.

We would ask questions, dig in with the team, and mobilize the people, resources, and connections they needed to find new approaches. Six to eight weeks later, the story would repeat at another technical frontier, another boundary that needed exploring. Each solution revealed the next problem to solve.

Their first prototype wafer literally smoked the first time they powered it on. The team called it a "thermal event"—what you call a fire when you don't want to scare the board or the landlord.

I had been calculating the power consumption per square millimeter, partly out of curiosity and partly because the numbers seemed too high to be true. So we brought in engineers from Exponent, a failure analysis firm formerly known, aptly, as Failure Analysis Associates. They confirmed that the power numbers were every bit as audacious as they looked and helped us think through options that didn't require defying the second law of thermodynamics. That, after all, was one law Andrew was smart enough not to argue with.

An engineer's discipline lies in knowing which rules can be broken, which can be bent, and which must be respected. Andrew and his team had a well-practiced intuition for that distinction. They knew when they were challenging convention, which they fully intended to do, and when they would be challenging physics, which they did not.

When you're building frontier technology, failure is inevitable. The only way through it is discipline, persistence, and most importantly, trust: trust in the mission, trust in each other, and trust in the idea that when the first prototype self-destructs, you'll all be back in the lab the next morning for the next iteration.

There's no transactional version of this work. There's only the long-term version: staying in the room, through the incomplete solutions and patient explanations, so that when it finally works, you are there to see it.

That moment arrived in August 2019. Andrew, Sean, and the team stood in the lab and watched a computer they had designed from scratch run for the first time. To an outsider, it didn't look like it was doing anything interesting; by Andrew's account, it was about as exciting as watching paint dry. The difference was that no bucket of paint like this had ever dried before. They stood there together for 30 minutes, then went back to work.

Who You Build With Matters

Some people choose problems based on what they know they can solve. Andrew chooses problems based on what he believes is worth solving. Incremental iteration doesn't excite him; he wants 1000x leaps. From day one, he wanted to build Cerebras into a generational, one-of-a-kind company.

Part of that drive comes from his personality. Andrew describes it as a computer architect's "disease": being haunted by a single idea for decades. To me, it's more broadly a founder's disease. He looks at a problem and first asks himself: Can I build something that delivers a step-function improvement? Then he asks: If I succeed, will anyone care? If the answer to both is yes, he will commit the next decade of his life to it.

Another part of that drive comes from his upbringing. Andrew grew up around geniuses the way most kids grow up around television. His father, a pioneering evolutionary biologist and professor, played rotating doubles tennis every Sunday with six other people. Three of those six later won Nobel Prizes, and one won a Fields Medal.

According to Andrew, these giants would patiently explain their work in physics, mathematics, and molecular biology to him in terms a child could understand. He came away with a lasting sense of what true intelligence looks like, and he also understood, as his mother put it, that being smart doesn't mean you have to be a jerk.

I've come to realize this is one of Andrew's core traits, as important as his rebellious ambition and his almost phototropic instinct for problems truly worth solving. He firmly believes that the most exceptional people he has met are often also extraordinarily kind.

This belief shaped how he built a team capable of incredibly hard things. The first 30 people Cerebras hired had all worked with him before; some had been with him since 1996. Today, Cerebras has about 700 employees, and roughly 100 of them have followed him across multiple companies.

Importantly, kindness and competitiveness are not mutually exclusive. Andrew has an intense desire to win. He likes to say he's a professional David, always fighting Goliath. Goliath is slow and braced for a frontal assault, which leaves him open to every other move. David's advantage lies in showing up in ways and places Goliath can't.

At SeaMicro, Andrew's largest channel partner in Japan was NetOne. NetOne's primary supplier was Cisco, which entertained partners on private jets and yachts worth more than most houses in Palo Alto. Andrew's budget was far more modest, so he invited NetOne's CEO to his backyard for a barbecue. The CEO later told him that in decades of doing business with Cisco, he had never once been invited to anyone's home. That small, deeply human gesture, something a Goliath would never think to do, cemented their relationship.

From the First Term Sheet to IPO

This morning, Andrew rang the opening bell at NASDAQ, and I stood beside him. It has been ten years and 2,600 miles since it all began in our office at 250 Middlefield.

Today there are still rare founders doing what Andrew did: sketching on whiteboards at 3 a.m. and wrestling with technical problems no one has yet solved. They, too, have a fierce aversion to losing and a rebellious streak. They are looking for a partner who is truly willing to work side by side: one who will dive in and help solve the problem when the first prototype won't power on, and who will stay until it finally runs.

These are precisely the founders I want to back: those who choose problems worth solving, imagine a solution 1000x better than the status quo, and keep refining their work and persevering through the inevitable setbacks along the way.

For founders like Andrew, Gary, Sean, Michael, and JP, I'm willing to climb over a backyard fence on a Saturday afternoon to hand-deliver a term sheet.