Author: David, Deep Tide TechFlow

Netflix has never been as profitable as it is now, yet its founder chose this moment to leave.

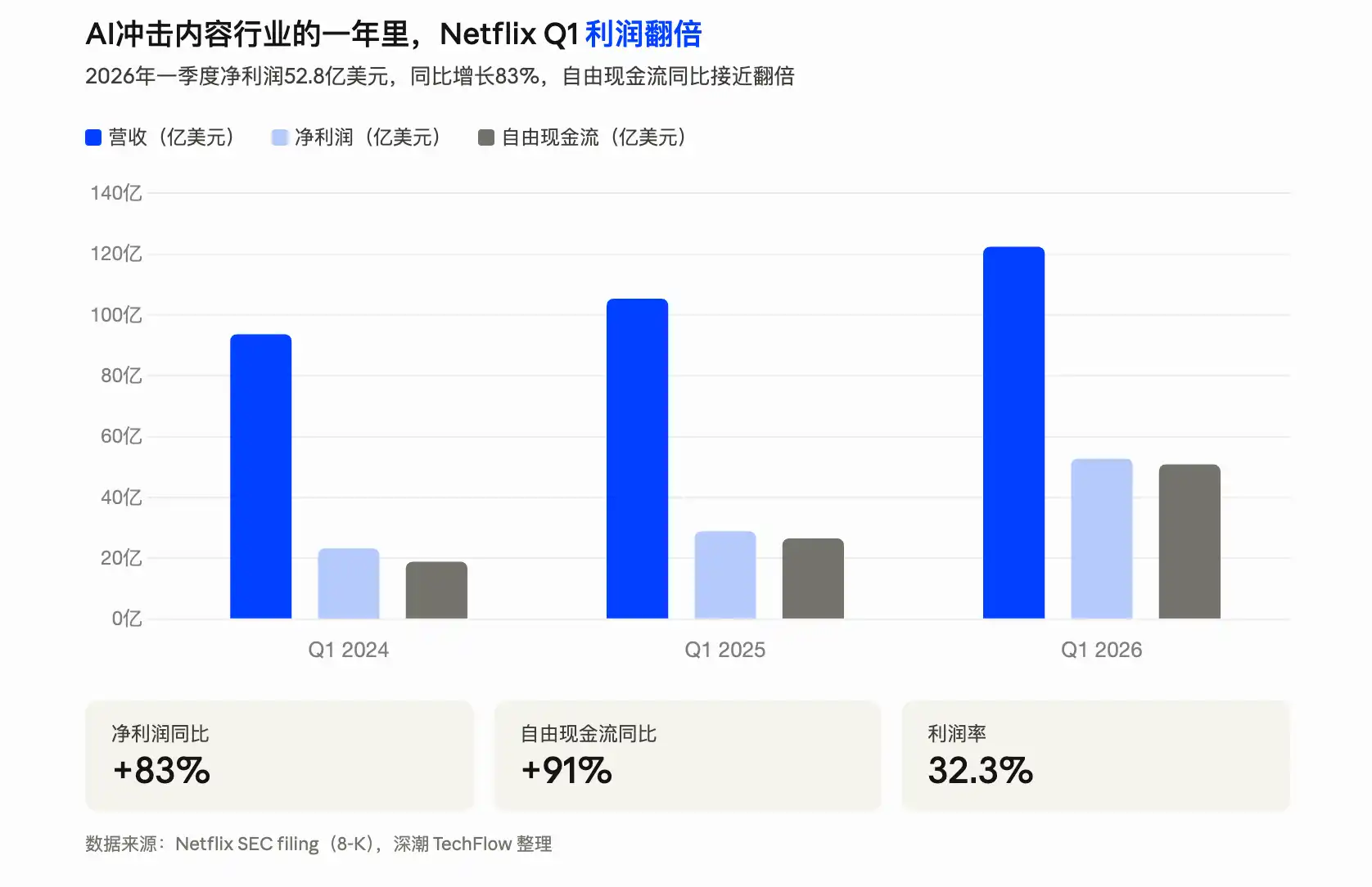

On April 16, Netflix released its Q1 2026 financial report: revenue of $12.25 billion, up 16% year-over-year, and net profit up 83% year-over-year. Earnings per share were $1.23, roughly 60% above Wall Street's expectation of $0.76.

But the report also carried a second announcement: co-founder and current chairman Reed Hastings will not seek re-election when his term ends in June.

Hastings founded Netflix in 1997 and spent nearly 30 years building it from a DVD-by-mail business into a streaming giant with more than 325 million paid subscribers worldwide. In 2023, he handed the CEO role to his successor and stepped back to chairman. Now he is leaving the chairman position too.

In the filing submitted to the U.S. Securities and Exchange Commission, Netflix specifically wrote: "This decision is not related to any disagreement with the company."

But the more firmly a company insists there is no disagreement, the more people wonder what he actually plans to do next.

One detail drew little attention: in May last year, Hastings joined the board of Anthropic. For nearly 30 years, his business has essentially been about getting people to pay for content. Anthropic's Claude does not generate video directly, but it is changing the way content is produced.

From text to images to video, the cost is getting lower and the speed faster.

Netflix's profitability relies on good content being worth paying for. If AI lowers the barrier to content creation enough, does this premise still hold?

Hastings is clearly already thinking about this question.

What is he afraid of?

Netflix is one of the world's top content producers and distributors, and its founder has long had an intellectual interest in AI.

You might not know this, but in 1988 Hastings was studying for a master's degree in AI at Stanford. Yes, nearly 40 years ago he was already researching artificial intelligence. It's just that the AI of that era was nothing like the useful tool it is today...

In 2022, Hastings was invited to speak at Stanford University's graduation ceremony.

He later brought up those AI studies himself, sounding like someone joking about a wrong turn taken in his youth. AI didn't work out, so he started a software company instead, and later founded Netflix, where he stayed for nearly 30 years.

Someone who once studied AI was never going to stop paying attention to the field.

In a 2024 interview discussing AI, he was quite relaxed: "AI will help us become more creative; we can use these tools to make more shows." Back then, his attitude was one of embrace. AI was a tool, here to help, not to take jobs.

In March 2025, he donated $50 million to his alma mater, Bowdoin College.

This liberal arts college in Maine doesn't work on large models; Hastings gave them money for a research initiative called "AI and Humanity," specifically studying the impact of AI on work, education, and human relationships.

On the day of the donation, he said something completely different from his relaxed tone a year earlier: "We are fighting for the survival and prosperity of humanity."

Within a year, as AI advanced rapidly, his framing shifted from AI as a helper at work to AI as a question of humanity's survival.

Two months later, he joined the board of Anthropic.

He was appointed by an independent body called the "Long-Term Benefit Trust," whose five members hold no Anthropic stock, with the sole duty of ensuring AI development aligns with humanity's long-term interests.

In March of this year, he spelled it out most clearly in another interview. The host asked him what the biggest risk facing Netflix was; he skipped over competitors and subscriber growth and answered with just two letters:

AI.

He said if AI makes the free content on YouTube cool and attractive enough, and all the young people go watch free content, then who will pay for Netflix?

From public information, you can find Hastings calling himself an "extreme techno-optimist." He doesn't think AI itself is bad; the problem lies in the speed gap.

AI technology is advancing too fast, and humanity's moral and institutional systems can't keep up.

This explains his seemingly contradictory choices over the past year. He donated not to a technical AI lab but to a liberal arts college, and he joined not the advisory board of some commercial AI venture but Anthropic, in a seat appointed by a trust charged with safeguarding humanity's long-term interests.

The author believes Hastings is more qualified than most to worry about whether AI will disrupt industries.

Netflix itself was the disruptor in the last cycle. It used streaming to kill DVD rentals, cripple cable TV, and force all of Hollywood to rebuild its distribution system. Hastings personally carried out the playbook: use new technology to drive content and distribution costs low enough to kill the previous winners.

Now he looks at AI and probably wonders who's next.

So Hastings is simultaneously a major shareholder of Netflix and a director at Anthropic. While holding shares in the company he founded, he has taken a seat in the industry that might disrupt it.

This looks less like retirement than hedging.

Despite the AI impact, Netflix has actually never been better

Four years ago, Netflix was a company with just over $30 billion in annual revenue and a profit margin below 20%, with Wall Street pressing it on when it would start making real money. Four years later, this earnings report provided the answer.

In Q1 2026, net profit was $5.28 billion, up 83% year-over-year. Free cash flow was $5.09 billion, almost double that of the same period last year. Meanwhile, the profit margin reached 32%. The full-year revenue guidance is $50.7 to $51.7 billion. If they actually achieve this by year-end, it would mean Netflix's revenue has nearly doubled in three years.

Beyond daily operations, Netflix is not blind to AI either.

A few weeks ago, it spent up to $600 million to acquire InterPositive, a company that makes AI-assisted film and television production tools, using AI to accelerate script development, scene previews, and post-production. Netflix also specifically mentioned generative AI in its earnings letter, saying it will use it to improve content production and user experience.

Using AI to reduce production costs and improve efficiency is a sound strategy. In fact, Hollywood and the wider content production industry are all moving in this direction.

But the concern Hastings voiced in that interview is probably about a different problem.

In February of this year, ByteDance released its video generation model Seedance 2.0. Upload a photo, and in 60 seconds it generates a 2K video with camera movement, sound effects, and lip-syncing.

At the time, Feng Ji, producer of "Black Myth: Wukong," tested it and summed it up in one line: "The childhood era of AIGC is over." Director Jia Zhangke posted on Weibo saying he was preparing to use it to make a short film...

More concrete numbers come from within the industry. According to Securities Times reports, in the e-commerce advertising sector, one person using Seedance 2.0 can complete in 30 minutes what used to take 7 people 3 days, with a cost reduction of over 99%.

Extras in Hengdian, video editors, special effects producers: people across the entire industry chain are talking about the same thing, job anxiety.

Gong Yu, founder of iQiyi, publicly stated a judgment late last year: AI could reduce the cost of the film and television industry by an order of magnitude, increase the number of creators by an order of magnitude, and increase the number of works by two orders of magnitude.

Netflix using AI to reduce production costs is equivalent to improving efficiency within the existing model. But what Seedance and others are doing is lowering the barrier to "making video" from millions of dollars to a few dollars.

The future Hastings spoke of, where "free content on YouTube becomes good enough," is becoming reality step by step.

Of course, all of this may not be directly related to his decision to leave Netflix now. He started handing over power in 2023, first the CEO role and now the chairmanship, in a transition that has run at least three years.

It's just that the timing is indeed delicate. Netflix delivered its best-ever financial report, and the stock fell 8% after hours. On the same day, the founder announced his complete departure.

After June, Hastings' name will disappear from Netflix's board list.

His current titles are Director at Anthropic, Director at Bloomberg, and owner of a ski resort in Utah. He still holds Netflix stock; Forbes estimates his net worth at $5.8 billion, mostly tied to Netflix.

He holds Netflix's money while sitting at AI's table.

As for whether this choice is foresight or overcaution, we might only get the answer when AI can truly produce a movie that audiences are willing to watch till the end.