Original | Odaily Planet Daily (@OdailyChina)

Author | Asher (@Asher_0210)

On January 19, Perle Labs, an AI training data platform driven by human experts, announced on X that its beta ended on January 6 and that the Season 1 campaign has officially launched. Users can earn points by completing various tasks.

Below, Odaily Planet Daily will give you a quick overview of Perle Labs and a step-by-step guide to participating in the Season 1 campaign to earn points and potentially qualify for a future token airdrop.

Perle Labs: An AI Training Data Platform Driven by Human Experts

Project Introduction

Perle Labs is an AI training data platform driven by human experts, focused on providing high-quality datasets to support decentralized AI development. According to RootData, Perle Labs has raised a total of $17.5 million in funding to date.

On the Perle Labs platform, experts such as doctors and financial analysts perform data annotation. Each step is recorded on the Solana blockchain, making it tamper-proof and fully traceable. Clients such as pharmaceutical companies and banks can access this "certified" data directly via API.

Step-by-Step Interaction Tutorial

Interaction Link: https://app.perle.xyz/

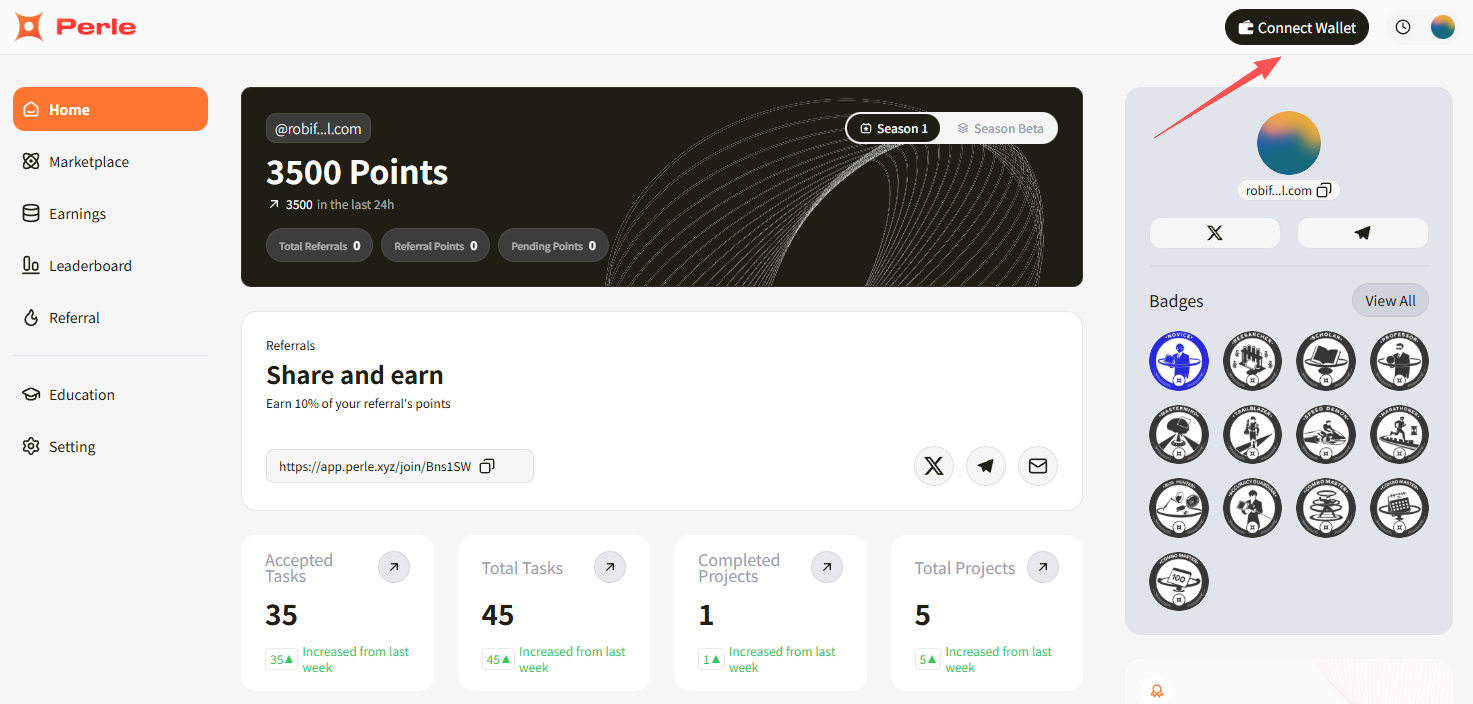

STEP 1. Click "Connect Wallet" and connect a wallet that supports the Solana network. After connecting, you will receive a personal referral link; inviting friends earns you 10% of the points they earn. Make sure the connected wallet holds a small amount of SOL, since each task requires on-chain confirmation.

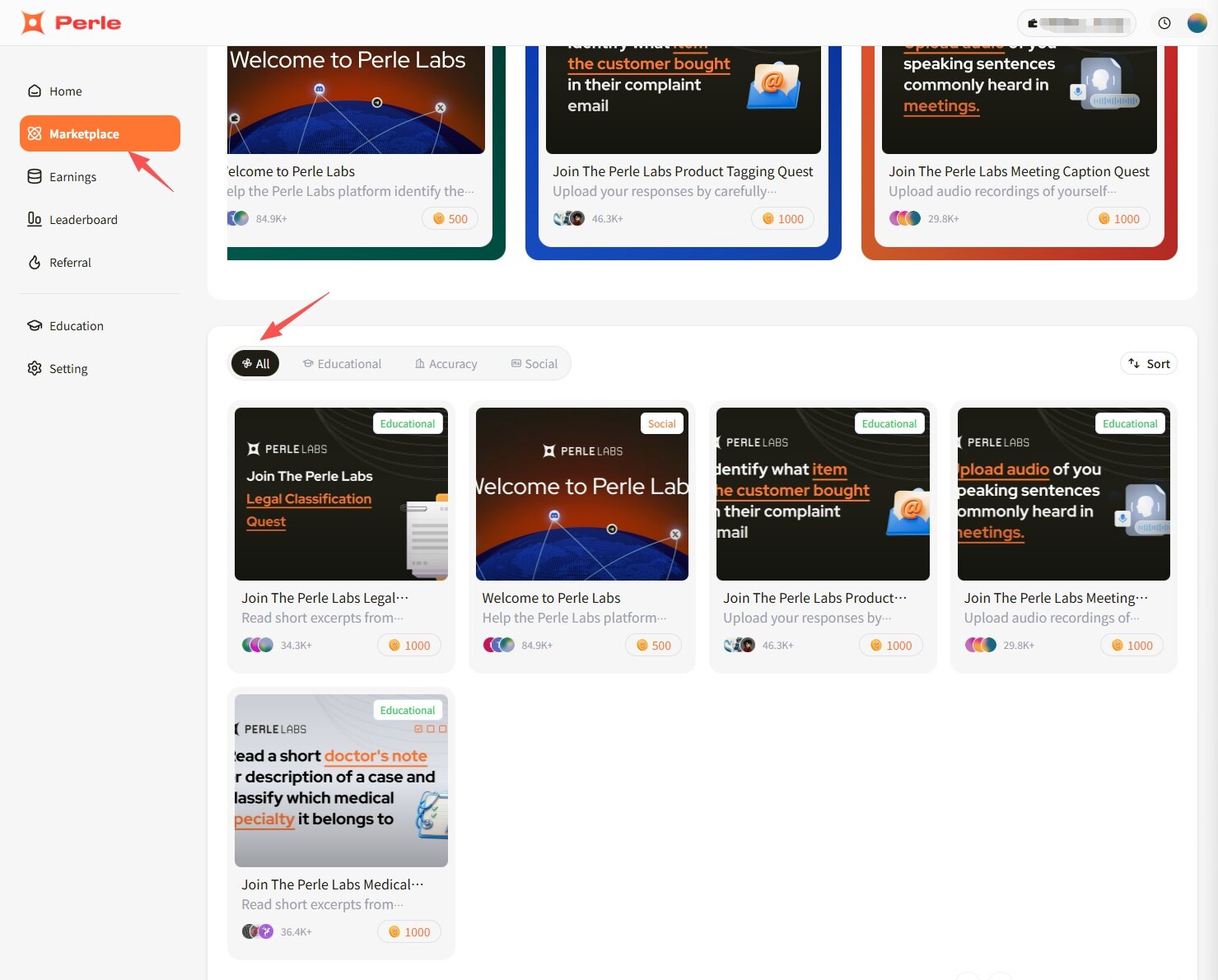

STEP 2. Click "Marketplace". Five tasks are available.

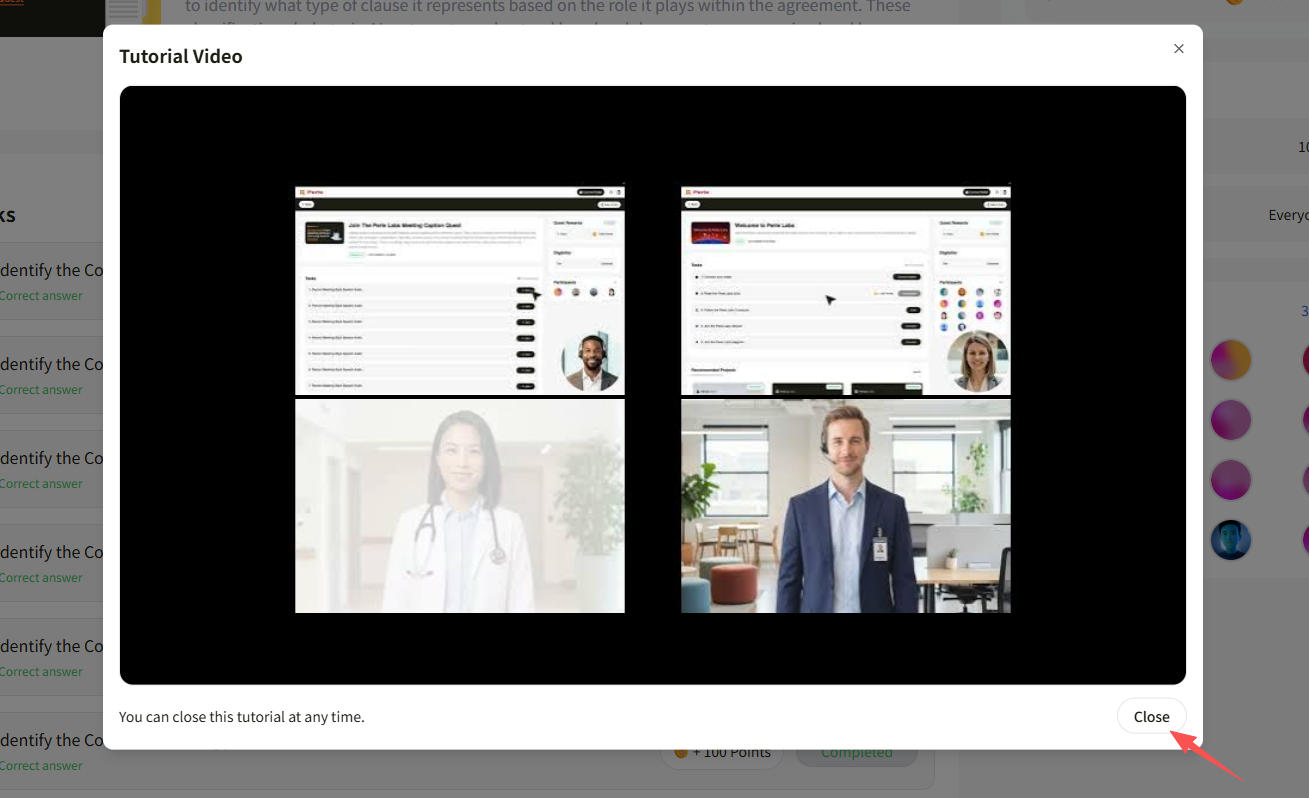

Except for the social binding task, each task is unlocked only after you watch its instructions in full.

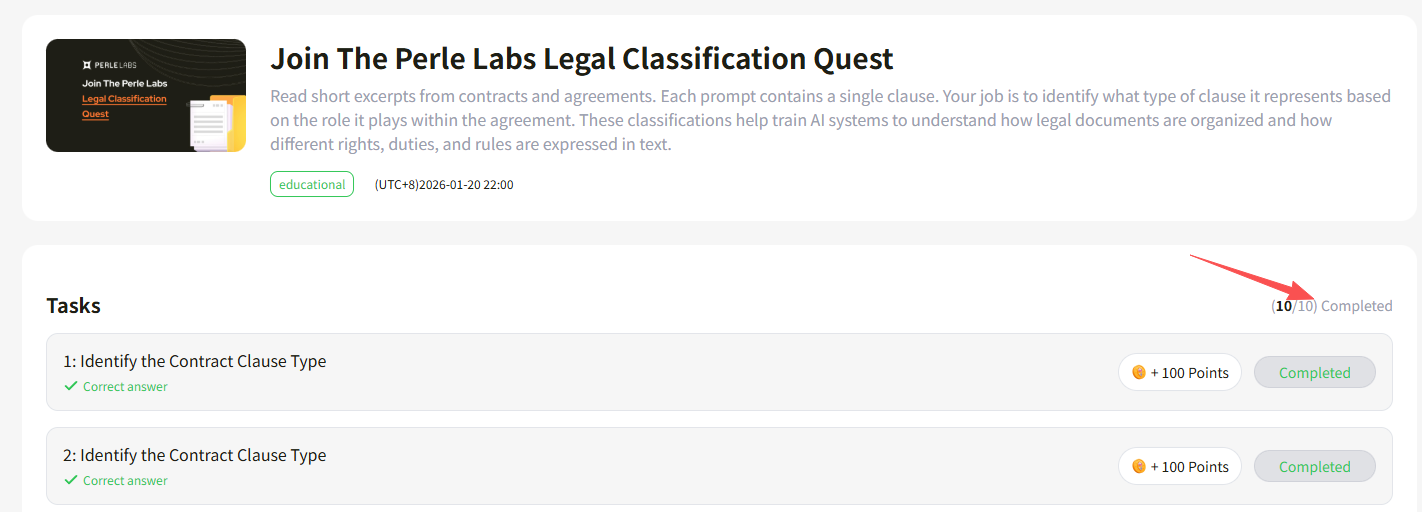

Task 1. Join The Perle Labs Legal Classification Quest: Read excerpts from legal contracts and select the corresponding clause (the questions are highly specialized; using AI tools for assistance is recommended). 10 questions, 100 points each, 1000 points total.

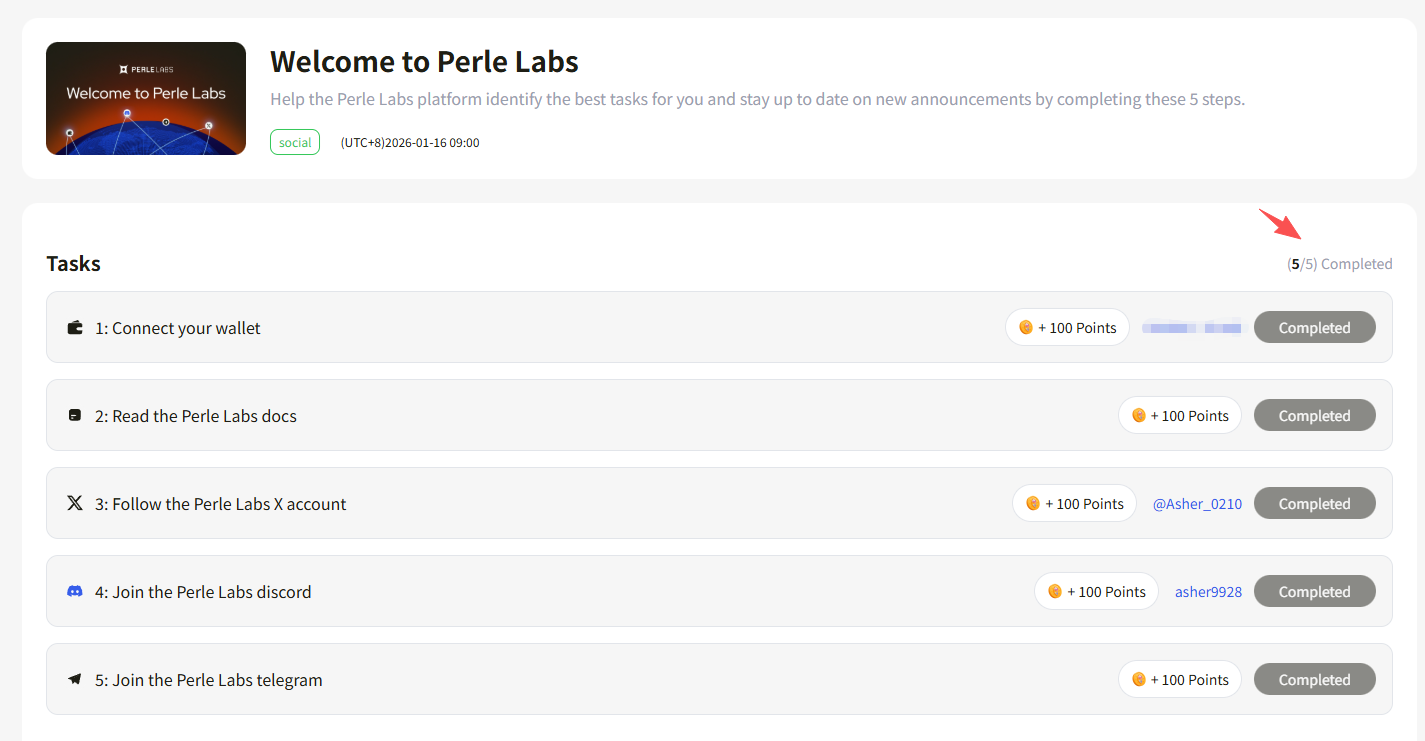

Task 2. Welcome to Perle Labs: Bind your personal X, Discord, Telegram, etc. 5 tasks total, 100 points each, 500 points total.

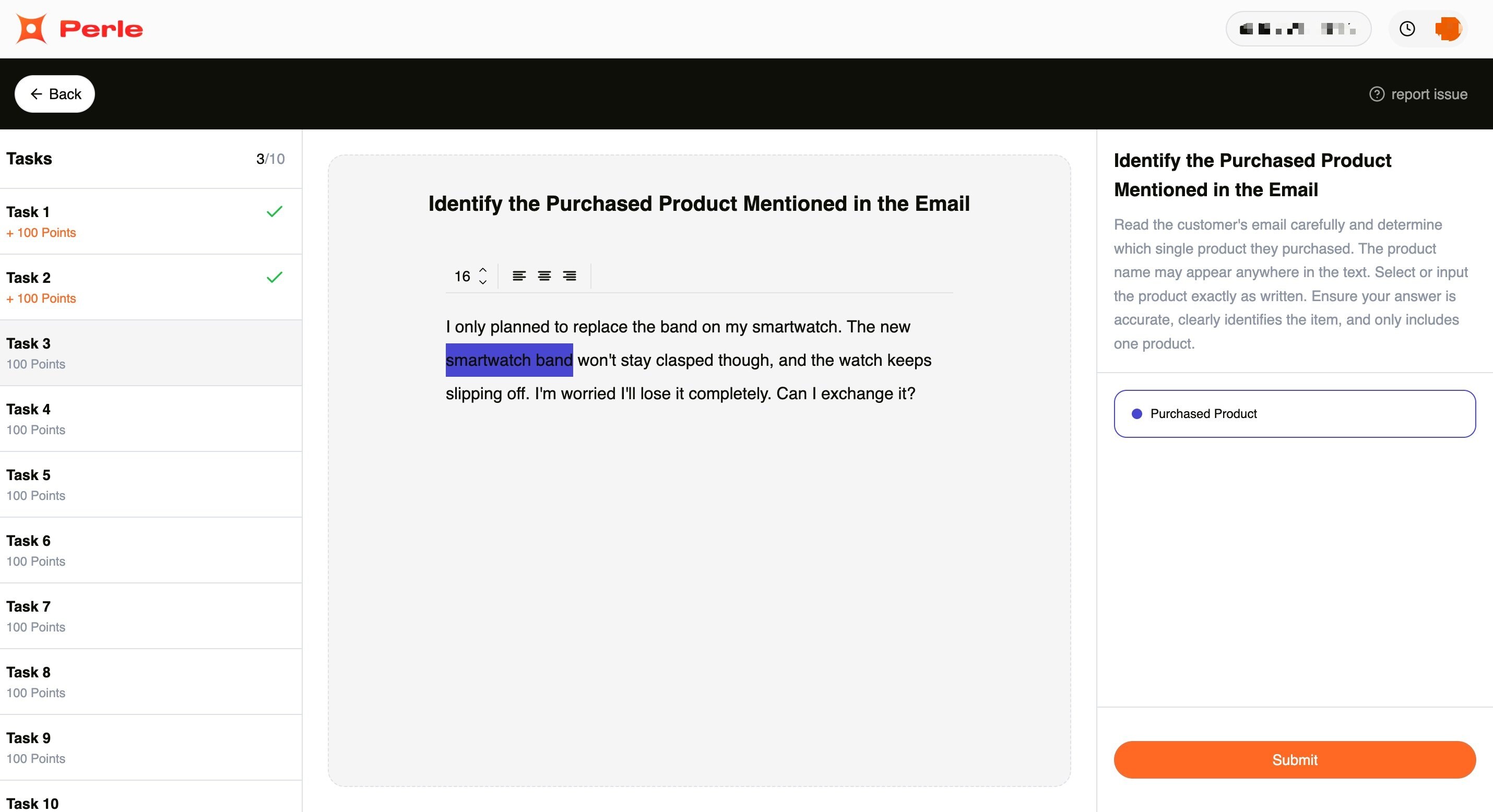

Task 3. Join The Perle Labs Product Tagging Quest: Find the mentioned product in complaint email messages. 10 questions, 100 points each, 1000 points total.

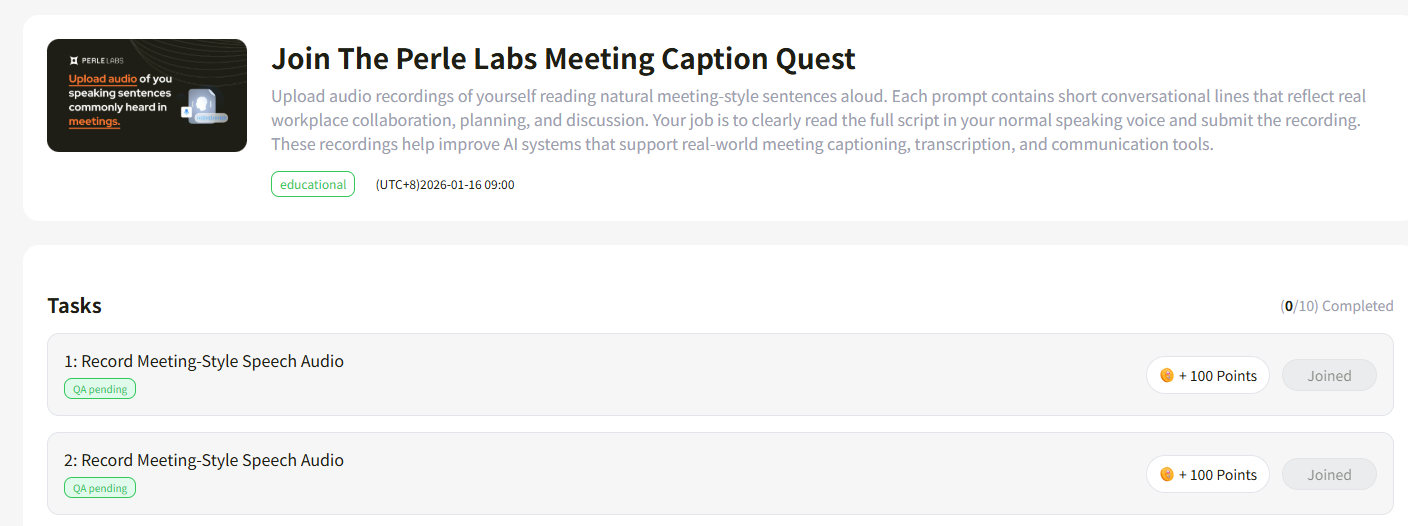

Task 4. Join The Perle Labs Meeting Caption Quest: Read sentences aloud in English. 10 questions, 100 points each, 1000 points total. Audio submissions require manual review and will not show as completed immediately; you only need to submit the recording on-chain.

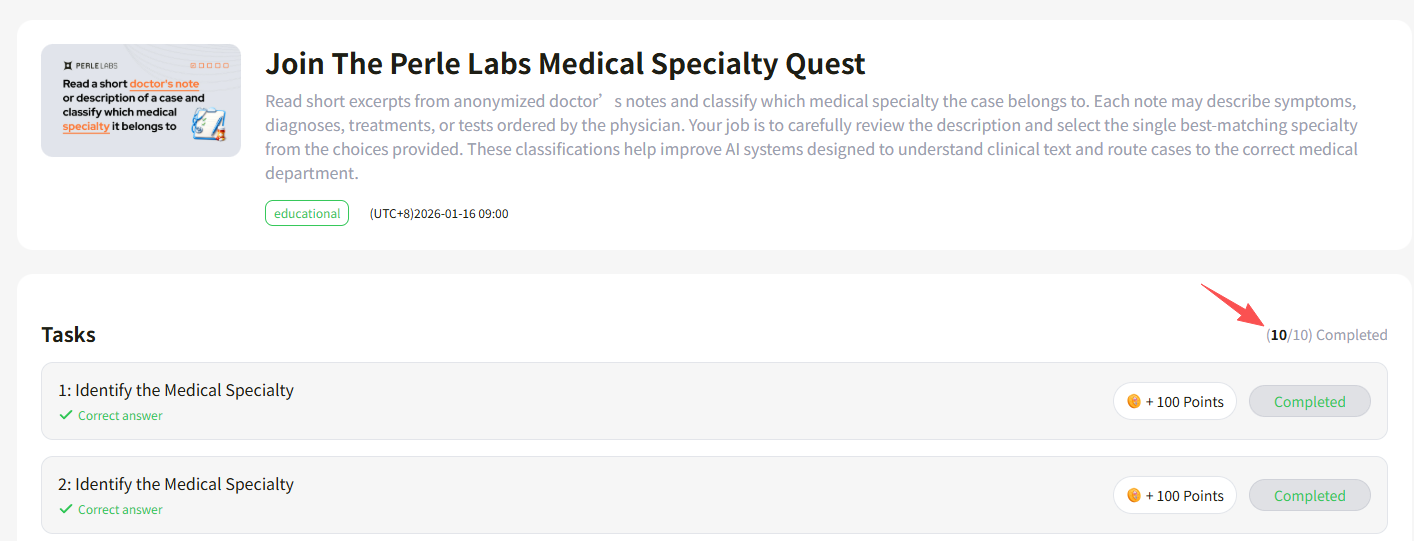

Task 5. Join The Perle Labs Medical Specialty Quest: Read doctors' notes and determine the medical specialty of each case (the questions are highly specialized; using AI tools for assistance is recommended). 10 questions, 100 points each, 1000 points total.

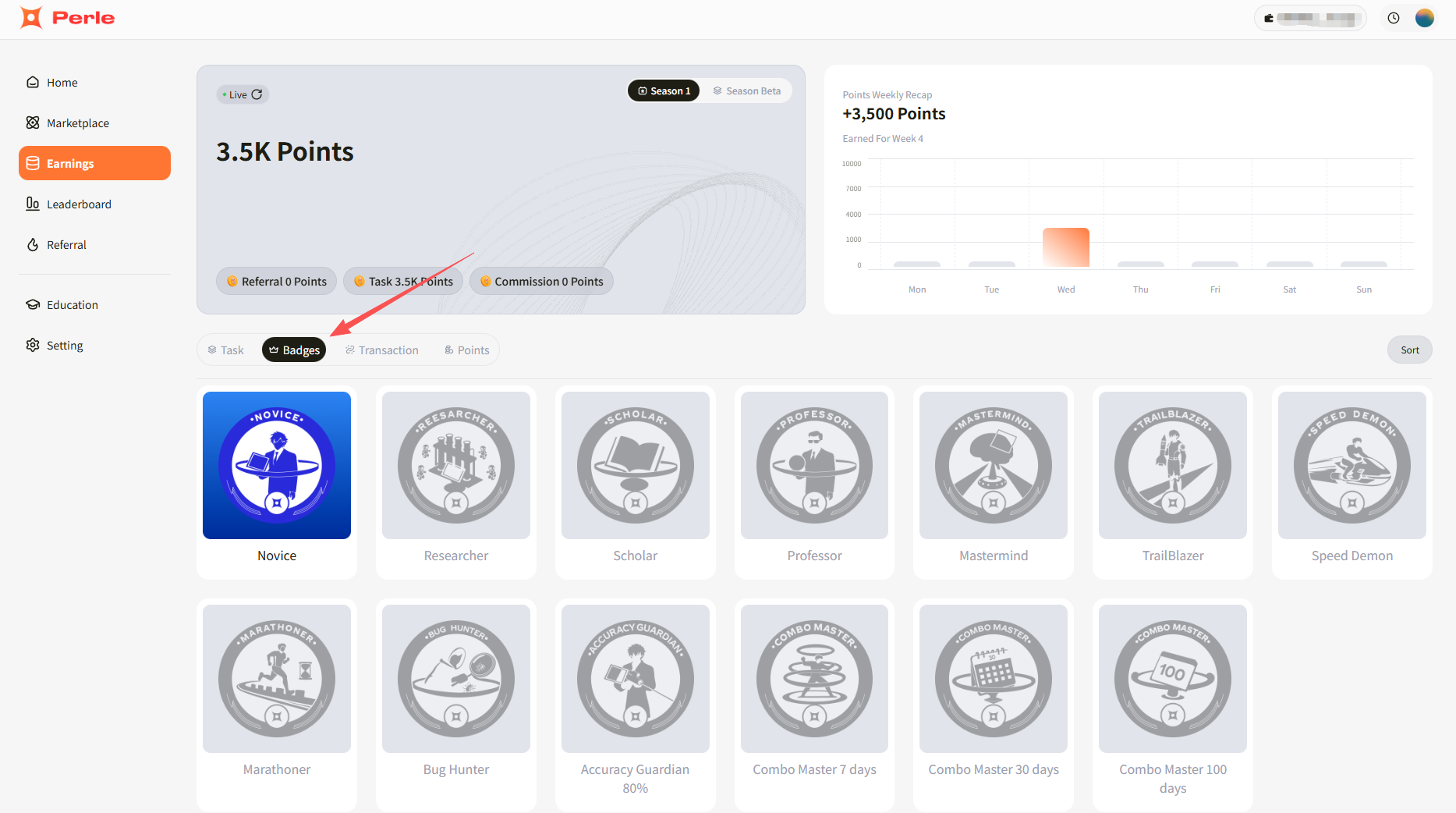

STEP 3. Click "Earnings" to view your points and badge acquisition status.
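
For reference, the point values listed for the five tasks, plus the 10% referral share mentioned in STEP 1, can be tallied with a short Python sketch. The names `TASKS`, `task_total`, and `referral_bonus` are illustrative only and are not part of any Perle Labs API. How the platform rounds referral points is not stated, so rounding down here is an assumption.

```python
# Point values as listed in this tutorial: (number of questions or sub-tasks, points each).
TASKS = {
    "Legal Classification Quest": (10, 100),
    "Welcome to Perle Labs (social binding)": (5, 100),
    "Product Tagging Quest": (10, 100),
    "Meeting Caption Quest": (10, 100),
    "Medical Specialty Quest": (10, 100),
}

# Referral share stated in STEP 1 (10% of the points an invited friend earns).
REFERRAL_RATE_PERCENT = 10


def task_total(tasks=TASKS):
    """Sum the maximum points available across all listed tasks."""
    return sum(count * points for count, points in tasks.values())


def referral_bonus(friend_points, rate_percent=REFERRAL_RATE_PERCENT):
    """Estimate referral points; rounding down is an assumption, not documented behavior."""
    return friend_points * rate_percent // 100


if __name__ == "__main__":
    print("Maximum points from the five tasks:", task_total())
    print("Referral points if one friend completes everything:", referral_bonus(task_total()))
```

Under these figures, completing all five tasks yields 4,500 points, and a friend who does the same would add an estimated 450 referral points. The Earnings page remains the authoritative record, particularly for Task 4, which is credited only after manual review.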