BitMine Immersion Technologies hit a major milestone on Wall Street today, marking a big start to Q2.

On Thursday, the firm was uplisted to the New York Stock Exchange (NYSE) from the ‘smaller’ NYSE American. In a statement, Tom Lee, chairman of the world’s largest Ethereum treasury, said that being uplisted to the “big board” is a “major milestone.” He added,

“The NYSE is the most prestigious venerable stock exchange with a storied history.”

This could increase the firm’s visibility and, by extension, its trading volume.

Additionally, the firm’s board approved an expansion of its share buyback program from $1 billion in 2025 to $4 billion. Commenting on the larger buyback, Lee said,

“Bitmine’s expanded $4 billion buyback reflects our commitment to shareholders. There may be a time in the future when Bitmine shares are trading below intrinsic value, and the Company wants to be in a position to accretively retire common shares.”

This suggests the firm could begin repurchasing shares if its mNAV (market-to-net-asset-value ratio) falls below 1, that is, when its shares trade at a discount to the value of its ETH holdings.
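The mNAV check described above can be sketched in a few lines of Python. This is only an illustration: it treats net asset value as the dollar value of ETH holdings alone (ignoring cash, other assets, and liabilities), and the function names and the market cap and ETH price used in the example are hypothetical, not figures from BitMine.

```python
def mnav(market_cap_usd: float, eth_held: float, eth_price_usd: float) -> float:
    """Market cap divided by the USD value of ETH holdings (simplified NAV)."""
    nav_usd = eth_held * eth_price_usd
    if nav_usd <= 0:
        raise ValueError("NAV must be positive")
    return market_cap_usd / nav_usd


def trades_at_discount(ratio: float) -> bool:
    """True when the shares are priced below the value of the holdings."""
    return ratio < 1.0


# Hypothetical inputs, chosen only to illustrate the arithmetic.
example_ratio = mnav(market_cap_usd=9.0e9, eth_held=4.8e6, eth_price_usd=2000.0)
print(f"mNAV = {example_ratio:.4f}, discount = {trades_at_discount(example_ratio)}")
```

With these made-up inputs the ratio comes out below 1, which is the scenario in which a buyback would retire shares for less than the value of the ETH backing them.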

The $4 billion program ranks BitMine among the 10 firms with the largest corporate buybacks.

BitMine’s holdings hit 4.8M ETH

Separately, the firm reported that it now holds 4.8 million ETH and is 79% of the way to its target of holding 6 million ETH, or the ‘5% Alchemy.’ In the past week alone, it bought 40,000 ETH.
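As a rough check on these figures, the progress toward the target can be worked out from the rounded numbers in the report. Note that the rounded inputs give about 80%, slightly above the 79% the firm reported; the gap is presumably down to rounding or to the exact target used, which is an assumption here. The projection at the end assumes last week's purchase pace continues unchanged, which is purely illustrative and not a forecast.

```python
ETH_HELD = 4_800_000        # rounded holdings, as reported
ETH_TARGET = 6_000_000      # stated target
WEEKLY_PURCHASE = 40_000    # last week's purchases, as reported


def progress(held: int, target: int) -> float:
    """Fraction of the target already held."""
    return held / target


def weeks_to_target(held: int, target: int, weekly: int) -> float:
    """Weeks needed to close the gap at a constant weekly purchase pace."""
    if weekly <= 0:
        raise ValueError("weekly purchase pace must be positive")
    return max(target - held, 0) / weekly


pct_done = progress(ETH_HELD, ETH_TARGET) * 100
remaining_eth = ETH_TARGET - ETH_HELD
weeks_left = weeks_to_target(ETH_HELD, ETH_TARGET, WEEKLY_PURCHASE)
print(f"{pct_done:.1f}% done, {remaining_eth:,} ETH to go, "
      f"about {weeks_left:.0f} weeks at the current pace")
```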

Unlike Strategy, which holds BTC purely for price appreciation and passes that volatility through to its MSTR stock, BitMine is targeting steady annual revenue.

The firm recently launched the MAVAN staking platform and plans to stake its entire ETH holdings on it. At current staking reward rates, BitMine could earn $300 million annually. The MAVAN platform won’t stop at ETH, either, with a planned expansion to other Proof-of-Stake (PoS) chains like Solana.
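To put the staking estimate in perspective, the figures above imply a dollar reward per staked ETH, and, given an ETH price, an implied annual yield. The sketch below assumes the full 4.8 million ETH is staked. The ETH price is a hypothetical placeholder, not a quoted market price, so the resulting yield is illustrative only.

```python
ANNUAL_REWARD_USD = 300_000_000   # estimated annual staking revenue from the article
ETH_STAKED = 4_800_000            # assumes the entire reported holding is staked


def reward_per_eth(annual_reward_usd: float, eth_staked: float) -> float:
    """USD earned per staked ETH per year."""
    return annual_reward_usd / eth_staked


def implied_yield(annual_reward_usd: float, eth_staked: float,
                  eth_price_usd: float) -> float:
    """Annual reward as a fraction of the staked position's USD value."""
    return annual_reward_usd / (eth_staked * eth_price_usd)


usd_per_eth = reward_per_eth(ANNUAL_REWARD_USD, ETH_STAKED)

# Hypothetical ETH price, used only to show how the yield would be derived.
HYPOTHETICAL_ETH_PRICE_USD = 2_500.0
yield_fraction = implied_yield(ANNUAL_REWARD_USD, ETH_STAKED,
                               HYPOTHETICAL_ETH_PRICE_USD)
print(f"${usd_per_eth:.2f} per ETH per year, "
      f"implied yield {yield_fraction:.2%} at the assumed price")
```

A higher ETH price with the same dollar revenue would mean a lower implied yield, so the $300 million figure depends on both the reward rate and the price at the time of the estimate.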

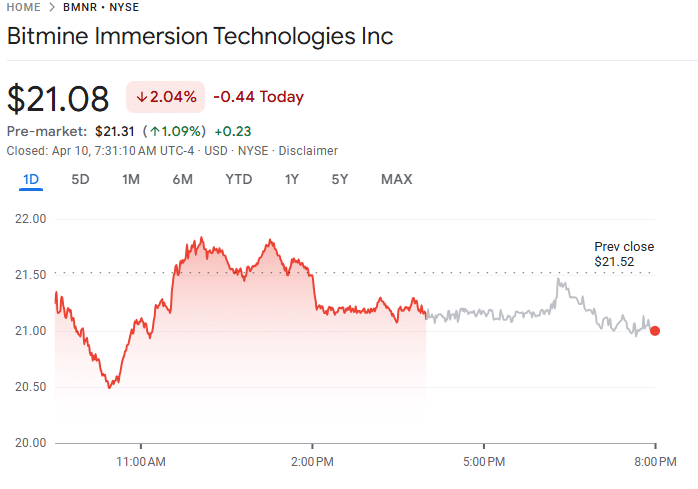

Meanwhile, BitMine’s stock, BMNR, slipped 2% to close Thursday’s session at $21.29 despite the bullish update. On a year-to-date (YTD) basis, BMNR and ETH have posted similar losses of 22% and 26%, respectively.

Final Summary

- BitMine was uplisted to the NYSE, an upgrade from NYSE American, giving it extra visibility among Wall Street investors seeking indirect exposure to ETH.

- The treasury firm now holds 4.8 million ETH and is about 20% away from hitting its goal of holding 6 million ETH.