Original | Odaily Planet Daily (@OdailyChina)

Author | Azuma (@azuma_eth)

On the evening of February 27, OpenAI announced the completion of its latest funding round of $110 billion at a pre-money valuation of $730 billion.

The funding for this round came from three giants: Amazon invested $50 billion (an initial investment of $15 billion, with the remaining $35 billion to be delivered over the coming months upon meeting specific conditions), NVIDIA invested $30 billion (which will be recouped through the procurement of a total of 5 GW of computing power), and SoftBank also invested $30 billion.
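The figures above can be checked with simple arithmetic. The sketch below uses only the numbers reported in this article; the post-money figure is an assumption that follows the standard definition (pre-money valuation plus new money) and counts Amazon's conditional tranche as fully delivered. The variable names are illustrative.

```python
# Figures in billions of US dollars, as reported in the article.
investments = {"Amazon": 50, "NVIDIA": 30, "SoftBank": 30}
amazon_tranches = {"initial": 15, "conditional": 35}
pre_money = 730

# The three commitments should add up to the announced round size.
round_total = sum(investments.values())

# Assumption: post-money = pre-money + new money, with every tranche delivered.
post_money = pre_money + round_total

# Share of the company implied for the new money under that assumption.
new_money_share = round_total / post_money

print(f"Round total: ${round_total}B")
print(f"Implied post-money valuation: ${post_money}B")
print(f"Implied share for new money: {new_money_share:.1%}")
```

If Amazon's remaining $35 billion is not delivered, the round total and the implied post-money valuation would both be lower.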

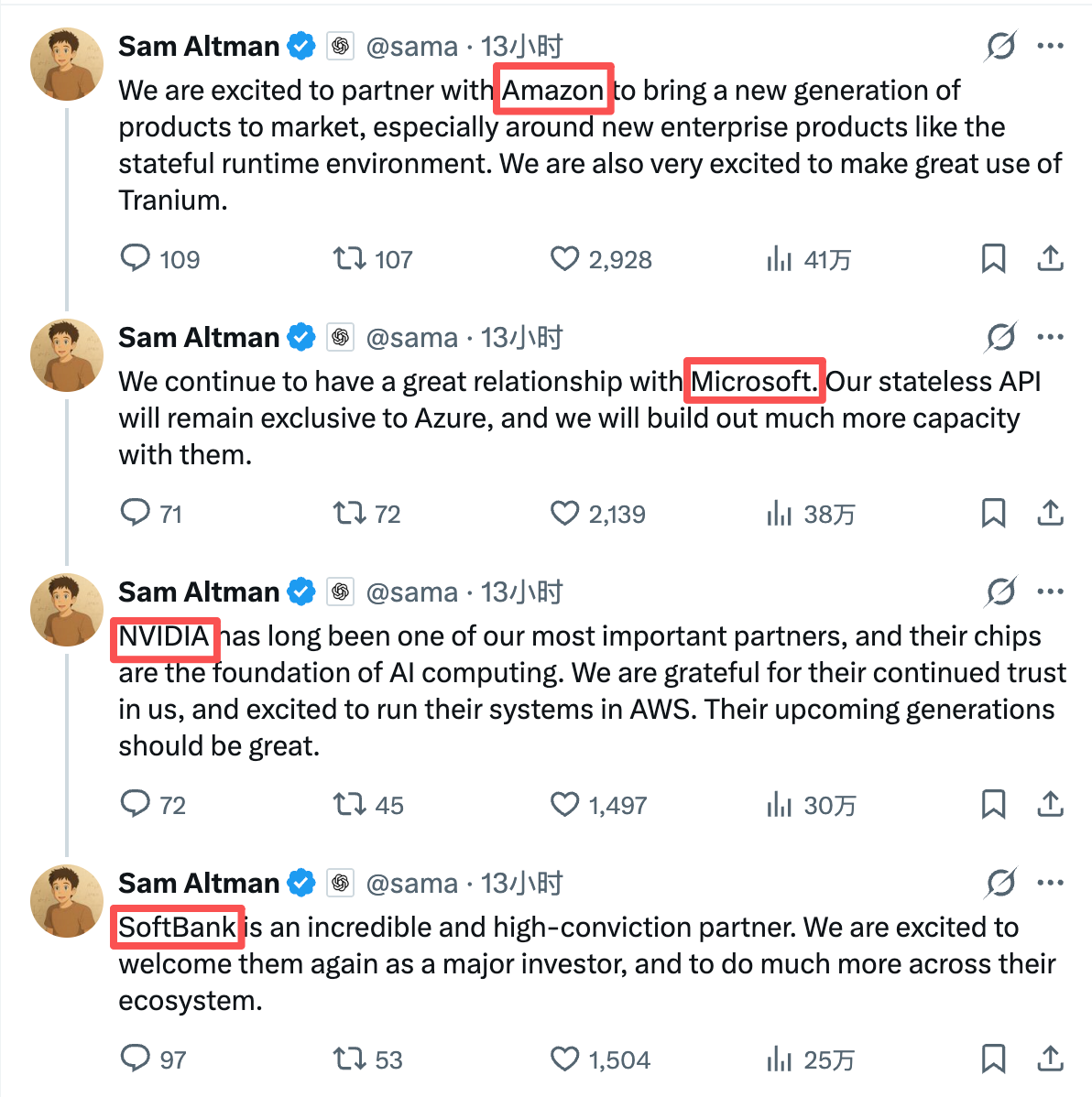

After the funding was completed, OpenAI CEO Sam Altman thanked the investors on his personal X account. Notably, his order of thanks was Amazon, Microsoft, NVIDIA, SoftBank: Microsoft, the "old" shareholder and key partner that did not contribute to this round, was named immediately after Amazon, which committed the most funding.

Aakash Gupta, an overseas blogger who has long followed the AI sector, pointed out that while most people are focused on the astronomical figure of $110 billion, the most critical piece of information in Sam Altman's statement lies in two overlooked technical terms: "Stateless API" and "Stateful Runtime Environment," which were attributed to Microsoft and Amazon, respectively.

Behind the Technical Terms: The Present and Future of AI

The core difference between Stateless API and Stateful Runtime Environment lies in the two words: "Stateless" and "Stateful".

"Stateless" means that the server does not maintain persistent state across requests. One call completes one inference: you ask a question, the AI answers, and once the request's lifecycle ends, the system neither retains context nor keeps running. "Stateful," by contrast, implies a persistent execution environment: the Agent keeps a memory of past interactions, continues to exist between requests, can collaborate across tasks, and can carry out long-running work.
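The distinction can be illustrated with a minimal sketch. This is not OpenAI's, Azure's, or AWS's actual interface; `fake_model`, `stateless_call`, and `StatefulSession` are hypothetical names invented for illustration, and the "model" simply reports how much context it was given.

```python
from dataclasses import dataclass, field


def fake_model(messages: list[str]) -> str:
    """Hypothetical stand-in for a model call: reports how much context it saw."""
    return f"reply to {messages[-1]!r} (context: {len(messages)} message(s))"


def stateless_call(prompt: str) -> str:
    """Stateless: each request is handled in isolation and nothing is retained."""
    return fake_model([prompt])


@dataclass
class StatefulSession:
    """Stateful: the environment keeps the conversation history between calls."""
    history: list[str] = field(default_factory=list)

    def send(self, prompt: str) -> str:
        self.history.append(prompt)
        reply = fake_model(self.history)
        self.history.append(reply)  # the reply is remembered for later turns
        return reply


# Two stateless calls: the second has no idea what "it" refers to.
first = stateless_call("Summarize the Q3 report")
second = stateless_call("Now email it to finance")

# Two calls in one stateful session: the second sees the earlier exchange.
session = StatefulSession()
s_first = session.send("Summarize the Q3 report")
s_second = session.send("Now email it to finance")

print(second)
print(s_second)
```

In the stateless case the caller would have to resend the earlier exchange with every request; in the stateful case the environment holds it, which is what lets an Agent carry a task across many steps.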

The Stateless API is currently the mainstream form of LLM commercialization. Industries such as finance, retail, manufacturing, and healthcare mostly integrate AI this way, embedding it into existing systems (for example, Q&A assistants, document summarization, and search enhancement). The advantage of this model is that enterprises can layer AI capabilities onto their existing architecture with minimal friction, improving functionality without significantly restructuring their organization or processes. However, as model capabilities converge, computing costs keep falling, and price competition intensifies, the token-based billing of Stateless APIs is likely to become standardized and commoditized, with profit margins under continued pressure.

In contrast, the Stateful Runtime Environment currently has limited commercial scale, but it represents a shift in business paradigm rather than simple "functional optimization": it can not only answer questions but also act as a digital workforce that actually executes tasks. This means that the budget it touches extends beyond interface call fees to automation, process management, and even part of labor costs. For this reason, the market's expectations for the Stateful Runtime Environment far exceed its current scale.

Aakash Gupta also argued that in 2026 and 2027, almost all enterprise roadmaps will revolve around "autonomous agent workflows" rather than one-off API calls. Companies that invest heavily in AI will increasingly buy systems that can run continuously, collaborate across tools, and maintain context over the long term.

To put it in the simplest terms: Stateless API represents the present, while Stateful Runtime Environment represents the future.

What Did Microsoft and Amazon Take, Respectively?

On the day the funding was completed, Microsoft and Amazon each announced their latest cooperation agreements with OpenAI.

Microsoft stated in its announcement that the terms of the partnership jointly announced by Microsoft and OpenAI in October 2025 would remain unchanged (the terms include OpenAI purchasing $250 billion worth of Azure services). Azure remains the exclusive cloud provider for OpenAI's Stateless API; any Stateless API calls to OpenAI models generated through cooperation with any third party (including Amazon) will be hosted on Azure; OpenAI's first-party products, including Frontier, will also continue to be hosted on Azure.

Amazon stated in its announcement that AWS and OpenAI will jointly build a Stateful Runtime Environment powered by OpenAI models and provide services to AWS customers through Amazon Bedrock, helping enterprises build generative AI applications and Agents at production scale; AWS will also become the exclusive third-party cloud distribution service provider for OpenAI Frontier; the existing $38 billion multi-year cooperation agreement between AWS and OpenAI will be expanded to $100 billion, with a term of 8 years. OpenAI will consume 2 GW of Trainium computing power through AWS infrastructure to support the needs of Stateful Runtime Environment, Frontier, and other advanced workloads; OpenAI and Amazon will also develop customized models that can be used to support Amazon's customer-facing applications.

Comparing the two announcements, the current situation becomes clear.

Microsoft, with its $250 billion agreement and exclusive service rights, has locked in the current traffic engine. Whenever OpenAI's Stateless API is called, Azure bills behind the scenes; regardless of who the customer is or which channel the call comes through, the traffic ultimately returns to Azure. This is a highly certain cash flow, but the problem is the trend toward shrinking profit margins for Stateless APIs: call volume may keep growing, yet actual profits may not hold steady in the long run.

Amazon, on the other hand, with $50 billion in real money and an agreement expanded to $100 billion, has secured for AWS the underlying hosting rights of the AI Agent era. Once Agents become the core carriers of enterprise productivity, the resources that are truly consumed over the long term (computing power, storage, scheduling systems, workflow orchestration, and cross-tool collaboration) will all accrue to AWS's runtime environment.

One controls the current cash flow; the other bets on the future structure of productivity.

OpenAI's Diversified Bet

Until that future actually arrives, no one knows whether Microsoft or Amazon has made the right choice. What is certain is that under these two agreements, with their clearly divided interests, OpenAI's leverage is growing significantly.

Over the past few years, OpenAI has been highly dependent on Microsoft for cloud infrastructure. Microsoft is not only a major shareholder holding 27% of the shares but also the party that controls the infrastructure. This tie gave OpenAI real resource advantages in its early stages, but it also meant that bargaining power naturally tilted toward Microsoft. With Amazon's forceful entry, Amazon and Microsoft are bound to compete directly for the right to serve OpenAI's future workloads.

For OpenAI, this is a typical diversified betting strategy: avoid being deeply tied to any single cloud service provider, avoid letting future growth be constrained entirely by one party, and use future business as a bargaining chip to obtain better terms.

Neither Microsoft nor Amazon can afford to give up OpenAI at present. When neither can leave the table, bargaining power naturally returns to OpenAI's hands.