Across the internet and social media, the debate between traditional finance and blockchain has been a heated one. As it stands, however, most investors and institutions now seem to accept blockchain as an equal competitor.

Just recently, digital banking giant Revolut crossed a major milestone, processing over $1.2 billion in stablecoin transfers on the Polygon network. The figure reflects real user activity, not test flows, and highlights how blockchain rails are quietly entering mainstream finance.

In fact, according to Polygon’s official report, these transactions settled in seconds and cost fractions of a cent, making them significantly cheaper than legacy systems.

Why are institutions choosing Polygon?

The economics behind this shift are hard to ignore. Revolut reportedly processed the entire $1.2 billion volume for less than $700 in total fees, demonstrating the scale advantage of blockchain-based settlements.
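To put those two numbers in proportion, here is a minimal back-of-the-envelope sketch. It takes the reported figures at face value ($1.2 billion in volume, $700 as an upper bound on total fees); the variable names are purely illustrative.

```python
# Figures as reported above; both are approximate ("over $1.2 billion",
# "less than $700"), so the results are rough estimates.
volume_usd = 1_200_000_000   # stablecoin volume processed
total_fees_usd = 700         # upper bound on total network fees

fee_rate = total_fees_usd / volume_usd       # fees as a fraction of volume
fee_bps = fee_rate * 10_000                  # same rate in basis points
fee_per_million = fee_rate * 1_000_000       # dollars of fees per $1M moved

print(f"Effective fee rate: {fee_rate:.7%}")
print(f"In basis points:    {fee_bps:.4f} bps")
print(f"Per $1M moved:      ${fee_per_million:.2f}")
```

On these inputs, the effective cost works out to roughly $0.58 for every $1 million moved, or well under a hundredth of a basis point.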

Polygon consistently offers the lowest transaction costs among major chains: up to 426x cheaper than Ethereum and 4x cheaper than Solana in many cases.

For institutions moving large capital, this difference compounds quickly. What would cost millions in traditional infrastructure can now be executed almost instantly at near-zero cost.

Traditional cross-border transfers still lag behind

Despite decades of innovation, traditional cross-border systems remain slow and expensive. Payments routed through correspondent banks over the SWIFT network can take 1–5 business days and involve multiple intermediaries.

Fees are another major drawback. Global remittance costs average around 6.49%, with banks often charging over 14% in some corridors.
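For a sense of scale, the sketch below applies those percentage fees to the $1.2 billion Revolut volume and compares the result with the roughly $700 in reported on-chain fees. This is an illustration under loud assumptions, not an estimate of what Revolut would actually have paid: remittance averages are usually quoted for small consumer transfers, and 14% is used here as a floor for the "over 14%" bank figure. All names are hypothetical.

```python
# Illustrative comparison only; rates are the article's figures applied
# uniformly to the full volume, which real-world pricing would not do.
volume_usd = 1_200_000_000
onchain_fees_usd = 700            # reported upper bound on Polygon fees

avg_remittance_rate = 0.0649      # global average remittance cost
high_bank_rate = 0.14             # floor for "over 14%" in some corridors


def cost_at_rate(amount_usd: float, rate: float) -> float:
    """Return the fee charged on amount_usd at a flat percentage rate."""
    return amount_usd * rate


avg_cost = cost_at_rate(volume_usd, avg_remittance_rate)
high_cost = cost_at_rate(volume_usd, high_bank_rate)

print(f"At 6.49%: ${avg_cost:,.0f}")
print(f"At 14%:   ${high_cost:,.0f}")
print(f"Ratio of average-rate cost to on-chain fees: {avg_cost / onchain_fees_usd:,.0f}x")
```

At the average rate, the same volume would carry fees of roughly $78 million, which is about five orders of magnitude above the reported on-chain total.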

By contrast, Polygon-based transfers eliminate intermediaries, settle in seconds, and offer 1:1 stablecoin conversions with no hidden FX spreads.

A structural shift, not a trend

Revolut’s $1.2 billion milestone is more than a headline. In fact, it’s a proof point. Institutions are no longer experimenting with blockchain; they’re deploying it at scale.

As stablecoin infrastructure matures, networks like Polygon are positioning themselves as the back end for global money movement: faster, cheaper and increasingly invisible to the end user.

Polygon’s network token is benefiting from network adoption

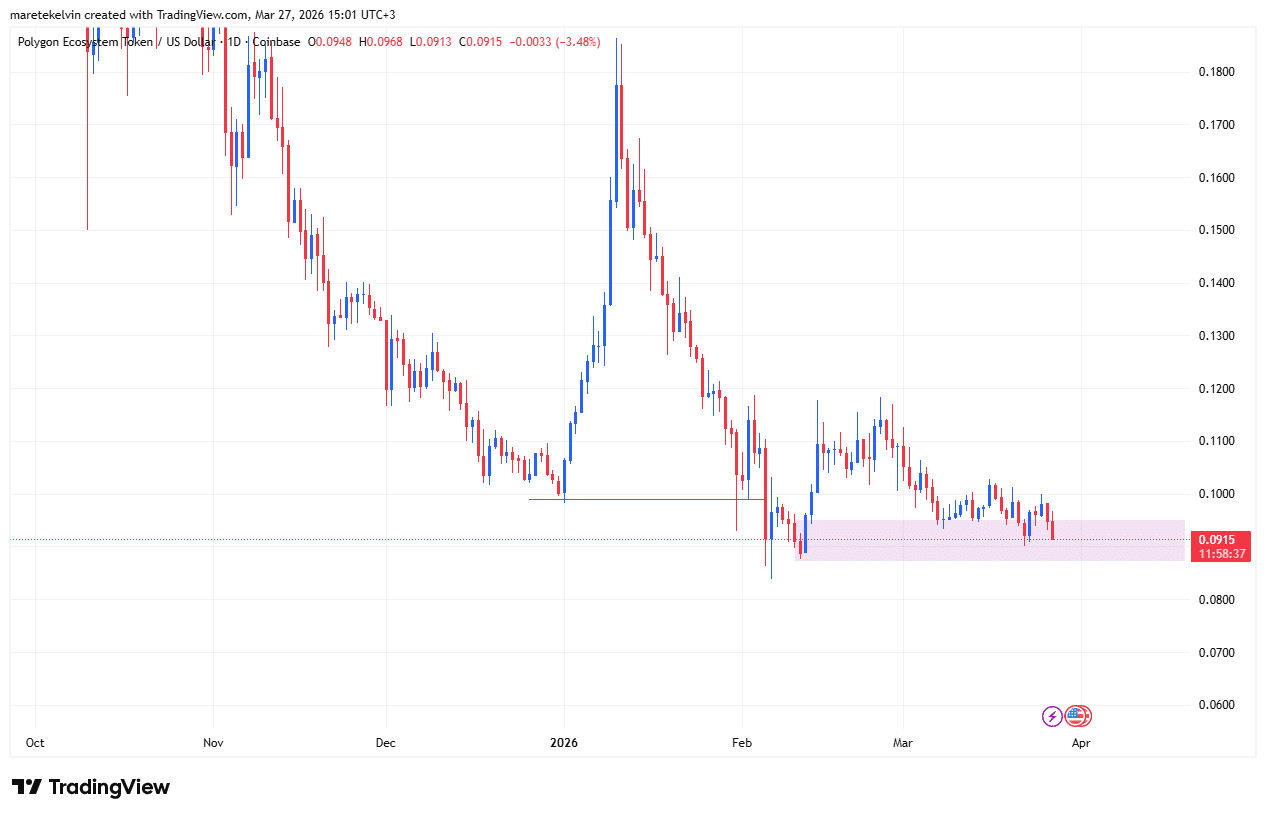

On the daily chart, POL seemed to be gaining some traction at press time, even though the token’s price has been consolidating over the last few weeks.

If the network keeps recording gains like these, the altcoin could be headed for a breakout, as long as the demand zone at around $0.095 holds.

Final Summary

- Blockchain rails like Polygon are proving significantly cheaper and faster than traditional cross-border systems at institutional scale.

- Revolut’s $1.2B volume signals a structural shift towards stablecoin-powered global payments, rather than a temporary trend.