On April 1, St. Louis Fed economists Miguel Faria-e-Castro and Serdar Ozkan published a blog post with a restrained title and a sharp conclusion: AI optimism is itself a driver of inflation. The culprit is not rising electricity bills or a chip shortage. It is the widespread belief that AI will make the future better, a belief that leads people to spend more today.

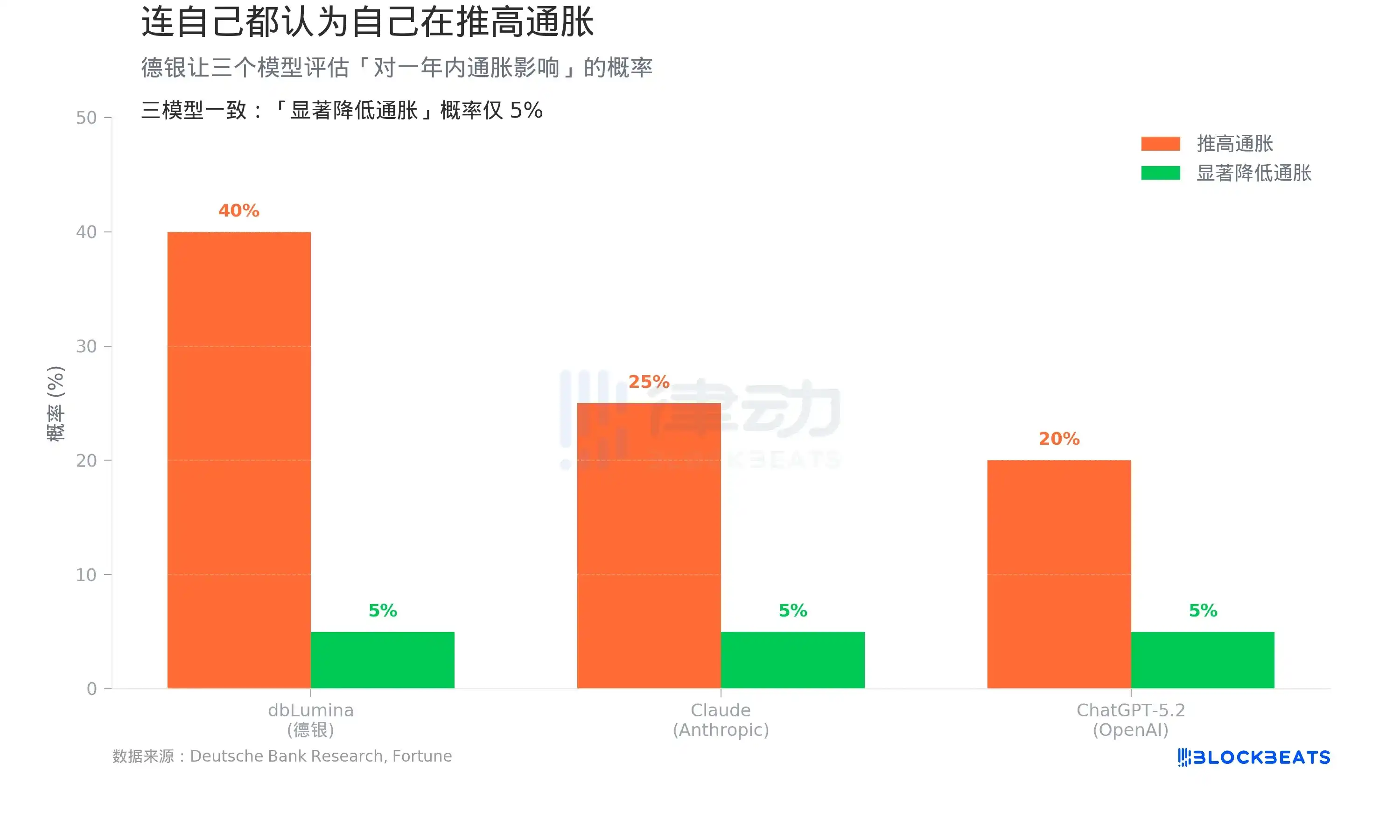

On the same day, Fortune reported on an experiment by Deutsche Bank, which asked three AI models to assess the impact of AI on inflation. The verdict: even AI thinks it is pushing up prices.

On social media, posts about soaring US prices abound.

Taken together, the two pieces point to an uncomfortable cycle: the more money flows into AI, the higher inflation runs, the further off interest rate cuts become, and the higher financing costs climb. Yet investment keeps accelerating.

The Unstoppable Arms Race

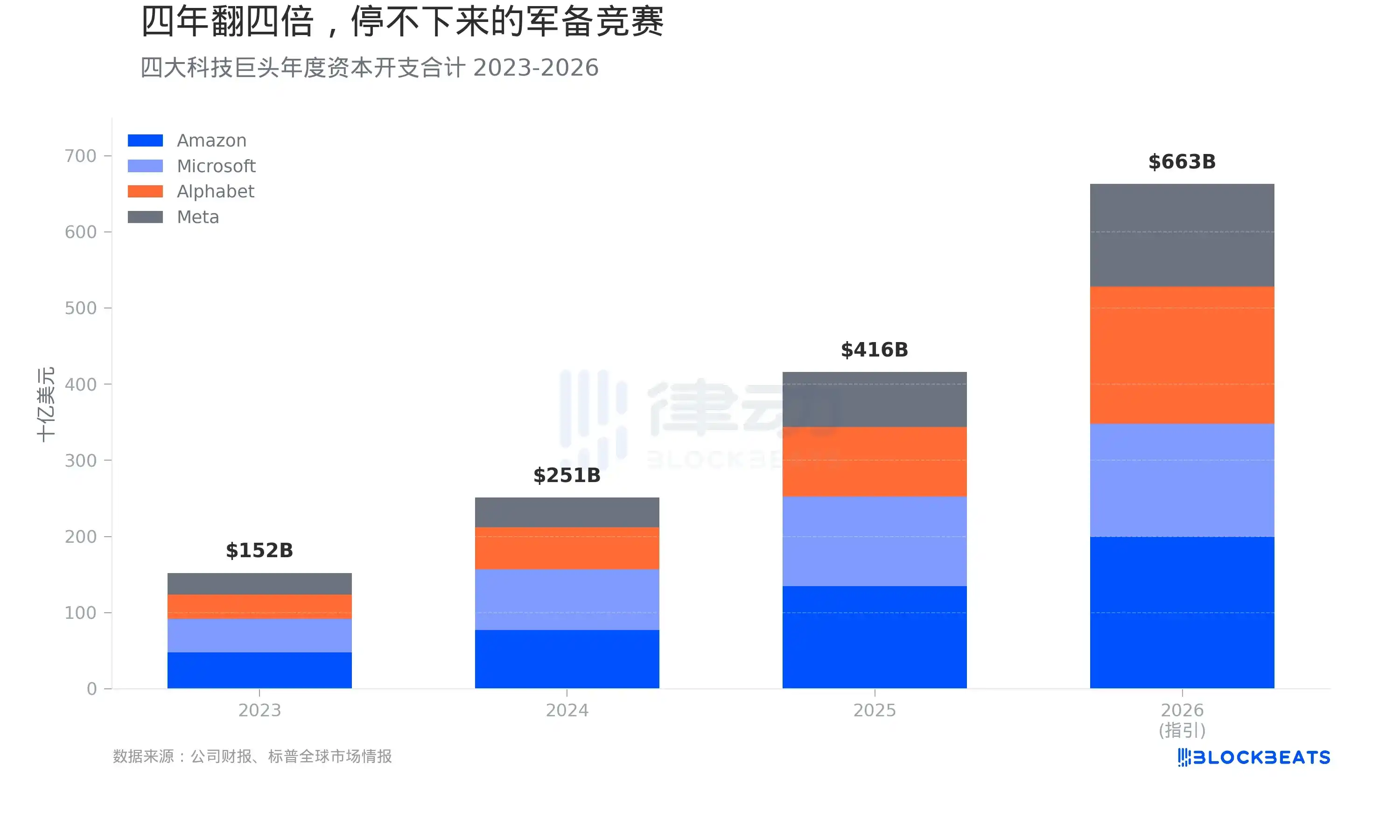

First, look at the money. According to company financial reports, the combined capital expenditures of Amazon, Microsoft, Google, and Meta in 2023 were approximately $152 billion. By 2024, this number jumped to $251 billion, a 65% increase. For the full year 2025, it settled at $416 billion, another 66% increase.
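The growth rates above can be checked with a few lines of Python. The figures are the ones quoted in this article; the helper name `yoy_growth` is illustrative.

```python
# Combined capex of Amazon, Microsoft, Google and Meta, in billions of USD,
# as quoted in the article (from company financial reports).
CAPEX_BN = {2023: 152, 2024: 251, 2025: 416}


def yoy_growth(series: dict[int, float]) -> dict[int, float]:
    """Return year-over-year growth in percent, keyed by the later year."""
    years = sorted(series)
    return {
        later: (series[later] / series[earlier] - 1) * 100
        for earlier, later in zip(years, years[1:])
    }


for year, pct in yoy_growth(CAPEX_BN).items():
    print(f"{year}: {pct:.1f}%")
```

This prints 65.1% for 2024 and 65.7% for 2025, which round to the 65% and 66% cited above.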

Company guidance for 2026 is even more aggressive. According to a summary by Wolf Street, Amazon guided for $200 billion, Google for $175 billion to $185 billion, Microsoft for $145 billion to $150 billion, and Meta for $135 billion. At the midpoints of those ranges, the four together come to about $663 billion. Adding Oracle's $42 billion brings the five-company total to roughly $700 billion.

Between 2023 and 2026, the capital expenditures of these four companies will have more than quadrupled if guidance holds. This growth rate is unprecedented in US corporate history. According to a Fortune report, this scale already exceeds Sweden's annual GDP.
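The 2026 totals depend on how the guided ranges are collapsed into single numbers. The sketch below assumes midpoints (an assumption, since the guidance summary does not say how ranges should be combined) and compares the result with the 2023 baseline of $152 billion. The constant names and the `midpoint` helper are illustrative.

```python
# 2026 capex guidance in billions of USD as (low, high), per the Wolf Street
# summary quoted above; single-point guidance is stored as (x, x).
GUIDANCE_2026_BN = {
    "Amazon": (200, 200),
    "Google": (175, 185),
    "Microsoft": (145, 150),
    "Meta": (135, 135),
}
ORACLE_2026_BN = 42
CAPEX_2023_BN = 152  # combined 2023 capex of the same four companies


def midpoint(rng: tuple[float, float]) -> float:
    """Collapse a guided (low, high) range to its midpoint (an assumption)."""
    low, high = rng
    return (low + high) / 2


big_four = sum(midpoint(r) for r in GUIDANCE_2026_BN.values())
with_oracle = big_four + ORACLE_2026_BN
multiple = big_four / CAPEX_2023_BN

print(f"Big four, midpoint guidance: ${big_four:.1f}B")  # -> $662.5B
print(f"Including Oracle: ${with_oracle:.1f}B")          # -> $704.5B
print(f"Multiple of 2023 capex: {multiple:.2f}x")        # -> 4.36x
```

Under the midpoint assumption, 2026 spending by the four companies is about 4.4 times the 2023 level, which is where "more than quadrupled" comes from.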

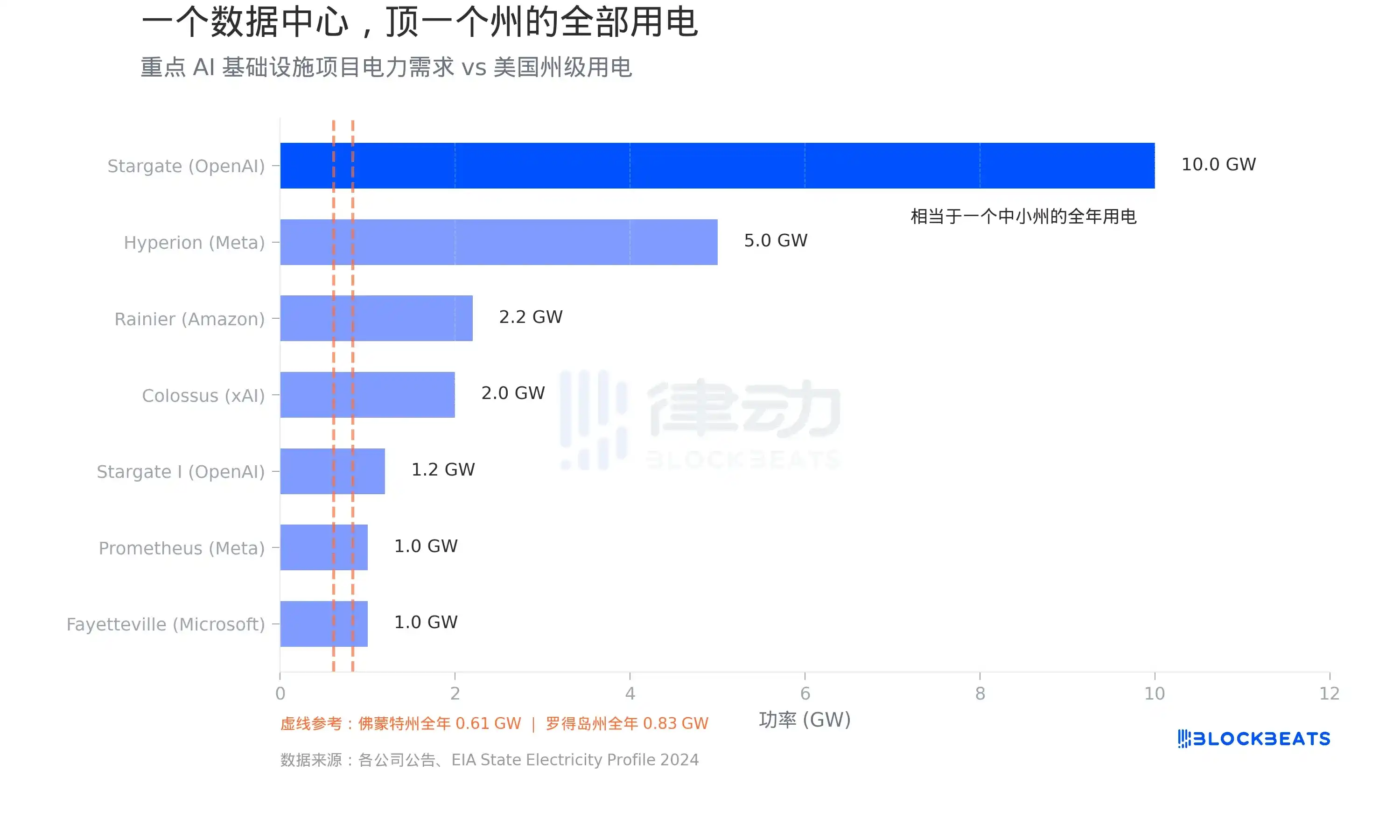

A Single Data Center Can Consume as Much Power as an Entire State

Most of this money is flowing into data centers. And the biggest bottleneck for data centers is not land, but electricity. According to EIA data, Vermont's annual electricity consumption is about 5,364 GWh, which translates to an average load of 0.61 GW. Rhode Island is slightly higher, about 0.83 GW.

Now compare the scale of the data center projects. According to company announcements, the Stargate project, a collaboration between OpenAI, Oracle, and SoftBank, has a total planned power capacity of 10 GW, roughly the average load of 16 Vermonts. Meta's Hyperion campus in Louisiana is planned for 5 GW, with an investment of $27 billion. Musk's xAI Colossus in Memphis, Tennessee, has expanded to 2 GW; according to an Introl report, it has deployed 555,000 Nvidia GPUs at a cost of about $18 billion. Amazon and Anthropic's joint Project Rainier in Indiana is planned for 2.2 GW.
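The Vermont comparison converts annual energy use into an average continuous load by dividing by the 8,760 hours in a year. The minimal Python sketch below applies that conversion to the projects named above. Like the article, it sets planned capacity against average load, which implicitly assumes each campus runs at full capacity around the clock, so the ratios are best read as upper bounds. Constant names are illustrative.

```python
HOURS_PER_YEAR = 8760  # non-leap year

VERMONT_ANNUAL_GWH = 5364  # EIA figure cited in the article


def average_load_gw(annual_gwh: float) -> float:
    """Convert annual energy use (GWh) into an average continuous load (GW)."""
    return annual_gwh / HOURS_PER_YEAR


# Planned capacity (GW) of the projects named in the article.
PROJECTS_GW = {
    "Stargate": 10.0,
    "Hyperion": 5.0,
    "Rainier": 2.2,
    "Colossus": 2.0,
}

vermont_gw = average_load_gw(VERMONT_ANNUAL_GWH)
print(f"Vermont average load: {vermont_gw:.2f} GW")
for name, gw in PROJECTS_GW.items():
    # Capacity vs. average load: an upper-bound comparison, since campuses
    # rarely draw their full planned capacity continuously.
    print(f"{name}: {gw / vermont_gw:.1f} Vermonts")

# Implied all-in cost per GPU at Colossus (USD), from the Introl figures.
cost_per_gpu = 18e9 / 555_000
print(f"Colossus cost per GPU: ${cost_per_gpu:,.0f}")
```

Stargate works out to about 16.3 Vermonts, and the Colossus figures imply roughly $32,000 per GPU, a number that covers everything in the $18 billion, not just the chips.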

According to S&P Global data, US data centers consumed 183 TWh of electricity in 2024, accounting for over 4% of the nation's total electricity consumption. By 2030, this number is expected to triple.
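"Triple by 2030" implies a steep compound growth rate. The sketch below assumes smooth compounding from the 2024 base over six years; that is an assumption, since S&P Global's projected path need not be smooth. The `implied_cagr` helper is illustrative.

```python
BASE_YEAR, TARGET_YEAR = 2024, 2030
BASE_TWH = 183.0       # S&P Global: US data-center consumption in 2024
GROWTH_MULTIPLE = 3.0  # "expected to triple" by 2030


def implied_cagr(multiple: float, years: int) -> float:
    """Constant annual growth rate that compounds to `multiple` over `years`."""
    return multiple ** (1 / years) - 1


years = TARGET_YEAR - BASE_YEAR
cagr = implied_cagr(GROWTH_MULTIPLE, years)
print(f"{BASE_TWH * GROWTH_MULTIPLE:.0f} TWh by {TARGET_YEAR}, "
      f"about {cagr:.1%} growth per year")
```

Tripling in six years means roughly 20% growth every year, taking consumption to about 549 TWh.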

This power demand is not a distant projection; it is already straining existing grids and data center capacity. According to a CBRE report, the vacancy rate for North American data centers fell from 3.3% in the first half of 2023 to a record low of 1.6% in the first half of 2025. Cushman & Wakefield data put the vacancy rate at 3.5% in the second half of 2025 (the two firms' figures are not strictly comparable), but that reading reflects a large wave of newly delivered capacity rather than easing demand. Absolute levels remain near historical lows, and meaningful supply relief is unlikely before 2030.

Even AI Itself Says It's Pushing Up Inflation

While these investments are boosting demand, pushing up electricity prices, and causing chip shortages, there is also a less visible inflation channel.

According to a Fortune report on April 1, a team led by Matthew Luzzetti, Deutsche Bank's chief US economist, ran an experiment: they asked Deutsche Bank's in-house model dbLumina, Anthropic's Claude, and OpenAI's ChatGPT-5.2 each to estimate the "probability that AI will push up inflation in the next year."

The results: dbLumina said 40%, Claude 25%, and ChatGPT-5.2 20%. All three put the probability of "AI significantly reducing inflation" at just 5%.

The three models cited strikingly similar inflation drivers: the massive expansion of data centers, soaring semiconductor demand, and the rapidly growing power consumption of AI workloads. All of these are demand-pull price pressures.

This runs counter to the view, held by some Wall Street investors, that AI will be deflationary. As the Deutsche Bank team wrote in their research report: "Will AI be a major deflationary force? Even AI itself doesn't think so."

Over a five-year horizon, the models did lean toward more deflationary outcomes, but they still placed "AI causing large-scale deflation" in tail-risk territory.

Optimism Itself Is Inflationary

The St. Louis Fed paper provides a theoretical framework to explain all of this.

Faria-e-Castro and Ozkan use a standard macroeconomic model and treat the AI investment boom as a "news shock." As the Fed blog post explains, the model's logic runs as follows: when households see AI described as a revolutionary technology, they expect their future income to rise and bring consumption forward. Firms expect productivity gains and ramp up investment. Together, these push demand ahead of supply. In the paper's words: "These forces together generate an inflationary surge in aggregate demand, a core feature of the initial phase of a news shock."

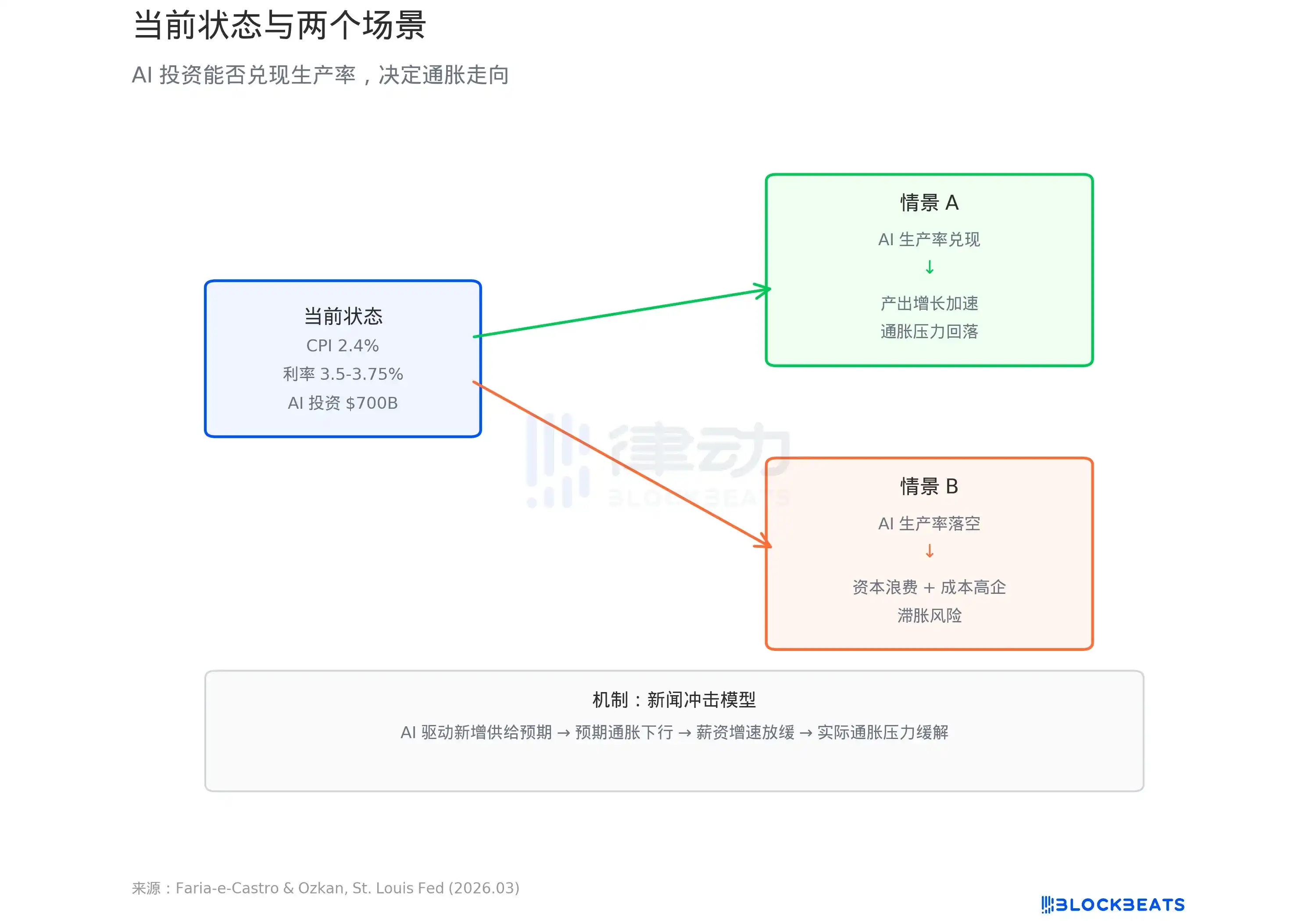

The model lays out two paths. If AI does deliver a productivity leap, the short-term inflation is absorbed by long-run output growth and the economy enters a virtuous cycle. If the productivity gains fail to materialize, the result is what the paper calls "persistent low growth and stubborn high inflation," that is, stagflation.
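To make the two paths concrete, here is a deliberately crude toy in Python. It is not the Faria-e-Castro and Ozkan model; it swaps in a one-line Phillips-curve rule, inflation = target + KAPPA × (demand − supply), and every parameter (`KAPPA`, `EXPECTED_GAIN`, `ARRIVAL_PERIOD`, `PULL_FORWARD`) is hypothetical, chosen only to make the mechanism visible.

```python
from dataclasses import dataclass

# Toy parameters: all hypothetical, not taken from the St. Louis Fed paper.
TARGET_INFLATION = 2.0  # percent
KAPPA = 0.5             # inflation points per point of excess demand
EXPECTED_GAIN = 4.0     # productivity gain (% of output) everyone expects from AI
ARRIVAL_PERIOD = 3      # period in which the gain would show up in supply
PULL_FORWARD = 0.5      # share of the expected gain spent in advance


@dataclass
class Period:
    t: int
    demand: float
    supply: float
    inflation: float


def simulate(gain_materializes: bool, periods: int = 6) -> list[Period]:
    """Toy news shock: demand reacts to the news at t=0, supply only later, if ever."""
    path = []
    for t in range(periods):
        # Supply (potential output, index = 100) rises only if the gain arrives.
        arrived = gain_materializes and t >= ARRIVAL_PERIOD
        supply = 100 + (EXPECTED_GAIN if arrived else 0.0)
        # Before arrival, agents spend part of the expected gain in advance.
        # If the gain never arrives, they keep spending on the belief
        # (a strong simplifying assumption that produces the stagflation path).
        demand = 100 + (EXPECTED_GAIN if arrived else PULL_FORWARD * EXPECTED_GAIN)
        inflation = TARGET_INFLATION + KAPPA * (demand - supply)
        path.append(Period(t, demand, supply, inflation))
    return path


for label, ok in (("productivity arrives", True), ("productivity never arrives", False)):
    print(label)
    for p in simulate(ok):
        print(f"  t={p.t}: demand={p.demand:.1f} supply={p.supply:.1f} "
              f"inflation={p.inflation:.1f}%")
```

In the first path, inflation runs at 3% until the gain arrives and then falls back to the 2% target as supply catches up. In the second, supply never grows and inflation stays at 3%: flat output with above-target inflation, the toy's version of stagflation.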

According to data cited in the Fed blog post, the annualized growth rate of US Total Factor Productivity (TFP) since the release of ChatGPT has been 1.11%, lower than the historical average of 1.23%. So far, AI has left no mark on productivity data.

Meanwhile, according to BLS data, US CPI rose 2.4% year-on-year in February 2026 and core CPI 2.5%, both still above the Fed's 2% target (which the Fed formally defines in terms of PCE inflation). The Fed's March dot plot shows a median projection of 3.4% for the year-end federal funds rate, implying only one rate cut this year.

Roughly $700 billion is pouring into AI infrastructure. Whether this money turns out to be a source of inflation or the prelude to a productivity revolution depends on a question no one can yet answer: will the models running in these data centers actually make the economy more efficient?