Author: Claude, Deep Tide TechFlow

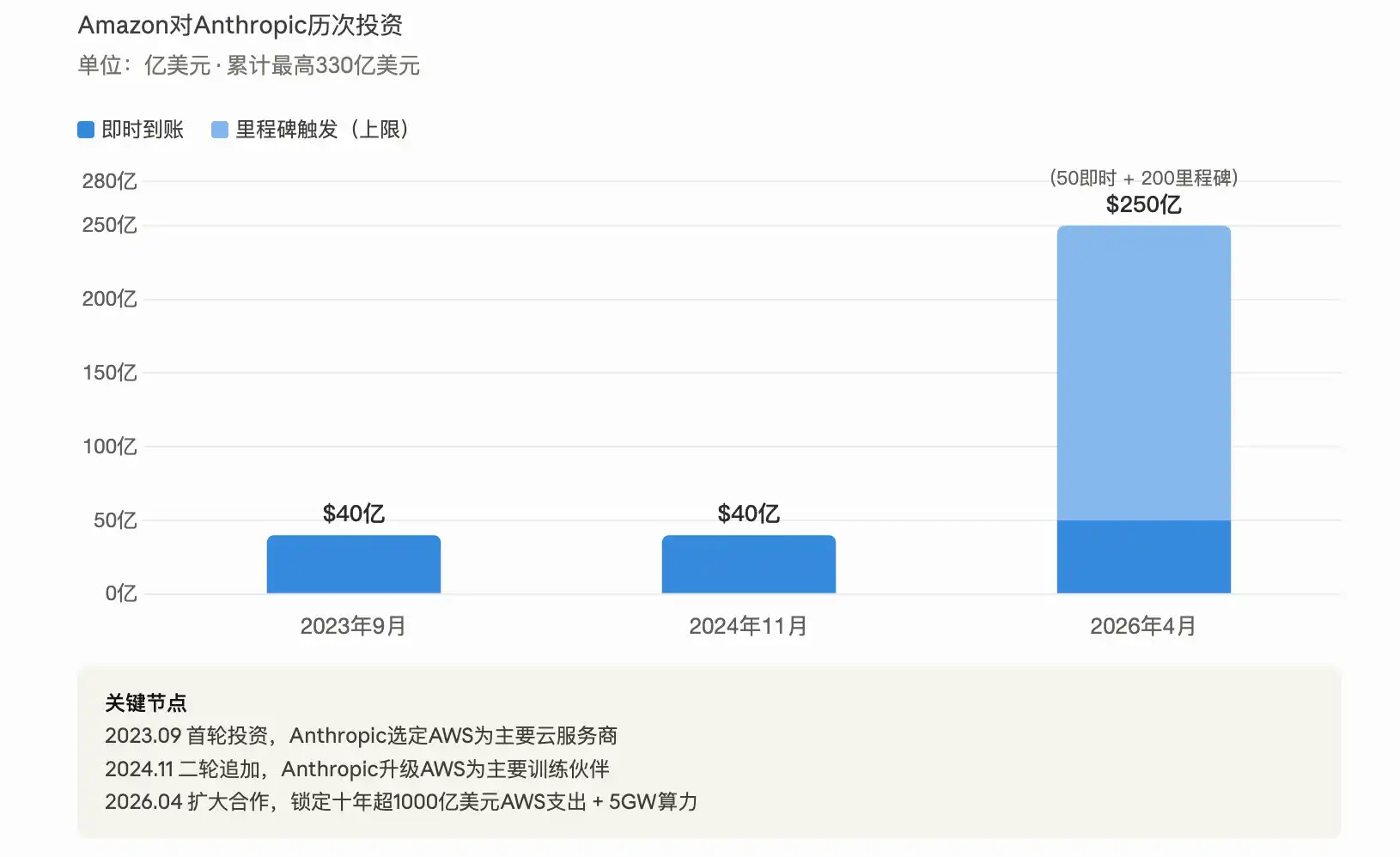

Deep Tide Guide: Amazon announced on Monday an additional investment of up to $25 billion in Anthropic (with $5 billion immediately available), securing a commitment from the latter for over $100 billion in AWS spending over the next decade.

This is Amazon's second investment worth tens of billions of dollars in a leading AI lab within two months; it had just committed up to $50 billion to OpenAI.

Anthropic's annualized revenue has exceeded $30 billion, but computing power bottlenecks are hampering the user experience; the core goal of this deal is to resolve the capacity crisis.

Amazon is placing bets on both of the AI field's top labs simultaneously, and the stakes are getting larger.

According to reports from CNBC, Bloomberg, and other media outlets on April 20, Amazon announced an additional investment of up to $25 billion in Anthropic, with $5 billion available immediately and the remaining $20 billion tied to specific business milestones. This investment is executed at Anthropic's $380 billion valuation from its Series G financing in February of this year. Combined with the previous cumulative investment of $8 billion, Amazon's total investment commitment to Anthropic now reaches a cap of $33 billion.
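The arithmetic behind these figures can be checked with a short sketch. The amounts are the ones reported above, in billions of US dollars; the function and variable names are illustrative only and do not come from any announcement.

```python
# Figures in billions of USD, as reported above.
PRIOR_INVESTMENT = 8     # Amazon's cumulative investment before this deal
IMMEDIATE_TRANCHE = 5    # available immediately
MILESTONE_TRANCHE = 20   # tied to specific business milestones


def deal_summary(prior, immediate, milestone):
    """Return the new commitment, the total cap, and the immediate share."""
    new_commitment = immediate + milestone
    return {
        "new_commitment": new_commitment,
        "total_cap": prior + new_commitment,
        "immediate_share": immediate / new_commitment,
    }


summary = deal_summary(PRIOR_INVESTMENT, IMMEDIATE_TRANCHE, MILESTONE_TRANCHE)
print(summary)
```

On these numbers, only one fifth of the new commitment is available up front; the rest depends on milestones that the reports do not specify.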

Two months ago, Amazon had just invested $50 billion in OpenAI, Anthropic's main competitor, and reached a cloud services agreement of a similar scale. Amazon CEO Andy Jassy stated in an announcement that Anthropic's commitment to running its large language models on AWS Trainium for up to ten years "reflects the progress we have made in the field of custom chips."

Following the news, Amazon's stock price rose approximately 2.5% in after-hours trading.

$100 Billion Cloud Commitment for 5 Gigawatts of Compute Power, Responding to OpenAI's 'Insufficient Compute' Allegations

The core of this deal is not just equity investment but a deeply binding infrastructure agreement.

Anthropic has committed to investing over $100 billion in AWS technology over the next decade, covering Amazon's custom AI chips Trainium (from Trainium2 to Trainium4 and future generations) and tens of millions of Graviton CPU cores. In exchange, Anthropic will receive up to 5 gigawatts of computing capacity for training and deploying Claude models. According to Anthropic's blog disclosure, the company currently uses over 1 million Trainium2 chips to train and serve Claude and plans to put nearly 1 gigawatt of Trainium2 and Trainium3 capacity into operation by the end of 2026.

This expansion in computing scale directly responds to recent public attacks from OpenAI. OpenAI's Chief Revenue Officer, Denise Dresser, claimed in an internal memo last week that Anthropic made a "strategic error by failing to secure sufficient computing power" and predicted that OpenAI would have 30 gigawatts of computing power by 2030, while Anthropic would have only 7 to 8 gigawatts by the end of 2027. In its announcement that day, Anthropic frankly admitted that demand for Claude from enterprises and developers is accelerating, and consumer usage has seen a "sharp increase," putting "inevitable pressure" on infrastructure and affecting reliability and performance during peak periods.

Anthropic CEO Dario Amodei said in a statement: "Users tell us that Claude is becoming increasingly important to their work, and we need to build infrastructure to keep up with the rapidly growing demand."

Amazon Writes Tens-of-Billions Checks to Two AI Labs in Two Months

Amazon's investment strategy is now very clear: bet on both top players in the AI race simultaneously.

In February of this year, Amazon announced an investment of up to $50 billion in OpenAI, also accompanied by a $100 billion AWS cloud service commitment. The structure of the deal with Anthropic is almost identical—$25 billion in investment plus a lock on over $100 billion in cloud spending. According to GeekWire, Amazon is executing the "same playbook" for both labs.

The two major AI companies are also racing to prove their strength to investors. According to CNBC, both Anthropic and OpenAI are preparing for potential IPOs that could land as early as this year. OpenAI's latest funding round valued it at over $850 billion, while Anthropic is valued at $380 billion. Anthropic claims its annualized revenue has exceeded $30 billion (approximately $9 billion at the end of 2025), while OpenAI's memo alleged that this figure was inflated by about $8 billion because Anthropic accounted for revenue from cloud partnerships with Amazon and Google on a gross rather than net basis.

Microsoft is also betting on both sides—it had already invested over $13 billion in OpenAI and in November 2025 invested up to $5 billion in Anthropic, which committed to purchasing $30 billion in Azure computing power.

Claude Platform Integrates with AWS, Battle for Over 100,000 Customers

Beyond investment, integration at the product level is also deepening.

According to the announcement, the native Claude platform will be directly embedded into AWS. Users will be able to access the full Claude console through their existing AWS accounts, permission controls, and billing systems, without needing additional registration or new contracts. This goes a step further than the previous offering of Claude services through the Amazon Bedrock marketplace. Amazon disclosed that over 100,000 organizations are currently running Claude models on Amazon Bedrock.

Anthropic also emphasized in its blog that Claude is the only frontier AI model simultaneously available on all three major global cloud platforms (AWS Bedrock, Google Cloud Vertex AI, Microsoft Azure Foundry). This multi-platform strategy allows enterprise customers to flexibly choose their deployment path based on needs and is also one of Anthropic's differentiated advantages in competing with OpenAI.

On the customer side, after Lyft used Claude via Amazon Bedrock to power its customer service AI assistant, its average customer service resolution time fell by 87%. Pfizer uses Claude to help scientists perform voice searches in drug development documents, saving approximately 16,000 hours of retrieval time per year.

AI Infrastructure Race: Amazon's Capital Expenditure Expected to Reach $200 Billion This Year

The broader backdrop to this deal is the AI infrastructure arms race among cloud computing giants.

Amazon stated in February that it expects capital expenditure to reach approximately $200 billion in 2026, with the vast majority directed toward AI infrastructure. The previously co-developed Project Rainier (a super-large-scale computing cluster with nearly 500,000 Trainium2 chips) was once one of the world's largest AI computing clusters, which Anthropic is using to train and deploy current and future versions of Claude.

Earlier this month, Anthropic also expanded its cooperation with Google and Broadcom, locking in computing power on the scale of "several gigawatts," expected to come online starting in 2027. Combined with this 5-gigawatt agreement with Amazon, Anthropic is expanding its computing power reserves across multiple lines simultaneously.

Amazon's custom chip business itself is also accelerating. Jassy recently revealed that the business's annualized revenue has exceeded $20 billion, double the $10 billion reported earlier this year, and described the business as "on fire."