On the afternoon of May 6, NVIDIA announced an investment in Corning. The amount wasn't exceptionally large, $500 million. But the contract stipulated that it could be increased to $3.2 billion in the future. Corning's stock price rose 14% that day.

What's more intriguing is the structure of this deal. Of the 18 million equity warrants Corning granted to NVIDIA, 3 million carry an exercise price of $0.0001, which means those 3 million shares were essentially a gift to NVIDIA. That same afternoon, at an investor meeting in New York, Corning raised its 2030 revenue target to $40 billion.

But this isn't the most unusual part of Corning's recent months. The company's first-quarter earnings report stated that in the past few months, two more unnamed companies had each signed a multi-year contract worth $6 billion with Corning. "More," because Corning had only recently signed a contract of the same scale with Meta.

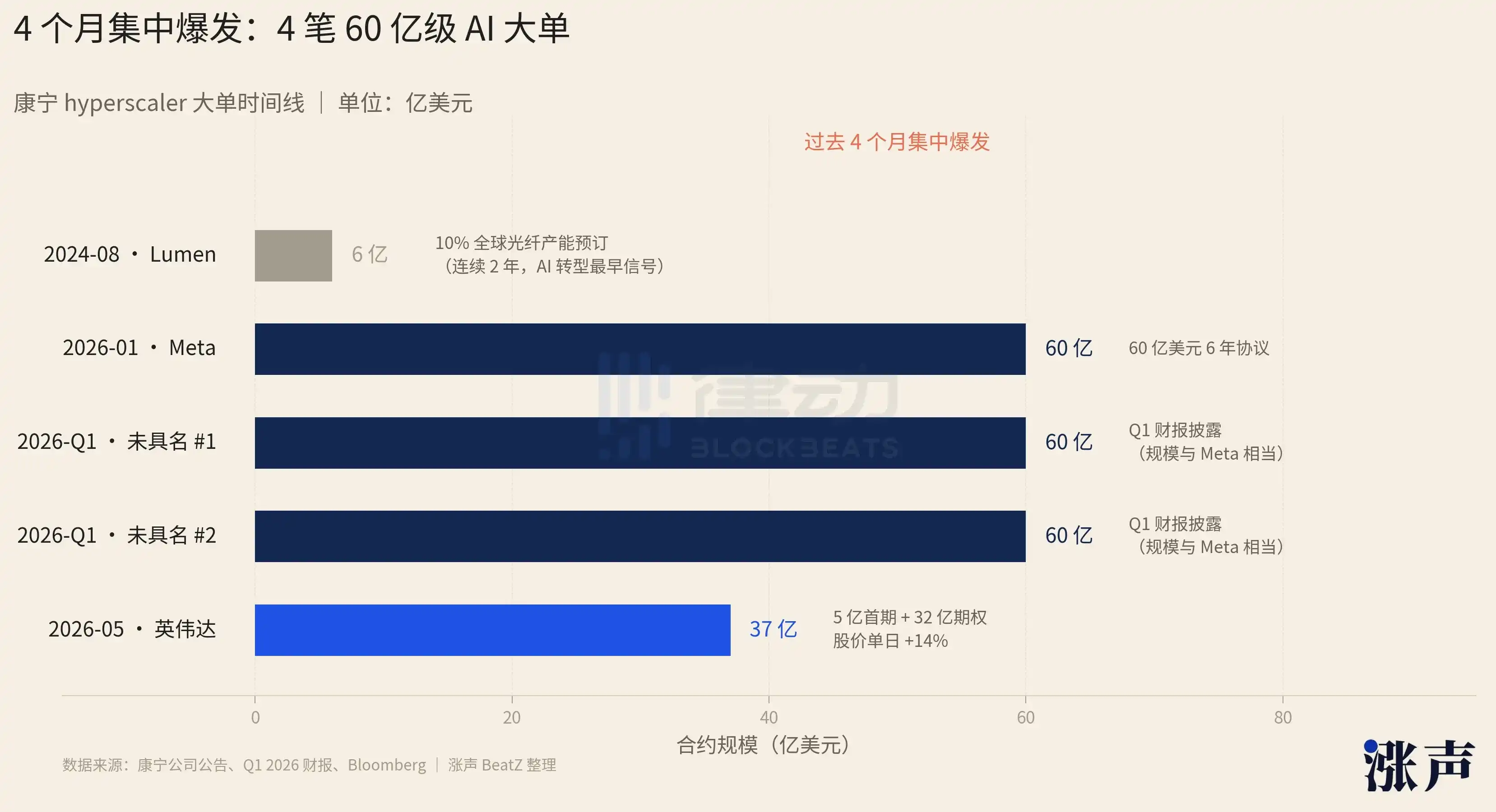

Count them up, and you'll find that in the past 4 months, at least 4 AI orders, each worth billions of dollars, have landed at this 174-year-old glass company. Over the past 6 months, Corning's stock price has risen 140%, and compared to two years ago, it has increased fivefold.

From Selling Phone Glass to the Darling of AI Factories

If you're reading this article on a phone, the glass covering your screen most likely was produced by Corning. Since the first-generation iPhone in 2007, Corning's Gorilla Glass has almost become the default choice for premium smartphone screens globally. But being a "phone glass supplier" is just one facet of Corning, and not the most profitable one.

Corning's Gorilla Glass production line, Source: Apple

The company was founded in 1851. It made the glass shell for Edison's first incandescent light bulb and, in the 1970s, invented low-loss optical fiber from scratch, creating the entire modern optical fiber industry. The 2007 iPhone glass marked its third major business transformation. Today's Corning is undergoing its fourth transformation, with optical communications becoming the true locomotive driving its business.

Corning's optical communications business has a history of over 50 years, but its customer structure has undergone a complete reversal in the last two years.

For a long time, Corning's optical fiber was primarily sold to telecommunications operators like AT&T and Verizon. These companies used it to build fiber-to-the-home networks and 4G/5G base stations. In 2009, Corning launched a data center cabling solution called EDGE, formally adding data center operators to its customer list. Over the past decade-plus, with the explosion of mobile internet, the proliferation of cloud services, and the surge in remote work during the pandemic, Corning's optical communications business grew steadily but never became the major revenue driver.

In November 2022, OpenAI launched ChatGPT to the public. From that moment on, data centers worldwide began redesigning their physical infrastructure for the new computational task of AI training. The fiber density required for AI training is unprecedented in any previous era.

The earliest sign appeared in August 2024. A US telecommunications operator named Lumen booked 10% of Corning's global fiber optic capacity in one go, for two consecutive years. This was the earliest public signal of Corning's business transitioning towards the AI domain.

By early 2026, the aforementioned four $6+ billion contracts burst onto the scene. Corning had collaborated with data center operators for 15 years, but the shift from "secondary client" to "absolute mainstay" happened only in the past 24 months.

The direct effect of this customer flip is written in Corning's financial reports. Corning's full-year revenue for 2023 fell 11% year-over-year, during an industry downturn. But by 2025, full-year revenue had surged to $15.6 billion, a 19% increase. In the first quarter of this year, revenue grew another 18% year-over-year. The most explosive growth was in optical communications, which grew 35% over the full year. Optical communications' share of total revenue rose from 30% in 2020 to 37% in 2025. The absolute change is more striking: from $2 billion five years ago to $6.3 billion in 2025, more than tripling.

This leap from "secondary business" to "locomotive" isn't accidental; it's driven by a growth plan led by CEO Wendell Weeks. This plan has an internal codename: Springboard.

Two years ago, Corning was described by Wall Street analysts as a "boring glass manufacturer," categorized as a mature, low-growth dividend stock. But less than two years into the Springboard plan, Corning's stock price has risen from just over $30 at the beginning of 2024 to $162, a fivefold increase in two years, with a 140% surge in the past six months alone. The glass factory has transformed into the "nervous system of the AI revolution."

Springboard was first announced in September 2024. The starting point was the annualized revenue level in the fourth quarter of 2023, around $13 billion. The initial goal was to increase annualized revenue by more than $3 billion by the end of 2026, achieving an overall operating profit margin of 20%.

However, over the next year and a half, this target was raised three times in a row, to $6.5 billion in added annualized revenue, which would bring annualized revenue to roughly $20 billion by the end of 2026. Following NVIDIA's investment in Corning on May 6, the company raised its internal revenue target for 2030 to $40 billion. Meanwhile, Corning hit its 20% profit margin target a year early, in the fourth quarter of 2025.

The key to the Springboard plan lies in premium pricing. The company's sales grew by 18%, but earnings per share grew by 46%, so profit grew about 2.5 times as fast as sales. On the business side, Corning mainly did three specific things:

First, it aggressively raised prices for mature businesses. Corning's display glass is already a mature, non-growth business. But at the end of 2024, Corning raised prices on this line by over 10% and locked in the yen exchange rate until 2030. The result was that this line stably contributes $900-950 million in net profit annually, even in a depreciating yen environment, maintaining a net profit margin of 25%.

Second, it upgraded its optical communications products. In full-year 2025, optical communications sales increased by 35%, but net profit increased by 71%. This means optical communications not only sold more, but each fiber earned more profit.

Third, it utilized idle capacity. Corning did not build large-scale new factories but restarted capacity that was idle during previous cyclical downturns, raising the company's overall gross margin from 33% in 2024 to 36% in 2025.

Of course, the ability to raise prices means someone is willing to pay. The ability to earn more from product upgrades means someone is willing to pay more for the upgraded products. The essence of Springboard driving Corning's profit growth faster than its revenue is that its customer structure now includes a group willing to pay a premium.
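The gap between sales growth and profit growth described above can be checked with a few lines of arithmetic. The sketch below uses only the growth rates quoted in this article; the helper names `leverage` and `margin_change` are illustrative, and treating the two growth rates as directly comparable is a simplifying assumption.

```python
# Growth figures quoted in the article (percent, year over year).
company = {"sales_growth": 18.0, "profit_growth": 46.0}  # profit = earnings per share
optical = {"sales_growth": 35.0, "profit_growth": 71.0}  # profit = segment net profit


def leverage(segment):
    """Profit growth divided by sales growth (a rough leverage ratio)."""
    return segment["profit_growth"] / segment["sales_growth"]


def margin_change(segment):
    """Relative change in profit per dollar of sales implied by the two rates."""
    sales_factor = 1 + segment["sales_growth"] / 100
    profit_factor = 1 + segment["profit_growth"] / 100
    return profit_factor / sales_factor - 1


print(f"company leverage: {leverage(company):.2f}x")
print(f"optical leverage: {leverage(optical):.2f}x")
print(f"optical profit per sales dollar: {margin_change(optical):+.1%}")
```

Under these assumptions, the company-wide ratio lands near the 2.5x figure cited above, and the optical segment's profit per dollar of sales rises by roughly a quarter, which is what "each fiber earned more profit" means in numbers.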

Everyone is Scrambling for Fiber

The AGI race and order demand have made every data center operator intensely anxious about time.

The core business of cloud giants has always been "renting IT to enterprises." Companies like Netflix, Airbnb, and Uber, which rose with the mobile internet, generate mostly "north-south" traffic. A user opens an app from outside, the request is sent to a server in the cloud, and the server returns data. Servers occasionally communicate with each other, but the volume and frequency aren't high. This network structure doesn't place stringent demands on the underlying physical infrastructure: Ethernet works, copper cables work, ordinary fiber works. Cloud giants have used this architecture for over a decade—stable, reliable, and profitable.

That all started to change with the launch of ChatGPT.

In the following years, almost all cloud giants began to engage in training themselves. Microsoft is the primary compute provider for OpenAI, AWS is deeply tied to Anthropic, and Alibaba trains Tongyi. The core business of cloud giants is shifting from "renting IT to enterprises" to "training AI for the world."

But the chain reaction triggered by this shift at the physical infrastructure level has surpassed all the accumulated knowledge of the past 20 years.

The traffic characteristic of AI training is "east-west." Training a large model might require tens of thousands of GPUs to communicate with each other simultaneously, synchronizing computed gradients. If any single connection is slightly slower, the entire training phase waits for it—tens of thousands of GPUs become "cars stopped at an intersection." Therefore, the latency and bandwidth requirements for east-west traffic are dozens of times greater than those for past north-south traffic.
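The "cars stopped at an intersection" effect can be sketched in a few lines. This is a toy model, not a description of any real training framework: it assumes each step ends only when the slowest connection finishes, and the cluster size and timings are hypothetical numbers chosen for illustration.

```python
def step_time_ms(link_times_ms):
    """A synchronous step completes only when the slowest link has finished."""
    return max(link_times_ms)


NUM_GPUS = 10_000  # hypothetical cluster size
BASE_MS = 1.0      # hypothetical sync time on a healthy link
SLOW_MS = 5.0      # hypothetical sync time on one degraded link

links = [BASE_MS] * NUM_GPUS
healthy_ms = step_time_ms(links)

links[0] = SLOW_MS  # degrade a single connection
degraded_ms = step_time_ms(links)

# Every other GPU sits idle for the difference on each step.
idle_gpu_ms = (degraded_ms - healthy_ms) * (NUM_GPUS - 1)

print(f"healthy step: {healthy_ms} ms, degraded step: {degraded_ms} ms")
print(f"idle GPU time per step: {idle_gpu_ms} GPU-ms")
```

In this model, one slow link out of ten thousand sets the pace for the whole cluster, which is why operators pay so much attention to the consistency of every connection rather than to average speed.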

Prior to this, most high-speed connections within data centers were copper cables. Copper is cheap, easy to install, and stable, and it has long been the default choice for data centers. But the physical layout of AI training clusters is exactly what copper handles worst. Tens of thousands of GPUs are distributed across dozens of racks, often separated by tens of meters, making copper connections impractical. Fiber has no such distance limit at these lengths.

Overnight, the previously sufficient sparse networks became inadequate. Cloud giants need to re-lay fiber, denser than ever before.

The scale of this re-laying is already reflected in their capital expenditures. In 2026, the combined capital expenditure of the world's six largest cloud giants is projected to exceed $600 billion. The number of operational hyperscale data centers globally has reached 1,297, nearly triple the number at the beginning of 2018. In 2026 alone, over 150 new data centers are expected to be added, corresponding to AI infrastructure spending exceeding $400 billion.

Market research firms estimate that the total fiber demand for AI clusters is 10 to 100 times that of traditional cloud services. This is the fundamental reason Corning can now secure four $6+ billion mega-orders.

Between data centers and between racks, all fiber must run through conduits or cable trays. These are typically plastic or metal pipes with inner diameters of 2 to 4 inches, either buried underground or running along racks. Their key characteristic is that once they are laid, it is very difficult to add more. Burying an additional conduit between cities means reapplying for right-of-way and digging up the road again, a process measured in years. Adding another conduit in an already operational server room means downtime for renovation, measured in months.

Conduit about to be buried underground, Source: Internet

What Corning has specifically done for AI data centers over the past two years is to enable existing conduits to hold more fiber without increasing their number.

Besides making the fiber itself thinner, Corning changed the fiber arrangement from loose "spaghetti-style" strands to flat ribbons that can be rolled up. They are flattened when needed and rolled up when not, then densely packed into the conduit. Originally, a 2-inch conduit could hold a little over a thousand fibers. Corning's new design can pack in over three thousand, at least doubling the count. A 4-inch conduit with six such cables side by side can hold over twenty thousand fibers, more than six times the traditional design.

Corning's rollable fiber ribbon, Source: Corning

It's not just about packing more; termination is also less labor-intensive. A 3,456-fiber cable, using traditional methods, would require over 200 man-hours to connect strand by strand. Corning's ribbon design can reduce this to under 40 hours, and cable preparation time is reduced by 30%. Remember, the US already faces a shortage of optical communication engineers.
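The capacity and labor figures above can be combined into a quick back-of-the-envelope check. The sketch assumes that the "over three thousand" fiber cable is the same 3,456-fiber cable mentioned in the termination example, and it treats "over 200" and "under 40" hours as exactly 200 and 40, so the result is a conservative bound rather than a measured value.

```python
FIBERS_PER_CABLE = 3_456     # from the termination example; assumed to be the same cable
CABLES_PER_4IN_CONDUIT = 6   # "six such cables side by side"
TRADITIONAL_HOURS = 200      # article says "over 200 man-hours"
RIBBON_HOURS = 40            # article says "under 40 hours"

fibers_in_conduit = FIBERS_PER_CABLE * CABLES_PER_4IN_CONDUIT
hours_saved = TRADITIONAL_HOURS - RIBBON_HOURS
reduction = hours_saved / TRADITIONAL_HOURS

print(f"fibers in a 4-inch conduit: {fibers_in_conduit:,}")
print(f"termination hours saved per cable: at least {hours_saved}")
print(f"labor reduction: at least {reduction:.0%}")
```

Six 3,456-fiber cables give 20,736 fibers, consistent with the "over twenty thousand" figure, and the termination work per cable drops by at least 80% under these assumptions.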

In the construction of a large AI factory, every month of delay means massive GPU depreciation and postponed training tasks, costing hundreds of millions on the books. For a product that can cut months of time and millions in engineering costs, paying a 30% to 70% premium on the fiber itself is an absolute bargain.

Jensen Huang's "Unprecedented Scale"

On May 8, NVIDIA CEO Jensen Huang emphasized again in an interview that next-generation AI infrastructure requires massive optical connectivity, and copper wires can no longer meet the demand. He also stated that NVIDIA needs to expand the application of optical technology on an unprecedented scale.

The details of the investment deal with Corning indeed reveal this "unprecedented scale." Among the 18 million equity warrants, 3 million were "given away." This structure is rare in NVIDIA's ecosystem investments over the past year, indicating that NVIDIA secured significant equity exposure in Corning immediately without using cash. It's more like a signing bonus for a long-term partnership agreement.

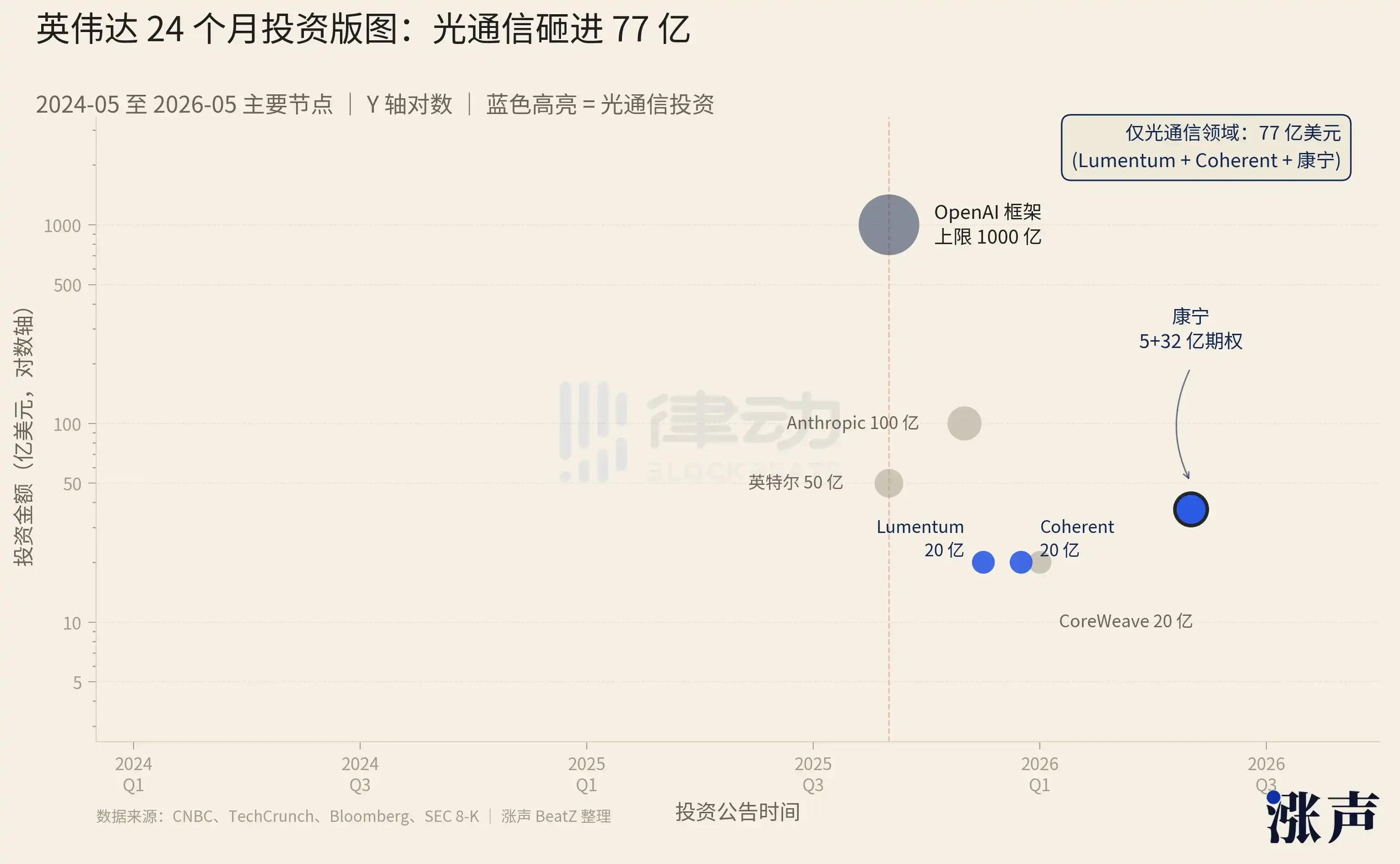

And Corning isn't the only piece NVIDIA has bet on. Since last September, NVIDIA has entered a new investment rhythm. First, the scale has grown larger. Second, the structure frequently uses financial instruments like "frameworks," "options," and "prepaid warrants" to lock in commitments first and then fulfill them in stages. Besides the $100 billion investment framework for OpenAI, NVIDIA has successively invested billions to tens of billions of dollars in AI infrastructure companies like Anthropic, Intel, and CoreWeave.

The most easily overlooked is its investment along the optical communication chain. Besides Corning, NVIDIA invested $2 billion each in Lumentum and Coherent, two of the world's largest optical component companies. Counting the $500 million initial investment in Corning plus the $3.2 billion option, NVIDIA has poured approximately $7.7 billion into the optical communication niche alone.

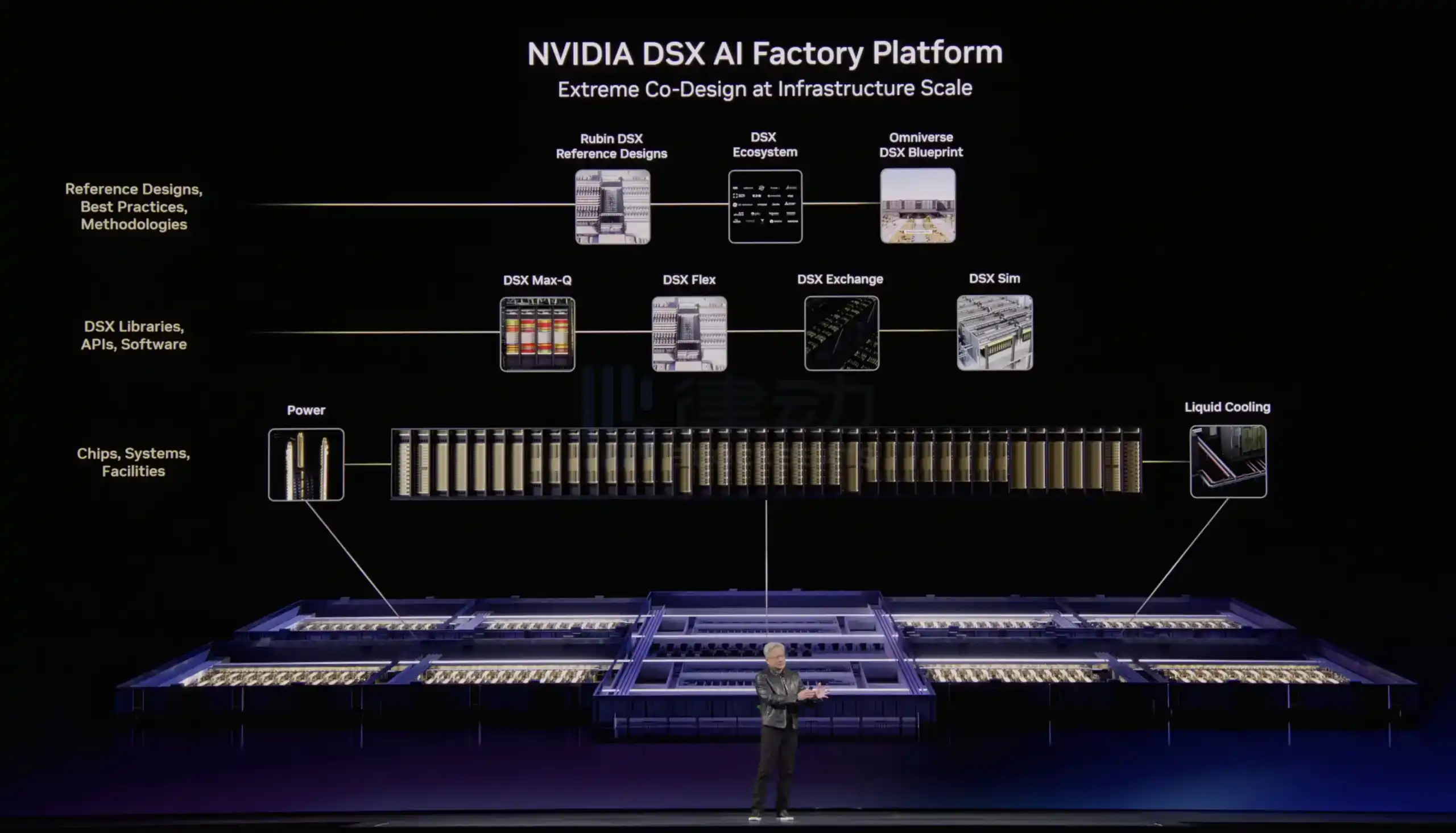

Laid out in a table, this investment list is essentially an AI factory construction checklist: compute, networking, optics, power, cooling, software, customers, models. At every layer, NVIDIA has locked in at least one key supplier. At this year's GTC conference, NVIDIA packaged this full-stack integration into a publicly available blueprint, releasing a hardware reference architecture called Vera Rubin DSX and a digital twin solution called Omniverse DSX Blueprint. The whole thing is basically the "construction blueprint for an AI factory."

A gigawatt-scale AI factory (roughly the electricity use of 1 million households) takes 18 to 24 months from planning to operation and requires coordination among over 100 suppliers. Previously, data center operators handled this themselves, each redoing interface verification. NVIDIA's Omniverse DSX systematizes this process. All partner products have been validated in NVIDIA's digital twin, with parameters aligned and interfaces standardized. Cloud giants can simply purchase according to NVIDIA's blueprint.

Jensen Huang unveiling the AI factory blueprint platform at GTC 2026, Source: NVIDIA

This marks NVIDIA's critical step from a chip company to an "AI factory general contractor." Increased integration expands profit margins. Even if AMD or Broadcom developed a GPU with equivalent performance tomorrow, replicating this supply chain coordination capability from chips to fiber to power grids would take at least several more years.

Therefore, the true meaning behind NVIDIA's $3.2 billion option for Corning is locking in a key player for the "localized optical communication capacity" slot in its AI factory blueprint. Of course, at present, NVIDIA is the only one capable of drawing this blueprint.