Author: Byron Gilliam

Original Title: Jobpocalypse now?

Compiled and Edited by: BitpushNews

Even during the good times at the investment bank where I used to work, it always felt like another round of layoffs was just around the corner—partly, I think, because management had no idea how many people they actually needed.

I worked on the sales and trading floor, where there was a revenue number at the end of every day: client commissions, minus trading losses (or, occasionally, plus trading profits). So you might think it would be easy to quantify who contributed what and who caused the losses.

But it wasn't.

The commission paid on a trade could be credited, in part or in full, to the research analyst who spoke to the client, the salesperson, or the sales trader—or to the trader who took the other side of the trade (that was me at the time!).

No one really knew why a client chose to trade with us, so there was no way to attribute each commission to a specific person, and no way to figure out who was truly essential to the business.

To paraphrase department store magnate John Wanamaker, half the payroll was probably wasted; the trouble was that nobody knew which half.

The only way to find out was to fire some people and see what happened.

It feels like something similar is about to play out at companies everywhere, because it's not just investment banks that face this dilemma.

When work was primarily in agriculture and manufacturing, measuring employee productivity was easy: just count how many apples they picked or how many parts they produced.

However, when most people started working in offices, things became much more difficult.

"Knowledge work is not defined by quantity," wrote Peter Drucker. "Nor is knowledge work defined by its cost. Knowledge work is defined by its results."

Employers didn't know how to measure these results—what is the unit of output for a day of meetings, phone calls, and internal memos?

So they measured time instead: employees were required to be in the office for eight hours a day in exchange for pay, and employers hoped they would get eight hours of work done in those eight hours.

Time became a proxy for output.

But what happens when everyone works from home?

If employers can't measure their employees by their time in the office, they have to measure their output instead.

This is a good thing. "Emphasizing output rather than activity is the key to increasing productivity," Peter Drucker wrote in 1967.

But employers never really figured out how to do it.

Now, artificial intelligence (AI) is forcing employers to try again. Large language models can handle many time-consuming tasks, so employers are starting to rethink what they pay their employees to do.

I'm not sure they'll do any better than the bank I worked for. But the AI narrative is putting immense pressure on companies to find ways to increase productivity, so much so that many will simply lay people off and see what happens.

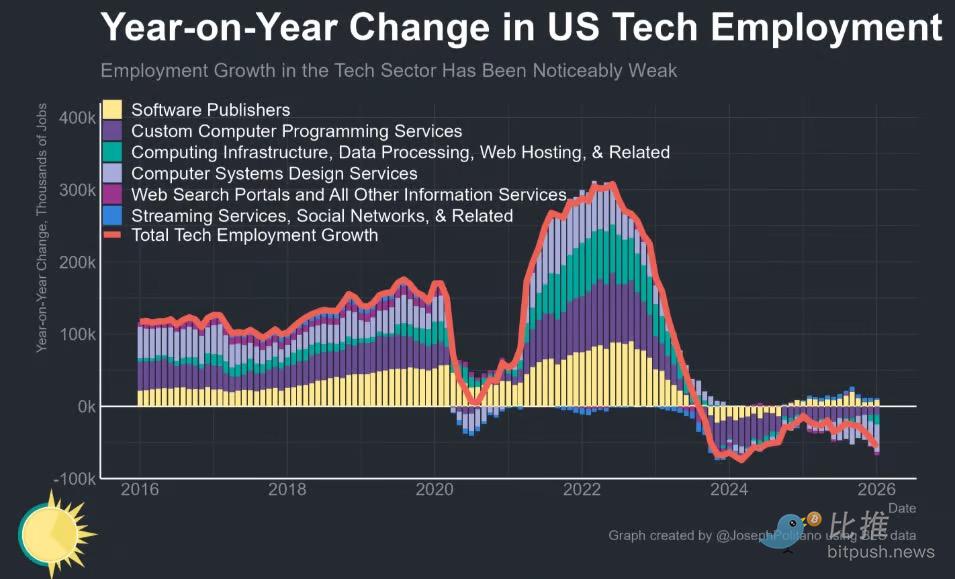

Data released on March 6 suggests this may have already begun: the U.S. Bureau of Labor Statistics reported that tech-industry employment fell by 12,000 jobs last month and by 57,000 over the past year.

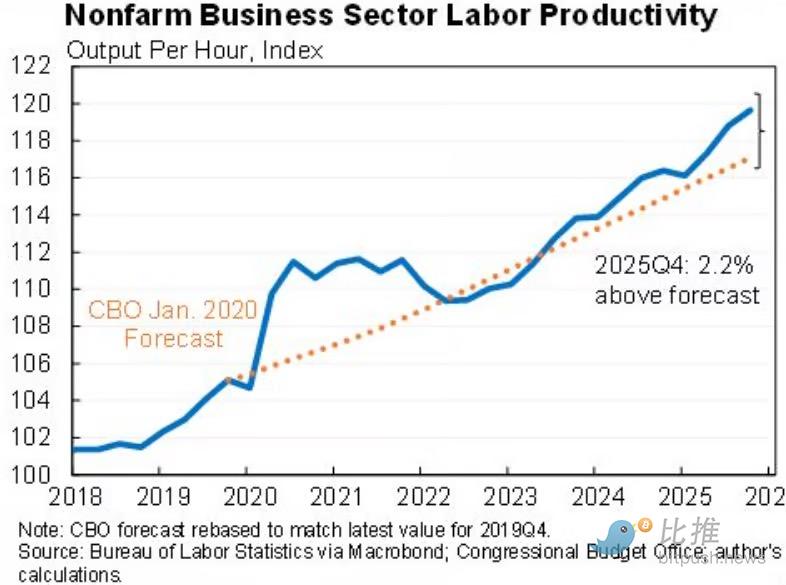

Strong productivity data was also released this week, and some economists see it as the first sign that companies are starting to use AI productively.

So, companies might soon be able to do more with fewer people.

But they might also just be doing more.

A new paper in the Harvard Business Review found that "AI doesn't reduce work; it just makes work more intense."

In an eight-month study of work practices at a tech company, the authors found that AI led employees to work at a faster pace, take on a wider range of tasks, and stretch their working hours across more of the day.

"Many people send prompts to AI while eating lunch, in meetings, or while waiting for files to load. Some described sending 'one last quick prompt' before leaving their desk so the AI could keep working while they walked away."

That sounds good for employers looking to squeeze more value out of their employees. And this part sounds even better: "Employees are increasingly absorbing work that previously might have required additional staff or headcount."

But the researchers issued a warning to employers:

"What appears to be higher productivity in the short term may mask a quiet creep in workload and growing cognitive strain as employees juggle multiple AI-driven workflows. Because the extra effort is voluntary and often described as 'fun to try,' it's easy for leaders to overlook how much extra load employees are actually taking on. Over time, overwork can impair judgment, increase the likelihood of errors, and make it harder for organizations to distinguish genuine productivity gains from unsustainable work intensity."

If that's the case, companies might soon find they need more people, not fewer.

At least, that's what IBM's head of HR anticipates. Nickle LaMoreaux told Bloomberg that cutting early-career hiring might save money in the short term but risks creating a shortage of mid-level managers later on.

Therefore, IBM plans to triple its entry-level hiring. "That's right," LaMoreaux said, "for the very jobs everyone says AI can do."

The investment bank I worked for was always hiring between rounds of layoffs—constantly churning through staff in an attempt to figure out who actually did what.

The entire U.S. economy might soon be doing the same.

Let's look at the charts.

This morning's jobs report was "brutal" for the tech industry. Losing 57,000 jobs in the past year is "almost as bad as the worst of the 2024 tech slump, and significantly worse than during the 2008 or 2020 recessions."

The tech industry is just the tip of the iceberg. Looking at the entire U.S. economy, employers announced 48,307 layoffs in February, according to outplacement and executive coaching firm Challenger, Gray & Christmas. That's down 55% from the 108,435 announced in January and down a sharp 72% from the 172,017 announced in the same month last year.

The cumulative total of layoff announcements for January and February is 156,742, the lowest start to a year since 2022 (when only 34,309 cuts were announced in the first two months). Even so, it is still the fifth-highest January and February total of any year from 2009 to the present.

In other words, the wave of layoffs has eased compared with both the start of this year and the same period last year, but by historical standards it is still not low. Things aren't going to get easier for workers anytime soon.
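For readers who want to check the math, the Challenger, Gray & Christmas percentages and the two-month total can be recomputed from the monthly counts quoted above. This is a minimal Python sketch; the variable names are illustrative labels, and the only inputs are the figures already cited in the text.

```python
# Recompute the Challenger, Gray & Christmas arithmetic quoted in the text.
# The only inputs are the announced U.S. job cuts cited in the article.

def pct_change(new: int, old: int) -> float:
    """Return the percentage change from `old` to `new` (negative = decline)."""
    return (new - old) / old * 100


feb_this_year = 48_307    # announced cuts, February
jan_this_year = 108_435   # announced cuts, January
feb_last_year = 172_017   # announced cuts, February of last year
jan_feb_2022 = 34_309     # January + February total in 2022

two_month_total = jan_this_year + feb_this_year

print(f"February vs. January:       {pct_change(feb_this_year, jan_this_year):.1f}%")
print(f"February vs. last February: {pct_change(feb_this_year, feb_last_year):.1f}%")
print(f"January + February total:   {two_month_total:,}")
print(f"Higher than the 2022 start: {two_month_total > jan_feb_2022}")
```

Rounded to whole numbers, the two declines come to 55% and 72%, and the two months sum to 156,742, consistent with the figures in the report.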

Too many leaders?

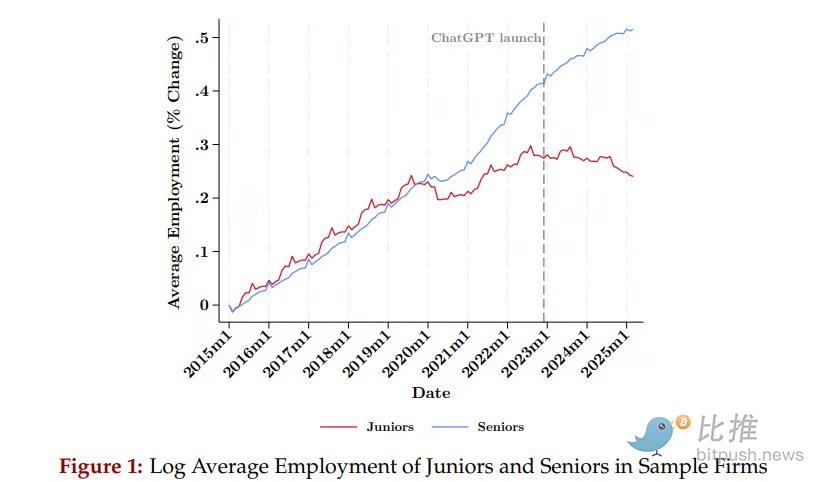

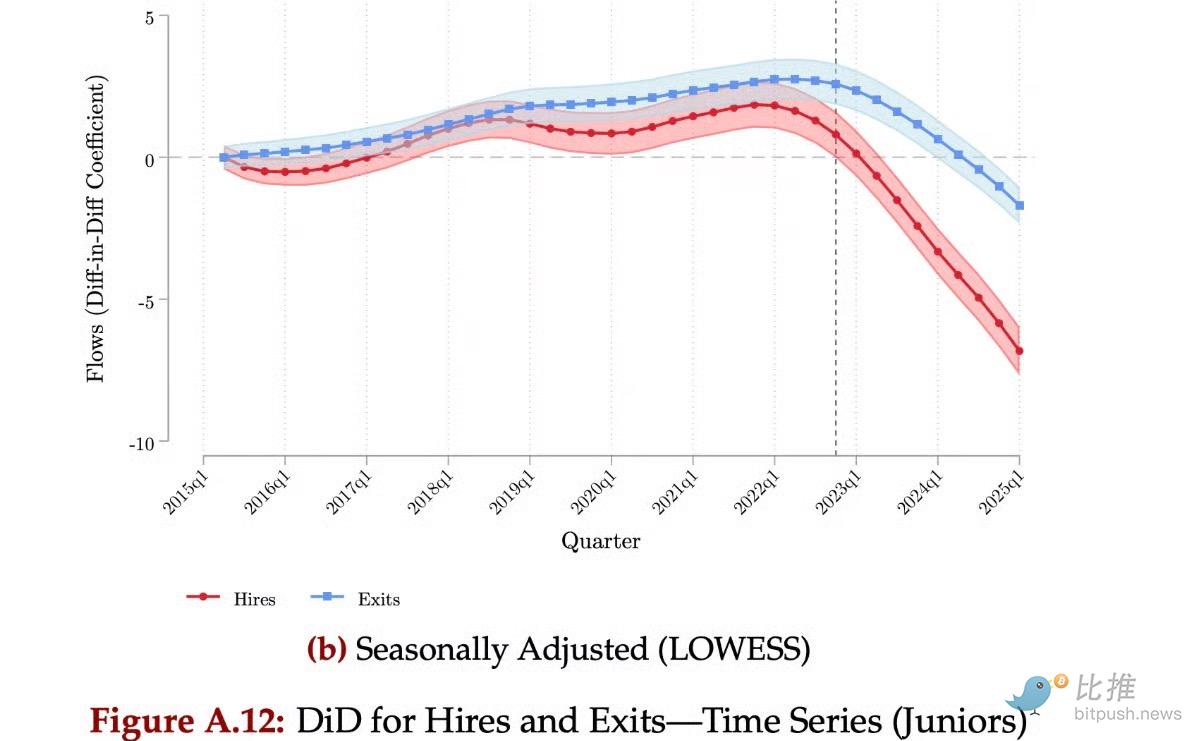

An academic paper found that generative AI is creating a "seniority-biased technological change" in the employment landscape, one that is particularly severe for junior employees. This isn't just happening in tech: the study analyzed resume data from 285,000 employers.

Hiring recession:

The same study explains that the decline in junior-level employment is "achieved entirely through a decline in hiring."

The AI effect:

Websites people have long turned to for buying advice, like Wired and Tom's Guide, have seen a plunge in traffic. We now ask chatbots directly, and the bots get their information from the very websites they are crowding out of the market.

Or is it AI?

Applied AI professor Alex Imas noted that this week's productivity data "shows signs" that companies are already benefiting from AI.

All talk?

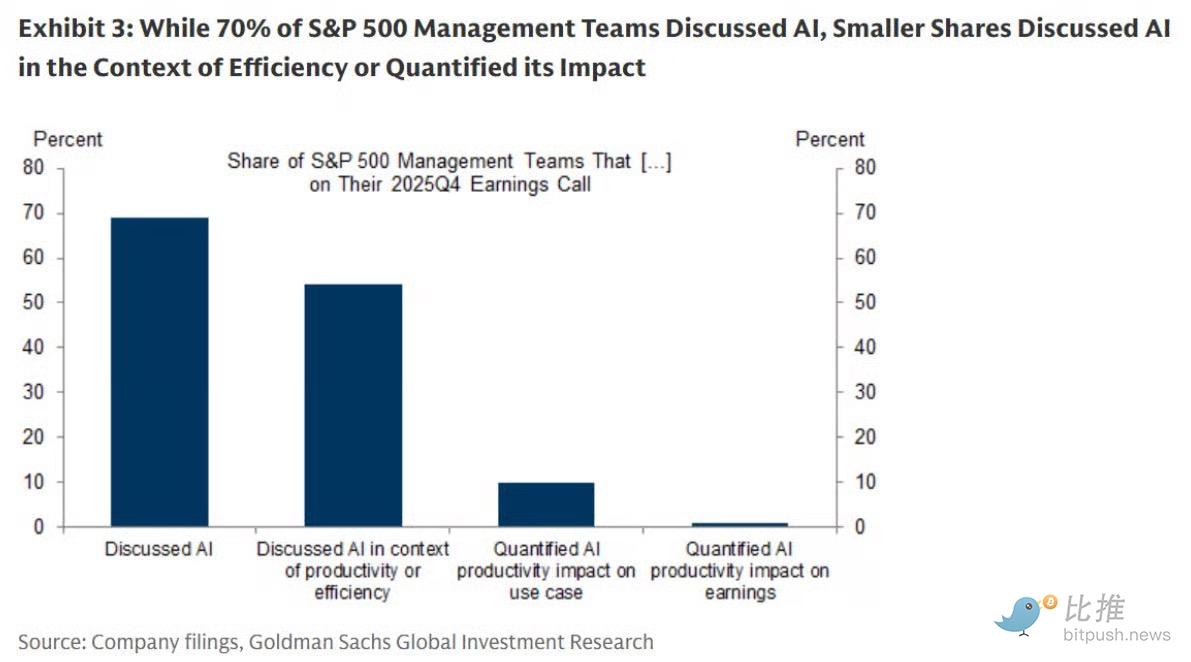

Data from Goldman Sachs (via Callum Williams) shows that while 70% of companies are talking about AI, only 10% can explain how it helps their business, and only 1% can quantify its impact on earnings.

Work is always changing:

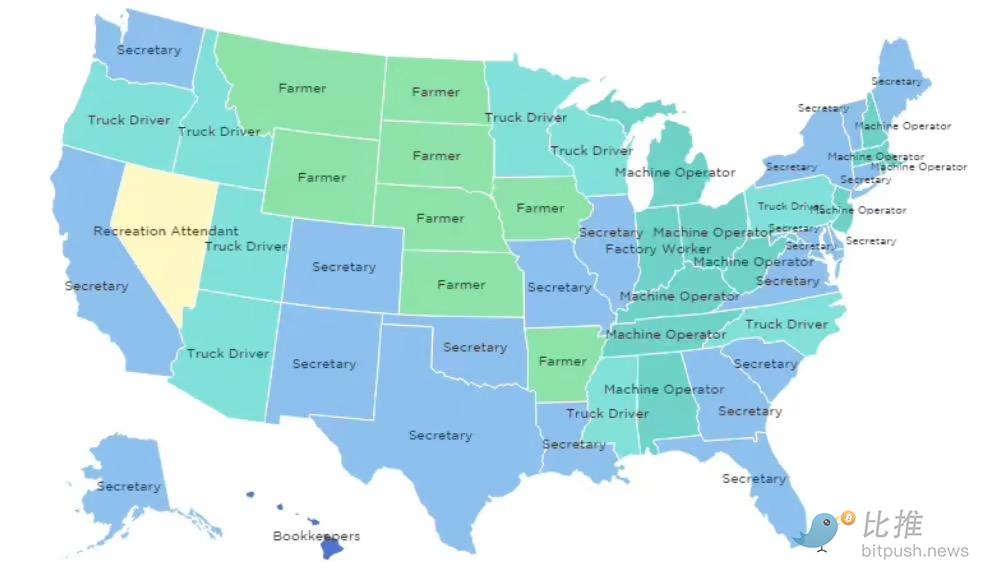

Tech journalist Roland Mansop mapped the most common jobs by state in the 1980s and found that "secretary" was the most common job in 19 U.S. states.

What AI can and cannot do:

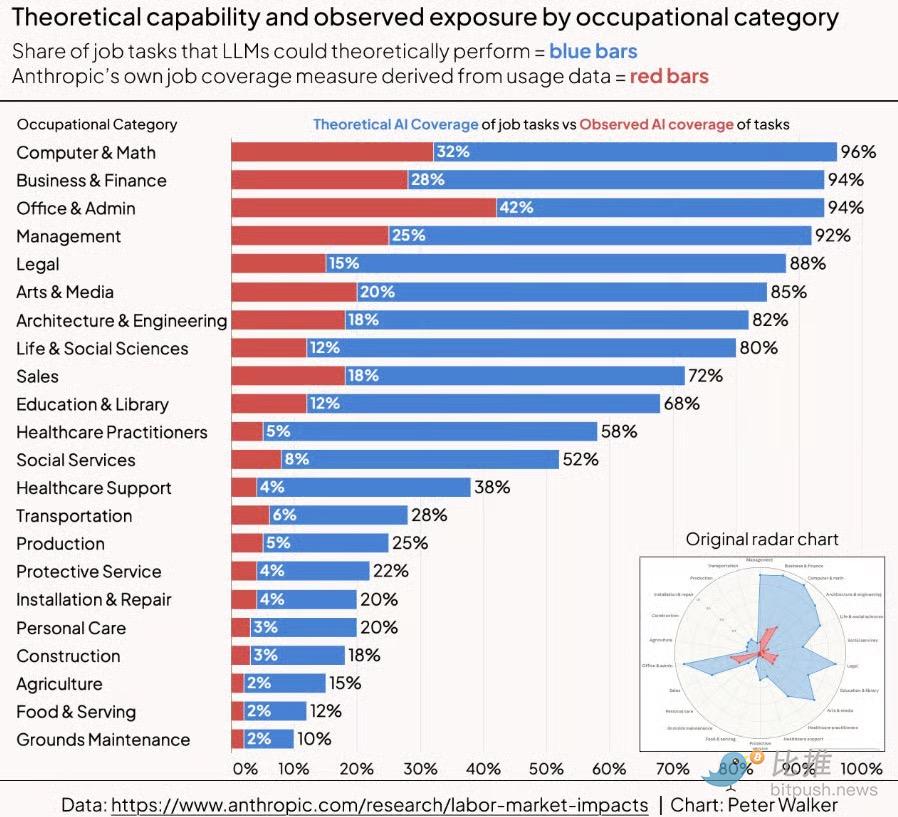

Peter Walker reorganized data from Anthropic showing what portion of each occupation AI could theoretically perform (blue) and how much it currently actually performs (red).

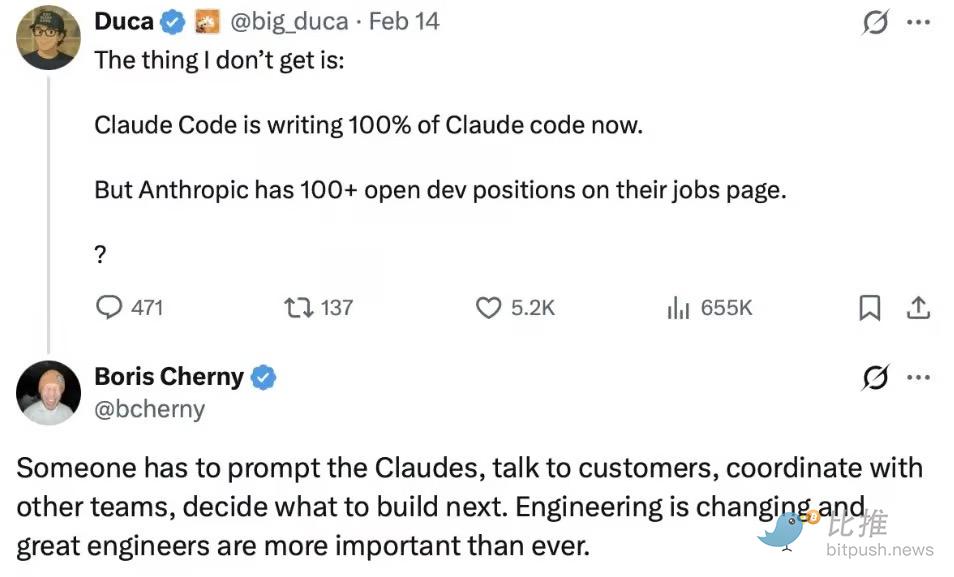

This next question is a good one!

In a reply on X, Boris Cherny, who works on Claude Code, explained that all the code Claude is writing is creating new work that only humans can do.

Annual salary: $405,000–$485,000.

These are a few of Anthropic's job openings and their salaries. The code is writing code, but someone has to tell the code what code to write, and that's a high-paying job.

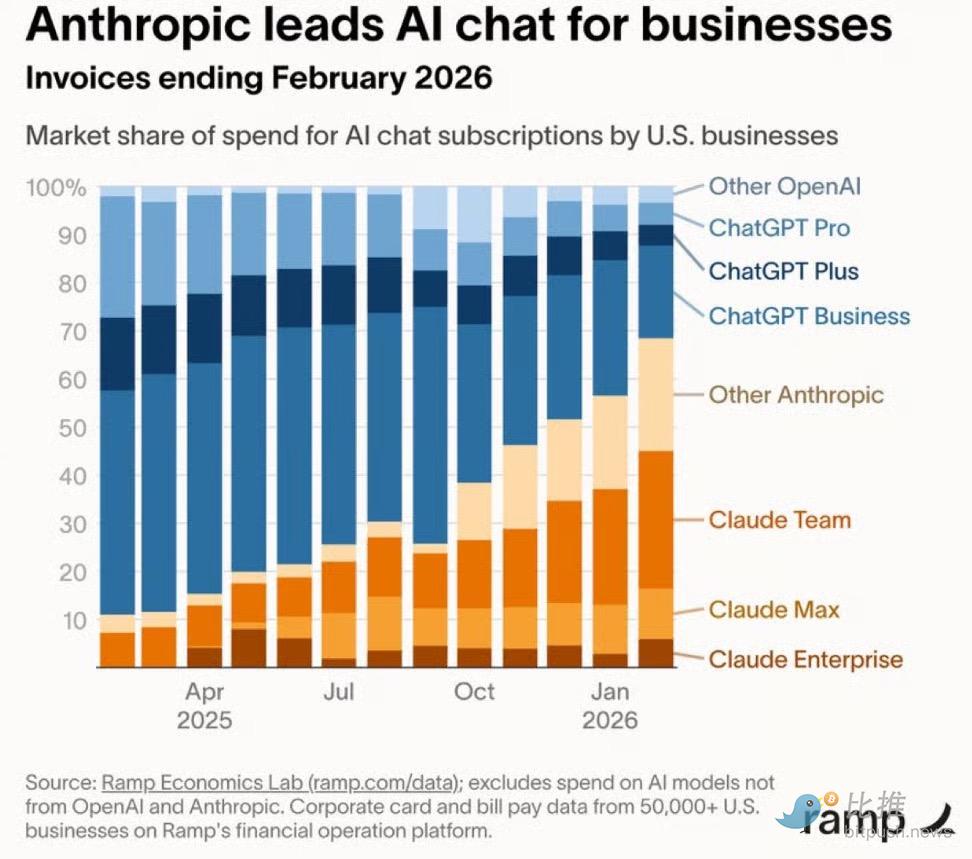

Claude is winning:

An incredible chart from Ramp shows OpenAI's shrinking share (blue) of the business market versus Claude's growing share (orange).

Misaligned timing:

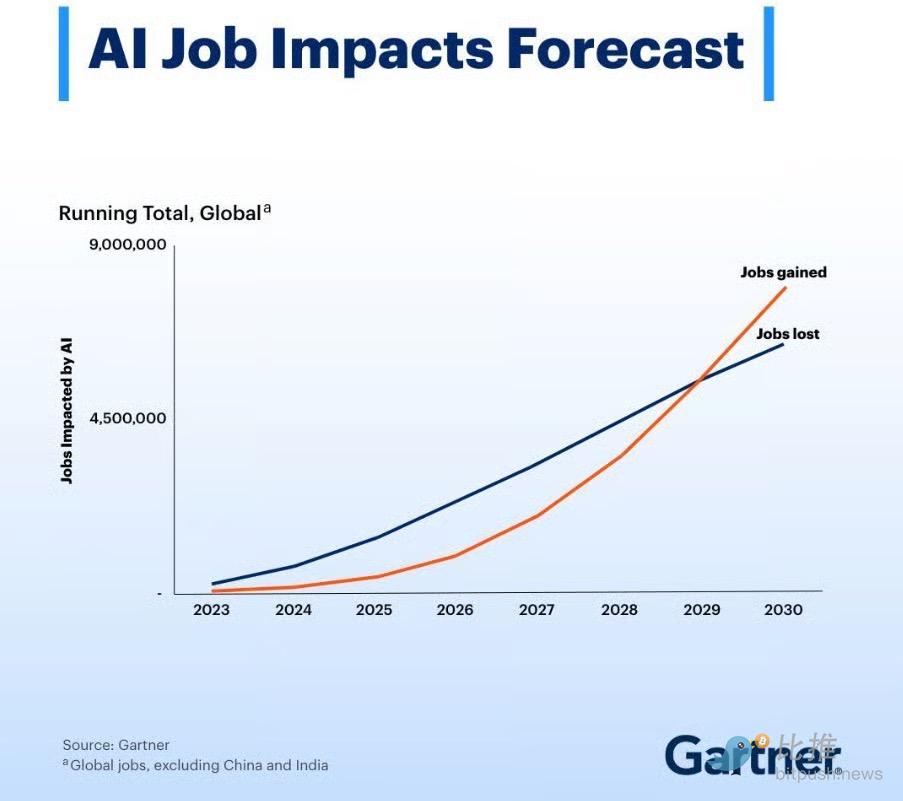

A Gartner study predicts that "AI will not bring a 'job apocalypse'—but it will bring job chaos." They expect AI to create more jobs than it eliminates starting in 2028.

Call me an "apocalyptic optimist," but I think this will all happen faster than expected.

Happy weekend to all you hard-working readers.
